
Multi-Agent Actor-Critic for Mixed Cooperative-Competitive Environments

Ryan Lowe∗ (McGill University, OpenAI)

Yi Wu∗ (UC Berkeley)

Aviv Tamar (UC Berkeley)

Jean Harb (McGill University, OpenAI)

Pieter Abbeel (UC Berkeley, OpenAI)

Igor Mordatch (OpenAI)

Abstract

We explore deep reinforcement learning methods for multi-agent domains. We begin by analyzing the difficulty of traditional algorithms in the multi-agent case: Q-learning is challenged by an inherent non-stationarity of the environment, while policy gradient suffers from a variance that increases as the number of agents grows. We then present an adaptation of actor-critic methods that considers action policies of other agents and is able to successfully learn policies that require complex multi-agent coordination. Additionally, we introduce a training regimen utilizing an ensemble of policies for each agent that leads to more robust multi-agent policies. We show the strength of our approach compared to existing methods in cooperative as well as competitive scenarios, where agent populations are able to discover various physical and informational coordination strategies.

1 Introduction

Reinforcement learning (RL) has recently been applied to solve challenging problems, from game playing [24, 29] to robotics [18]. In industrial applications, RL is emerging as a practical component in large scale systems such as data center cooling [1]. Most of the successes of RL have been in single agent domains, where modelling or predicting the behaviour of other actors in the environment is largely unnecessary.

However, there are a number of important applications that involve interaction between multiple agents, where emergent behavior and complexity arise from agents co-evolving together. For example, multi-robot control [21], the discovery of communication and language [31, 8, 25], multiplayer games [28], and the analysis of social dilemmas [17] all operate in a multi-agent domain. Related problems, such as variants of hierarchical reinforcement learning [6], can also be seen as a multi-agent system, with multiple levels of hierarchy being equivalent to multiple agents. Additionally, multi-agent self-play has recently been shown to be a useful training paradigm [29, 32]. Successfully scaling RL to environments with multiple agents is crucial to building artificially intelligent systems that can productively interact with humans and each other.

Unfortunately, traditional reinforcement learning approaches such as Q-Learning or policy gradient are poorly suited to multi-agent environments. One issue is that each agent’s policy is changing as training progresses, and the environment becomes non-stationary from the perspective of any individual agent (in a way that is not explainable by changes in the agent’s own policy). This presents learning stability challenges and prevents the straightforward use of past experience replay, which is crucial for stabilizing deep Q-learning. Policy gradient methods, on the other hand, usually exhibit very high variance when coordination of multiple agents is required. Alternatively, one can use model-based policy optimization which can learn optimal policies via back-propagation, but this requires a (differentiable) model of the world dynamics and assumptions about the interactions between agents. Applying these methods to competitive environments is also challenging from an optimization perspective, as evidenced by the notorious instability of adversarial training methods [11].

∗ Equal contribution.

arXiv:1706.02275v4 [cs.LG] 14 Mar 2020

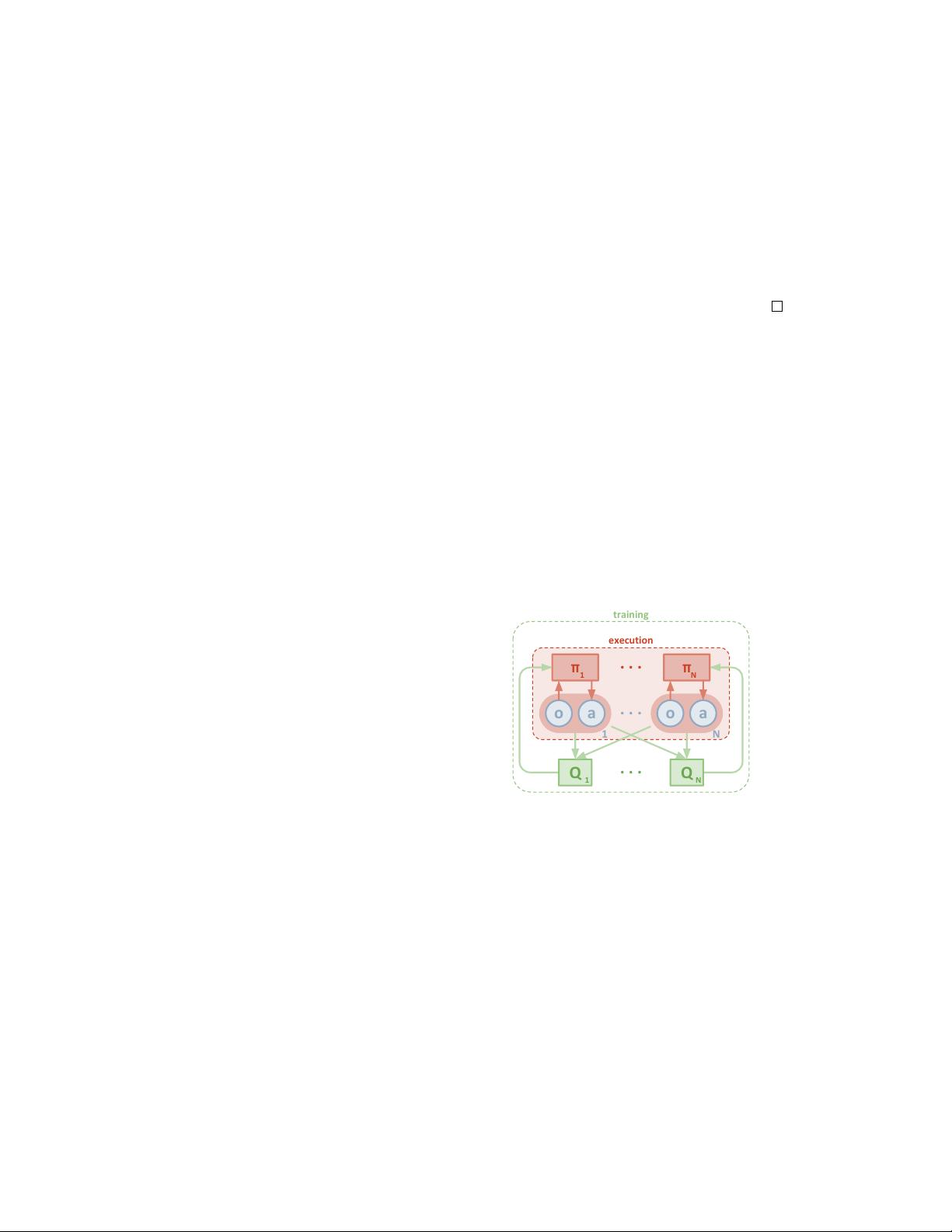

In this work, we propose a general-purpose multi-agent learning algorithm that: (1) leads to learned policies that only use local information (i.e. their own observations) at execution time, (2) does not assume a differentiable model of the environment dynamics or any particular structure on the communication method between agents, and (3) is applicable not only to cooperative interaction but to competitive or mixed interaction involving both physical and communicative behavior. The ability to act in mixed cooperative-competitive environments may be critical for intelligent agents; while competitive training provides a natural curriculum for learning [32], agents must also exhibit cooperative behavior (e.g. with humans) at execution time.

We adopt the framework of centralized training with decentralized execution, allowing the policies to use extra information to ease training, so long as this information is not used at test time. It is unnatural to do this with Q-learning without making additional assumptions about the structure of the environment, as the Q function generally cannot contain different information at training and test time. Thus, we propose a simple extension of actor-critic policy gradient methods where the critic is augmented with extra information about the policies of other agents, while the actor only has access to local information. After training is completed, only the local actors are used at execution phase, acting in a decentralized manner and equally applicable in cooperative and competitive settings.

Since the centralized critic function explicitly uses the decision-making policies of other agents, we additionally show that agents can learn approximate models of other agents online and effectively use them in their own policy learning procedure. We also introduce a method to improve the stability of multi-agent policies by training agents with an ensemble of policies, thus requiring robust interaction with a variety of collaborator and competitor policies. We empirically show the success of our approach compared to existing methods in cooperative as well as competitive scenarios, where agent populations are able to discover complex physical and communicative coordination strategies.

2 Related Work

The simplest approach to learning in multi-agent settings is to use independently learning agents. This was attempted with Q-learning in [36], but does not perform well in practice [23]. As we will show, independently-learning policy gradient methods also perform poorly. One issue is that each agent’s policy changes during training, resulting in a non-stationary environment and preventing the naïve application of experience replay. Previous work has attempted to address this by inputting other agents’ policy parameters to the Q function [37], explicitly adding the iteration index to the replay buffer, or using importance sampling [9]. Deep Q-learning approaches have previously been investigated in [35] to train competing Pong agents.

The nature of interaction between agents can be cooperative, competitive, or both, and many algorithms are designed only for a particular nature of interaction. Most studied are cooperative settings, with strategies such as optimistic and hysteretic Q function updates [15, 22, 26], which assume that the actions of other agents are made to improve collective reward. Another approach is to indirectly arrive at cooperation via sharing of policy parameters [12], but this requires homogeneous agent capabilities. These algorithms are generally not applicable in competitive or mixed settings. See [27, 4] for surveys of multi-agent learning approaches and applications.

Concurrently to our work, [7] proposed a similar idea of using policy gradient methods with a centralized critic, and tested their approach on a StarCraft micromanagement task. Their approach differs from ours in the following ways: (1) they learn a single centralized critic for all agents, whereas we learn a centralized critic for each agent, allowing for agents with differing reward functions including competitive scenarios, (2) we consider environments with explicit communication between agents, (3) they combine recurrent policies with feed-forward critics, whereas our experiments use feed-forward policies (although our methods are applicable to recurrent policies), (4) we learn continuous policies whereas they learn discrete policies.


Recent work has focused on learning grounded cooperative communication protocols between agents to solve various tasks [31, 8, 25]. However, these methods are usually only applicable when the communication between agents is carried out over a dedicated, differentiable communication channel.

Our method requires explicitly modeling the decision-making process of other agents. The importance of such modeling has been recognized by both reinforcement learning [3, 5] and cognitive science communities [10]. [13] stressed the importance of being robust to the decision making process of other agents, as do others by building Bayesian models of decision making. We incorporate such robustness considerations by requiring that agents interact successfully with an ensemble of any possible policies of other agents, improving training stability and robustness of agents after training.

3 Background

Markov Games. In this work, we consider a multi-agent extension of Markov decision processes (MDPs) called partially observable Markov games [20]. A Markov game for $N$ agents is defined by a set of states $\mathcal{S}$ describing the possible configurations of all agents, a set of actions $\mathcal{A}_1, ..., \mathcal{A}_N$ and a set of observations $\mathcal{O}_1, ..., \mathcal{O}_N$ for each agent. To choose actions, each agent $i$ uses a stochastic policy $\boldsymbol{\pi}_{\theta_i} : \mathcal{O}_i \times \mathcal{A}_i \mapsto [0, 1]$, which produces the next state according to the state transition function $\mathcal{T} : \mathcal{S} \times \mathcal{A}_1 \times ... \times \mathcal{A}_N \mapsto \mathcal{S}$.² Each agent $i$ obtains rewards as a function of the state and agent’s action $r_i : \mathcal{S} \times \mathcal{A}_i \mapsto \mathbb{R}$, and receives a private observation correlated with the state $\mathbf{o}_i : \mathcal{S} \mapsto \mathcal{O}_i$. The initial states are determined by a distribution $\rho : \mathcal{S} \mapsto [0, 1]$. Each agent $i$ aims to maximize its own total expected return $R_i = \sum_{t=0}^{T} \gamma^t r_i^t$ where $\gamma$ is a discount factor and $T$ is the time horizon.
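As a small illustration of the return $R_i = \sum_{t=0}^{T} \gamma^t r_i^t$, the following sketch computes the discounted return for one agent from a list of its per-step rewards. The function name and the toy reward sequence are hypothetical and chosen only for this example.

```python
def discounted_return(rewards, gamma):
    """Return sum_{t=0}^{T} gamma**t * rewards[t] for one agent's reward sequence."""
    total = 0.0
    # Accumulate backwards so each step is a single multiply-add.
    for r in reversed(rewards):
        total = r + gamma * total
    return total


# Hypothetical rewards r_i^0, r_i^1, r_i^2 for agent i.
rewards_agent_i = [1.0, 0.0, 2.0]
R_i = discounted_return(rewards_agent_i, gamma=0.5)  # 1 + 0.5*0 + 0.25*2
```

In a Markov game each agent would evaluate this quantity on its own reward sequence, so the returns of different agents generally differ.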

Q-Learning and Deep Q-Networks (DQN). Q-Learning and DQN [24] are popular methods in reinforcement learning and have been previously applied to multi-agent settings [8, 37]. Q-Learning makes use of an action-value function for policy $\boldsymbol{\pi}$ as $Q^{\boldsymbol{\pi}}(s, a) = \mathbb{E}[R \mid s^t = s, a^t = a]$. This Q function can be recursively rewritten as $Q^{\boldsymbol{\pi}}(s, a) = \mathbb{E}_{s'}[r(s, a) + \gamma \mathbb{E}_{a' \sim \boldsymbol{\pi}}[Q^{\boldsymbol{\pi}}(s', a')]]$. DQN learns the action-value function $Q^*$ corresponding to the optimal policy by minimizing the loss:

$$\mathcal{L}(\theta) = \mathbb{E}_{s,a,r,s'}[(Q^*(s, a \mid \theta) - y)^2], \quad \text{where } y = r + \gamma \max_{a'} \bar{Q}^*(s', a'), \tag{1}$$

where $\bar{Q}$ is a target Q function whose parameters are periodically updated with the most recent $\theta$, which helps stabilize learning. Another crucial component of stabilizing DQN is the use of an experience replay buffer $\mathcal{D}$ containing tuples $(s, a, r, s')$.
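To make the loss in Eq. (1) concrete, here is a minimal sketch that evaluates the targets $y$ and the mean squared error on a small batch. It assumes tabular Q values stored in nested lists in place of a neural network and ignores terminal states; the tables, the batch and all function names are made up for illustration.

```python
def dqn_targets(batch, target_q, gamma):
    """y = r + gamma * max_a' Qbar(s', a') for each (s, a, r, s') tuple."""
    return [r + gamma * max(target_q[s_next]) for (_, _, r, s_next) in batch]


def dqn_loss(batch, q, target_q, gamma):
    """Mean squared TD error: a sample estimate of the loss in Eq. (1)."""
    ys = dqn_targets(batch, target_q, gamma)
    errors = [(q[s][a] - y) ** 2 for (s, a, _, _), y in zip(batch, ys)]
    return sum(errors) / len(errors)


# Toy tables q[s][a] for 2 states and 2 actions (values are made up).
q = [[0.0, 1.0], [2.0, 0.5]]
target_q = [[0.0, 1.0], [2.0, 0.5]]  # lagged copy of q, refreshed periodically
replay_batch = [(0, 1, 1.0, 1), (1, 0, 0.0, 0)]  # tuples (s, a, r, s')

loss = dqn_loss(replay_batch, q, target_q, gamma=0.9)
```

Keeping `target_q` fixed between periodic refreshes means the targets `y` do not move with every update of `q`, which is the stabilizing role described above.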

Q-Learning can be directly applied to multi-agent settings by having each agent $i$ learn an independently optimal function $Q_i$ [36]. However, because agents are independently updating their policies as learning progresses, the environment appears non-stationary from the view of any one agent, violating Markov assumptions required for convergence of Q-learning. Another difficulty observed in [9] is that the experience replay buffer cannot be used in such a setting since in general, $P(s' \mid s, a, \boldsymbol{\pi}_1, ..., \boldsymbol{\pi}_N) \neq P(s' \mid s, a, \boldsymbol{\pi}'_1, ..., \boldsymbol{\pi}'_N)$ when any $\boldsymbol{\pi}_i \neq \boldsymbol{\pi}'_i$.
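The non-stationarity can be seen in a two-agent toy example. The sketch below computes the next-state probability that agent 1 perceives for a fixed own action, marginalising over the other agent's action; the dynamics, the two policies and the function name are hypothetical and exist only for this illustration.

```python
def perceived_next_state_prob(a_own, pi_other, transition):
    """P(s' = 1 | s, a_own), marginalising over the other agent's action."""
    return sum(
        pi_other[a_other] * transition[(a_own, a_other)]
        for a_other in (0, 1)
    )


# Hypothetical dynamics in one fixed state s: s' = 1 iff both agents pick the same action.
transition = {(0, 0): 1.0, (0, 1): 0.0, (1, 0): 0.0, (1, 1): 1.0}

pi_other_early = {0: 0.5, 1: 0.5}  # other agent's policy early in training
pi_other_late = {0: 0.1, 1: 0.9}   # the same agent after some policy updates

p_early = perceived_next_state_prob(1, pi_other_early, transition)
p_late = perceived_next_state_prob(1, pi_other_late, transition)
```

For the same state and own action, the perceived transition probability moves from 0.5 to 0.9 once the other agent's policy changes, so tuples stored in a replay buffer under the earlier policy no longer describe the dynamics the agent currently faces.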

Policy Gradient (PG) Algorithms. Policy gradient methods are another popular choice for a variety of RL tasks. The main idea is to directly adjust the parameters $\theta$ of the policy in order to maximize the objective $J(\theta) = \mathbb{E}_{s \sim p^{\boldsymbol{\pi}}, a \sim \boldsymbol{\pi}_\theta}[R]$ by taking steps in the direction of $\nabla_\theta J(\theta)$. Using the Q function defined previously, the gradient of the policy can be written as [34]:

$$\nabla_\theta J(\theta) = \mathbb{E}_{s \sim p^{\boldsymbol{\pi}}, a \sim \boldsymbol{\pi}_\theta}[\nabla_\theta \log \boldsymbol{\pi}_\theta(a \mid s) Q^{\boldsymbol{\pi}}(s, a)], \tag{2}$$

where $p^{\boldsymbol{\pi}}$ is the state distribution. The policy gradient theorem has given rise to several practical algorithms, which often differ in how they estimate $Q^{\boldsymbol{\pi}}$. For example, one can simply use a sample return $R^t = \sum_{i=t}^{T} \gamma^{i-t} r^i$, which leads to the REINFORCE algorithm [39]. Alternatively, one could learn an approximation of the true action-value function $Q^{\boldsymbol{\pi}}(s, a)$ by e.g. temporal-difference learning [33]; this $Q^{\boldsymbol{\pi}}(s, a)$ is called the critic and leads to a variety of actor-critic algorithms [33].
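Eq. (2) can be checked by hand in a one-step setting. The sketch below assumes a single state and a Bernoulli policy with $P(a = 1) = \theta$, so that $Q^{\boldsymbol{\pi}}(s, a)$ reduces to the immediate reward; the reward values and function names are hypothetical. It evaluates the expectation exactly by enumerating both actions rather than sampling.

```python
def score(a, theta):
    """d/dtheta log pi_theta(a) for a Bernoulli policy with P(a = 1) = theta."""
    return 1.0 / theta if a == 1 else -1.0 / (1.0 - theta)


def policy_gradient(theta, reward):
    """Exact E_a[ score(a) * R(a) ], enumerating both actions."""
    probs = {1: theta, 0: 1.0 - theta}
    return sum(p * score(a, theta) * reward(a) for a, p in probs.items())


def reward(a):
    # Made-up one-step reward: action 1 pays 3, action 0 pays 1.
    return 3.0 if a == 1 else 1.0


# J(theta) = 3*theta + (1 - theta) = 1 + 2*theta, so dJ/dtheta = 2 for any theta.
grad = policy_gradient(0.3, reward)
```

A REINFORCE-style estimator would replace the exact sum with `score(a, theta) * reward(a)` for sampled actions `a`, which has the same mean but nonzero variance.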

Policy gradient methods are known to exhibit high variance gradient estimates. This is exacerbated in multi-agent settings; since an agent’s reward usually depends on the actions of many agents, the reward conditioned only on the agent’s own actions (when the actions of other agents are not considered in the agent’s optimization process) exhibits much more variability, thereby increasing the variance of its gradients. Below, we show a simple setting where the probability of taking a gradient step in the correct direction decreases exponentially with the number of agents.

² To minimize notation we will often omit $\theta$ from the subscript of $\boldsymbol{\pi}$.

Proposition 1. Consider $N$ agents with binary actions: $P(a_i = 1) = \theta_i$, where $R(a_1, \ldots, a_N) = \mathbb{1}_{a_1 = \cdots = a_N}$. We assume an uninformed scenario, in which agents are initialized to $\theta_i = 0.5 \ \forall i$. Then, if we are estimating the gradient of the cost $J$ with policy gradient, we have:

$$P(\langle \hat{\nabla} J, \nabla J \rangle > 0) \propto (0.5)^N$$

where $\hat{\nabla} J$ is the policy gradient estimator from a single sample, and $\nabla J$ is the true gradient.

Proof. See Appendix.
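The scaling in Proposition 1 can be reproduced numerically under one extra assumption. The sketch below assumes the reward is 1 only when every agent picks action 1; this is one reading of the indicator and is not stated in the text above, chosen because the true gradient is then nonzero at $\theta_i = 0.5$. It enumerates all joint actions and sums the probability of those for which the single-sample estimator has a positive inner product with the true gradient. The function name is hypothetical.

```python
from itertools import product


def prob_correct_direction(n_agents, theta=0.5):
    """P(<grad_hat J, grad J> > 0) when every agent uses P(a_i = 1) = theta."""
    # True gradient of J = prod_i theta_i with respect to each theta_i.
    true_grad = [theta ** (n_agents - 1)] * n_agents
    prob = 0.0
    for actions in product((0, 1), repeat=n_agents):
        # Assumed reward: 1 only if all agents choose action 1.
        reward = 1.0 if all(a == 1 for a in actions) else 0.0
        # Single-sample estimator: R * d/dtheta_i log P(a_i).
        estimate = [
            reward * (1.0 / theta if a == 1 else -1.0 / (1.0 - theta))
            for a in actions
        ]
        inner = sum(e * g for e, g in zip(estimate, true_grad))
        if inner > 0:
            ones = sum(actions)
            prob += theta ** ones * (1.0 - theta) ** (n_agents - ones)
    return prob


probabilities = {n: prob_correct_direction(n) for n in range(1, 7)}
```

Under this assumption only the all-ones joint action produces a nonzero estimate, so the computed probability equals $(0.5)^N$ and halves with every added agent.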

The use of baselines, such as value function baselines typically used to ameliorate high variance, is problematic in multi-agent settings due to the non-stationarity issues mentioned previously.

Deterministic Policy Gradient (DPG) Algorithms. It is also possible to extend the policy gradient framework to deterministic policies $\boldsymbol{\mu}_\theta : \mathcal{S} \mapsto \mathcal{A}$ [30]. In particular, under certain conditions we can write the gradient of the objective $J(\theta) = \mathbb{E}_{s \sim p^{\boldsymbol{\mu}}}[R(s, a)]$ as:

$$\nabla_\theta J(\theta) = \mathbb{E}_{s \sim \mathcal{D}}[\nabla_\theta \boldsymbol{\mu}_\theta(a \mid s) \nabla_a Q^{\boldsymbol{\mu}}(s, a)|_{a = \boldsymbol{\mu}_\theta(s)}] \tag{3}$$

Since this theorem relies on $\nabla_a Q^{\boldsymbol{\mu}}(s, a)$, it requires that the action space $\mathcal{A}$ (and thus the policy $\boldsymbol{\mu}$) be continuous.
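The chain rule in Eq. (3) can be illustrated with scalar quantities. The sketch below assumes a linear policy $\mu_\theta(s) = \theta s$ and a known critic $Q(s, a) = -(a - 2s)^2$ whose maximizer is $a = 2s$; both are hypothetical choices made so that the derivatives can be written by hand, and in practice the critic would be learned.

```python
def mu(theta, s):
    """Deterministic linear policy a = theta * s (assumed for illustration)."""
    return theta * s


def dmu_dtheta(theta, s):
    """Derivative of the policy output with respect to theta."""
    return s


def dq_da(s, a):
    """Derivative of the assumed critic Q(s, a) = -(a - 2s)^2 with respect to a."""
    return -2.0 * (a - 2.0 * s)


def dpg_gradient(theta, states):
    """Sample mean of dmu/dtheta * dQ/da evaluated at a = mu_theta(s)."""
    grads = [dmu_dtheta(theta, s) * dq_da(s, mu(theta, s)) for s in states]
    return sum(grads) / len(grads)


states = [-1.0, 0.5, 2.0]  # states drawn from a made-up buffer D
theta = 0.0
for _ in range(200):
    theta += 0.1 * dpg_gradient(theta, states)  # gradient ascent on J
```

Because the critic is maximized at $a = 2s$, gradient ascent drives `theta` toward 2. The update never samples an action, which is why it needs $Q$ to be differentiable in a continuous action.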

Deep deterministic policy gradient (DDPG) [19] is a variant of DPG where the policy $\boldsymbol{\mu}$ and critic $Q^{\boldsymbol{\mu}}$ are approximated with deep neural networks. DDPG is an off-policy algorithm, and samples trajectories from a replay buffer of experiences that are stored throughout training. DDPG also makes use of a target network, as in DQN [24].
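A replay buffer of the kind described here can be sketched in a few lines. This is a minimal illustration with a fixed capacity and uniform sampling, both of which are assumptions rather than details given in the text; the class and method names are hypothetical.

```python
import random
from collections import deque


class ReplayBuffer:
    """Fixed-capacity store of (s, a, r, s') tuples with uniform sampling."""

    def __init__(self, capacity):
        # A deque with maxlen drops the oldest tuple once capacity is reached.
        self.storage = deque(maxlen=capacity)

    def add(self, s, a, r, s_next):
        self.storage.append((s, a, r, s_next))

    def sample(self, batch_size, rng=random):
        # Uniform sampling without replacement from the stored experience.
        return rng.sample(list(self.storage), batch_size)

    def __len__(self):
        return len(self.storage)


buffer = ReplayBuffer(capacity=3)
for t in range(5):
    buffer.add(t, 0, 0.0, t + 1)  # made-up transitions s=t -> s'=t+1

batch = buffer.sample(2, rng=random.Random(0))
```

Sampling from stored experience rather than from the current trajectory is what makes the algorithm off-policy: the tuples in a batch may have been generated by older versions of the policy.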

4 Methods

4.1 Multi-Agent Actor Critic

Figure 1: Overview of our multi-agent decentralized actor, centralized critic approach.

We have argued in the previous section that naïve policy gradient methods perform poorly in simple multi-agent settings, and this is supported in our experiments in Section 5. Our goal in this section is to derive an algorithm that works well in such settings. However, we would like to operate under the following constraints: (1) the learned policies can only use local information (i.e. their own observations) at execution time, (2) we do not assume a differentiable model of the environment dynamics, unlike in [25], and (3) we do not assume any particular structure on the communication method between agents (that is, we don’t assume a differentiable communication channel). Fulfilling the above desiderata would provide a general-purpose multi-agent learning algorithm that could be applied not just to cooperative games with explicit communication channels, but competitive games and games involving only physical interactions between agents.

Similarly to [8], we accomplish our goal by adopting the framework of centralized training with decentralized execution. Thus, we allow the policies to use extra information to ease training, so long as this information is not used at test time. It is unnatural to do this with Q-learning, as the Q function generally cannot contain different information at training and test time. Thus, we propose a simple extension of actor-critic policy gradient methods where the critic is augmented with extra information about the policies of other agents.

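More concretely, consider a game with $N$ agents with policies parameterized by $\boldsymbol{\theta} = \{\theta_1, ..., \theta_N\}$, and let $\boldsymbol{\pi} = \{\boldsymbol{\pi}_1, ..., \boldsymbol{\pi}_N\}$ be the set of all agent policies. Then we can write the gradient of the expected return for agent $i$, $J(\theta_i) = \mathbb{E}[R_i]$ as:

$$\nabla_{\theta_i} J(\theta_i) = \mathbb{E}_{s \sim p^{\boldsymbol{\mu}}, a_i \sim \boldsymbol{\pi}_i}[\nabla_{\theta_i} \log \boldsymbol{\pi}_i(a_i \mid o_i) Q_i^{\boldsymbol{\pi}}(\mathbf{x}, a_1, ..., a_N)]. \tag{4}$$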
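The structure of Eq. (4) can be sketched in a stateless toy game: each agent's score function depends only on its own action, while the critic $Q_i$ receives the actions of all agents. The sketch below assumes Bernoulli policies, omits the observation $o_i$ and the state information $\mathbf{x}$, and uses a hand-written critic table instead of a learned one; all names and values are hypothetical.

```python
from itertools import product


def score(a, theta):
    """d/dtheta log pi(a) for a Bernoulli policy with P(a = 1) = theta."""
    return 1.0 / theta if a == 1 else -1.0 / (1.0 - theta)


def centralized_pg(i, thetas, q_i):
    """Exact E_{a ~ pi}[ d/dtheta_i log pi_i(a_i) * Q_i(a_1, ..., a_N) ]."""
    grad = 0.0
    for joint in product((0, 1), repeat=len(thetas)):
        # Probability of the joint action under independent policies.
        prob = 1.0
        for a, th in zip(joint, thetas):
            prob *= th if a == 1 else 1.0 - th
        # Local score for agent i, centralized critic over all actions.
        grad += prob * score(joint[i], thetas[i]) * q_i(joint)
    return grad


def q_coordination(joint):
    """Assumed critic: value 1 when all agents pick the same action, else 0."""
    return 1.0 if len(set(joint)) == 1 else 0.0


thetas = [0.5, 0.8]
grad_agent_0 = centralized_pg(0, thetas, q_coordination)
grad_agent_1 = centralized_pg(1, thetas, q_coordination)
```

Here agent 0 receives a gradient of 0.6 toward action 1 because agent 1 mostly plays action 1. The critic can attribute value to the joint action only because it takes the other agent's action as input.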
