threads demultiplex events from a larger number of handles.

Conversely, a client application may have a large number of

threads that are communicating with the same server. In this

case, however, allocating a connection-per-thread may con-

sume excessive operating system resources. Thus, it may be

necessary to multiplex events generated by many client threads

onto a smaller number of connections, e.g., by maintaining

a single connection from a client process to each server pro-

cess [11] with which it communicates.

!

For example, one possible OLTP server concurrency

model could allocate a separate thread for each client connec-

tion. However, this thread-per-connection concurrency model

may not handle hundreds or thousands of simultaneous con-

nections scalably. Therefore, our OLTP servers employ a de-

multiplexing model that uses a thread pool to align the number

of server threads to the available processing resources, such

as the number of CPUs, rather than to the number of active

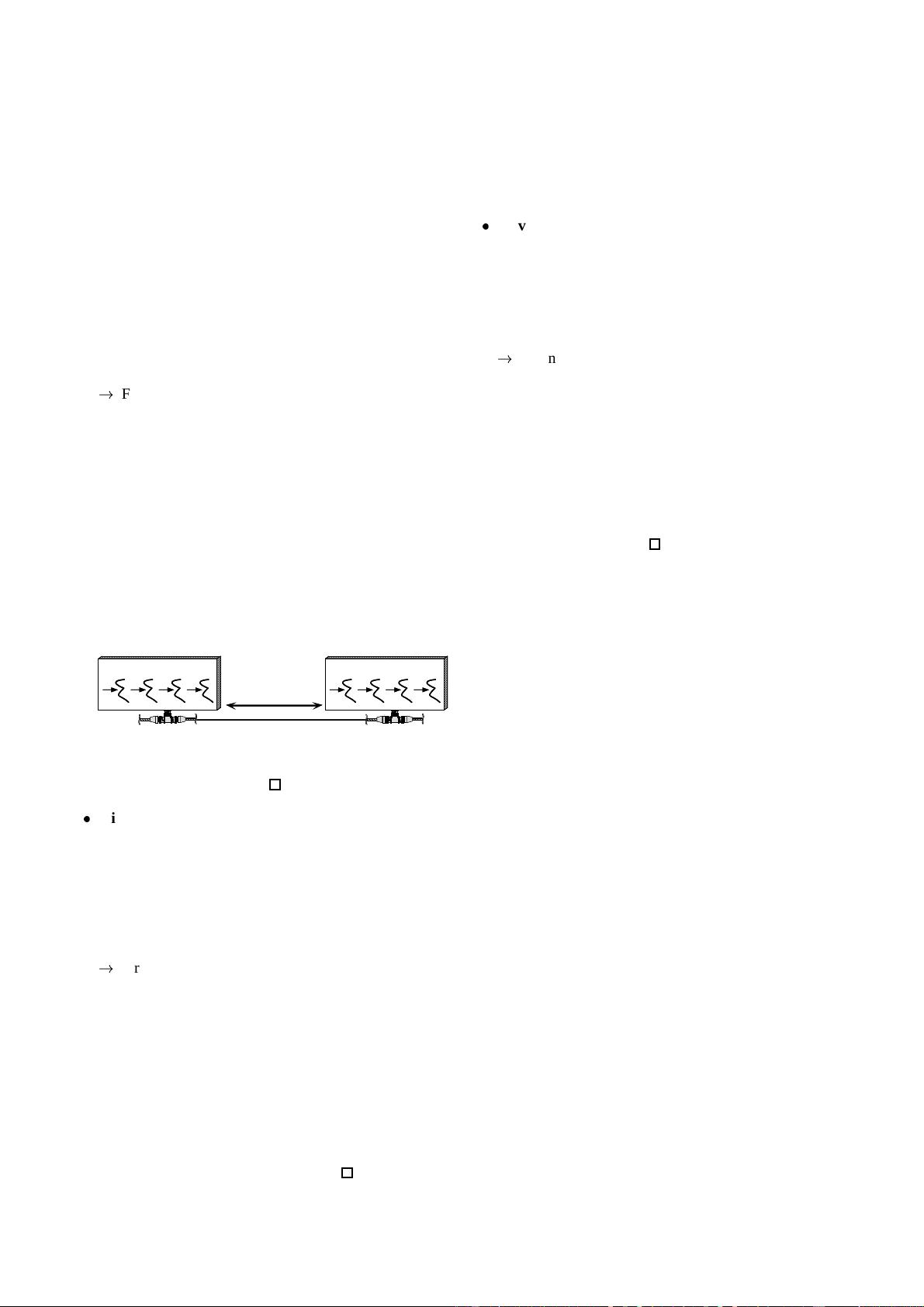

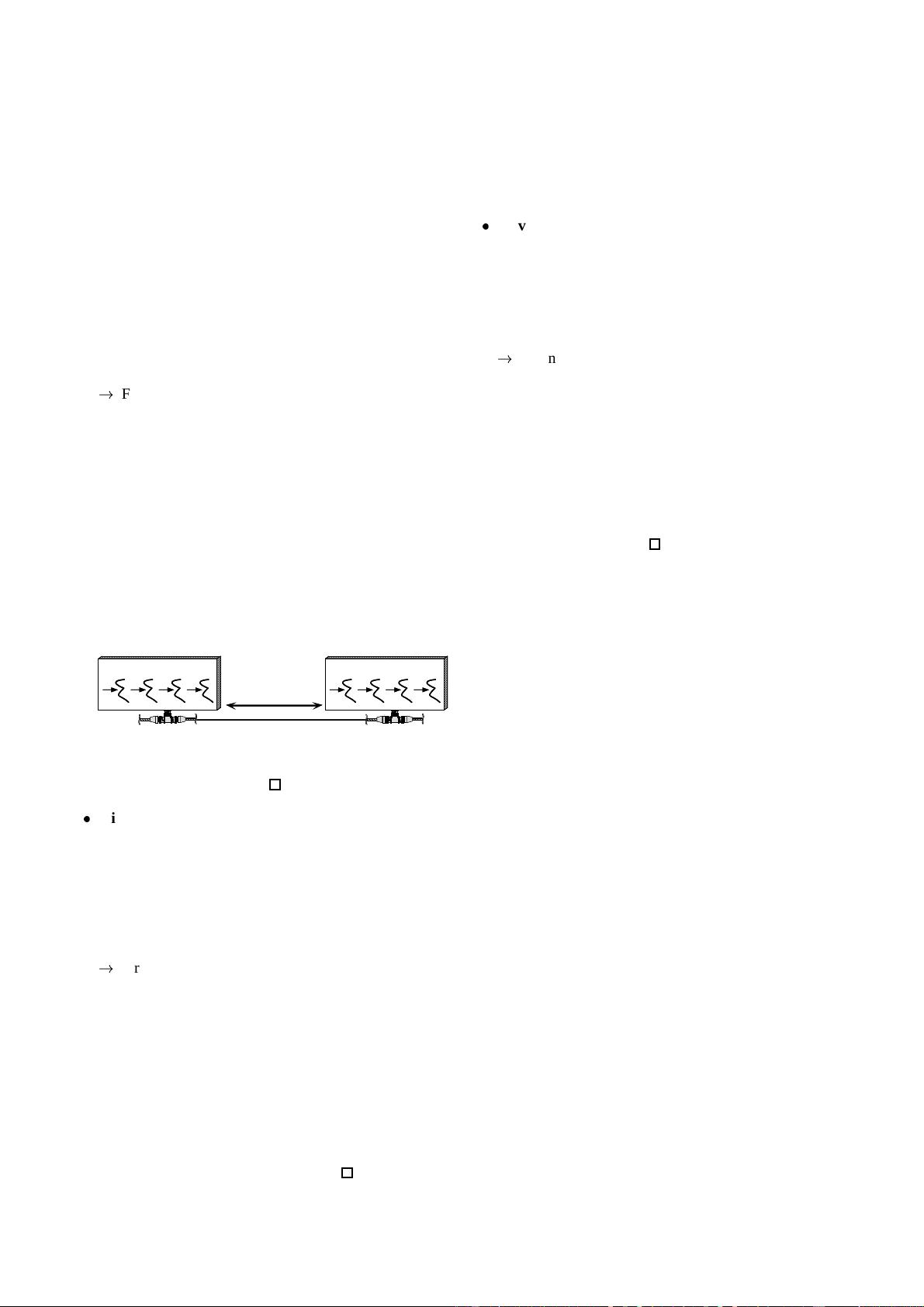

connections. Likewise, to conserve system resources, multiple

threads in each of our front-end communication servers send

requests to the same back-end server over a single multiplexed

connection, as shown in the following figure. Thus, when a

ONE TCP

CONNECTION

WORKER THREADS

FRONT-END

COMMUNICATION

SERVER

WORKER THREADS

BACK-END

DATABASE

SERVER

front-end server receives a result from a back-end server, it

must demultiplex the result to the corresponding thread that is

blocked waiting to process it.

Minimize concurrency-related overhead: To maximize

performance, key sources of concurrency-related overhead,

such as context switching, synchronization, and cache co-

herency management, must be minimized. In particular,

a concurrency model that requires memory to be allocated

dynamically for each request and passed between multiple

threads will incur significant overhead on conventional multi-

processor operating systems [12].

!

For instance, our example OLTP servers employ a thread

pool concurrencymodel based on the “half-sync/half-reactive”

variant of the Half-Sync/Half-Async pattern [2]. This model

uses a message queue to decouple the network I/O thread,

which receives client request events, from the pool of worker

threads, which process these events and return responses to

clients. Unfortunately, this design requires memory to be allo-

cated dynamically, from either the heap or a global pool, in the

network I/O thread so that incoming event requests can be in-

serted into the message queue. In addition, it requires numer-

ous synchronizations and context switches to insert/remove

the request into/from the message queue.

Prevent race conditions: Multiple threads that demulti-

plex events on a set of I/O handles must coordinate to prevent

race conditions. Race conditions can occur if multiple threads

try to access or modify certain types of I/O handles simultane-

ously. This problem often can be prevented by protecting the

handles with a synchronizer, such as a mutex, semaphore, or

condition variable.

!

For instance, a pool of threads cannot use select [3]

to demultiplex a set of socket handles because the operat-

ing system will erroneously notify more than one thread call-

ing select when I/O events are pending on the same sub-

set of handles [3]. Thus, the thread pool would need to re-

synchronize to avoid having multiple threads read from the

same handle. Moreover, for bytestream-oriented protocols,

such as TCP, having multiple threads invoking read on the

same socket handle will corrupt or lose data. Likewise, mul-

tiple simultaneous writes to a socket handle can “scramble”

the data in the bytestream.

5 Solution

Allow one thread at a time – the leader – to wait for an event

to occur on a set of I/O handles. Meanwhile, other threads

– the followers – can queue up waiting their turn to become

the leader. After the current leader thread demultiplexes an

event from the I/O handle set, it promotes a follower thread

to become the new leader and then dispatches the event to a

designated event handler, which processes the event. At this

point, the former leader and the new leader thread can execute

concurrently.

In detail: multiple former leader threads can process events

concurrently while the current leader thread waits on the han-

dle set. After its event processing completes, an idle follower

thread waits its turn to become the leader. If requests arrive

faster than the available threads can service them, the underly-

ing I/O system can queue events internally until a leader thread

becomes available. The leader thread may need to handoff an

event to a follower thread if the leader does not have the neces-

sary context to process the event. This scenario is particularly

relevant in high-volume, multi-tier distributed systems, where

results often arrive in a different order than requests were ini-

tiated. For example, if threads use the Thread-Specific Stor-

age pattern [22] to reduce lock contention, the thread that pro-

cesses a result must be the same one that invoked the request.

6 Structure

The participants in the Leader/Followers pattern include the

following:

3