tion operations on bound threads require OS kernel intervention. Bound threads are most useful when an application is designed to take advantage of parallelism available on the hardware platform. Since each bound thread requires allocation of kernel resources, it may be inefficient to allocate a large number of bound threads.

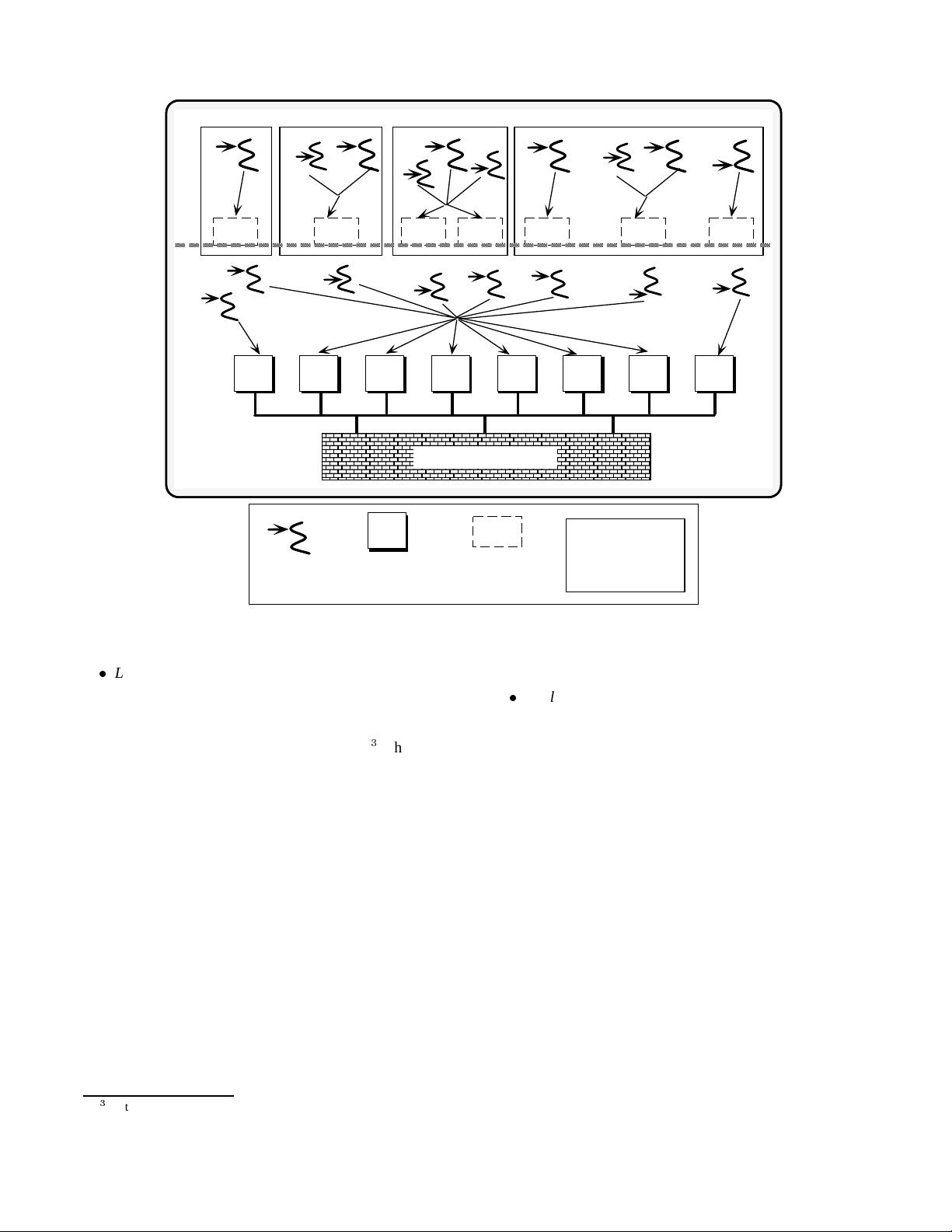

2. Unbound threads – which are multiplexed in an n-to-m manner atop one or more LWPs and kernel threads by a thread run-time library. This user-level library implements a non-preemptive, cooperative multi-tasking concurrency model. It schedules, dispatches, and suspends unbound threads, while minimizing kernel involvement. Compared with using application threads bound to LWPs, unbound application threads require less overhead to spawn, context switch, and synchronize.

Depending upon the number of kernel threads that an application and/or library associates with a process, one or more unbound threads may execute on multiple PEs in parallel. Since each unbound thread does not allocate kernel resources, it is possible to allocate a very large number of unbound threads without significantly degrading performance.
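The distinction between bound and unbound threads can be sketched with the POSIX contention-scope attribute, which is used here as a portable stand-in for the Solaris mechanism: system scope roughly corresponds to a bound thread and process scope to an unbound thread. The helper names `spawn_with_scope`, `bump`, and `run_scope_demo` are hypothetical and appear only for illustration. Platforms that implement only 1-to-1 threading may reject the process-scope request, so this sketch ignores that failure and falls back to the default scope.

```cpp
#include <pthread.h>
#include <cassert>

// Hypothetical helper (not from the paper): start `fn` on a new thread,
// requesting system scope ("bound") or process scope ("unbound").
// Returns 0 on success, otherwise the error code from the pthread call.
int spawn_with_scope (bool bound, void *(*fn) (void *), void *arg,
                      pthread_t *tid)
{
  assert (tid != nullptr);

  pthread_attr_t attr;
  int rc = pthread_attr_init (&attr);
  if (rc != 0)
    return rc;

  int scope = bound ? PTHREAD_SCOPE_SYSTEM : PTHREAD_SCOPE_PROCESS;
  // Some platforms support only one scope and return an error (such as
  // ENOTSUP) for the other. The attribute then keeps its default scope.
  (void) pthread_attr_setscope (&attr, scope);

  rc = pthread_create (tid, &attr, fn, arg);
  pthread_attr_destroy (&attr);
  return rc;
}

// Trivial thread entry point used for illustration.
void *bump (void *arg)
{
  ++*static_cast<int *> (arg);
  return arg;
}

// Runs one thread of each kind, one after the other, and returns the
// final counter value (or -1 if a thread could not be created).
int run_scope_demo ()
{
  int count = 0;
  const bool kinds[] = { true, false };
  for (bool bound : kinds)
    {
      pthread_t tid;
      if (spawn_with_scope (bound, bump, &count, &tid) != 0)
        return -1;
      pthread_join (tid, nullptr); // Join before starting the next thread.
    }
  return count;
}
```

Since the two threads run strictly one after the other, the counter needs no lock in this sketch. Threads that run concurrently would have to serialize access to it.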

3.4 Challenges of Concurrent Programming

On a multi-processor, more than one LWP may run in parallel on separate PEs. On a uni-processor, only one LWP will be active at any point in time. Regardless of the hardware platform, programmers must ensure that access to shared resources (such as files, database records, network devices, terminals, or shared memory) is serialized to prevent race conditions. A race condition occurs when the order of execution of two or more concurrent LWPs leads to unpredictable and erroneous results (such as a database record being left in an inconsistent state). Race conditions may be eliminated by using the Solaris 2.x synchronization mechanisms described in Section 3.5. These mechanisms serialize access to critical sections of code that access shared resources.
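As a minimal sketch of serializing a critical section, the following fragment protects a shared record with a lock so that its two fields are always updated together. It assumes standard C++ `std::mutex` and `std::thread` as portable stand-ins for the Solaris primitives. The `Record` type and the `update_record` and `run_updaters` functions are hypothetical names introduced only for this illustration.

```cpp
#include <cassert>
#include <mutex>
#include <thread>
#include <vector>

// Hypothetical shared record. The invariant is that both fields match.
struct Record
{
  long debits = 0;
  long credits = 0;
};

Record record;          // Shared resource.
std::mutex record_lock; // Serializes access to `record`.

// Critical section: both fields are updated while the lock is held, so
// no other thread can observe or interleave with a partial update.
void update_record (int iterations)
{
  for (int i = 0; i < iterations; ++i)
    {
      std::lock_guard<std::mutex> guard (record_lock);
      ++record.debits;
      ++record.credits;
    }
}

// Runs `n_threads` concurrent updaters and returns the final record.
Record run_updaters (int n_threads, int iterations)
{
  assert (n_threads > 0 && iterations >= 0);
  record = Record ();

  std::vector<std::thread> threads;
  for (int i = 0; i < n_threads; ++i)
    threads.emplace_back (update_record, iterations);
  for (std::thread &t : threads)
    t.join ();

  return record;
}
```

Without the `lock_guard`, the increments from different threads could interleave, and the final totals would depend on the order of execution.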

In addition to the challenges of concurrency control, the following limitations arise when using multi-threading (rather than multi-processing or single-threaded, reactive event loops) to implement concurrent applications:

Robustness – Executing all tasks via threads within a single process address space may reduce application robustness. This problem occurs since separate threads within the same process address space are not protected from one another. In order to reduce context switching and synchronization overhead, threads receive little or no protection from the hardware memory management unit (MMU).⁴ Since threads are not protected, one faulty service in a process may corrupt global data shared by services running on other threads in the process. This, in turn, may produce incorrect results, crash an entire process, cause a network server to hang indefinitely, etc. A related problem is that certain UNIX system calls invoked in one thread may have undesirable side-effects on an entire process. For example, the exit system call has the side-effect of destroying all the threads within a process (thr_exit should be used to terminate only the current thread).

⁴ An MMU protects separate process address spaces from accidental or malicious corruption by other active processes in the system.

Access Privileges – Another limitation with multi-threading is that all threads within a process share the same userid and access privileges to files and other protected resources. Therefore, to prevent accidental or intentional access to unauthorized resources, network services that base their security mechanisms on process ownership (such as the Internet ftp and telnet services) are typically implemented in separate processes.

Performance – A common misconception is that multi-threading an application will automatically improve performance. In many circumstances, however, multi-threading does not improve performance. For example, compute-bound applications on a uni-processor [19] will not benefit from multi-threading since computation will not overlap communication. In addition, fine-grained locking causes high levels of synchronization overhead [23, 24]. This prevents applications from fully exploiting the benefits of parallel processing.

There are some circumstances where multi-threading may improve performance significantly. For example, a multi-threaded connection-oriented application gateway may benefit by being run on a multi-processor platform. Likewise, on a uni-processor, I/O-bound applications may benefit from multi-threading since computation is overlapped with communication and disk operations.
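The difference between terminating one thread and terminating the whole process, noted under Robustness above, can be sketched as follows. This fragment assumes POSIX `pthread_exit` as the counterpart of the Solaris thr_exit call. The `worker` and `run_worker` functions are hypothetical names used only for illustration.

```cpp
#include <pthread.h>
#include <cassert>

// Worker that terminates only itself. Calling exit() at the same point
// would instead destroy every thread in the process.
void *worker (void *arg)
{
  long *result = static_cast<long *> (arg);
  assert (result != nullptr);
  *result = 42;
  pthread_exit (result); // Ends the calling thread only.
  return nullptr;        // Not reached.
}

// Spawns the worker, waits for it, and returns the value it produced
// (or -1 if the thread could not be created or joined).
long run_worker ()
{
  long value = 0;
  pthread_t tid;
  if (pthread_create (&tid, nullptr, worker, &value) != 0)
    return -1;

  void *status = nullptr;
  if (pthread_join (tid, &status) != 0)
    return -1;

  // The process is still running here, so the creating thread can
  // inspect the status that the worker passed to pthread_exit.
  return *static_cast<long *> (status);
}
```

The creating thread continues after the join, which would not be the case if the worker had called exit.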

3.5 Overview of Solaris 2.x Synchronization and Threading Mechanisms

This section outlines and illustrates the synchronization and threading mechanisms available on Solaris 2.x, POSIX pthreads, and Win32 threads. In these systems, threads share various resources (such as open files, signal handlers, and global memory) within a single process address space. Therefore, they must utilize synchronization mechanisms to coordinate access to shared data, to avoid the race conditions discussed in Section 3.4. To illustrate the need for synchronization mechanisms, consider the following C++ code fragment:

typedef u_long COUNTER;

COUNTER request_count; // At file scope

void *run_svc (Queue<Message> *q)

{

Message *mb; // Message buffer
