Input

1x1 Conv

f=1024

1x1 Conv

f=512

1x1 Conv

f=256

1x1 Conv

f=13

Global

Max Pooling

GCN

Backbone

Block

Fusion Block

MLP

Prediction

Block

Output

Add

Concat

k = # of nearest neighbors

f = # of filters or hidden units

d = dilation rate

InputInput

Input

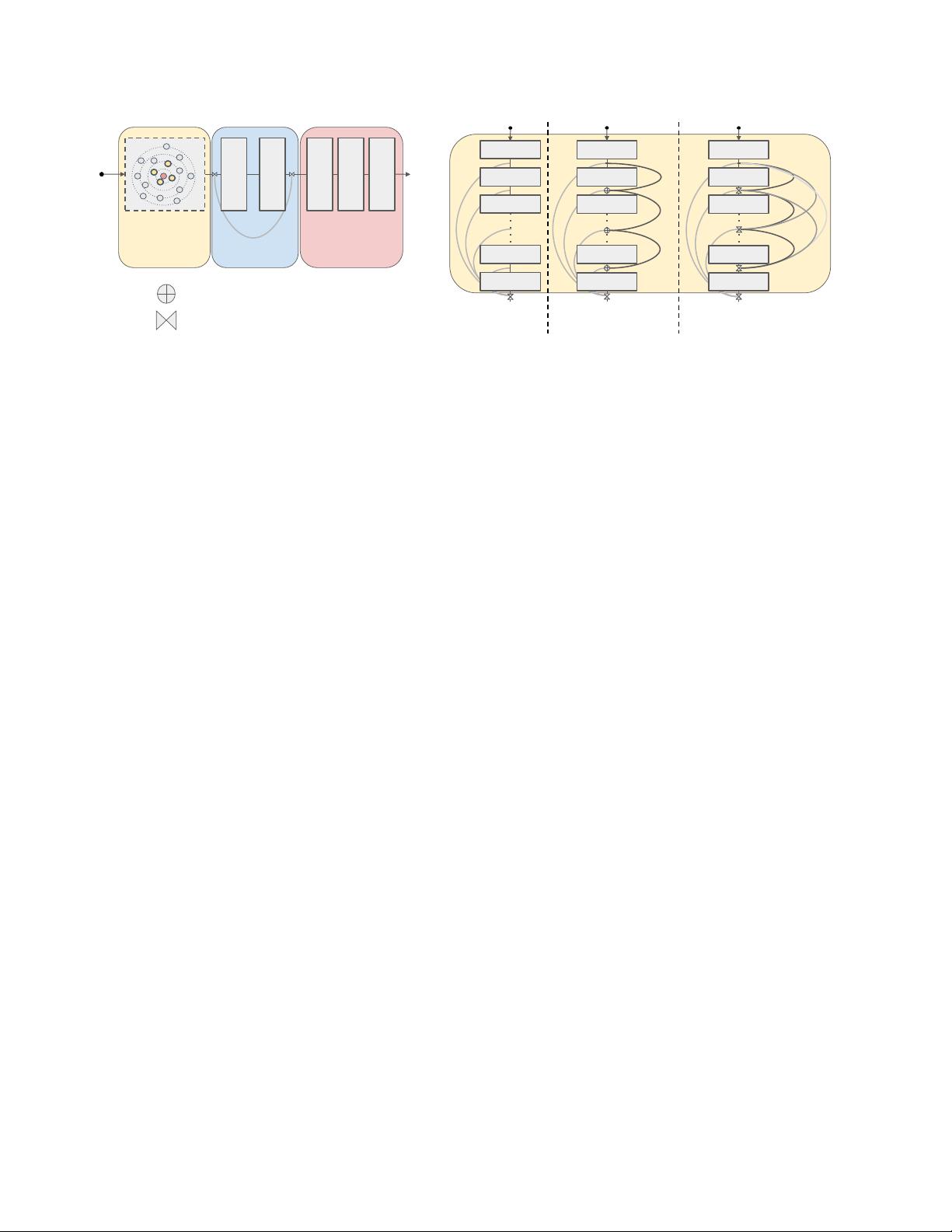

PlainGCN

k=16 f=64

PlainGCN

k=16 f=64

PlainGCN

k=16 f=64

PlainGCN

k=16 f=64

PlainGCN

k=16 f=64

ResGCN

k=16 f=64 d=1

ResGCN

k=16 f=64 d=1

ResGCN

k=16 f=64 d=2

ResGCN

k=16 f=64 d=26

ResGCN

k=16 f=64 d=27

DenseGCN

k=16 f=64 d=1

DenseGCN

k=16 f=32 d=1

DenseGCN

k=16 f=32 d=2

DenseGCN

k=16 f=32 d=26

DenseGCN

k=16 f=32 d=27

PlainGCN

Backbone

ResGCN

Backbone

DenseGCN

Backbone

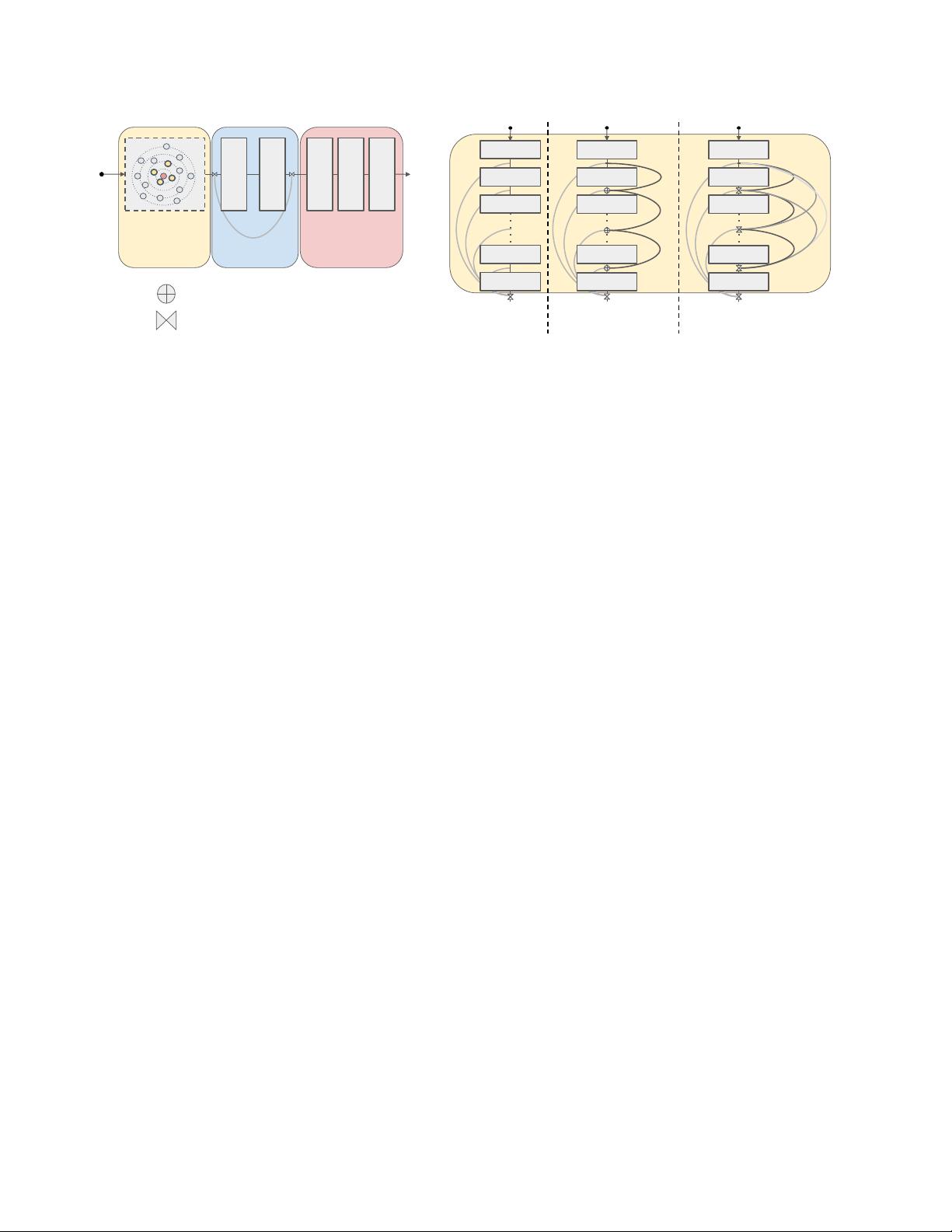

Figure 2. Proposed GCN architecture for point cloud semantic segmentation. (left) Our framework consists of three blocks: a GCN

Backbone Block (feature transformation of input point cloud), a Fusion Block (global feature generation and fusion), and an MLP Predic-

tion Block (point-wise label prediction). (right) We study three types of GCN Backbone Block (PlainGCN, ResGCN and DenseGCN) and

use two kinds of layer connection (vertex-wise addition used in ResGCN or vertex-wise concatenation used in DenseGCN).

we transfer these ideas to GCNs to unleash their full poten-

tial. This enables much deeper GCNs that reliably converge

in training and achieve superior performance in inference.

In the original graph learning framework, the underlying

mapping F, which takes a graph as an input and outputs

a new graph representation (see Equation (1)), is learned.

Here, we propose a graph residual learning framework that

learns an underlying mapping H by fitting another mapping

F. After G

l

is transformed by F, vertex-wise addition is

performed to obtain G

l+1

. The residual mapping F learns

to take a graph as input and outputs a residual graph repre-

sentation G

res

l+1

for the next layer. W

l

is the set of learnable

parameters at layer l. In our experiments, we refer to our

residual model as ResGCN.

G

l+1

= H(G

l

, W

l

)

= F(G

l

, W

l

) + G

l

= G

res

l+1

+ G

l

.

(3)

3.3. Dense Connections in GCNs

DenseNet [13] was proposed to exploit dense connectiv-

ity among layers, which improves information flow in the

network and enables efficient reuse of features among lay-

ers. Inspired by DenseNet, we adapt a similar idea to GCNs

so as to exploit information flow from different GCN layers.

In particular, we have:

G

l+1

= H(G

l

, W

l

)

= T (F(G

l

, W

l

), G

l

)

= T (F(G

l

, W

l

), ..., F(G

0

, W

0

), G

0

).

(4)

The operator T is a vertex-wise concatenation function that

densely fuses the input graph G

0

with all the intermedi-

ate GCN layer outputs. To this end, G

l+1

consists of all

the GCN transitions from previous layers. Since we fuse

GCN representations densely, we refer to our dense model

as DenseGCN. The growth rate of DenseGCN is equal to

the dimension D of the output graph (similar to DenseNet

for CNNs [13]). For example, if F produces a D dimen-

sional vertex feature, where the vertices of the input graph

G

0

are D

0

dimensional, the dimension of each vertex fea-

ture of G

l+1

is D

0

+ D × (l + 1).

3.4. Dilated Aggregation in GCNs

Dilated wavelet convolution is an algorithm originating

from the wavelet processing domain [12, 33]. To allevi-

ate spatial information loss caused by pooling operations,

Yu et al. [51] propose dilated convolutions as an alternative

to applying consecutive pooling layers for dense prediction

tasks, e.g. semantic image segmentation. Their experiments

demonstrate that aggregating multi-scale contextual infor-

mation using dilated convolutions can significantly increase

the accuracy of semantic segmentation tasks. The reason

behind this is the fact that dilation enlarges the receptive

field without loss of resolution. We believe that dilation can

also help with the receptive fields of deep GCNs. Therefore,

we introduce dilated aggregation to GCNs. There are many

possible ways to construct a dilated neighborhood. We use

a Dilated k-NN to find dilated neighbors after every GCN

layer and construct a Dilated Graph. In particular, for an

input graph G = (V, E) with Dilated k-NN and d as the di-

lation rate, the Dilated k-NN returns the k nearest neighbors

within the k × d neighborhood region by skipping every d

neighbors. The nearest neighbors are determined based on

a pre-defined distance metric. In our experiments, we use

the `

2

distance in the feature space of the current layer.

Let N

(d)

(v) denote the d-dilated neighborhood of vertex

v. If (u

1

, u

2

, ..., u

k×d

) are the first sorted k × d nearest

neighbors, vertices (u

1

, u

1+d

, u

1+2d

, ..., u

1+(k−1)d

) are the

d-dilated neighbors of vertex v (see Figure 3), i.e.

N

(d)

(v) = {u

1

, u

1+d

, u

1+2d

, ..., u

1+(k−1)d

}.

4