O. Sorkine / Laplacian Mesh Processing

(more equations than unknowns) and in general no exact so-

lution may exist. However, the system is full-rank and thus

has a unique solution in the least-squares sense:

˜

x = argmin

x

kLx − δ

(x)

k

2

+

∑

j∈C

ω

2

|x

j

− c

j

|

2

!

. (3)

The analytical least-squares solution is expressed in terms of

the rectangular (n + m) × n system matrix

˜

L from Eq. (2):

˜

x = (

˜

L

T

˜

L)

−1

˜

L

T

b,

where b = (δ ,ω c

1

,. . .,ω c

m

)

T

is the right-hand side vector

of the linear system. See Figure 3 for an example of a small

mesh and its associated matrix

˜

L.

An important practical aspect of surface reconstruction

from δ-coordinates is the availability of efficient and accu-

rate numerical methods for solving the least-squares prob-

lem (3). A recent comparative study [BBK05] shows that di-

rect methods prove to be superior for these types of problems

on moderately sized meshes (up to a few hundreds of thou-

sands of vertices). The system (3) can be solved by applying

Cholesky factorization to the associated normal equations

(

˜

L

T

˜

L)x =

˜

L

T

δ.

The matrix

˜

L is sparse, and M =

˜

L

T

˜

L is also sparse (al-

though not as sparse as

˜

L) and positive definite. By using

fill-reducing reordering, it is possible to compu te a sparse

Cholesky factorization of M:

M = R

T

R,

where R is an upper-triangular sparse matrix. The factoriza-

tion is computed once, and we can solve for several mesh

functions ( x, y and z) by back substitution:

R

T

ξ =

˜

L

T

δ

(x)

Rx = ξ .

The factorization takes the bulk of the computation time,

and the back-substitution step is usually very fast. Using ad-

vanced linear solvers, such as TAUCS library [

Tol03], makes

the computations very efficient. See Table 1 for timing data

on several typical meshes. Another important advantage of

direct solvers is the separation between the matrix process-

ing (factorization) and the right-hand side, which is only in-

volved in the fast back-substitution step. This permits fre-

quently changing the right-hand sides at the price of the fac-

torization pre-process which is done only once per fixed ma-

trix. This property is extremely useful for interactive mesh

editing, as described in Section 4. When the system ma-

trix is very large (million variables and more), the size of

the factor becomes too large to fit into memory. In this

case, it is possible to either use the out-of-core version of

Cholesky factorization (available e.g. with [Tol03]) or iter-

ative solvers whose complexity scales linearly with the size

of the system (for instance, see the recent work on multigrid

solvers [AKS05, SYBF06]).

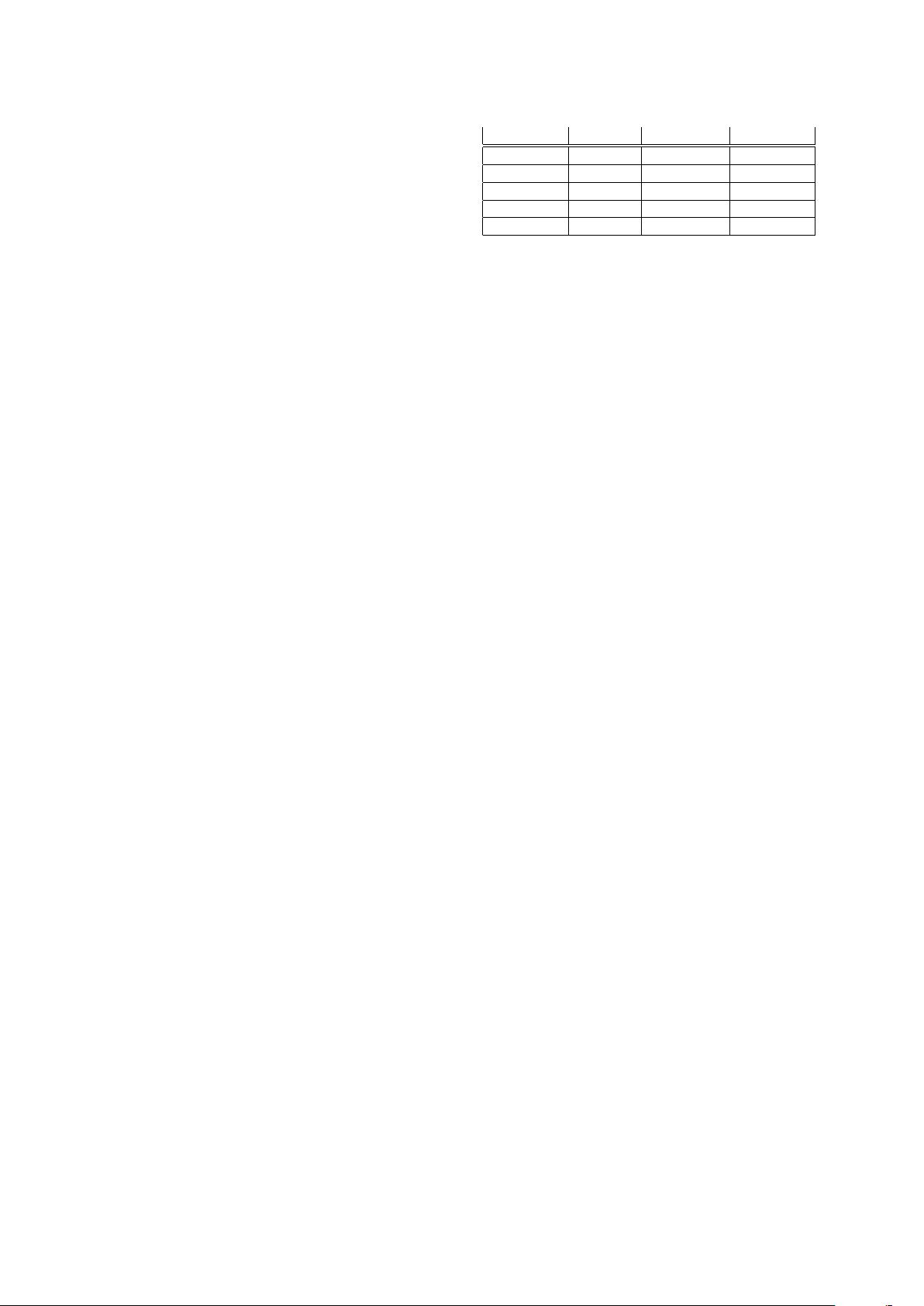

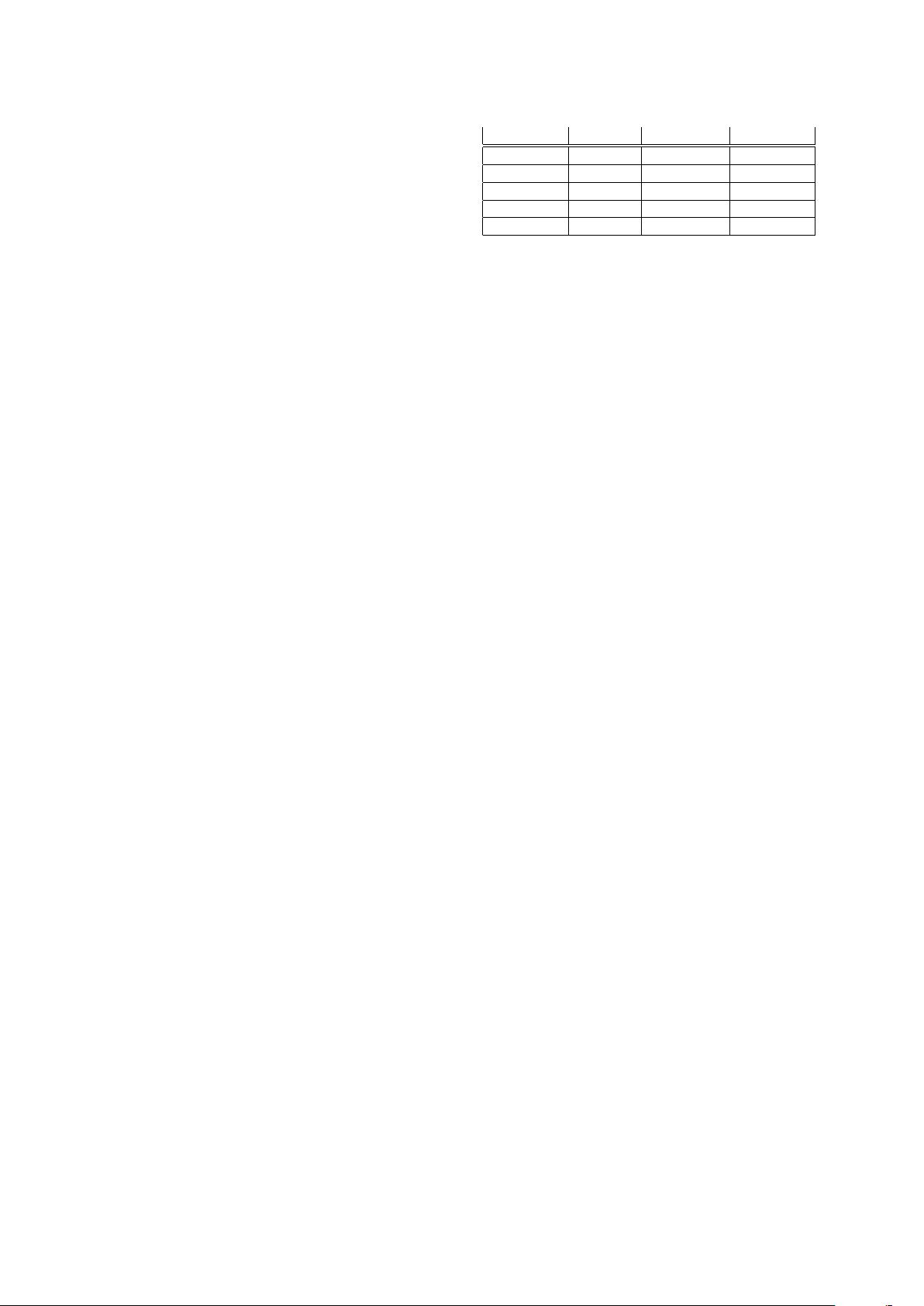

Model # vertices Factor (sec.) Solve (sec.)

Eight 2718 0.085 0.004

Horse 19851 0.900 0.032

Camel 39074 2.096 0.073

Feline 49864 2.750 0.110

Max Planck 100086 7.713 0.240

Table 1: Time measurements of manipulations of the (ex-

tended) Laplacian matrices for several typical meshes, on

a 3.4 GHz Pentium 4 computer. Factorization stands for

Cholesky factorization of

˜

L

T

˜

L and Solve denotes solving by

back-substitution for one mesh function.

Note that the accuracy of the solution is highly depen-

dent on the conditioning of the linear system. The Lapla-

cian matrix is notoriously ill-conditioned: when we add

only one anchor, the largest singular value of

˜

L is propor-

tional to the maximal degree in the mesh, while a good es-

timate for the smallest singular value is one over the prod-

uct of the maximum topological distance of a vertex from

the single anchor vertex, and the number of vertices in the

mesh [BH01, GM00]. For a typical n-vertex 3D mesh, the

condition number is therefore likely to be Θ(n

−1.5

), and it is

squared when we consider the normal equations. However,

adding anchors to the system improves its conditioning and

makes the factorization of the normal equations stable. A

rigorous way to bound the smallest singular value of

˜

L from

below is shown in [CCOST05]; the bound depends on the

number of anchors and their spacing across the mesh.

In summary, the transition from the global Cartesian rep-

resentation to differential representation is performed by a

linear operator with local support: the mesh Laplacian. And

vice versa: in order to recover the Cartesian coordinates

from the differential representation, one needs to solve a

sparse linear least-squares problem. This provides a power-

ful framework for surface manipulation: we will apply dif-

ferent modifications to the differential coordinates (such as

quantization for compression purposes) and/or pose addi-

tional modeling constraints, and then reconstruct the surface

by solving the least-squares problem. The advantages of this

framework can be summarized as follows:

• It strives to preserve local surface detail as much as possi-

ble under the constraints

• The least-squares solution smoothly distributes the error

across the entire domain, providing a graceful reconstruc-

tion

• Sparse linear systems can be solved very efficiently

2.3. Spectral properties

Let us consider the L

s

matrix, since it is symmetric and

thus simpler to analyze. The matrix L

s

is symmetric positive

c

The Eurographics Association 2005.