The actuarial tables published by insurance companies reflect their statistical analysis of the average life

expectancy of men and women at any given age. From these numbers, the insurance companies then

calculate the appropriate premiums for a particular individual to purchase a given amount of insurance.

Exploratory analysis of data makes use of numerical and graphical techniques to study patterns and

departures from patterns. Widely used descriptive statistical techniques include frequency distributions,

histograms, boxplots, scattergrams, error bar plots, and diagnostic plots.
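
As a minimal sketch of two of these techniques, the following Python code computes a frequency distribution and the five-number summary that underlies a boxplot, and flags values lying beyond the usual boxplot whiskers. The sample values, the function names, the bin width, and the 1.5 x IQR rule are assumptions chosen for illustration; they do not come from the text above.

```python
import statistics

def five_number_summary(values):
    """Return (min, Q1, median, Q3, max), the numbers a boxplot displays."""
    ordered = sorted(values)
    q1, median, q3 = statistics.quantiles(ordered, n=4, method="inclusive")
    return ordered[0], q1, median, q3, ordered[-1]

def frequency_distribution(values, bin_width):
    """Count how many values fall in each bin [lower, lower + bin_width)."""
    counts = {}
    for v in values:
        lower = (v // bin_width) * bin_width
        counts[lower] = counts.get(lower, 0) + 1
    return dict(sorted(counts.items()))

def unusual_values(values, k=1.5):
    """Flag values beyond k * IQR from the quartiles (assumed boxplot rule)."""
    _, q1, _, q3, _ = five_number_summary(values)
    iqr = q3 - q1
    return [v for v in values if v < q1 - k * iqr or v > q3 + k * iqr]

# Hypothetical sample, invented for illustration only.
sample = [12, 15, 17, 18, 21, 22, 22, 25, 29, 48]

summary = five_number_summary(sample)          # (12, 17.25, 21.5, 24.25, 48)
freq = frequency_distribution(sample, 10)      # {10: 4, 20: 5, 40: 1}
outliers = unusual_values(sample)              # [48]
```

The frequency counts are exactly what a histogram would draw as bar heights, so the same function can feed a plotting library if one is available.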

In examining the distribution of data, you should be able to detect important characteristics, such as shape,

location, variability, and unusual values. From careful observations of patterns in data, you can generate

conjectures about relationships among variables. The notion of how one variable may be associated with

another permeates almost all of statistics, from simple comparisons of proportions through linear

regression. The difference between association and causation must accompany this conceptual

development.

Data must be collected according to a well-developed plan if valid information on a conjecture is to be

obtained. The plan must identify important variables related to the conjecture, and specify how they are to

be measured. From the data collection plan, a statistical model can be formulated from which inferences

can be drawn.

As an example of statistical modeling with managerial implications, such as "what-if" analysis, consider

regression analysis. Regression analysis is a powerful technique for studying the relationship between

dependent variables (i.e., output, performance measure) and independent variables (i.e., inputs, factors,

decision variables). Summarizing relationships among the variables by the most appropriate equation (i.e.,

modeling) allows us to predict or identify the most influential factors and study their impacts on the output

for any changes in their current values.
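
To make the "what-if" idea concrete, here is a minimal sketch of simple linear regression with one independent variable, fitted by ordinary least squares. The variable names, the advertising and sales figures, and the choice of a straight-line model are assumptions made for illustration; a real analysis would first check whether a linear equation is appropriate for the data.

```python
def fit_line(x, y):
    """Ordinary least squares fit of y = intercept + slope * x."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return intercept, slope

def predict(intercept, slope, x_new):
    """Evaluate the fitted equation at a new input value."""
    return intercept + slope * x_new

# Hypothetical data: advertising spend (input) and sales (output).
advertising = [1, 2, 3, 4, 5]
sales = [2.9, 5.1, 7.0, 9.2, 10.8]

intercept, slope = fit_line(advertising, sales)

# "What-if" question: what output does the model predict
# if the input is raised to 6, beyond its current values?
what_if_sales = predict(intercept, slope, 6)
```

The fitted slope estimates how much the output changes per unit change in the input, which is the sense in which the equation identifies an influential factor. Predictions far outside the observed range of the input should be treated with caution.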

Marketing managers, for example, are frequently faced with the question, "What sample size do I

need?" This is an important and common statistical decision, which should be given due consideration,

since an inadequate sample size invariably leads to wasted resources. The sample size determination

section provides a practical solution to this risky decision.
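
As a rough illustration of what such a calculation can look like, the sketch below uses two standard textbook formulas based on the normal approximation: one for estimating a mean to within a margin of error, and one for estimating a proportion. These formulas, the function names, and the example inputs are assumptions for illustration and are not necessarily the method used in the sample size determination section.

```python
import math
from statistics import NormalDist

def sample_size_for_mean(sigma, margin, confidence=0.95):
    """n = (z * sigma / margin)^2, rounded up; sigma is an assumed
    prior guess at the population standard deviation."""
    z = NormalDist().inv_cdf(0.5 + confidence / 2)
    return math.ceil((z * sigma / margin) ** 2)

def sample_size_for_proportion(margin, p=0.5, confidence=0.95):
    """n = z^2 * p * (1 - p) / margin^2, rounded up; p = 0.5 is the
    most conservative guess when the proportion is unknown."""
    z = NormalDist().inv_cdf(0.5 + confidence / 2)
    return math.ceil(z ** 2 * p * (1 - p) / margin ** 2)

# Hypothetical inputs, invented for illustration only.
n_mean = sample_size_for_mean(sigma=15, margin=2)      # 217
n_prop = sample_size_for_proportion(margin=0.05)       # 385
```

Halving the margin of error roughly quadruples the required sample size, which is why the decision deserves attention before any data are collected.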

Statistical models are currently used in various fields of business and science. However, the terminology

differs from field to field. For example, the fitting of models to data is variously called calibration, history matching,

or data assimilation, all of which are synonymous with parameter estimation.

Your organization's database contains a wealth of information, yet the decision technology group members

tap only a fraction of it. Employees waste time scouring multiple sources for data. The decision-makers

are frustrated because they cannot get business-critical data exactly when they need it. Therefore, too many

decisions are based on guesswork, not facts. Many opportunities are also missed, if they are even noticed at

all.

Knowledge is what we know well. Information is the communication of knowledge. In every knowledge

exchange, there is a sender and a receiver. The sender makes common what is private and does the informing,

the communicating. Information can be classified into explicit and tacit forms. Explicit information can

be explained in structured form, while tacit information is inconsistent and fuzzy to explain. Note that data

are only crude information and not knowledge by themselves.

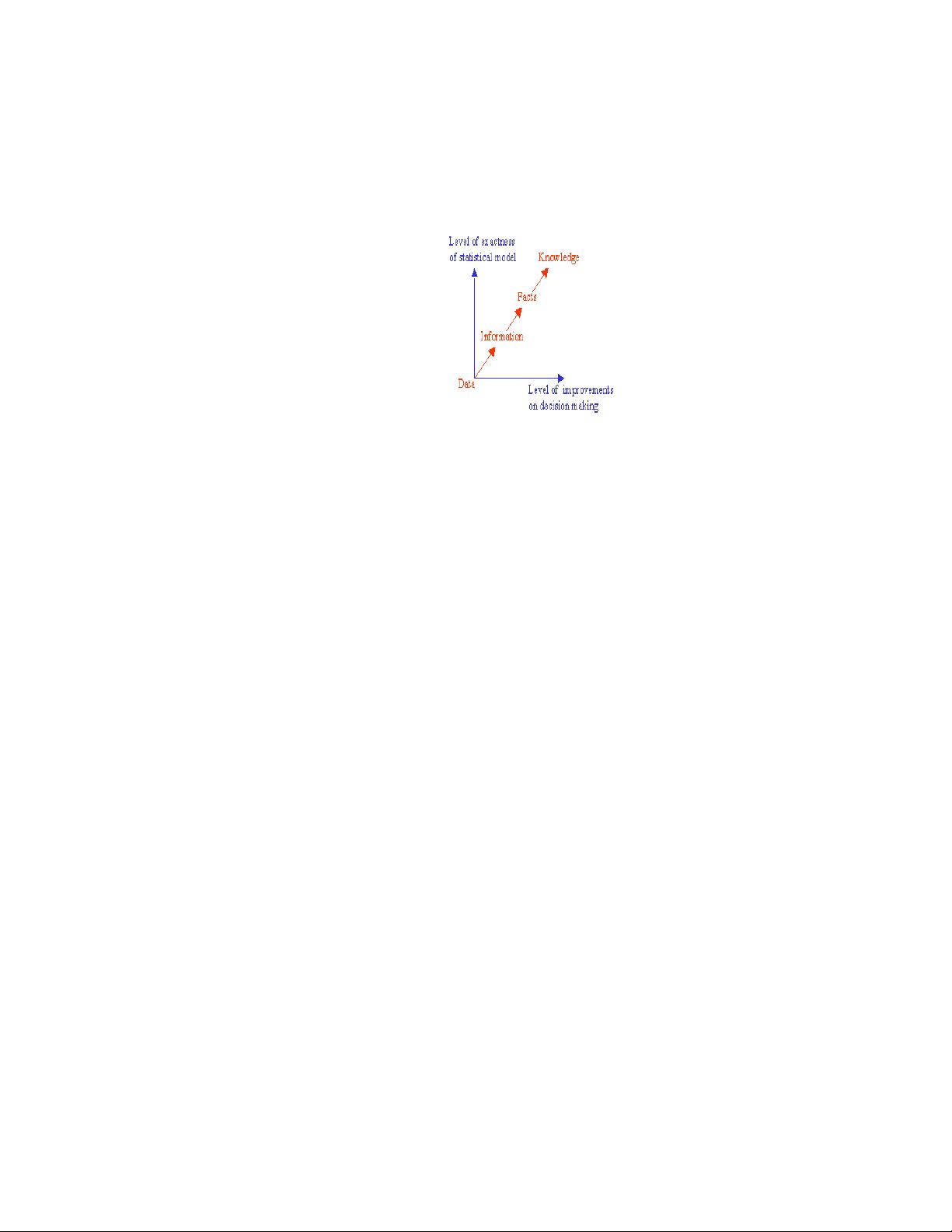

Data is known to be crude information and not knowledge by itself. The sequence from data to knowledge

is: from Data to Information, from Information to Facts, and finally, from Facts to Knowledge. Data

becomes information when it becomes relevant to your decision problem. Information becomes fact when

the data can support it. Facts are what the data reveals. However, the decisive instrumental (i.e., applied)

knowledge is expressed together with some statistical degree of confidence.
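
One common way to attach a degree of confidence to a fact drawn from data is a confidence interval. The sketch below computes an approximate interval for a population mean using the normal approximation. The data, the function name, and the use of a normal rather than a t critical value are assumptions for illustration; with a small sample, a t-based interval would be somewhat wider.

```python
import math
import statistics

def mean_confidence_interval(values, confidence=0.95):
    """Approximate confidence interval for the mean (normal approximation)."""
    n = len(values)
    mean = statistics.fmean(values)
    std_err = statistics.stdev(values) / math.sqrt(n)
    z = statistics.NormalDist().inv_cdf(0.5 + confidence / 2)
    return mean - z * std_err, mean + z * std_err

# Hypothetical weekly sales figures, invented for illustration only.
weekly_sales = [102, 98, 110, 105, 95, 108, 101, 99, 104, 107, 96, 103]

low_95, high_95 = mean_confidence_interval(weekly_sales, 0.95)
low_99, high_99 = mean_confidence_interval(weekly_sales, 0.99)
```

Asking for more confidence widens the interval, so the statement "average weekly sales lie between the two bounds" becomes safer but less precise.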
