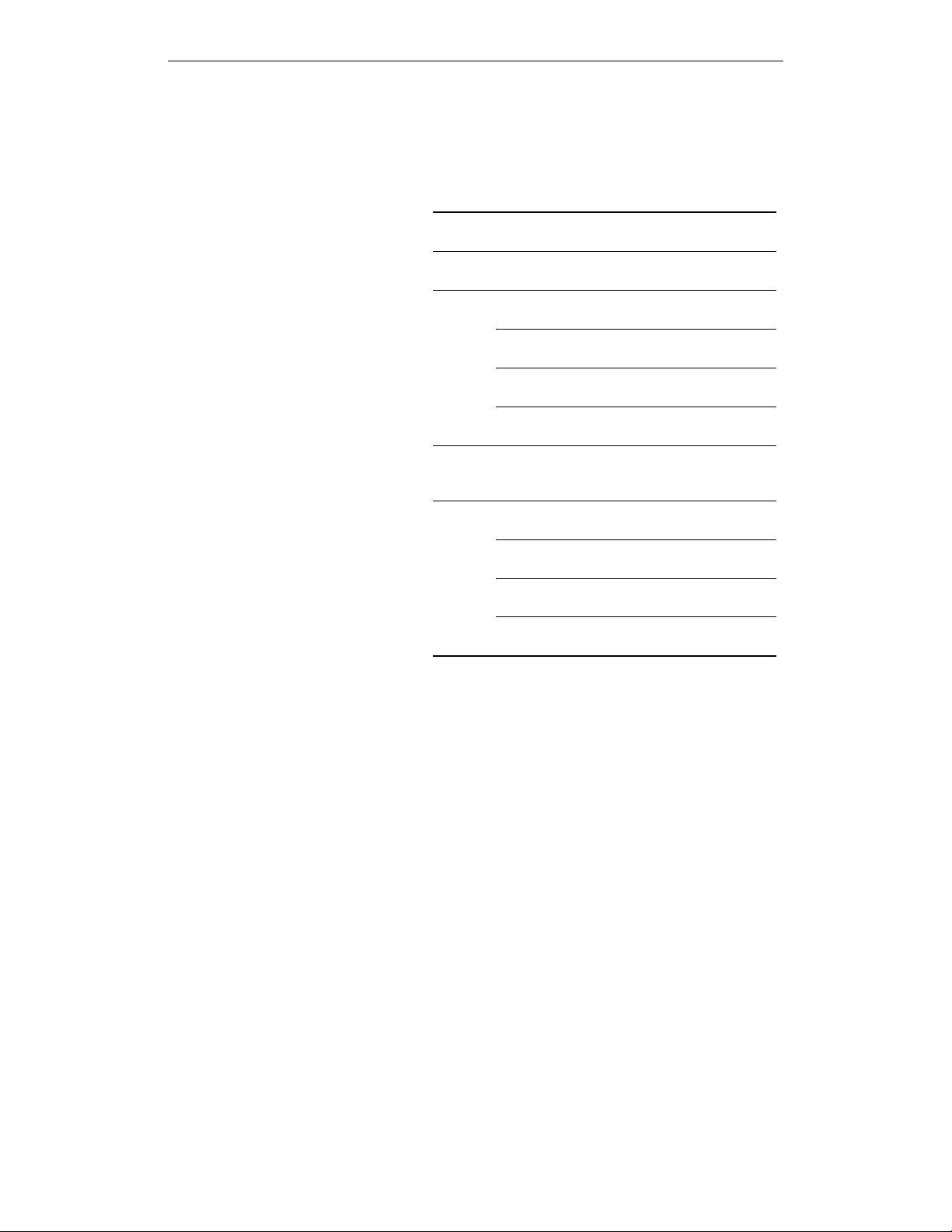

hyperparameters are exactly the same as in (Weiler & Cesa, 2019), namely a Wide-ResNet-16-8 trained for 1000 epochs with random crops, horizontal flips and Cutout (DeVries & Taylor, 2017) as data augmentation. The group column describes the equivariance group in each of the three residual blocks. For example, $D_8 D_4 D_1$ means that the first block is equivariant under reflections and 8 rotations, the second under reflections and 4 rotations, and the last one only under reflections. All layers use regular representations. The $D_8$-equivariant layers use $5 \times 5$ filters to improve equivariance, whereas the other layers use $3 \times 3$ filters.

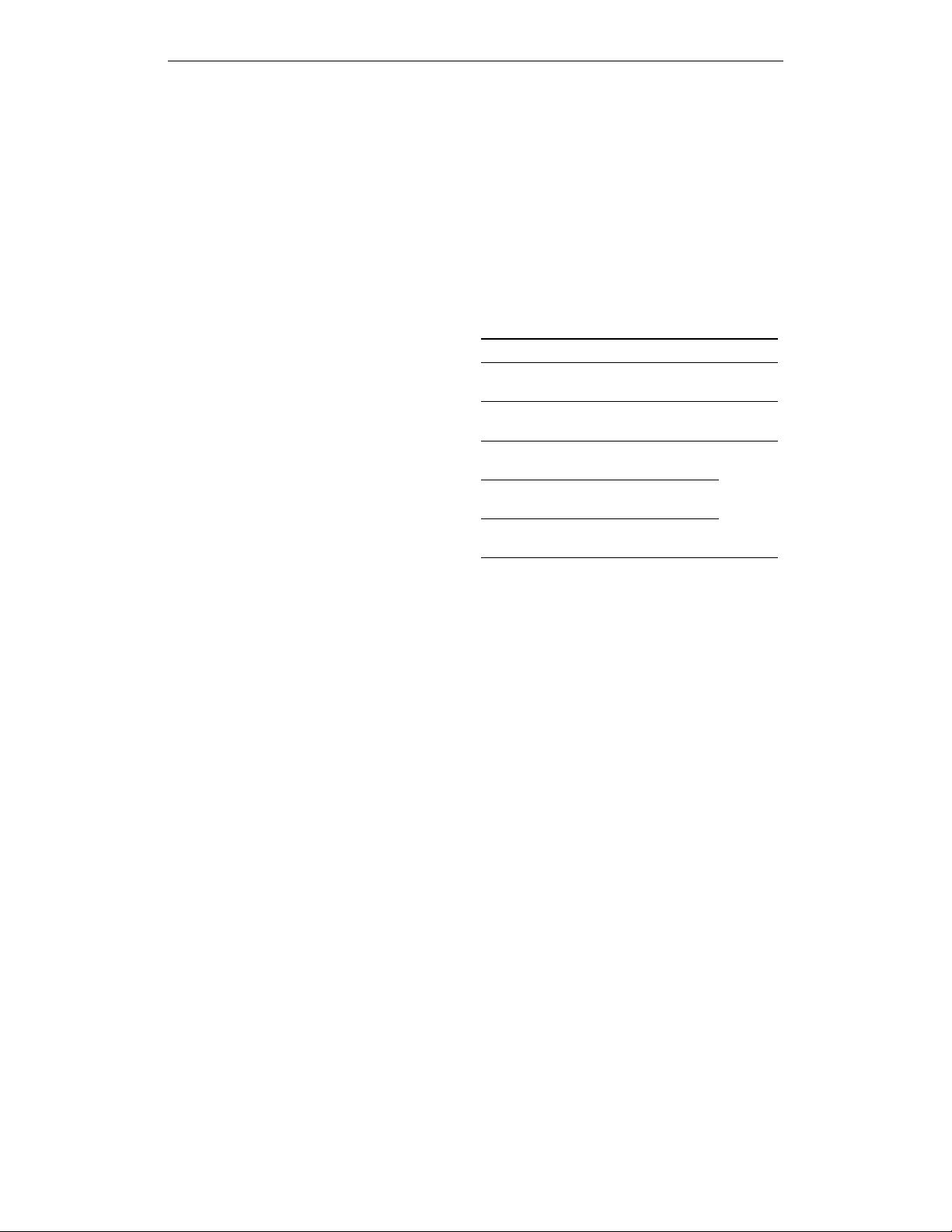

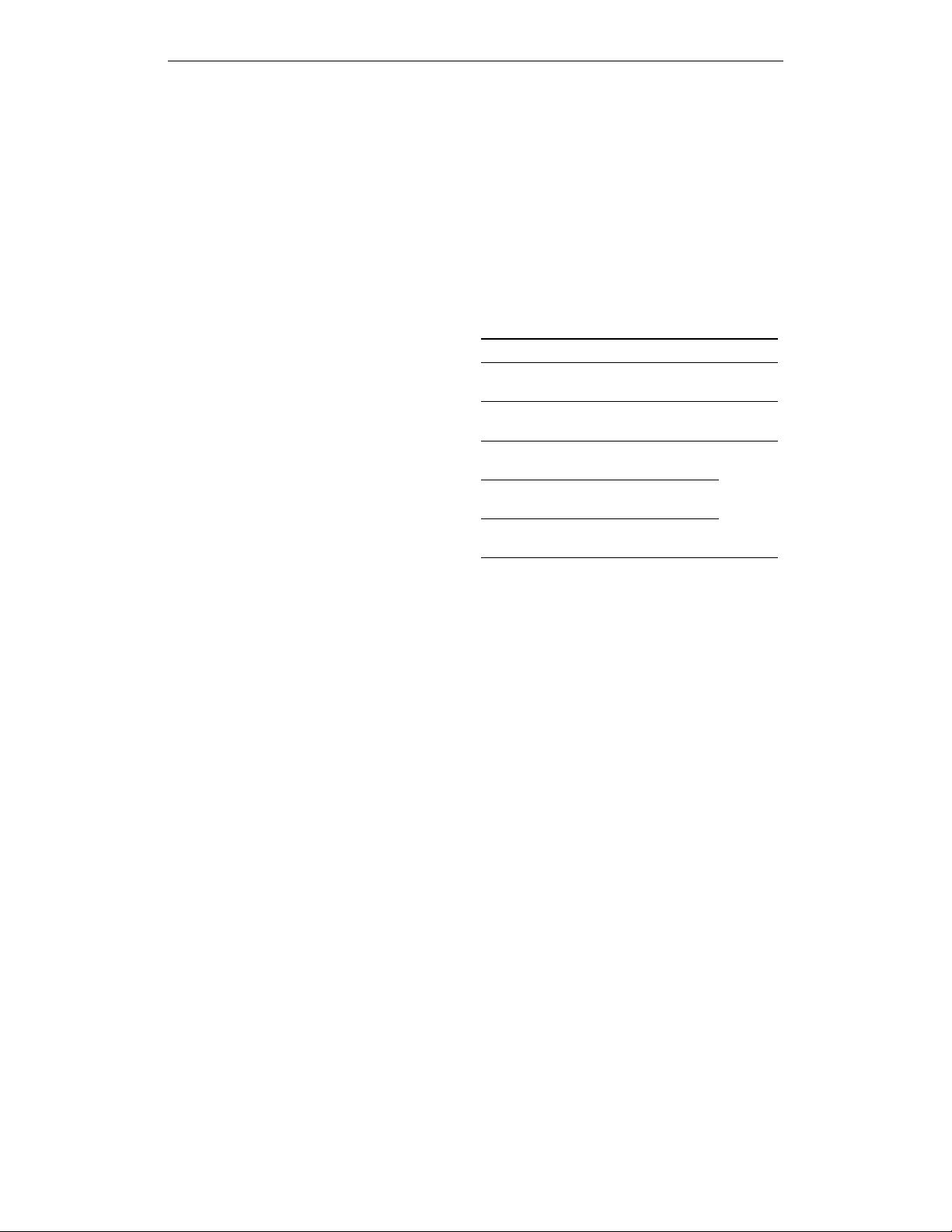

Table 2: STL-10 results, again over six runs. All models except the vanilla CNN use regular representations; see main text for details.

| Method | Groups | Error [%] | Params |
|---|---|---|---|
| Vanilla CNN | – | 12.7 ± 0.2 | 11M |
| Kernels | $D_8 D_4 D_1$ | 10.7 ± 0.6 | 4.2M |
| | $D_4 D_4 D_1$ | 10.2 ± 0.4 | |
| FD | $D_8 D_4 D_1$ | 12.1 ± 0.6 | 3.2M |
| | $D_4 D_4 D_1$ | 12.1 ± 0.7 | |
| RBF-FD | $D_8 D_4 D_1$ | 14.3 ± 0.4 | |
| | $D_4 D_4 D_1$ | 14.3 ± 0.4 | |
| Gauss | $D_8 D_4 D_1$ | 11.2 ± 0.3 | |
| | $D_4 D_4 D_1$ | 10.6 ± 0.8 | |

Discussion

While all equivariant models improve significantly over the non-equivariant CNN, the method of discretization plays an important role for PDOs. The reason that FD and RBF-FD underperform kernels is that they don't make full use of the stencil, since PDOs are inherently local operators. When a $5 \times 5$ stencil is used, the outermost entries are all very small compared to the inner ones, and even in $3 \times 3$ kernels, the four corners tend to be closer to zero (see Appendix K for images of stencils to illustrate this). Gaussian discretization performs significantly better and almost as well as kernels because its smoothing effect alleviates these issues. This fits the observation that kernels and Gaussian methods profit from using $5 \times 5$ kernels, whereas these do not help for FD and RBF-FD (and in fact decrease performance because of the smaller number of layers). We also observe a very small but rather consistent advantage of quotient representations over regular ones, demonstrating the practical usefulness of non-regular representations.
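The point about unused stencil entries can be illustrated with a small sketch. It is not the discretization code used for the experiments: it looks only at the Laplacian, uses the standard second-order finite-difference stencil, and assumes that a Gaussian discretization can be approximated by sampling the Laplacian of a Gaussian with an arbitrarily chosen width `sigma = 1`. All function names are hypothetical.

```python
import numpy as np

def fd_laplacian_stencil(size=5):
    """Second-order central finite-difference Laplacian, zero-padded to size x size."""
    stencil = np.zeros((size, size))
    c = size // 2
    stencil[c, c] = -4.0
    stencil[c - 1, c] = stencil[c + 1, c] = 1.0
    stencil[c, c - 1] = stencil[c, c + 1] = 1.0
    return stencil

def gaussian_laplacian_stencil(size=5, sigma=1.0):
    """Laplacian of an isotropic Gaussian sampled on the grid (sigma is an assumption)."""
    c = size // 2
    y, x = np.mgrid[-c:c + 1, -c:c + 1].astype(float)
    r2 = x**2 + y**2
    g = np.exp(-r2 / (2 * sigma**2))
    g /= g.sum()
    # Analytic Laplacian of a 2D Gaussian: (r^2 - 2 sigma^2) / sigma^4 * g
    return (r2 - 2 * sigma**2) / sigma**4 * g

def outer_ring_fraction(stencil):
    """Share of the total absolute weight that lies on the outermost ring."""
    inner = np.abs(stencil[1:-1, 1:-1]).sum()
    total = np.abs(stencil).sum()
    return (total - inner) / total

fd = fd_laplacian_stencil(5)
gauss = gaussian_laplacian_stencil(5, sigma=1.0)
print("FD outer-ring share:      ", outer_ring_fraction(fd))
print("Gaussian outer-ring share:", outer_ring_fraction(gauss))
```

In this simplified setting the finite-difference stencil places no weight on the outer ring of a $5 \times 5$ stencil (or on the corners of a $3 \times 3$ one), whereas the smoothed variant spreads weight over the whole stencil.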

5 RELATED WORK

Equivariant networks

Equivariant neural networks have gained a lot of popularity in the last few years, starting with group convolutional neural networks (Cohen & Welling, 2016; Hoogeboom et al., 2018; Weiler et al., 2018b). These networks apply a filter in all $H$-transformed poses, where $H$ is the group under which equivariance is desired, such as $H = \mathbb{R}^d \rtimes G$. Classical CNNs are the special case where $G$ is trivial and filters are thus only translated. Because of their additional transformations of filters, feature maps for group convolutional networks are defined on $H$ rather than on the original input space $\mathbb{R}^d$. The learned filters are unrestricted and the equivariance is guaranteed by the form of the group convolution itself.
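As a minimal sketch of this idea, the following NumPy code implements a first (lifting) layer for the rotation group $C_4$ on a periodic pixel grid and checks its equivariance numerically. The choice of $C_4$, the periodic boundary handling, and the function names `correlate_periodic` and `c4_lifting_correlation` are assumptions made for illustration; they are not taken from the cited works.

```python
import numpy as np

def correlate_periodic(image, filt):
    """Cross-correlation with periodic boundaries (odd-sized square filter)."""
    k = filt.shape[0]
    c = k // 2
    out = np.zeros(image.shape, dtype=float)
    for a in range(k):
        for b in range(k):
            # Rolling by (c - a, c - b) reads image[i + a - c, j + b - c] at (i, j).
            out += filt[a, b] * np.roll(image, shift=(c - a, c - b), axis=(0, 1))
    return out

def c4_lifting_correlation(image, filt):
    """Apply one filter in all four rotated poses; output has shape (4, N, N)."""
    return np.stack([correlate_periodic(image, np.rot90(filt, r)) for r in range(4)])

rng = np.random.default_rng(0)
image = rng.normal(size=(8, 8))
filt = rng.normal(size=(3, 3))  # unrestricted filter, no constraint imposed

features = c4_lifting_correlation(image, filt)
features_of_rotated = c4_lifting_correlation(np.rot90(image), filt)

# Rotating the input rotates every feature channel spatially and
# cyclically shifts the channels along the rotation axis.
expected = np.roll(np.rot90(features, k=1, axes=(1, 2)), shift=1, axis=0)
print(np.allclose(features_of_rotated, expected))
```

The filter itself is arbitrary; equivariance follows purely from applying it in every rotated pose, and the output carries an extra axis indexing the rotation, which is the discrete analogue of feature maps living on $H$ rather than on $\mathbb{R}^d$.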

A somewhat different approach is taken by steerable CNNs (Cohen & Welling, 2017; Weiler et al., 2018a; Weiler & Cesa, 2019; Brandstetter et al., 2021). They represent a single feature as a map from the base space, such as $\mathbb{R}^d$, to a fiber $\mathbb{R}^c$ that is equipped with a representation $\rho$ of the point group $G$. In contrast to group convolutional networks, they thus use the same domain $\mathbb{R}^d$ as classical CNNs for feature maps. Instead, they extend the codomain from $\mathbb{R}$ to $\mathbb{R}^c$. If regular representations are used for $\rho$, steerable CNNs become equivalent to group convolutional networks with $H = \mathbb{R}^d \rtimes G$ as the symmetry group. The convolution operation used by steerable CNNs is simply the classical convolution, so to achieve equivariance, the filters that are used need to be restricted by the $G$-steerability constraint.
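For reference, the $G$-steerability constraint can be written out as follows. This is the form commonly stated in the steerable CNN literature for an orthogonal point group $G \leq O(d)$; the symbols $k$, $\rho_{\text{in}}$, $\rho_{\text{out}}$, $c_{\text{in}}$ and $c_{\text{out}}$ are notation assumed here for the filter and for the representations and dimensions of the input and output fibers.

```latex
k : \mathbb{R}^d \to \mathbb{R}^{c_{\text{out}} \times c_{\text{in}}},
\qquad
k(g x) = \rho_{\text{out}}(g) \, k(x) \, \rho_{\text{in}}(g)^{-1}
\quad \text{for all } g \in G, \; x \in \mathbb{R}^d .
```

As a quick sanity check, if both $\rho_{\text{in}}$ and $\rho_{\text{out}}$ are trivial, the constraint reduces to $k(gx) = k(x)$, so the filter must be invariant under $G$.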

Differential operators and deep learning

Analogies between partial differential operators and convolutions have been noted and exploited in several previous works (Ruthotto & Haber, 2020; Shen et al., 2020; Long et al., 2018). The common thread throughout these is that discretizing PDOs on regular grids naturally leads to convolutions with certain filters, as long as the PDO coefficients are spatially constant.
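A short sketch makes this correspondence concrete. It discretizes the constant-coefficient operator $\alpha \, \partial_x + \beta \, \Delta$ with standard central finite differences and applies it as a single cross-correlation. The coefficients, the grid spacing `h`, the test function, and the helper name `correlate_valid` are illustrative assumptions.

```python
import numpy as np

def correlate_valid(image, stencil):
    """Cross-correlation without padding; the output shrinks by stencil size minus one."""
    k0, k1 = stencil.shape
    n0, n1 = image.shape[0] - k0 + 1, image.shape[1] - k1 + 1
    out = np.zeros((n0, n1))
    for a in range(k0):
        for b in range(k1):
            out += stencil[a, b] * image[a:a + n0, b:b + n1]
    return out

h = 0.1  # grid spacing (assumed)
ys, xs = np.meshgrid(np.arange(12) * h, np.arange(12) * h, indexing="ij")
f = xs**2 + 3.0 * ys  # test function sampled on the grid; x runs along axis 1

# Central finite-difference stencils for d/dx and the Laplacian.
d_dx = np.array([[0.0, 0.0, 0.0],
                 [-1.0, 0.0, 1.0],
                 [0.0, 0.0, 0.0]]) / (2 * h)
laplace = np.array([[0.0, 1.0, 0.0],
                    [1.0, -4.0, 1.0],
                    [0.0, 1.0, 0.0]]) / h**2

# Spatially constant coefficients: the whole PDO collapses into one fixed filter.
alpha, beta = 2.0, -0.5
pdo_stencil = alpha * d_dx + beta * laplace

result = correlate_valid(f, pdo_stencil)
exact = alpha * 2.0 * xs[1:-1, 1:-1] + beta * 2.0  # analytic value at interior points
print(np.allclose(result, exact))
```

Because the coefficients do not depend on position, the same stencil is applied at every grid point, which is exactly a convolution. With spatially varying coefficients the stencil would change from point to point and this identification would no longer hold.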

A different way in which differential operators have appeared in deep learning is via Neural ODEs (Chen et al., 2018) and related ideas (E, 2017; Ruthotto & Haber, 2020; Lu et al., 2018). There, a residual neural network is interpreted as approximately solving a differential equation, where depth
