22 Front. Comput. Sci., 2016, 10(1): 19–36

classifier (Fig. 4). At a later stage, the remaining candidates are grouped into text lines through a series of connection rules. However, such connection rules only suit horizontal or nearly horizontal text, so this algorithm is unable to handle text with larger inclination angles.
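To illustrate why horizontal connection rules break down on inclined text, the following is a minimal sketch of one such rule set. It is not the rule set of Ref. [10]; the function names, the box format and both thresholds (`min_overlap`, `max_gap_ratio`) are assumptions made for illustration.

```python
from typing import List, Tuple

Box = Tuple[float, float, float, float]  # (x_min, y_min, x_max, y_max)


def vertical_overlap(a: Box, b: Box) -> float:
    """Overlap of the two vertical extents, relative to the shorter box."""
    overlap = min(a[3], b[3]) - max(a[1], b[1])
    shorter = min(a[3] - a[1], b[3] - b[1])
    return max(0.0, overlap) / shorter if shorter > 0 else 0.0


def group_into_lines(boxes: List[Box],
                     min_overlap: float = 0.5,
                     max_gap_ratio: float = 1.0) -> List[List[Box]]:
    """Greedily chain character boxes, left to right, into horizontal lines.

    Both thresholds are illustrative assumptions, not published values.
    """
    lines: List[List[Box]] = []
    for box in sorted(boxes, key=lambda b: b[0]):
        for line in lines:
            last = line[-1]
            gap = box[0] - last[2]
            height = max(last[3] - last[1], box[3] - box[1])
            # Rule 1: the boxes must share most of their vertical extent.
            # Rule 2: the horizontal gap must be small relative to the height.
            if (vertical_overlap(last, box) >= min_overlap
                    and gap <= max_gap_ratio * height):
                line.append(box)
                break
        else:
            lines.append([box])
    return lines


# Three characters on a horizontal baseline, and three on a 45-degree baseline.
horizontal = [(0, 0, 10, 10), (12, 1, 22, 11), (24, 0, 34, 10)]
tilted = [(0, 0, 10, 10), (12, 12, 22, 22), (24, 24, 34, 34)]
```

With these toy inputs, the horizontal characters are chained into a single line, whereas the tilted characters share no vertical extent and are left as three separate single-character lines.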

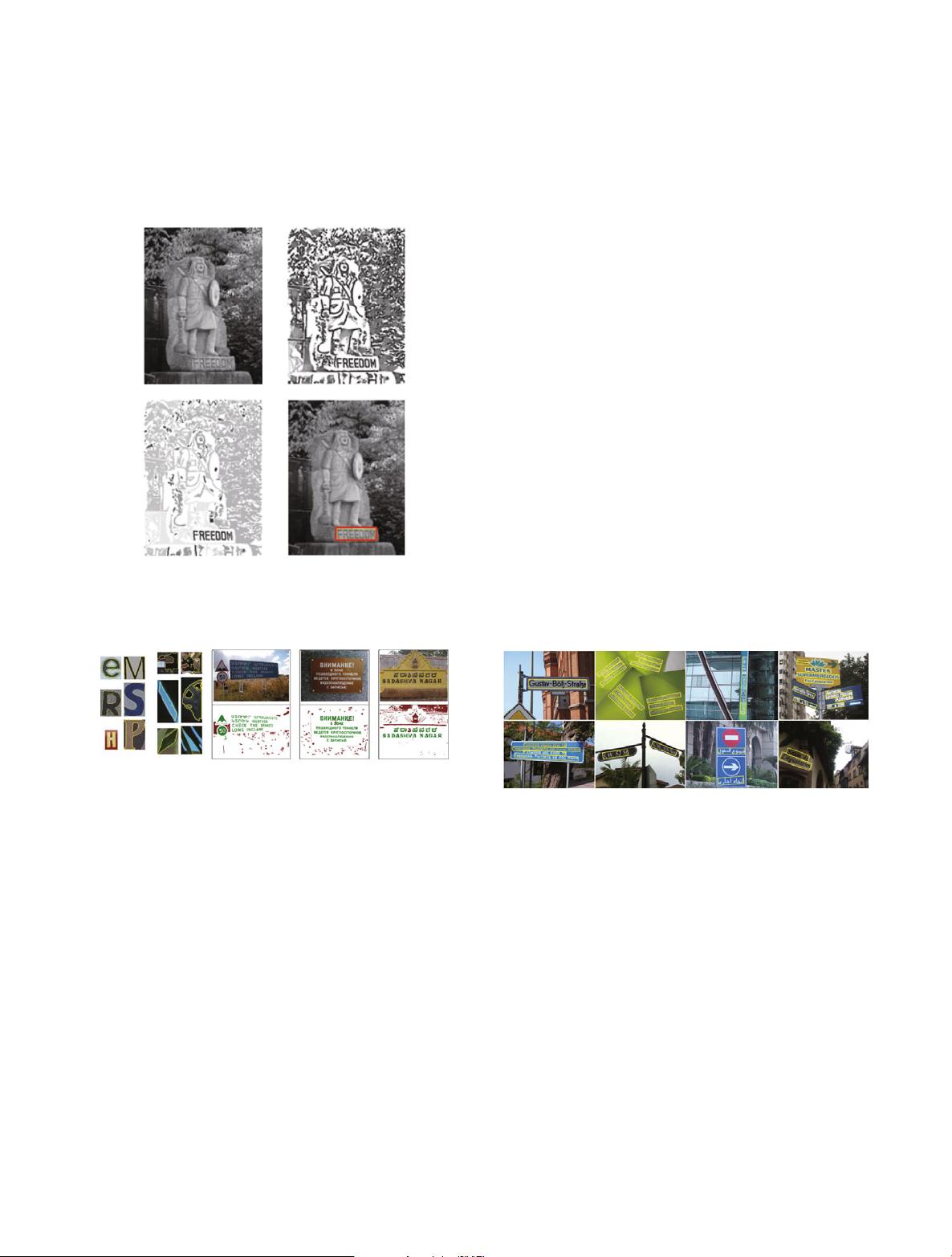

Fig. 3 Text detection examples of the algorithm of Epshtein et al. [9] (image reprinted from Ref. [9]). This work proposed SWT, an image operator that allows for direct extraction of character strokes from the edge map

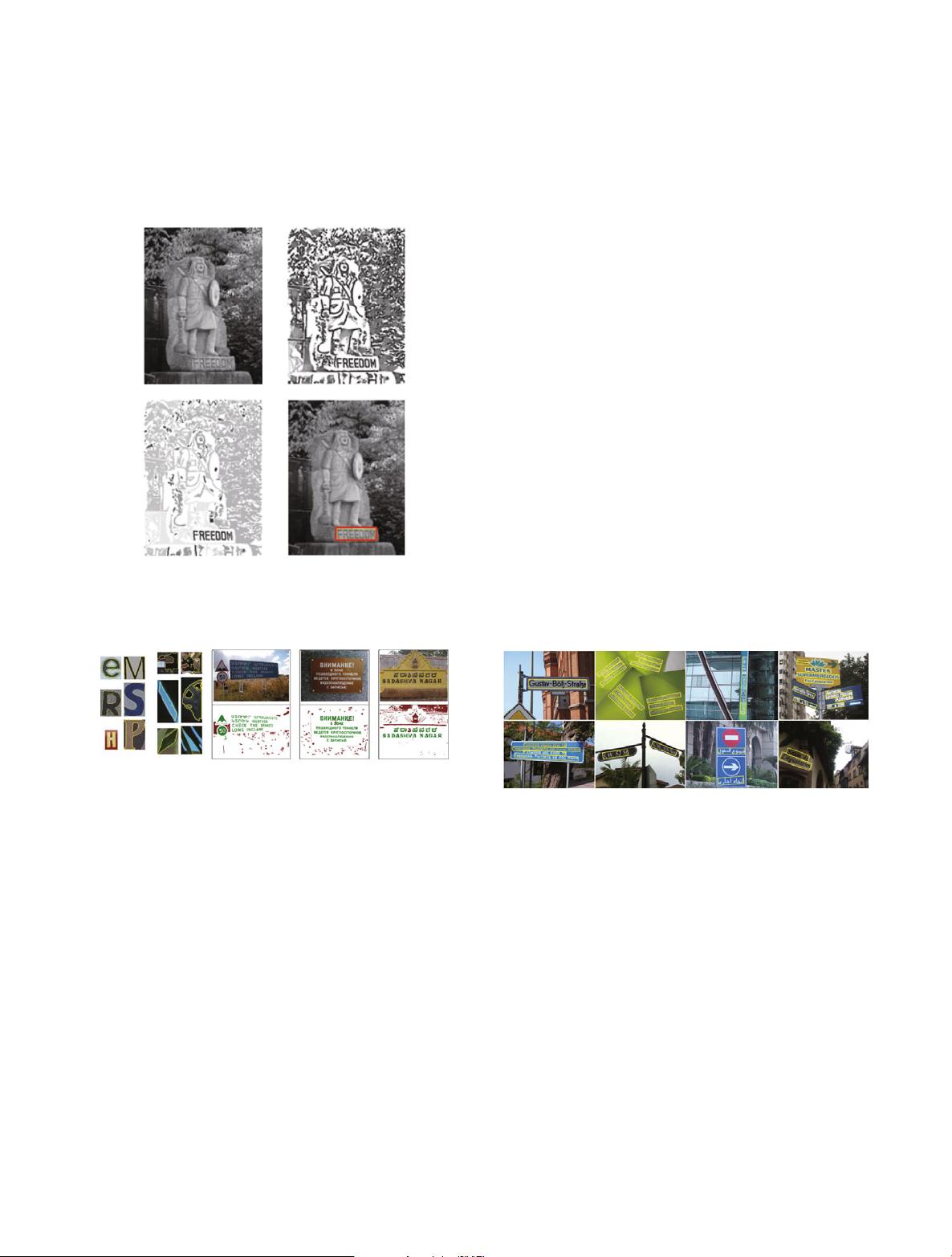

Fig. 4 Text detection examples of the algorithm of Neumann et al. [10] (image reprinted from Ref. [10]). This work was the first to introduce MSER into the field of scene text detection

SWT [9] and MSER [10] are two representative methods in the field of scene text detection, and they constitute the basis of many subsequent works [12–14,29,30,34,48,49].

The great success of sparse representation in face recognition [50] and image denoising [51] has inspired numerous researchers. For example, Zhao et al. [52] constructed a sparse dictionary from training samples and used it to judge whether a particular area in the image contains text. However, the generalization ability of the learned sparse dictionary is limited, so this method is unable to handle variations such as rotation and scale change.
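The idea of judging a region by how well a sparse dictionary reconstructs it can be sketched as follows. This is a toy illustration rather than the method of Ref. [52]: the dictionary is hand-written instead of learned, sparse coding is done with plain matching pursuit, and the names `residual_after_pursuit` and `looks_like_text` as well as the threshold `max_residual` are assumptions.

```python
import math
from typing import List, Sequence

Vector = Sequence[float]


def dot(a: Vector, b: Vector) -> float:
    return sum(x * y for x, y in zip(a, b))


def normalize(v: Vector) -> List[float]:
    norm = math.sqrt(dot(v, v))
    return [x / norm for x in v]


def residual_after_pursuit(patch: Vector, atoms: List[Vector],
                           n_atoms: int = 2) -> float:
    """Run matching pursuit with unit-norm atoms; return relative residual energy."""
    energy = dot(patch, patch)
    if energy == 0:
        return 0.0
    residual = list(patch)
    for _ in range(n_atoms):
        # Select the atom most correlated with the current residual.
        best = max(atoms, key=lambda a: abs(dot(residual, a)))
        coeff = dot(residual, best)
        residual = [r - coeff * a for r, a in zip(residual, best)]
    return dot(residual, residual) / energy


def looks_like_text(patch: Vector, atoms: List[Vector],
                    max_residual: float = 0.1) -> bool:
    """Accept a patch when a few atoms reconstruct it well (assumed threshold)."""
    return residual_after_pursuit(patch, atoms) <= max_residual


# Hypothetical dictionary for flattened 3x3 patches: one vertical and one
# horizontal stroke. A learned dictionary would contain many more atoms.
atoms = [normalize([0, 1, 0, 0, 1, 0, 0, 1, 0]),
         normalize([0, 0, 0, 1, 1, 1, 0, 0, 0])]

vertical_stroke = [0, 2, 0, 0, 2, 0, 0, 2, 0]
diagonal_stroke = [1, 0, 0, 0, 1, 0, 0, 0, 1]  # the same stroke, rotated
```

In this toy setting, the vertical stroke is reconstructed almost exactly and accepted, while the rotated stroke leaves a large residual and is rejected, which mirrors the sensitivity to rotation noted above.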

Different from the aforementioned algorithms, the approach proposed by Yi et al. [28] can detect tilted text in natural images. First, the image is divided into different regions according to the distribution of pixels in color space; then the regions are combined into connected components according to properties such as color similarity, spatial distance and relative size of regions. Finally, non-text components are discarded by a set of rules. However, this method assumes that the input image consists of several main colors, which is not necessarily true for complex natural images. In addition, it relies on many manually designed filtering rules and parameters, so it is difficult to generalize to large-scale complex image data sets.
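The first stage of such a color-based pipeline can be sketched as below. The sketch is not the implementation of Ref. [28]: it assumes that the main colors are already known (the `palette` argument), labels each pixel by its nearest main color, and extracts 4-connected components; the later grouping and rule-based filtering stages are omitted. All names are hypothetical.

```python
from collections import deque
from typing import List, Tuple

Color = Tuple[int, int, int]
Component = Tuple[int, List[Tuple[int, int]]]  # (color index, pixel coordinates)


def nearest_color(pixel: Color, palette: List[Color]) -> int:
    """Index of the palette color closest to the pixel in RGB space."""
    return min(range(len(palette)),
               key=lambda i: sum((p - q) ** 2 for p, q in zip(pixel, palette[i])))


def color_components(image: List[List[Color]],
                     palette: List[Color]) -> List[Component]:
    """Label pixels by nearest main color, then extract 4-connected components."""
    rows, cols = len(image), len(image[0])
    labels = [[nearest_color(px, palette) for px in row] for row in image]
    seen = [[False] * cols for _ in range(rows)]
    components: List[Component] = []
    for r in range(rows):
        for c in range(cols):
            if seen[r][c]:
                continue
            seen[r][c] = True
            queue, pixels = deque([(r, c)]), []
            while queue:  # breadth-first flood fill over same-label neighbors
                y, x = queue.popleft()
                pixels.append((y, x))
                for ny, nx in ((y + 1, x), (y - 1, x), (y, x + 1), (y, x - 1)):
                    if (0 <= ny < rows and 0 <= nx < cols
                            and not seen[ny][nx]
                            and labels[ny][nx] == labels[r][c]):
                        seen[ny][nx] = True
                        queue.append((ny, nx))
            components.append((labels[r][c], pixels))
    return components


# Toy 3x5 image: two dark vertical strokes on a light background.
W, K = (250, 250, 250), (10, 10, 10)
image = [[W, K, W, K, W] for _ in range(3)]
palette = [(255, 255, 255), (0, 0, 0)]  # assumed main colors
components = color_components(image, palette)
dark = [pixels for index, pixels in components if index == 1]
```

Here `dark` contains two components of three pixels each, one per stroke. The sketch also makes the limitation visible: when an image has no small set of dominant colors, the nearest-color labeling fragments characters or merges them with the background.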

Shivakumara et al. [53] also proposed a method for multi-oriented text detection. The method extracts candidate regions by clustering in the Fourier-Laplace space and divides the regions into distinct components using skeletonization. However, these components generally do not correspond to strokes or characters, but only to text blocks. This method cannot be directly compared with other methods quantitatively, since it is not able to detect characters or words directly.

Based on SWT [9], Yao et al. [12] proposed an algorithm that can detect texts of arbitrary orientations in natural images (Fig. 5). This algorithm is equipped with a two-level classification scheme and two sets of rotation and rotation-invariant features specially designed for capturing the intrinsic characteristics of characters in natural scenes.

Fig. 5 Text detection examples of the algorithm of Yao et al. [12] (image reprinted from Ref. [12]). Different from previous methods, which have focused on horizontal or near-horizontal texts, this algorithm is able to detect texts of varying orientations in natural images

Huang et al. [29] presented a new operator based on the Stroke Width Transform, called the Stroke Feature Transform (SFT). To solve the mismatch problem of edge points in the original Stroke Width Transform, SFT introduces color consistency and constrains the relations of local edge points, producing better component extraction results. The detection performance of SFT on standard datasets is significantly higher than that of other methods, but only for horizontal texts.
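The effect of a color-consistency check on edge pairing can be illustrated with a one-dimensional toy. The sketch below is neither SWT nor SFT as published: it pairs a falling edge with the next rising edge along a single scanline of gray values (dark text on a light background is assumed) and optionally rejects pairs whose interior pixels vary too much. The function name and both parameters are assumptions.

```python
from typing import List, Optional, Tuple


def paired_strokes(scanline: List[int],
                   edge_threshold: int = 50,
                   color_tolerance: Optional[int] = None) -> List[Tuple[int, int]]:
    """Return (start, width) for runs bounded by a falling and a rising edge.

    If color_tolerance is given, a run is kept only when its pixel values
    differ by at most that amount (a simple color-consistency check).
    """
    strokes = []
    start = None  # index of the first pixel after the latest falling edge
    for i in range(len(scanline) - 1):
        step = scanline[i + 1] - scanline[i]
        if step <= -edge_threshold:
            start = i + 1
        elif step >= edge_threshold and start is not None:
            run = scanline[start:i + 1]
            if color_tolerance is None or max(run) - min(run) <= color_tolerance:
                strokes.append((start, len(run)))
            start = None
    return strokes


# A genuine dark stroke (pixels 1-3) followed by a brightness ramp (pixels 6-9)
# whose two bounding edges do not belong to the same stroke.
scanline = [200, 20, 22, 21, 200, 200, 20, 60, 100, 140, 200]

without_check = paired_strokes(scanline)
with_check = paired_strokes(scanline, color_tolerance=10)
```

Without the check, both the stroke and the ramp are reported, as `[(1, 3), (6, 4)]`. With the check, only the uniformly dark run `(1, 3)` survives, which is the kind of mismatched edge pair that a color-consistency constraint is meant to suppress.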

In Ref. [30], Huang et al. proposed a novel framework for scene text detection, which integrates Maximally Stable Extremal Regions (MSER) and convolutional neural networks (CNNs).

The MSER operator works in the front-end to extract text

candidates, while a CNN based classifier is applied to cor-