Published as a conference paper at ICLR 2021

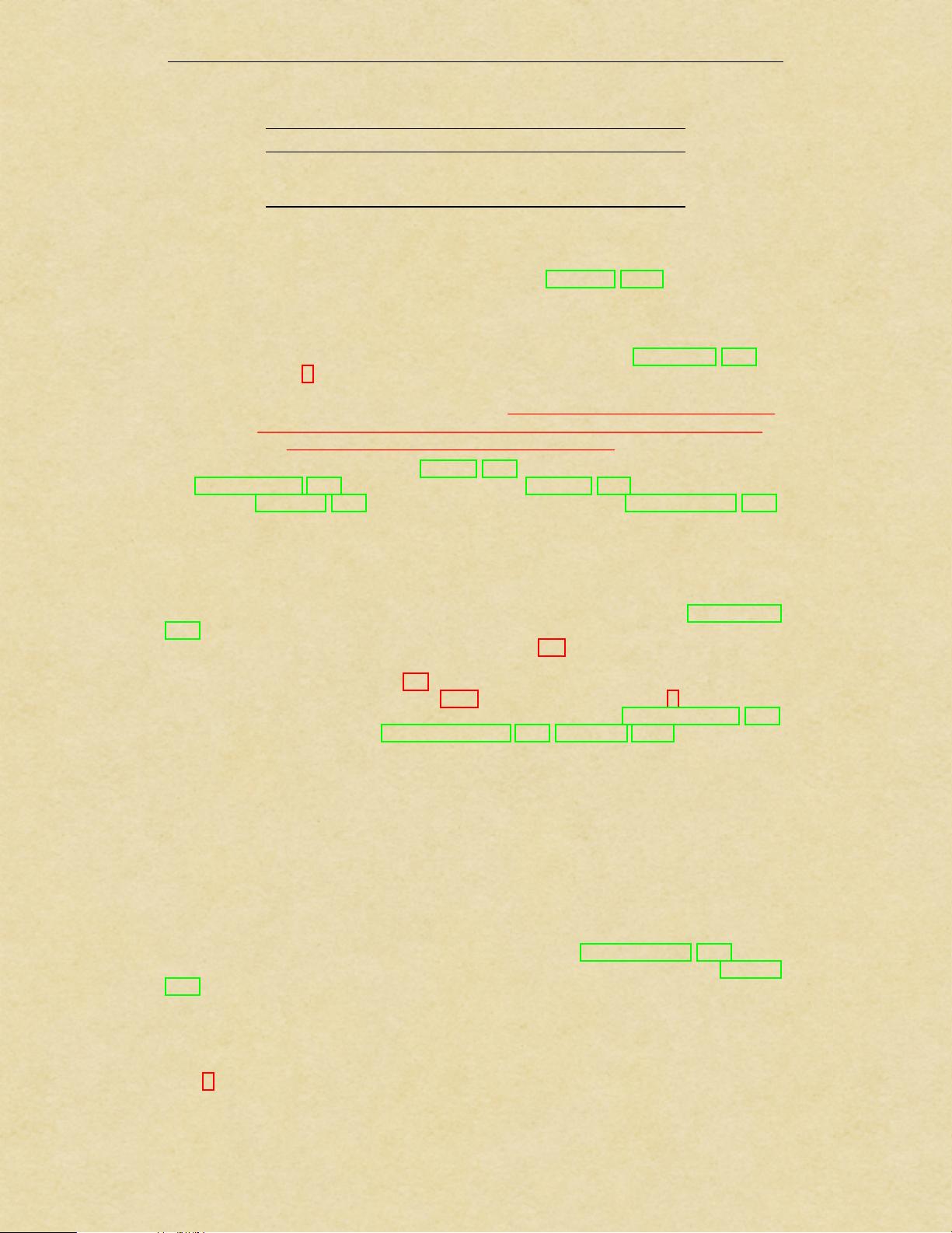

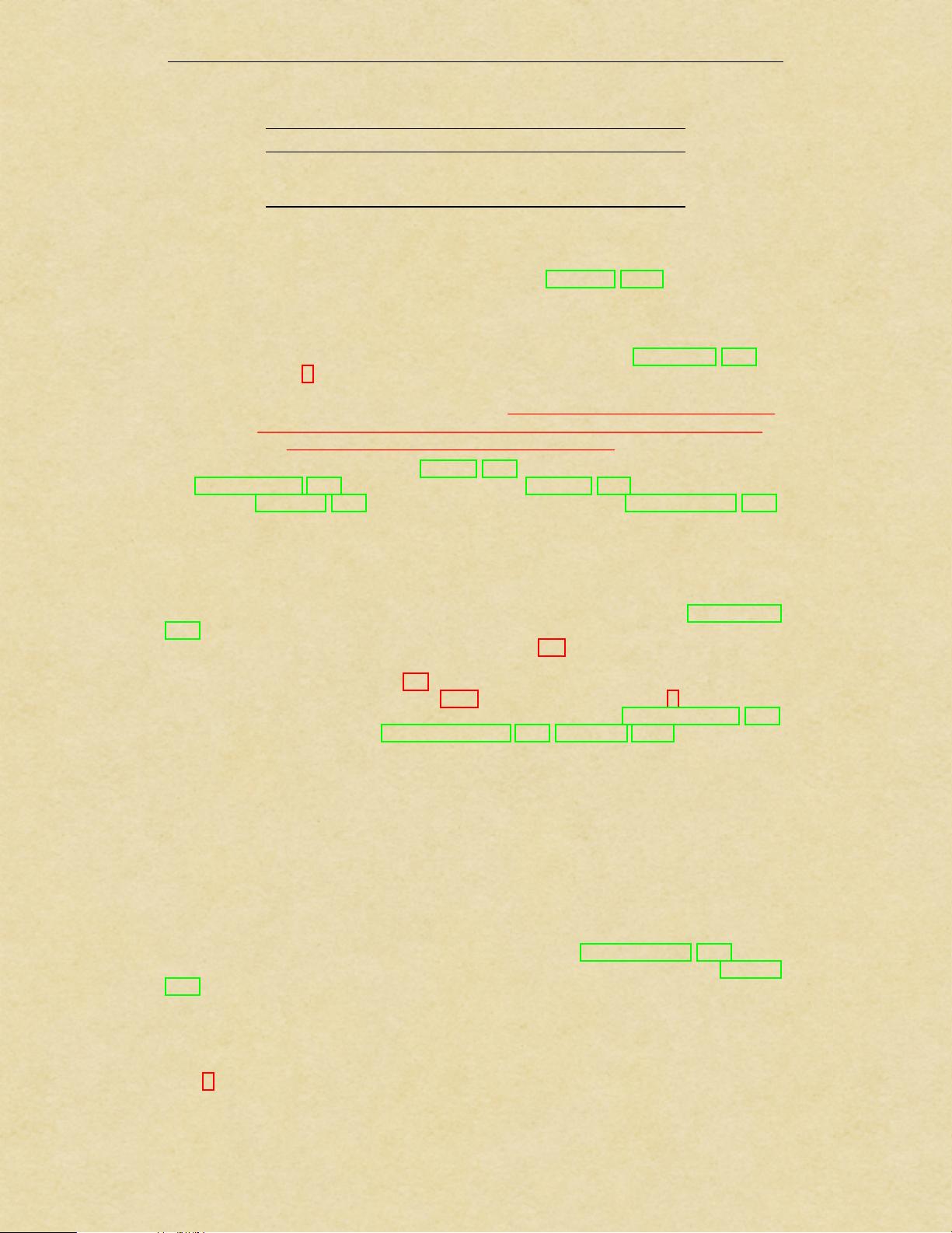

Model Layers Hidden size D MLP size Heads Params

ViT-Base 12 768 3072 12 86M

ViT-Large 24 1024 4096 16 307M

ViT-Huge 32 1280 5120 16 632M

Table 1: Details of Vision Transformer model variants.

We also evaluate on the 19-task VTAB classification suite (Zhai et al., 2019b). VTAB evaluates

low-data transfer to diverse tasks, using 1 000 training examples per task. The tasks are divided into

three groups: Natural – tasks like the above, Pets, CIFAR, etc. Specialized – medical and satellite

imagery, and Structured – tasks that require geometric understanding like localization.

Model Variants. We base ViT configurations on those used for BERT (Devlin et al., 2019), as

summarized in Table 1. The “Base” and “Large” models are directly adopted from BERT and we

add the larger “Huge” model. In what follows we use brief notation to indicate the model size and

the input patch size: for instance, ViT-L/16 means the “Large” variant with 16 ⇥ 16 input patch size.

Note that the Transformer’s sequence length is inversely proportional to the square of the patch size,

thus models with smaller patch size are computationally more expensive.

For the baseline CNNs, we use ResNet (He et al., 2016), but replace the Batch Normalization lay-

ers (Ioffe & Szegedy, 2015) with Group Normalization (Wu & He, 2018), and used standardized

convolutions (Qiao et al., 2019). These modifications improve transfer (Kolesnikov et al., 2020),

and we denote the modified model “ResNet (BiT)”. For the hybrids, we feed the intermediate fea-

ture maps into ViT with patch size of one “pixel”. To experiment with different sequence lengths,

we either (i) take the output of stage 4 of a regular ResNet50 or (ii) remove stage 4, place the same

number of layers in stage 3 (keeping the total number of layers), and take the output of this extended

stage 3. Option (ii) results in a 4x longer sequence length, and a more expensive ViT model.

Training & Fine-tuning. We train all models, including ResNets, using Adam (Kingma & Ba,

2015) with

1

=0.9,

2

=0.999, a batch size of 4096 and apply a high weight decay of 0.1, which

we found to be useful for transfer of all models (Appendix D.1 shows that, in contrast to common

practices, Adam works slightly better than SGD for ResNets in our setting). We use a linear learning

rate warmup and decay, see Appendix B.1 for details. For fine-tuning we use SGD with momentum,

batch size 512, for all models, see Appendix B.1.1. For ImageNet results in Table 2, we fine-tuned at

higher resolution: 512 for ViT-L/16 and 518 for ViT-H/14, and also used Polyak & Juditsky (1992)

averaging with a factor of 0.9999 (Ramachandran et al., 2019; Wang et al., 2020b).

Metrics. We report results on downstream datasets either through few-shot or fine-tuning accuracy.

Fine-tuning accuracies capture the performance of each model after fine-tuning it on the respective

dataset. Few-shot accuracies are obtained by solving a regularized least-squares regression problem

that maps the (frozen) representation of a subset of training images to {1, 1}

K

target vectors. This

formulation allows us to recover the exact solution in closed form. Though we mainly focus on

fine-tuning performance, we sometimes use linear few-shot accuracies for fast on-the-fly evaluation

where fine-tuning would be too costly.

4.2 COMPARISON TO STATE OF THE ART

We first compare our largest models – ViT-H/14 and ViT-L/16 – to state-of-the-art CNNs from

the literature. The first comparison point is Big Transfer (BiT) (Kolesnikov et al., 2020), which

performs supervised transfer learning with large ResNets. The second is Noisy Student (Xie et al.,

2020), which is a large EfficientNet trained using semi-supervised learning on ImageNet and JFT-

300M with the labels removed. Currently, Noisy Student is the state of the art on ImageNet and

BiT-L on the other datasets reported here. All models were trained on TPUv3 hardware, and we

report the number of TPUv3-core-days taken to pre-train each of them, that is, the number of TPU

v3 cores (2 per chip) used for training multiplied by the training time in days.

Table 2 shows the results. The smaller ViT-L/16 model pre-trained on JFT-300M outperforms BiT-L

(which is pre-trained on the same dataset) on all tasks, while requiring substantially less computa-

tional resources to train. The larger model, ViT-H/14, further improves the performance, especially

on the more challenging datasets – ImageNet, CIFAR-100, and the VTAB suite. Interestingly, this

5

我的内容管理

收起

我的内容管理

收起

我的资源

快来上传第一个资源

我的资源

快来上传第一个资源

我的收益 登录查看自己的收益

我的收益 登录查看自己的收益 我的积分

登录查看自己的积分

我的积分

登录查看自己的积分

我的C币

登录后查看C币余额

我的C币

登录后查看C币余额

我的收藏

我的收藏  我的下载

我的下载  下载帮助

下载帮助

信息提交成功

信息提交成功