Expert Systems With Applications 238 (2024) 122302

X. Shen et al.

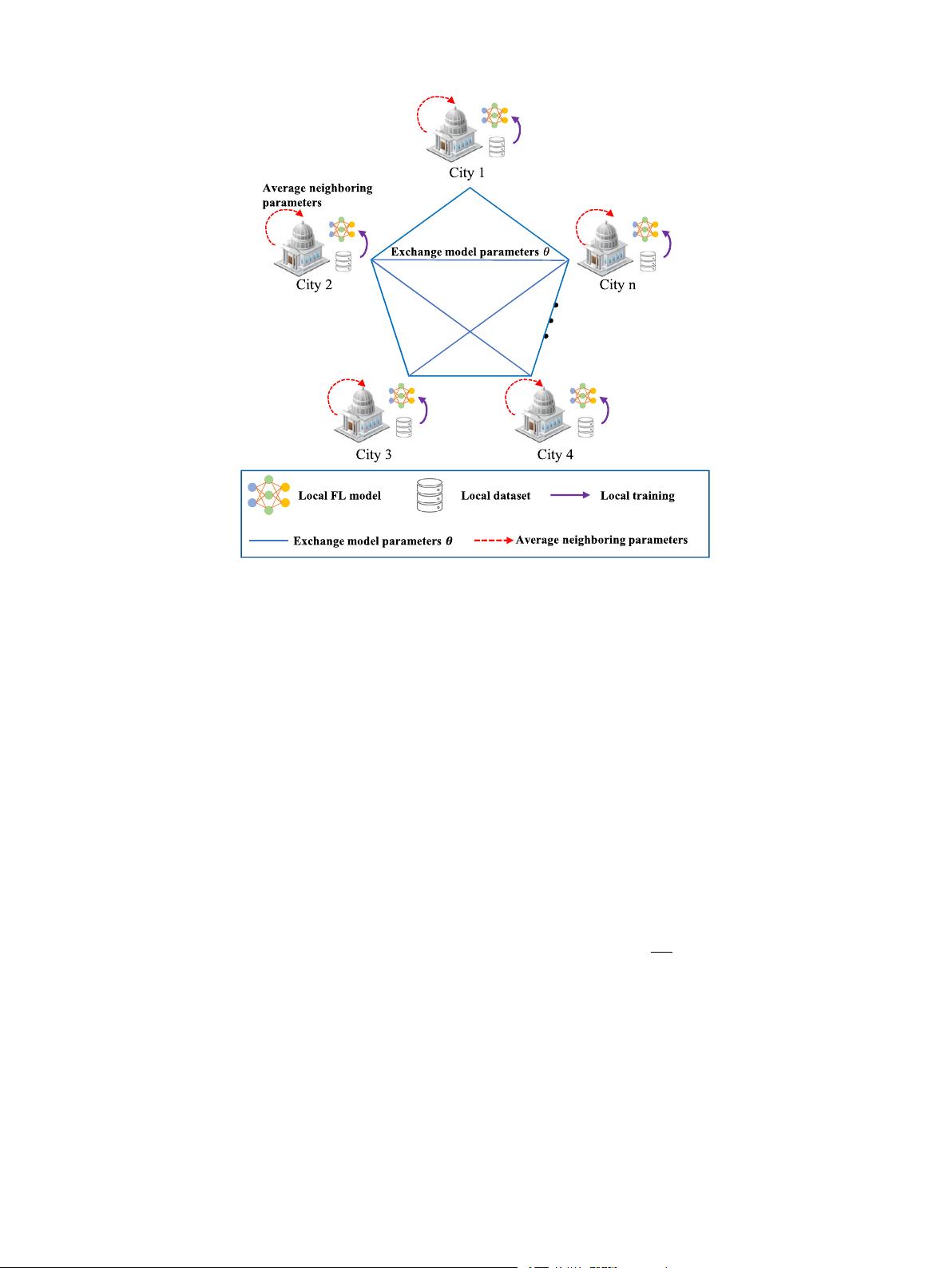

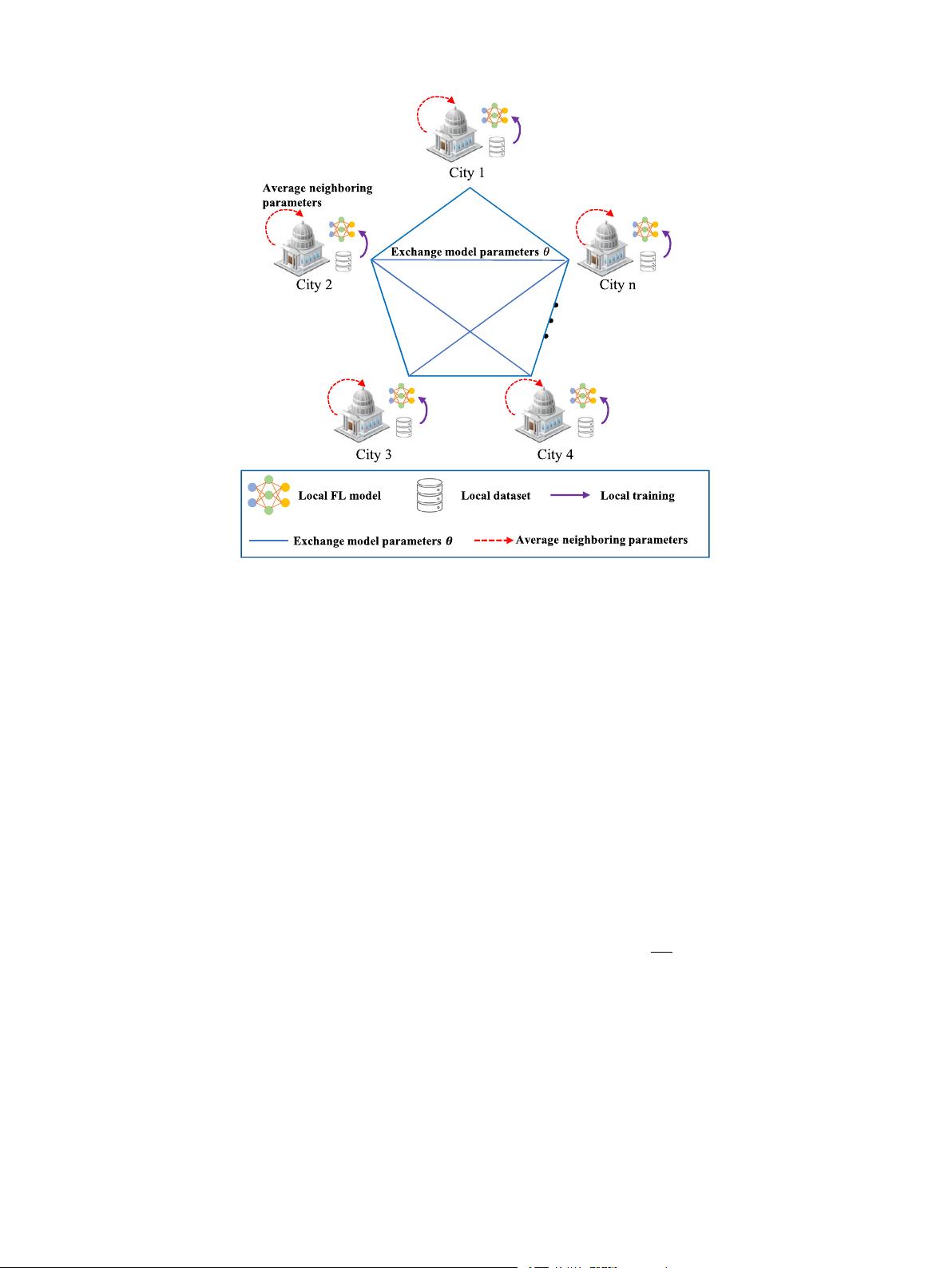

Fig. 1. The structure of decentralized federated learning.

3. Preliminaries

3.1. Problem statement

We consider a metropolitan area (or an urban agglomeration) consisting of $n$ neighboring cities that collaboratively train traffic speed prediction models in a decentralized fashion. In each city $i$, the traffic network is denoted as a weighted directed graph $\mathcal{G}_i = (\mathcal{V}_i, \mathcal{E}_i, A_i)$, where $\mathcal{V}_i$ represents the set of $N_i$ road nodes, $\mathcal{E}_i$ denotes the set of edges reflecting the connectivity between road nodes, and $A_i \in \mathbb{R}^{N_i \times N_i}$ is the weighted adjacency matrix. Freight traffic speed forecasting based on freight vehicle trajectory data is a typical spatial–temporal forecasting problem. In this study, we focus on forecasting the traffic speeds $v_t \in \mathbb{R}^{N_i}$ at timestamp $t$ for the $N_i$ road nodes. Given the $M$ historical traffic conditions $[v_{t-M+1}, \dots, v_t]$ acquired by the $N_i$ road nodes and the traffic network $\mathcal{G}_i$, the spatial–temporal forecasting model predicts the traffic conditions over the next $T'$ time steps with the following formulation,

$$[v_{t+1}, \dots, v_{t+T'}] = \mathcal{F}\big([v_{t-M+1}, \dots, v_t];\ \mathcal{G}_i\big), \tag{1}$$

where $\mathcal{F}$ indicates the spatial–temporal forecasting model.
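The input–output structure of Eq. (1) can be illustrated with a minimal sketch that slices a speed series into pairs of $M$ historical steps and $T'$ future steps. The function name `make_windows`, the array layout (timestamps by road nodes), and the toy data are assumptions made for illustration only; they are not taken from the paper.

```python
import numpy as np

def make_windows(speeds, M, T_prime):
    """Split a (num_steps, N_i) speed series into (history, future) pairs.

    history[k] holds the M steps v_{t-M+1}, ..., v_t and
    future[k] holds the T' steps v_{t+1}, ..., v_{t+T'} that follow them.
    """
    speeds = np.asarray(speeds, dtype=float)
    num_samples = speeds.shape[0] - M - T_prime + 1
    if num_samples <= 0:
        raise ValueError("series too short for the requested M and T_prime")
    history = np.stack([speeds[k:k + M] for k in range(num_samples)])
    future = np.stack(
        [speeds[k + M:k + M + T_prime] for k in range(num_samples)]
    )
    return history, future

# Toy data (illustrative only): 10 timestamps, 3 road nodes.
speeds = np.arange(30, dtype=float).reshape(10, 3)
history, future = make_windows(speeds, M=4, T_prime=2)
print(history.shape, future.shape)
```

A forecasting model in the sense of Eq. (1) would map each `history[k]`, together with the city's graph, to the corresponding `future[k]`.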

3.2. Structure of decentralized federated learning

Federated learning (FL) is a distributed machine learning approach that enables a group of clients to collectively train a global model without exchanging data (Yang, Liu, Chen, & Tong, 2019). Here, the clients are the cities within the metropolitan area, and the data of each city is stored only in its local database. Unlike the original FL, which requires a dedicated central cloud server, decentralized federated learning performs global tasks collaboratively through mutual communication among neighbors; its detailed architecture is depicted in Fig. 1.

To be specific, let $C = \{1, 2, \dots, n\}$ be the set of cities within the metropolitan area and $\mathcal{D} = \{D_1, D_2, \dots, D_n\}$ represent the set of local datasets owned by respective cities. The communication network among cities can be modeled as a connected graph $G = (V, E)$, where $V$ is the vertex set (each vertex represents a city and thereby $V$ can be regarded as $C$) and $E$ is the edge set. The training procedures of decentralized FL at time $\tau$, $\tau \in \{1, 2, \dots, T_g\}$, are outlined as follows:

• Initialization: The cities participating in the decentralized FL task construct a connected communication network similar to Fig. 1, and each participating city initializes the local model $\theta_{i,\tau}$ to accomplish the local training task.

• Local training: Each participating city $i$ ($i \in C$) trains its local model with the local dataset and parameter $\theta_{i,\tau}$. The objective of each participating city is to tackle the following empirical risk minimization problem:

$$\arg\min_{\theta \in \mathbb{R}^d} \mathcal{L}_i(\theta), \qquad \mathcal{L}_i(\theta) = \frac{1}{|D_i|} \sum_{(x_e, y_e) \in D_i} f_e(\theta), \tag{2}$$

where $D_i$ represents the local dataset with the input–output vector pairs $(x_e, y_e)$, $\theta$ is the $d$-dimensional parameter of the local model, $|D_i|$ denotes the size of $D_i$, and $f_e(\theta)$ is a local objective function.

• Parameter aggregation: Unlike conventional FL methods such as FedAVG (McMahan, Moore, Ramage, Hampson, & y Arcas, 2017), decentralized FL aggregates parameters by computing a weighted sum of the models of the participating cities, i.e.,

$$\theta_{i,\tau+1} = \sum_{j \in C} p_{i,j} \cdot \theta_{j,\tau}, \tag{3}$$

where $p_{i,j}$ denotes the mixing weight for the received models.
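The three steps above can be sketched in a few lines of NumPy. This is only an illustration under stated assumptions: the squared-error form of $f_e$, the linear local model, the gradient-descent update, the 3-city line topology, and the particular mixing weights in `P` are all hypothetical choices, not the paper's configuration. Non-neighboring cities are assumed to have $p_{i,j} = 0$.

```python
import numpy as np

def local_risk(theta, X, y):
    """Empirical risk of Eq. (2), with an assumed squared-error f_e."""
    residual = X @ theta - y
    return float(np.mean(residual ** 2))

def local_step(theta, X, y, lr=0.05):
    """One gradient-descent step on the local empirical risk (assumed optimizer)."""
    grad = 2.0 * X.T @ (X @ theta - y) / len(y)
    return theta - lr * grad

def aggregate(thetas, P):
    """Eq. (3): row i of the result is sum_j P[i, j] * thetas[j]."""
    return P @ thetas

rng = np.random.default_rng(0)
n, d = 3, 2                       # 3 cities, 2-dimensional parameter
true_theta = np.array([1.0, -2.0])
datasets = []
for _ in range(n):                # each city keeps its own private data
    X = rng.normal(size=(50, d))
    datasets.append((X, X @ true_theta))

# Assumed mixing weights for a line graph 1 - 2 - 3; each row sums to 1,
# and P[i, j] = 0 when cities i and j are not connected.
P = np.array([[0.5, 0.5, 0.0],
              [1 / 3, 1 / 3, 1 / 3],
              [0.0, 0.5, 0.5]])

thetas = np.zeros((n, d))         # initialization of every local model
initial_risk = [local_risk(thetas[i], *datasets[i]) for i in range(n)]
for tau in range(200):
    # Local training on each city's own data, then neighbor aggregation.
    thetas = np.stack([local_step(thetas[i], *datasets[i]) for i in range(n)])
    thetas = aggregate(thetas, P)
final_risk = [local_risk(thetas[i], *datasets[i]) for i in range(n)]
print(final_risk)
```

Only model parameters cross city boundaries in this sketch; the `(X, y)` pairs in `datasets` are never shared, which mirrors the data-locality property described above.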
