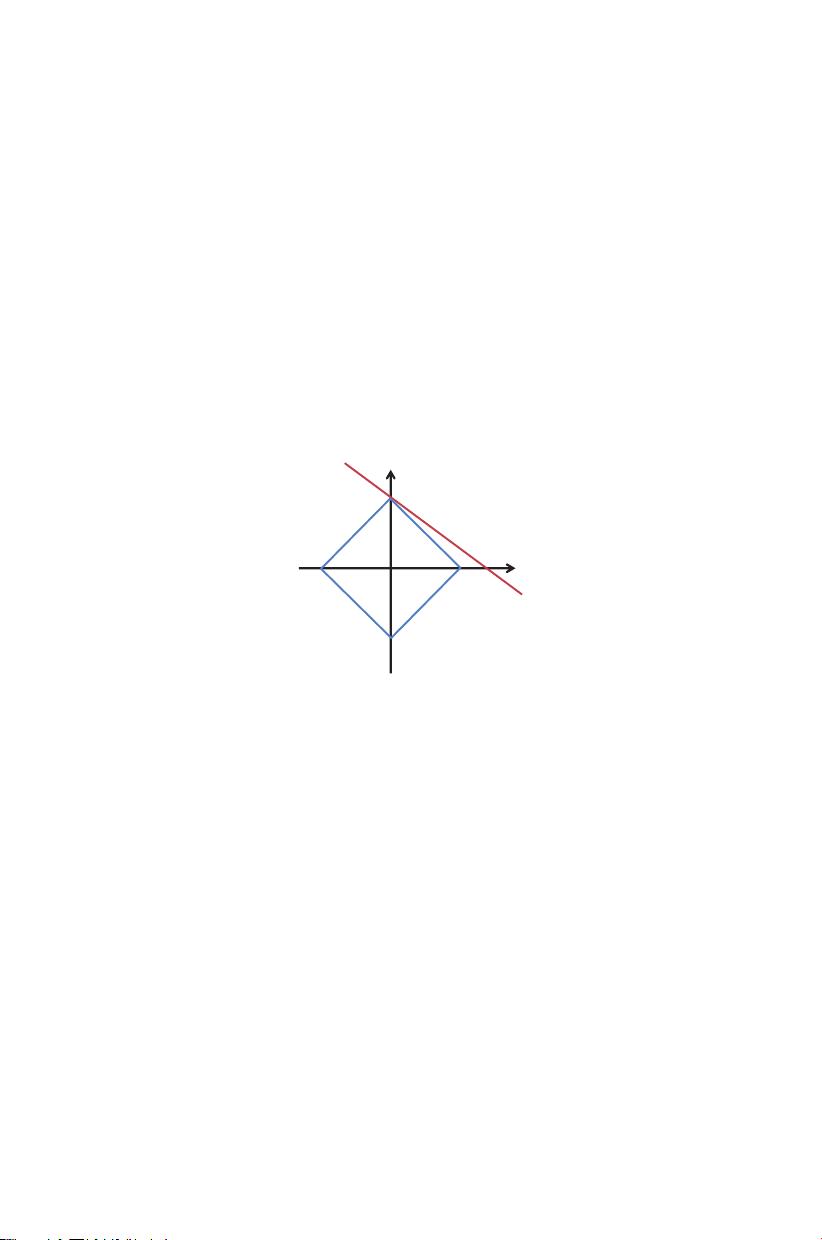

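"Sparse Coding and Its Applications in Computer Vision"

Sparse coding is a core concept in modern computer vision. It originates in signal processing and machine learning theory, and aims to understand and compress complex data by finding a sparse representation of it. The basic idea is to decompose a signal or image into a linear combination of basis elements in which most coefficients are zero or close to zero and only a few are significantly nonzero. Such a representation helps expose the intrinsic structure of the data and performs well in tasks such as feature extraction, image restoration, recognition, and classification.

Sparse coding has many applications in computer vision. First, it can be used for feature learning. By learning a set of atoms (a basis or dictionary) under which the data has a sparse representation, one can extract the key features of an image or video. These features tend to be more discriminative and more stable, which is especially useful for image classification and object detection. For example, sparse coding can be used to build the initial layers of a deep learning model, or as a preprocessing step for a convolutional neural network (CNN), to improve training efficiency and accuracy.

Second, sparse coding is effective for image restoration and denoising. Seeking the sparsest solution removes noise while keeping the important structure of the image. This approach can preserve edges and fine detail better than traditional filters. Sparse coding can also be used for super-resolution reconstruction, that is, recovering a high-resolution image from a low-resolution one, which matters for video processing and surveillance systems.

Third, sparse coding performs well on recognition problems caused by changes in illumination, occlusion, and pose. Learning sparse representations under different conditions yields a robust representation and improves how well a recognition system generalizes. In face recognition, for example, sparse coding can be used to learn invariant features so that the system identifies faces correctly under varying illumination and expression.

Sparse coding also applies to action recognition and video analysis. Sparse coding of a video sequence can extract the key frames that represent an action and support modeling of spatio-temporal features. This is useful in video content understanding, behavior analysis, and surveillance systems.

In short, the applications of sparse coding in computer vision are not limited to feature extraction and image restoration; they also include object detection, recognition, and video analysis. As computing power and algorithms continue to improve, sparse coding is expected to keep driving innovation in computer vision and to provide stronger tools for image processing and artificial intelligence.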
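To make the decomposition described above concrete, here is a minimal sketch of computing a sparse code for one signal. The abstract does not name a formulation or solver, so everything here is an assumption chosen for illustration: the L1-regularized objective 0.5 * ||x - D a||^2 + lam * ||a||_1, the ISTA (iterative soft-thresholding) solver, the random dictionary `D`, and the names `soft_threshold`, `objective`, and `ista`.

```python
import numpy as np

def soft_threshold(v, t):
    """Shrink each entry of v toward zero by t; entries within [-t, t] become exactly 0."""
    return np.sign(v) * np.maximum(np.abs(v) - t, 0.0)

def objective(D, x, a, lam):
    """Assumed sparse coding objective: 0.5 * ||x - D a||^2 + lam * ||a||_1."""
    r = x - D @ a
    return 0.5 * float(r @ r) + lam * float(np.abs(a).sum())

def ista(D, x, lam=0.1, n_iter=200):
    """Approximate the sparse code of x over dictionary D with ISTA."""
    # Step size 1/L, where L is the Lipschitz constant of the gradient
    # of the quadratic term (squared spectral norm of D).
    L = np.linalg.norm(D, 2) ** 2
    a = np.zeros(D.shape[1])
    for _ in range(n_iter):
        grad = D.T @ (D @ a - x)                 # gradient of 0.5 * ||x - D a||^2
        a = soft_threshold(a - grad / L, lam / L)
    return a

# Synthetic example (illustrative data, not from the source):
# a 20-dimensional signal built from 3 of 50 unit-norm atoms.
rng = np.random.default_rng(0)
D = rng.standard_normal((20, 50))
D /= np.linalg.norm(D, axis=0)                   # normalize each atom (column)
a_true = np.zeros(50)
a_true[[3, 17, 41]] = [1.5, -2.0, 1.0]
x = D @ a_true

lam = 0.05
a_hat = ista(D, x, lam=lam, n_iter=500)
print("nonzero coefficients:", np.count_nonzero(a_hat))
print("objective at zero code:", objective(D, x, np.zeros(50), lam))
print("objective at a_hat:", objective(D, x, a_hat, lam))
```

The soft-thresholding step is what produces exact zeros in the code. In a feature-learning setting the dictionary `D` would itself be learned from data rather than drawn at random, which this sketch does not cover.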
