There have been several attempts to create a powerful audio API on the Web to address

some of the limitations I previously described. One notable example is the Audio Data

API that was designed and prototyped in Mozilla Firefox. Mozilla’s approach started

with an

<audio>

element and extended its JavaScript API with additional features. This

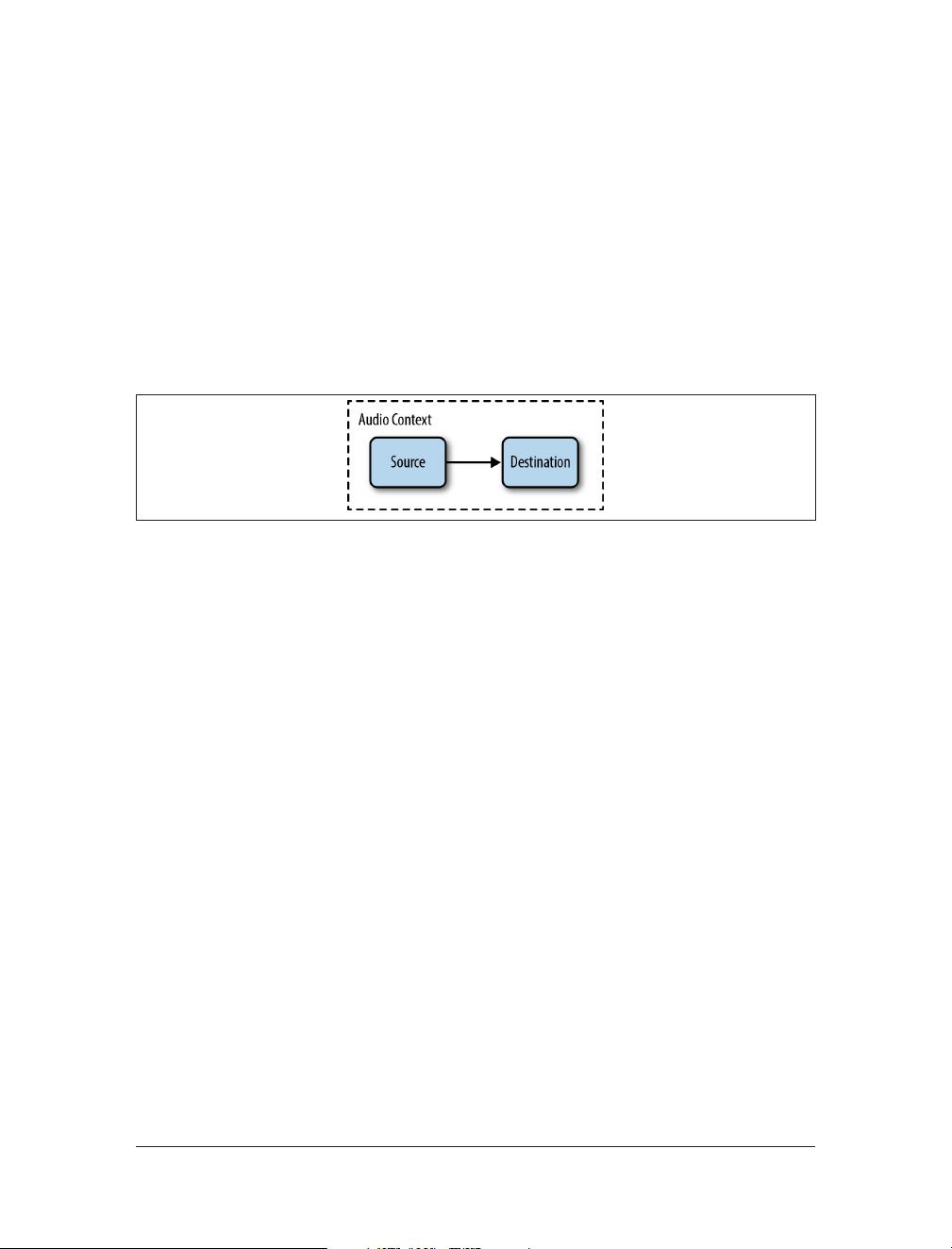

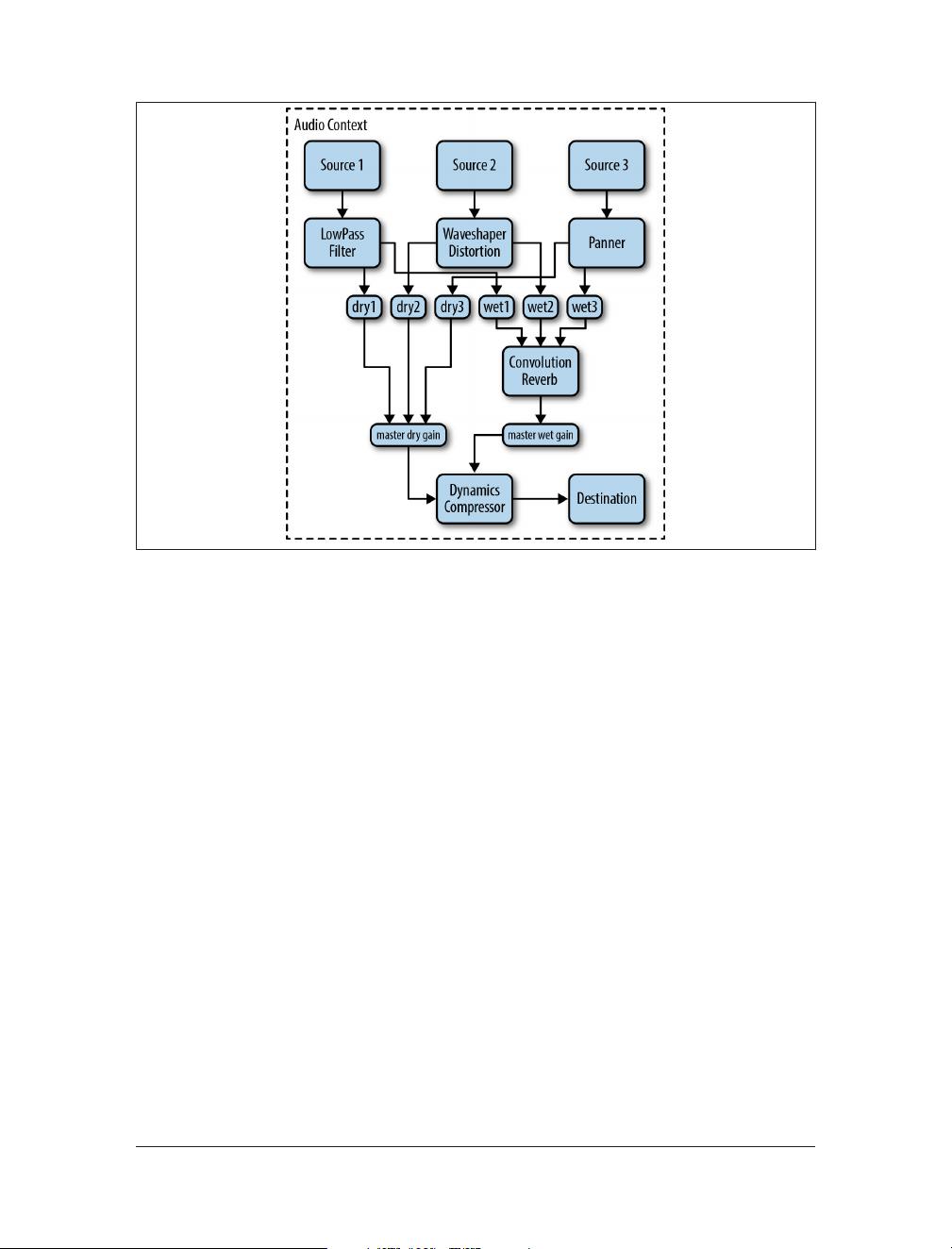

API has a limited audio graph (more on this later in “The Audio Context” (page 3)),

and hasn’t been adopted beyond its first implementation. It is currently deprecated in

Firefox in favor of the Web Audio API.

In contrast with the Audio Data API, the Web Audio API is a brand new model, completely separate from the `<audio>` tag, although there are integration points with other web APIs (see Chapter 7). It is a high-level JavaScript API for processing and synthesizing audio in web applications. The goal of this API is to include capabilities found in modern game engines and some of the mixing, processing, and filtering tasks that are found in modern desktop audio production applications. The result is a versatile API that can be used in a variety of audio-related tasks, from games, to interactive applications, to very advanced music synthesis applications and visualizations.

Games and Interactivity

Audio is a huge part of what makes interactive experiences so compelling. If you don’t believe me, try watching a movie with the volume muted.

Games are no exception! My fondest video game memories are of the music and sound effects. Now, nearly two decades after the release of some of my favorites, I still can’t get Koji Kondo’s Zelda and Matt Uelmen’s Diablo soundtracks out of my head. Even the sound effects from these masterfully designed games are instantly recognizable, from the unit click responses in Blizzard’s Warcraft and StarCraft series to samples from Nintendo’s classics.

Sound effects matter a great deal outside of games, too. They have been around in user interfaces (UIs) since the days of the command line, where certain kinds of errors would result in an audible beep. The same idea continues through modern UIs, where well-placed sounds are critical for notifications, chimes, and of course audio and video communication applications like Skype. Assistant software such as Google Now and Siri provides rich, audio-based feedback. As we delve further into a world of ubiquitous computing, speech- and gesture-based interfaces that lend themselves to screen-free interactions are increasingly reliant on audio feedback. Finally, for visually impaired computer users, audio cues, speech synthesis, and speech recognition are critically important to create a usable experience.

Interactive audio presents some interesting challenges. To create convincing in-game music, designers need to adjust to all the potentially unpredictable game states a player can find herself in. In practice, sections of the game can go on for an unknown duration, and sounds can interact with the environment and mix in complex ways, requiring

2 | Chapter 1: Fundamentals
