Executive leaders should create a culture of

responsibility backed by resources that enable

responsible AI from design to end-of-product use

and beyond. These steps are recommended:

1. Know the legal duties and regulatory

constraints:

Leverage existing guidance, such as the Institute

of Electrical and Electronics Engineers’ (IEEE)

Code of Ethics,¹ UNICEF’s Policy Guidance on

AI for Children² and World Economic Forum

guidance,³ as well as the guidance contained

in this toolkit and guidelines for the product

team, AI labelling system, and resources

for parents and guardians and children and

youth. Commit to internal and, if possible,

external AI oversight. Report compliance and

leadership measures publicly and in simple

language so buyers can understand.

2. Build a diverse and capable team:

Include ethicists, researchers, privacy

specialists, educators, child development

experts, psychologists, user-experience (UX)

designers and data scientists. Collaborate with

non-profit organizations and educational and

research institutions for more expertise.

3. Train your team and provide resources for

success with this checklist:

Educate team members about the importance

of responsible and trustworthy AI and provide

them access to the skills, tools and time

they need to execute your vision. Have open

dialogue about unintended consequences,

possible worst-case scenarios, and the reasons

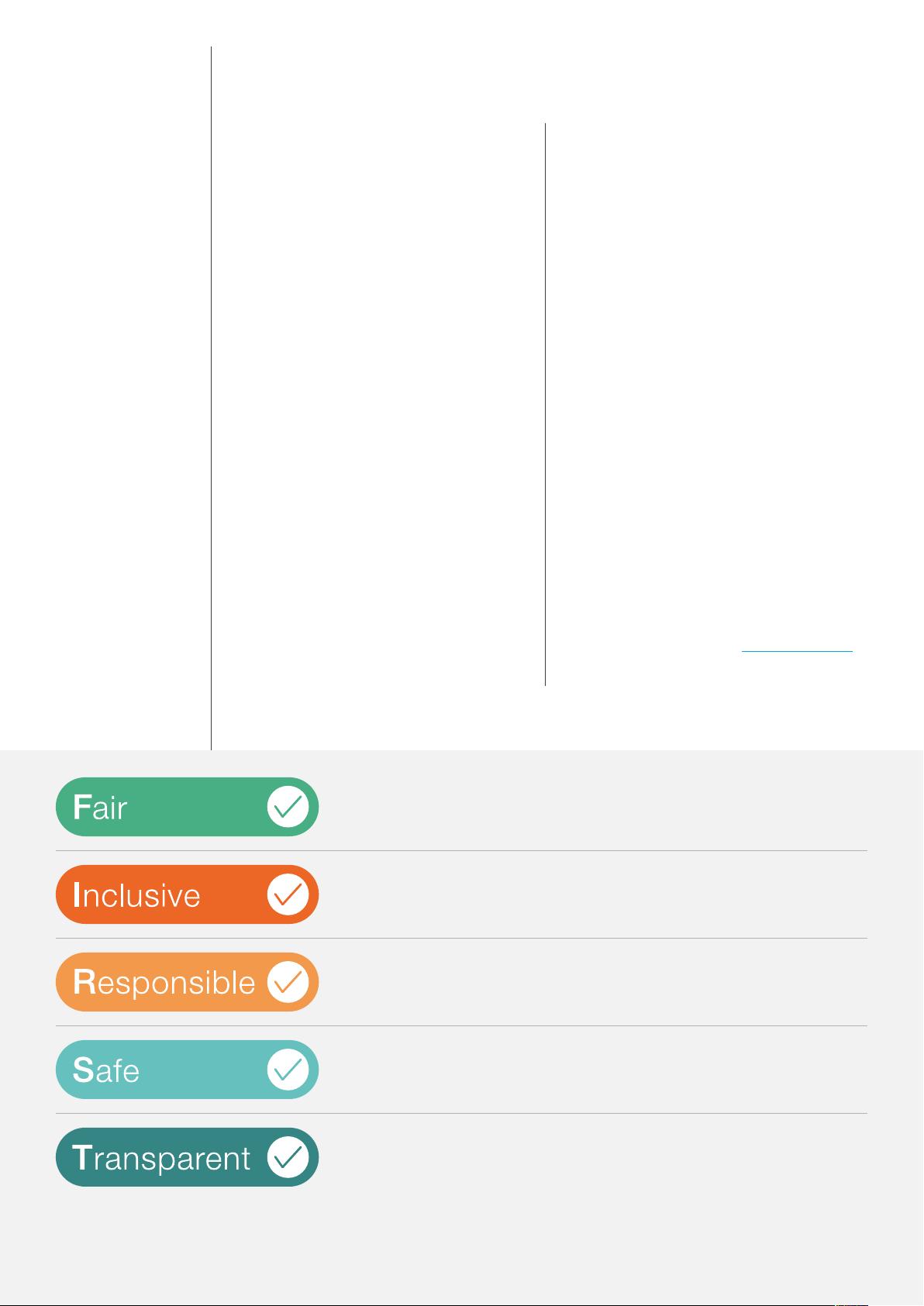

for ensuring your teams are considering the five

AI characteristics critical to putting children and

youth FIRST (Figure 2).

For more information, refer to the product team

guidelines, which offer detailed guidance on

the five areas.

4. Offer expertise to inform development of

regulations, standards and guidance:

Contribute to public forums on how AI is

being used in your products or services.

Share your experience in proposing guidance

and requirements.

5. Welcome principled efforts to label

products and services:

Labelling should reflect the potential

impact of AI on users. Endorse and participate in

activities to develop labelling and rating standards.

Label your offerings to help consumers make

informed choices based on recommendations

about, for example, user age, accessibility factors

and whether a camera and microphone are being

used. For additional information about labelling

recommendations, see the AI labelling system.

Actions

FIGURE 2: Putting children and youth FIRST checklist

Fair: Company culture and processes address ethics and bias concerns regarding how AI models are developed by people and the impact of AI models in use.

Inclusive: AI models interact equitably with users from different cultures and with different abilities; product testing includes diverse users.

Responsible: Offerings reflect the latest learning science to enable healthy cognitive, social, emotional and/or physical development.

Safe: The technology protects and secures user and purchaser data, and the company discloses how it collects and uses data and protects data privacy; users may opt out at any time and have their data removed or erased.

Transparent: The company explains in non-technical terms to buyers and users why AI is used, how it works and how its decisions can be explained. The company also admits AI’s limitations and potential risks and welcomes oversight and audits.

Source: World Economic Forum

Artificial Intelligence for Children