PREPRINT 9

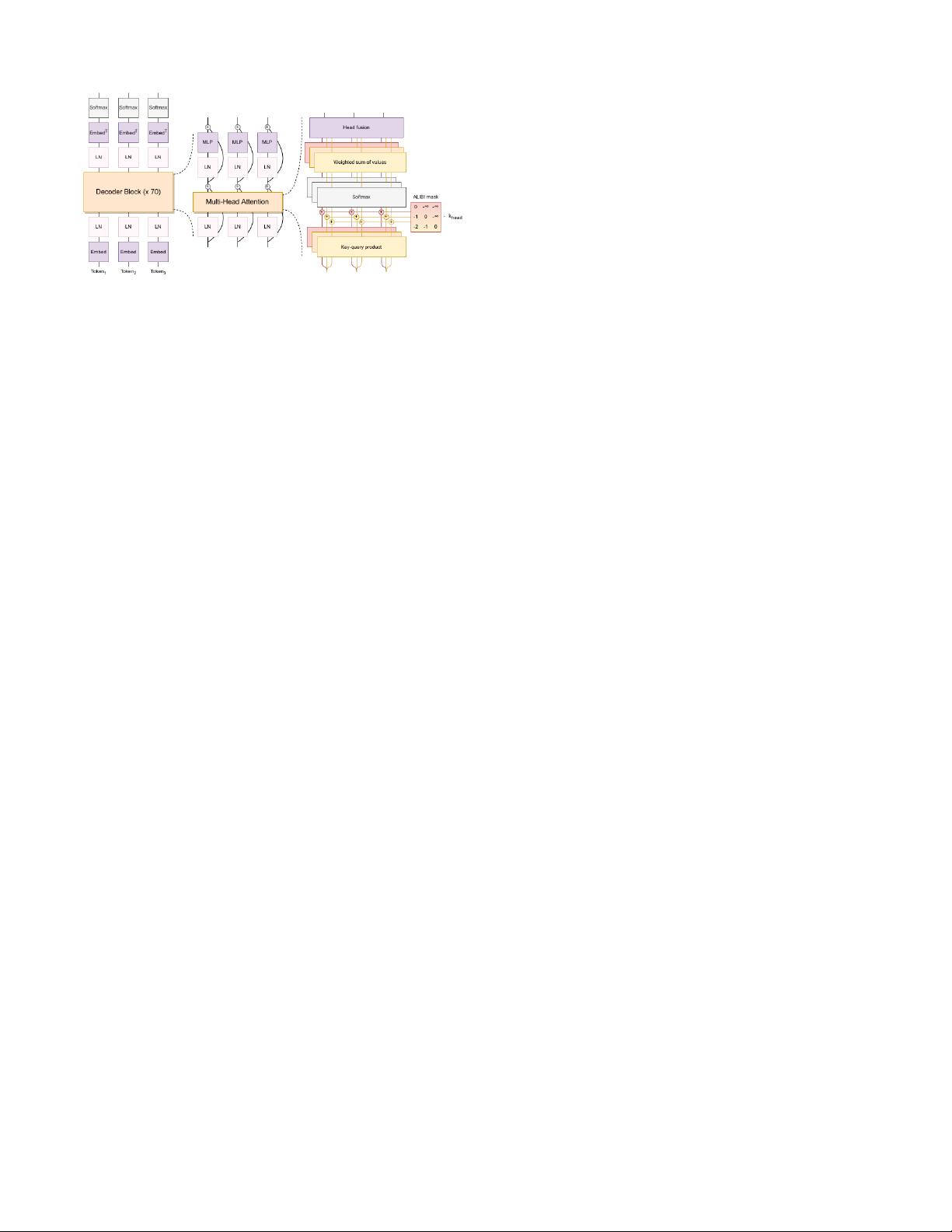

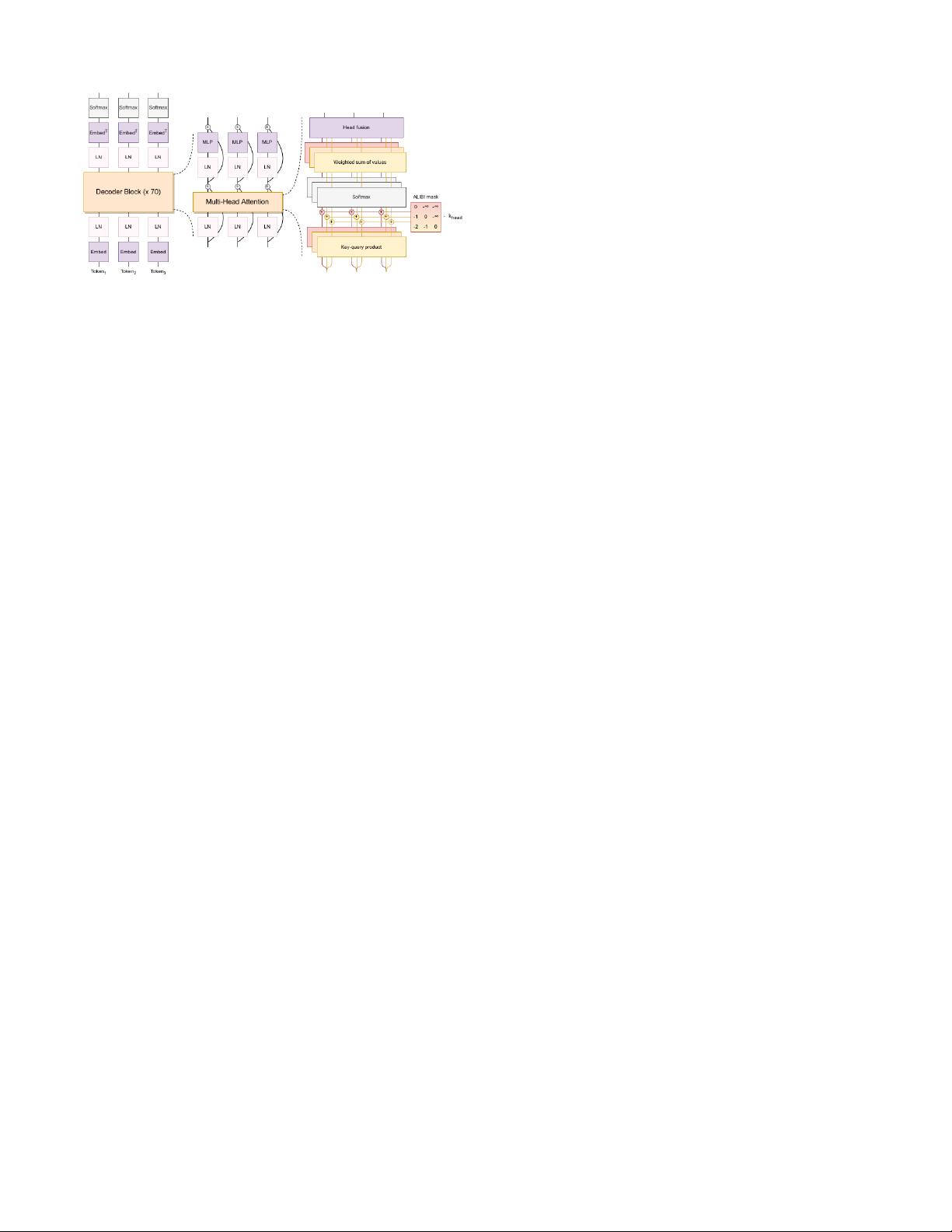

Fig. 9: The BLOOM architecture example sourced from [13].

1.14 BLOOM [13]: A causal decoder model trained on the ROOTS corpus with the aim of open-sourcing an LLM. The architecture of BLOOM is shown in Figure 9. It differs from the standard decoder in using ALiBi positional embeddings and an additional normalization layer after the embedding layer, as suggested by the bitsandbytes¹ library. These changes stabilize training and improve downstream performance.
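To make the ALiBi idea concrete, the following is a minimal sketch rather than BLOOM's actual implementation. It assumes the slope schedule from the ALiBi paper (a geometric sequence starting at 2^(-8/n) for n heads, with n a power of two); the function names `alibi_slopes` and `alibi_bias` are hypothetical. The bias is added to the attention logits before the softmax, so no learned position embedding is needed.

```python
def alibi_slopes(num_heads):
    # Geometric sequence 2^(-8/n), 2^(-16/n), ...; assumes num_heads is a power of two.
    start = 2 ** (-8 / num_heads)
    return [start ** (i + 1) for i in range(num_heads)]


def alibi_bias(num_heads, seq_len):
    # bias[h][i][j] = -slope_h * (i - j) for keys j <= query i.
    # Future positions (j > i) are masked with -inf, as in a causal decoder.
    neg_inf = float("-inf")
    return [
        [
            [-slope * (i - j) if j <= i else neg_inf for j in range(seq_len)]
            for i in range(seq_len)
        ]
        for slope in alibi_slopes(num_heads)
    ]


bias = alibi_bias(num_heads=8, seq_len=4)
print(alibi_slopes(8)[0])   # slope of the first head
print(bias[0][3])           # penalties seen by the last query in head 0
```

Heads with larger slopes penalize distant tokens more strongly, so different heads attend over different effective ranges.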

1.15 GLaM [116]: Generalist Language Model (GLaM) represents a family of language models using a sparsely activated decoder-only mixture-of-experts (MoE) structure [117], [118]. To gain more model capacity while reducing computation, the experts are sparsely activated: only the best two experts are used to process each input token. The largest model, GLaM (64B/64E), is about 7× larger than GPT-3 [6], yet only a part of its parameters is activated per input token. It achieves better overall results than GPT-3 while consuming only one-third of GPT-3's training energy.
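The top-2 routing described above can be sketched as follows. This is an illustrative toy, not GLaM's code: the names `top2_gate` and `moe_layer` are hypothetical, experts are plain Python callables, and renormalizing the two selected gate values so they sum to one is an assumption made here for simplicity.

```python
import math


def top2_gate(router_logits):
    """Return [(expert_index, weight), ...] for the two highest-scoring experts."""
    # Softmax over all experts (shifted by the max for numerical stability).
    peak = max(router_logits)
    exps = [math.exp(x - peak) for x in router_logits]
    total = sum(exps)
    probs = [e / total for e in exps]
    # Keep only the best two experts.
    top2 = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:2]
    # Assumption: renormalize the two gate values so they sum to 1.
    norm = sum(probs[i] for i in top2)
    return [(i, probs[i] / norm) for i in top2]


def moe_layer(token, router_logits, experts):
    # Only the two selected experts are evaluated; all others are skipped.
    out = [0.0] * len(token)
    for idx, weight in top2_gate(router_logits):
        expert_out = experts[idx](token)
        out = [o + weight * v for o, v in zip(out, expert_out)]
    return out


# Four toy experts: expert k multiplies its input by k.
experts = [lambda t, k=k: [k * v for v in t] for k in range(4)]
logits = [0.0, 2.0, 1.0, -1.0]
print(top2_gate(logits))
print(moe_layer([1.0, 2.0], logits, experts))
```

Because only two expert functions run per token, compute per token stays roughly constant as the number of experts (and hence total parameters) grows.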

1.16 MT-NLG [112]: A 530B causal decoder based on the GPT-2 architecture, with roughly 3× the parameters of GPT-3. MT-NLG is trained on filtered high-quality data collected from various public datasets and blends various types of datasets in a single batch. The resulting model beats GPT-3 on a number of evaluations.

1.17 Chinchilla [119]: A causal decoder trained on the same dataset as Gopher [111] (MassiveText) but with a slightly different data sampling distribution. The model architecture is similar to the one used for Gopher, except that it uses the AdamW optimizer instead of Adam. Chinchilla identifies the relationship that model size should be doubled for every doubling of training tokens. To estimate compute-optimal training under a given budget, over 400 language models ranging from 70 million to over 16 billion parameters are trained on 5 to 500 billion tokens. The authors train a 70B model with the same compute budget as Gopher (280B) but with 4 times more data. It outperforms Gopher [111], GPT-3 [6], and others on various downstream tasks, after fine-tuning.
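The arithmetic behind the "same compute, 4 times more data" statement can be checked with a short sketch. It assumes the common rule of thumb that training cost is about 6·N·D FLOPs for N parameters and D tokens, which is not stated in the text; the token count used for the larger model is an illustrative value, and all function names are hypothetical.

```python
import math


def train_flops(params, tokens):
    # Rule-of-thumb training cost C ~ 6 * N * D (an assumption, not from the text).
    return 6.0 * params * tokens


def tokens_for_budget(flops, params):
    # Tokens affordable at a fixed compute budget for a model of the given size.
    return flops / (6.0 * params)


def scale_equally(params, tokens, budget_multiplier):
    # Equal scaling: when compute grows by k, grow parameters and tokens
    # by the same factor sqrt(k), so doubling one goes with doubling the other.
    factor = math.sqrt(budget_multiplier)
    return params * factor, tokens * factor


big_params, small_params = 280e9, 70e9   # model sizes from the text
big_tokens = 300e9                        # illustrative token count (assumption)
budget = train_flops(big_params, big_tokens)
small_tokens = tokens_for_budget(budget, small_params)
print(small_tokens / big_tokens)          # token ratio at equal compute, close to 4
print(scale_equally(1e9, 20e9, 4))        # 4x compute -> 2x params and 2x tokens
```

Under this approximation, shrinking the model by a factor of four at fixed compute frees exactly enough budget for four times as many training tokens.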

1.18 AlexaTM [120]: An encoder-decoder model in which the encoder weights and decoder embeddings are initialized from a pre-trained encoder to speed up training. The encoder stays frozen for the initial 100k steps and is later unfrozen for end-to-end training. The model is trained on a combination of denoising and causal language modeling (CLM) objectives, with a [CLM] token prepended to the input for mode switching. During training, the CLM task is applied 20% of the time, which improves in-context learning performance.

¹ https://github.com/TimDettmers/bitsandbytes
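The mixing of the two objectives can be illustrated with a small data-preparation sketch. It is a simplified stand-in, not the AlexaTM pipeline: `make_example` is a hypothetical helper, the denoising branch drops a single contiguous span, and the 15% drop rate is an illustrative assumption. Only the [CLM] token and the 20% share come from the text.

```python
import random

CLM_TOKEN = "[CLM]"   # mode-switch token named in the text
CLM_PROB = 0.2        # share of examples that use the CLM task


def make_example(tokens, rng):
    """Return (encoder_input, decoder_target) for one training example."""
    if rng.random() < CLM_PROB:
        # CLM mode: the model sees [CLM] plus a prefix and must continue it.
        split = rng.randint(1, len(tokens) - 1)
        return [CLM_TOKEN] + tokens[:split], tokens[split:]
    # Denoising mode (simplified): drop one contiguous span and
    # ask the model to reconstruct the full input.
    span = max(1, round(0.15 * len(tokens)))   # drop rate is an assumption
    start = rng.randint(0, len(tokens) - span)
    return tokens[:start] + tokens[start + span:], list(tokens)


rng = random.Random(0)
sentence = "the quick brown fox jumps over the lazy dog".split()
examples = [make_example(sentence, rng) for _ in range(10000)]
clm_share = sum(inp[0] == CLM_TOKEN for inp, _ in examples) / len(examples)
print(f"CLM share: {clm_share:.3f}")
```

The leading token is the only signal that tells the model whether to continue the input or to reconstruct it.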

1.19 PaLM [15]: A causal decoder with parallel attention and feed-forward layers similar to Eq. 4, which speeds up training by about 15%. Additional changes to the conventional transformer model include SwiGLU activation, RoPE embeddings, multi-query attention that saves computation cost during decoding, and shared input-output embeddings. During training, loss spikes were observed; to fix them, training was restarted from a checkpoint about 100 steps before the spike, skipping 200-500 batches around the spike. Moreover, the model was found to memorize around 2.4% of the training data at the 540B model scale, whereas this number was lower for smaller models.
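For reference, the difference between a conventional (serialized) block and the parallel formulation can be written as below, following the form given in the PaLM paper. The notation is a sketch: $x$ is the block input, $\mathrm{LN}$ is layer normalization, and $\mathrm{MLP}$ and $\mathrm{Attention}$ are the feed-forward and attention sublayers.

```latex
\begin{align*}
\text{serialized:}\quad y &= x + \mathrm{MLP}\big(\mathrm{LN}\big(x + \mathrm{Attention}(\mathrm{LN}(x))\big)\big) \\
\text{parallel:}\quad   y &= x + \mathrm{MLP}\big(\mathrm{LN}(x)\big) + \mathrm{Attention}\big(\mathrm{LN}(x)\big)
\end{align*}
```

In the parallel form both sublayers read the same normalized input, so their input projections can be fused into a single matrix multiplication.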

PaLM-2 [121]: A smaller multi-lingual variant of PaLM, trained for more iterations on a better-quality dataset. PaLM-2 shows significant improvements over PaLM while reducing training and inference costs due to its smaller size. To lessen toxicity and memorization, it appends special tokens to a fraction of the pre-training data, which reduces the generation of harmful responses.

1.20 U-PaLM [122]: This method trains PaLM for 0.1% additional compute with the UL2 objective [123] (the approach is also named UL2Restore, or UL2R), using the same dataset, and significantly outperforms the baseline on various NLP tasks, including zero-shot, few-shot, commonsense reasoning, CoT, etc. Training with UL2R involves converting a causal decoder PaLM to a non-causal decoder PaLM and employing 50% sequential denoising, 25% regular denoising, and 25% extreme denoising loss functions.
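The 50/25/25 mixture can be sketched as a per-example draw of the denoising objective. This is only an illustration of the sampling step: the dictionary `UL2R_MIXTURE` and the helper `sample_denoiser` are hypothetical names, and drawing independently per example is an assumption.

```python
import random

# Mixture weights from the text: sequential (S), regular (R), extreme (X) denoising.
UL2R_MIXTURE = {"S": 0.50, "R": 0.25, "X": 0.25}


def sample_denoiser(rng):
    # Draw one denoiser name according to the mixture weights.
    names = list(UL2R_MIXTURE)
    weights = [UL2R_MIXTURE[name] for name in names]
    return rng.choices(names, weights=weights, k=1)[0]


rng = random.Random(0)
draws = [sample_denoiser(rng) for _ in range(20000)]
shares = {name: draws.count(name) / len(draws) for name in UL2R_MIXTURE}
print(shares)
```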

1.21 UL2 [123]: An encoder-decoder architecture trained using a mixture of denoisers (MoD) objective. The denoisers include 1) R-Denoiser: regular span masking, 2) S-Denoiser: which corrupts consecutive tokens of a large sequence, and 3) X-Denoiser: which corrupts a large number of tokens randomly. During pre-training, UL2 adds a denoiser token from {R, S, X} to indicate the denoising setup. This helps improve fine-tuning performance for downstream tasks that bind the task to one of the upstream training modes. This MoD style of training outperforms the T5 model on many benchmarks.
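The three denoisers can be approximated with a toy corruption function. This is a deliberately simplified sketch, not the UL2 implementation: it masks a single span per example, the 15% and 50% corruption rates are illustrative assumptions, the sentinel name follows the T5 convention, and `corrupt` is a hypothetical helper.

```python
import random

SENTINEL = "<extra_id_0>"   # T5-style sentinel marking the corrupted span


def corrupt(tokens, mode, rng):
    """Return (inputs, targets) for one example under denoiser mode R, S, or X."""
    n = len(tokens)
    mode_token = f"[{mode}]"            # denoiser token that marks the setup
    if mode == "S":
        # Sequential denoising: keep a prefix, predict the remaining suffix.
        cut = rng.randint(1, n - 1)
        return [mode_token] + tokens[:cut] + [SENTINEL], [SENTINEL] + tokens[cut:]
    # R masks a short span; X masks a much larger share of the sequence.
    rate = 0.15 if mode == "R" else 0.50   # illustrative rates (assumption)
    span = max(1, round(rate * n))
    start = rng.randint(0, n - span)
    inputs = [mode_token] + tokens[:start] + [SENTINEL] + tokens[start + span:]
    targets = [SENTINEL] + tokens[start:start + span]
    return inputs, targets


words = "large language models are trained on very large text corpora".split()
for mode in ("R", "S", "X"):
    inputs, targets = corrupt(words, mode, random.Random(1))
    print(mode, inputs, targets)
```

At fine-tuning time, a downstream task can be paired with the mode token whose corruption pattern it most resembles, which is the binding the paragraph above refers to.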

1.22 GLM-130B [33]: GLM-130B is a bilingual (English and Chinese) model trained using an auto-regressive mask infilling pre-training objective similar to GLM [124]. This training style makes the model bidirectional, in contrast to GPT-3, which is unidirectional. Unlike GLM, the training of GLM-130B includes a small amount of multi-task instruction pre-training data (5% of the total data) along with the self-supervised mask infilling. To stabilize training, it applies embedding layer gradient shrink.
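As a sketch of what embedding gradient shrink can look like, the GLM-130B paper describes mixing the embedding output with a gradient-stopped copy of itself. The symbols here are illustrative: $e$ is the embedding-layer output, $\mathrm{sg}(\cdot)$ denotes stop-gradient (detach), $\mathcal{L}$ is the training loss, and $\alpha$ is the shrink factor (the paper reports $\alpha = 0.1$, a value not stated in the text above).

```latex
\begin{align*}
\tilde{e} &= \alpha\, e + (1 - \alpha)\,\mathrm{sg}(e), \\
\frac{\partial \mathcal{L}}{\partial e} &= \alpha\,\frac{\partial \mathcal{L}}{\partial \tilde{e}}.
\end{align*}
```

The forward value is unchanged ($\tilde{e} = e$), while the gradient reaching the embedding layer is scaled down by $\alpha$.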

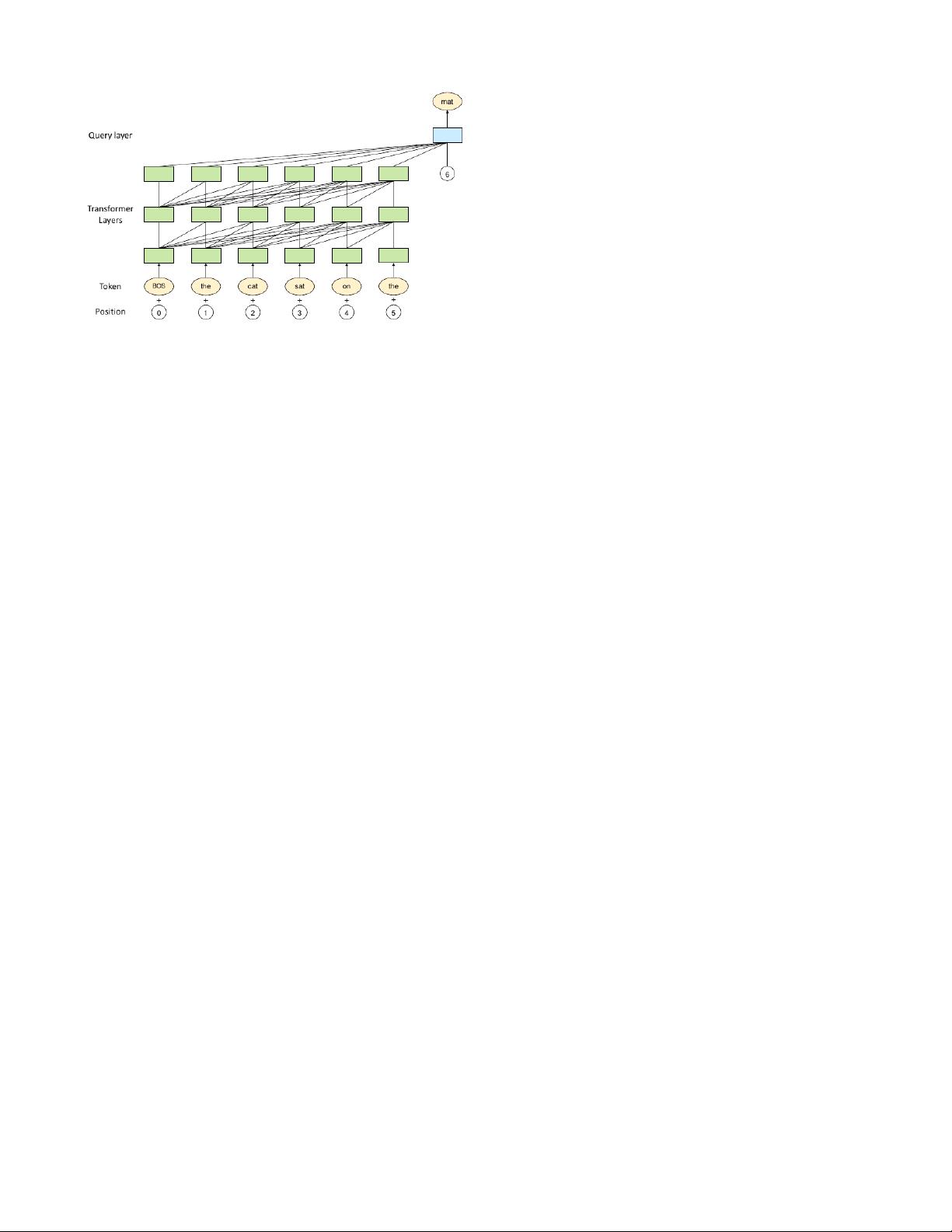

1.23 LLaMA [125], [21]: A set of decoder-only language models varying from 7B to 70B parameters. The LLaMA model series is the most popular in the community for parameter-efficient fine-tuning and instruction tuning.

LLaMA-1 [125]: Implements efficient causal attention [126] by not storing and computing masked attention weights and key/query scores. Another optimization is reducing the number of
