kernel, can reduce the overhead of receiving packets by

deferring the incoming message handling until a given

threshold is reached.

When a packet arrives, the physical network interface

card (NIC) sends a physical interrupt to the processor, which

is forwarded to the virtual processor of Domain 0. The

NIC driver (native driver in Domain 0) is activated to

receive the packet and send it to the virtual switch, which

dispatches the packet to the corresponding back-end driver.

Once a back-end driver receives the packet, it puts a request

in the shared ring to find an available guest buffer, and then

copies the packet to it. It then puts a new request in the

shared ring for the inbound packet and notifies the guest

domain to process the request, using a virtual interrupt. The

front-end driver responds to the interrupt and receives the

packets by consuming the requests for inbound packets in

the shared ring.
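
The receive path described above can be illustrated with a toy model. This is only a sketch under stated assumptions: the names `SharedRing`, `BackEnd`, and `FrontEnd` are hypothetical and do not correspond to Xen source code, the "copy" is modeled as storing the payload next to a buffer id, and the virtual interrupt is modeled as a direct callback.

```python
from collections import deque


class SharedRing:
    """Toy stand-in for the shared ring between back end and front end."""

    def __init__(self, guest_buffers):
        # Buffer ids the guest has offered for inbound packets.
        self.free_buffers = deque(range(guest_buffers))
        # (buffer id, payload) entries waiting for the front end.
        self.inbound = deque()


class BackEnd:
    def __init__(self, ring, notify):
        self.ring = ring
        self.notify = notify  # models the virtual interrupt to the guest

    def receive(self, packet):
        if not self.ring.free_buffers:
            return False  # no guest buffer available, packet is dropped
        buf = self.ring.free_buffers.popleft()   # find an available guest buffer
        self.ring.inbound.append((buf, packet))  # "copy" and post the inbound request
        self.notify()                            # one virtual interrupt per packet
        return True


class FrontEnd:
    def __init__(self, ring):
        self.ring = ring
        self.received = []
        self.interrupts = 0

    def on_virtual_interrupt(self):
        self.interrupts += 1
        # Consume every pending inbound request and return its buffer.
        while self.ring.inbound:
            buf, packet = self.ring.inbound.popleft()
            self.received.append(packet)
            self.ring.free_buffers.append(buf)


ring = SharedRing(guest_buffers=4)
front = FrontEnd(ring)
back = BackEnd(ring, notify=front.on_virtual_interrupt)
for pkt in (b"p0", b"p1", b"p2"):
    back.receive(pkt)
print(front.received, front.interrupts)
```

In this model every inbound packet raises its own virtual interrupt, which is the behavior that the challenges in Section 3 start from.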

3 NETWORK I/O VIRTUALIZATION CHALLENGES

Although many studies have addressed network I/O

virtualization, they focused on improving interguest packet

movement to achieve better performance. In this section,

we discuss two major additional challenges: excessive

virtual interrupts and single-threaded back-end drivers.

3.1 Challenge 1: Excessive Virtual Interrupts

Upon processing an inbound packet, the back-end driver

interrupts a guest OS immediately, indicating the packet’s

readiness to the guest OS. Although this scheme achieves the

best response time and handles latency-sensitive workloads

well, it causes excessive virtual interrupts to the guest OS,

and significantly degrades I/O virtualization performance.

In the virtualized environment, virtual interrupt processing

is much more expensive than physical interrupt processing.

Unlike the native environment, handling a virtual interrupt

in a guest OS could incur multiple rounds of trap-and-

emulation, depending on what virtual interrupt controller

the guest OS implements. For instance, an I/O advanced

programmable interrupt controller (APIC) masks and

unmasks the interrupt being serviced by programming the I/O

redirection table register. The APIC programs the end of

interrupt (EOI) and the task priority register (TPR) for every

interrupt. Any of the above register writes may trigger trap-

and-emulation in the virtualized environment. The cost of a

typical trap-and-emulation of an interrupt controller register

operation is around 3,000 to 5,000 cycles. As a result, each

virtual interrupt can introduce an additional 10 K cycles,

based on Xentrace measurements on Intel servers. Therefore,

it is important to reduce the virtual interrupt overhead in a

virtualized execution environment.
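
To give a feel for what 10 K cycles per virtual interrupt means, the following sketch converts an interrupt rate into a fraction of one core. Only the 10 K cycle figure comes from the text; the 2.5 GHz clock and the rate of 50,000 virtual interrupts per second are illustrative assumptions, not measurements.

```python
def interrupt_cpu_fraction(interrupts_per_sec, cycles_per_interrupt, cpu_hz):
    """Fraction of one core spent on virtual interrupt overhead."""
    return interrupts_per_sec * cycles_per_interrupt / cpu_hz


CYCLES_PER_VIRQ = 10_000  # from the text: about 10 K extra cycles per virtual interrupt
CPU_HZ = 2.5e9            # assumption: a 2.5 GHz core
VIRQ_RATE = 50_000        # assumption: 50,000 virtual interrupts per second

fraction = interrupt_cpu_fraction(VIRQ_RATE, CYCLES_PER_VIRQ, CPU_HZ)
print(f"{fraction:.0%} of one core")
```

Under these assumptions, a fifth of a core goes to interrupt overhead alone, and the share grows linearly with the interrupt rate.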

3.2 Challenge 2: Single-Threaded Back-End Driver

The hypervisor shares the physical NIC among multiple

guests through a back-end driver. However, the centralized

back-end driver, running in a single thread, becomes a

bottleneck as the number of guests goes up, or as the number

of virtual receiving queues in front-end drivers increases.

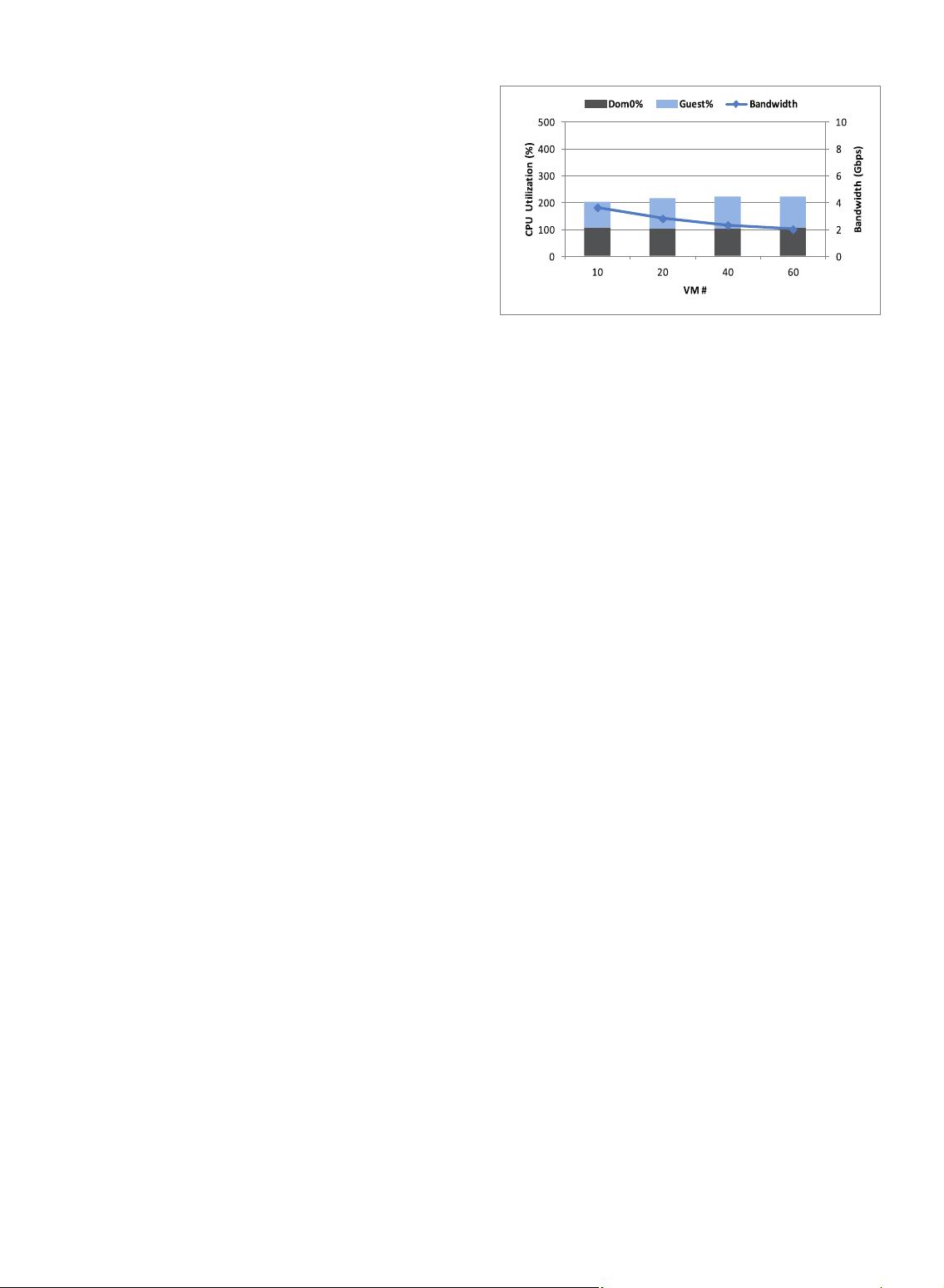

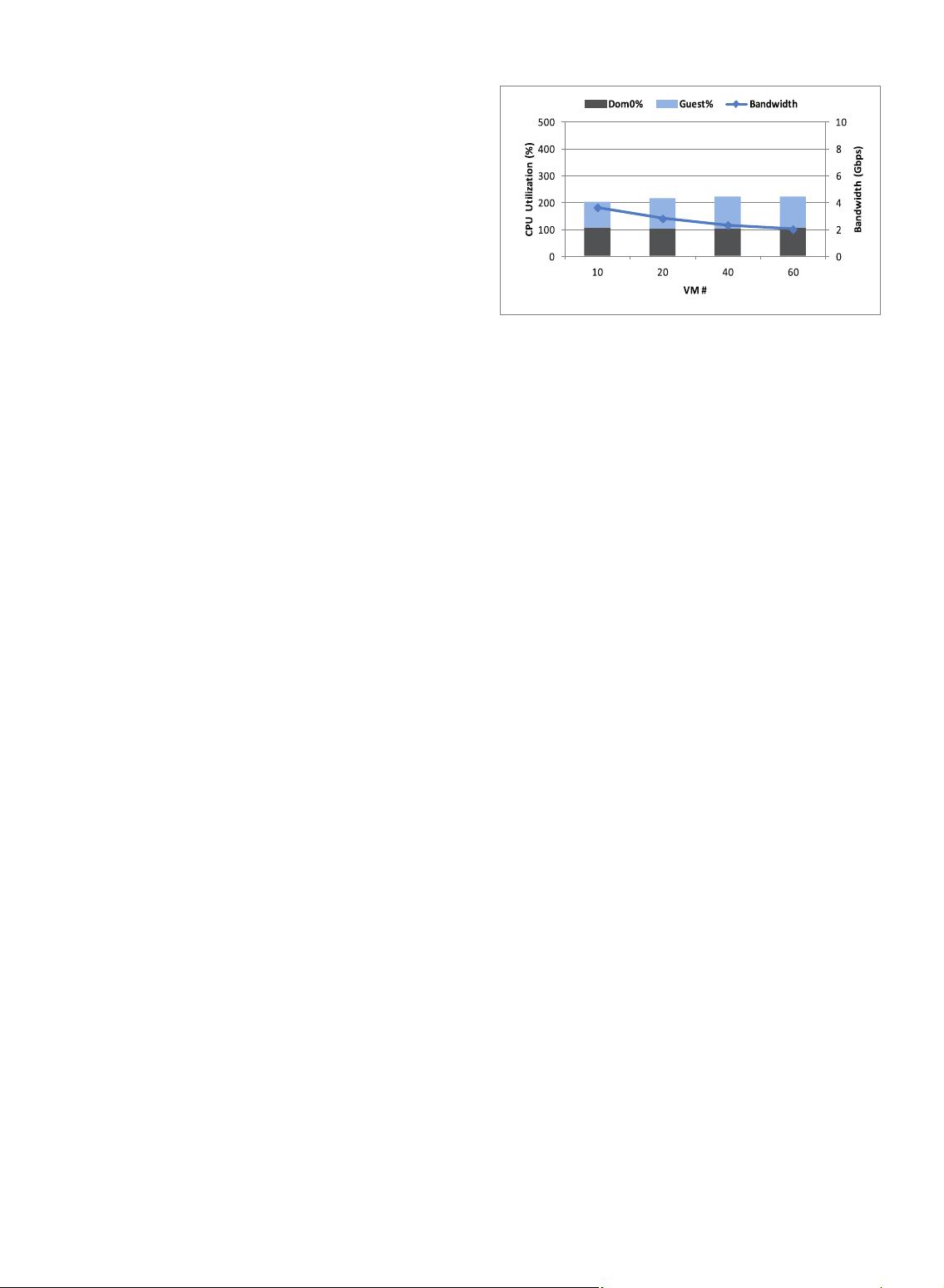

Fig. 2 illustrates the network I/O virtualization scalability

analysis in our 10 Gbps network environment (see the

configuration in Section 5). It shows that when the

single-threaded back-end driver services multiple guests, it

saturates the virtual CPU. That is because the virtual

interrupts delivered to Domain 0 target virtual CPU0, which

is saturated in the presence of high-speed input, no matter

how many other idle CPUs Domain 0 has. This inefficient

use of resources becomes a bottleneck. In addition, as

the number of VMs keeps increasing, the throughput drops

further due to the increased overheads of context switches

among VMs. Overall, our experiment reveals that the

networking performance is very poor (all under 4 Gbps)

with a single-threaded back-end driver, and suffers from

significant performance degradation as the number of VMs

increases. Therefore, existing network I/O virtualization

suffers from performance and scalability issues.

4 NETWORK I/O VIRTUALIZATION OPTIMIZATIONS

To cope with the previously stated challenges, we present

our optimizations in this section. Section 4.1 elaborates

virtual interrupt coalescing (VIC), Section 4.2 elaborates

the multimode VIC for dynamic network workloads,

Section 4.3 covers multilayer interrupt coalescing, and

Section 4.4 presents a virtual RSS.

4.1 Virtual Interrupt Coalescing

Interrupt coalescing [1] is a technique that throttles interrupt

frequency, so as to achieve the best tradeoff between

performance and latency. As network connection speed

goes up, the rate of interrupts generated by packet arrivals

increases. For instance, a 10 Gbps network can receive up to

0.8 million packets per second with a maximum size of

1.5 KB, and it may have a 10× higher frequency for small

packets (down to 50 B). To reduce the number of

interrupts, modern NICs coalesce interrupts to achieve

better performance while maintaining the worst-case

latency [7] in the physical layer.
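
The packet-rate figure and the effect of coalescing can be checked with a short sketch. The rate calculation ignores Ethernet framing overhead, and the coalescing policy shown here (raise an interrupt after `max_frames` packets or `max_delay_us` microseconds, whichever comes first) is a common generic scheme assumed for illustration, not the specific mechanism of any NIC or of this paper. The function names and parameter values are hypothetical.

```python
def packets_per_second(link_bps, packet_bytes):
    """Upper bound on packet rate, ignoring framing overhead."""
    return link_bps / (packet_bytes * 8)


def count_interrupts(arrivals_us, max_frames, max_delay_us):
    """Count interrupts raised for a sorted list of packet arrival times (in us)."""
    interrupts = 0
    pending = 0
    deadline = None
    for t in arrivals_us:
        if pending and t >= deadline:
            # The delay timer expired before this packet arrived.
            interrupts += 1
            pending = 0
        if pending == 0:
            deadline = t + max_delay_us  # timer starts with the first pending packet
        pending += 1
        if pending >= max_frames:
            # The frame threshold was reached.
            interrupts += 1
            pending = 0
    if pending:
        interrupts += 1  # the timer eventually flushes the remainder
    return interrupts


rate = packets_per_second(10e9, 1500)
print(f"{rate / 1e6:.2f} million packets/s at 1.5 KB")

burst = list(range(100))  # assumption: 100 packets arriving 1 us apart
print(count_interrupts(burst, max_frames=1, max_delay_us=50))  # no coalescing
print(count_interrupts(burst, max_frames=8, max_delay_us=50))  # coalesced
```

The delay bound is what keeps the worst-case latency under control: sparse traffic is still delivered after at most `max_delay_us`, while dense traffic is batched by the frame threshold.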

Although a NIC can reduce physical interrupt frequency,

it has a limited frequency control range, and the virtual

interrupt rate still imposes a heavy system overhead. As

mentioned in Section 3.1, virtual interrupt handling is very

expensive. Therefore, we enhance Xen network I/O

virtualization by coalescing virtual interrupts to reduce

CPU cycle usage. As depicted in Fig. 3, virtual interrupts

can be coalesced by either the driver domain or guest

domain. Consequently, there are two implementations:

back-end VIC (BEC) and front-end VIC (FEC). BEC moderates

GUAN ET AL.: PERFORMANCE ENHANCEMENT FOR NETWORK I/O VIRTUALIZATION WITH EFFICIENT INTERRUPT COALESCING AND... 3

Fig. 2. Network I/O virtualization scalability.