DeepRoad: GAN-Based Metamorphic Testing and Input Validation Framework for ... ASE ’18, September 3–7, 2018, Montpellier, France

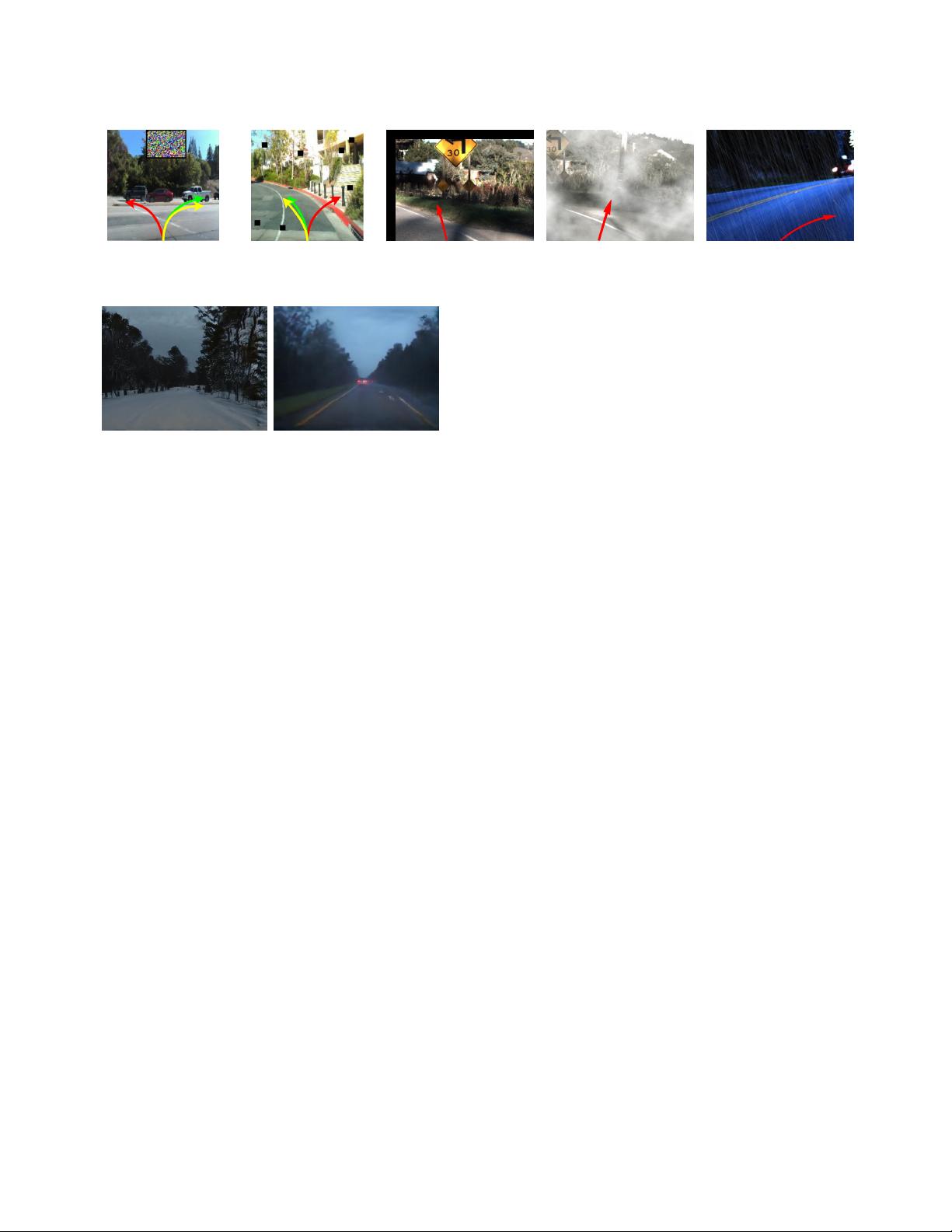

(a) Patch (b) Holes (c) Translation (d) Fog (e) Rain

Figure 1: Driving scenes synthesized by DeepXplore (a)(b) and DeepTest (c)(d)(e)

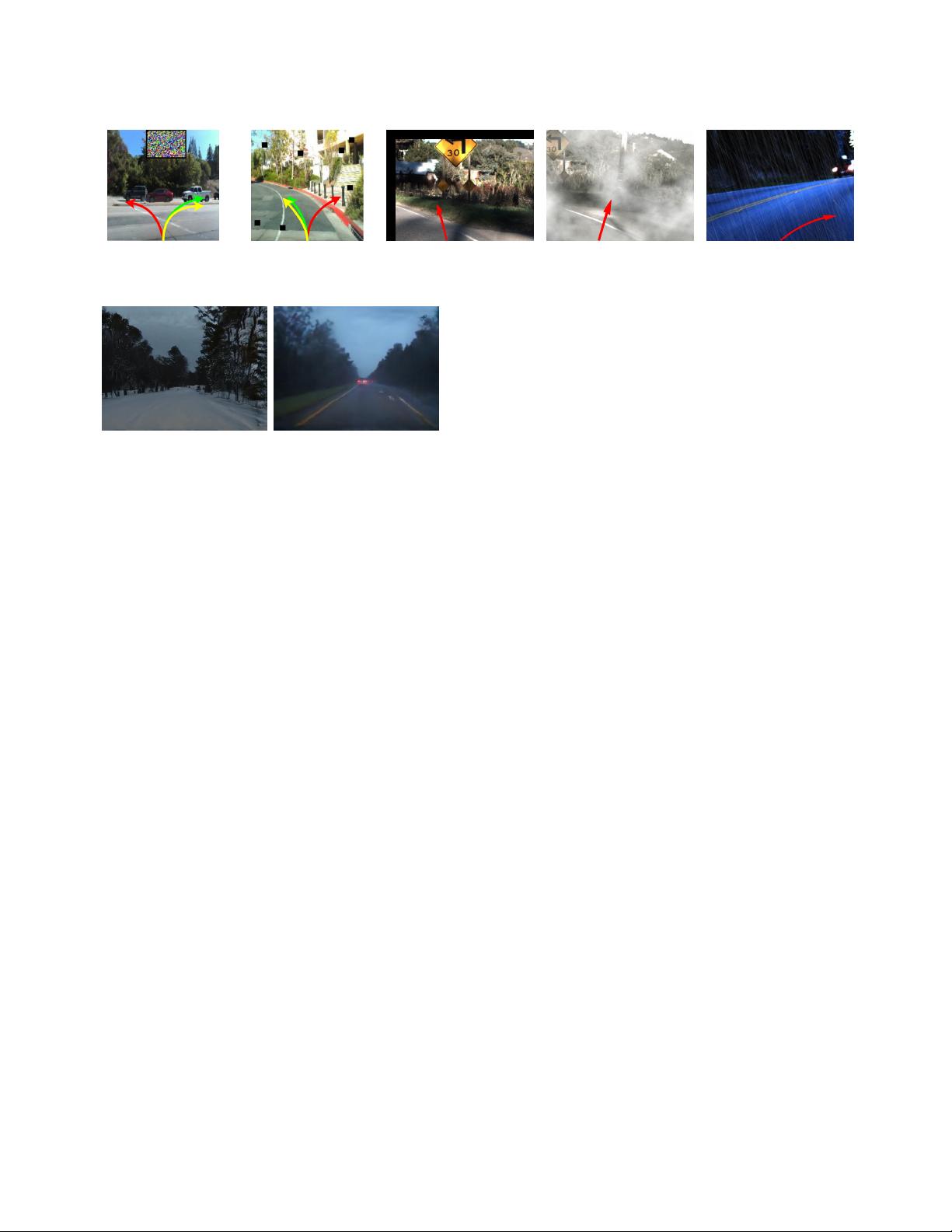

(a) Snow (b) Rain

Figure 2: Snowy and rainy scenes synthesized by DeepRoad

effectively detect thousands of inconsistent behaviors of different levels for these systems. Furthermore, we use DeepRoad_IV to validate input images sampled from different driving scenes. The results demonstrate that, in the embedding space, the clusters of rainy and snowy image points are separated from the cluster of training images, whereas the training cluster is mixed with the majority of the sunny image points. This indicates that, given a proper threshold, DeepRoad_IV can effectively validate inputs, which can potentially improve system robustness.
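
The threshold-based validation idea described above can be illustrated with a minimal sketch. It is not the DeepRoad_IV implementation: the 2-D embeddings, the nearest-neighbor Euclidean distance, and the names `min_distance_to_training` and `is_valid_input` are all assumptions made for illustration.

```python
import math

def euclidean(a, b):
    """Euclidean distance between two equal-length feature vectors."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))

def min_distance_to_training(embedding, training_embeddings):
    """Distance from an input embedding to its nearest training embedding.

    Using the nearest neighbor is an assumption of this sketch; other
    distance summaries (e.g. distance to a centroid) are equally plausible.
    """
    return min(euclidean(embedding, t) for t in training_embeddings)

def is_valid_input(embedding, training_embeddings, threshold):
    """Accept the input only if it lies close enough to the training cluster."""
    return min_distance_to_training(embedding, training_embeddings) <= threshold

# Hypothetical 2-D embeddings standing in for high-level image features.
training = [(0.0, 0.0), (1.0, 0.0), (0.0, 1.0)]
sunny = (0.5, 0.5)   # lies inside the training cluster
snowy = (6.0, 8.0)   # lies far away from the training cluster

print(is_valid_input(sunny, training, threshold=1.0))
print(is_valid_input(snowy, training, threshold=1.0))
```

In this toy setting the sunny point is accepted and the snowy point is rejected; in practice the threshold would have to be chosen from the observed distance distribution.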

The key contributions of this paper are as follows.

• We propose the first GAN-based metamorphic testing approach to generate driving scenes with various weather conditions for detecting inconsistent behaviors of autonomous driving systems.

• We propose a novel approach to validate inputs for DNN-based autonomous driving systems. We show that the distance between the high-level features of training and input images can be used for validating inputs.

• We implement the proposed approaches in DeepRoad, which can generate images of diverse driving scenes (e.g., rain and snow) and measure the similarity between multiple image sets in the embedding space. We use DeepRoad to test well-recognized DNN-based autonomous driving models and successfully detect thousands of inconsistent driving behaviors. Additionally, DeepRoad can accurately distinguish images with extreme weather conditions from the training images, which is effective for validating inputs to autonomous driving systems.
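
To make the notion of an inconsistent behavior concrete, the following minimal sketch checks one plausible metamorphic relation: the steering angle predicted for an original scene and for its synthesized counterpart should differ by at most an error bound. The function `count_inconsistencies`, the bound `epsilon`, and the brightness-based `toy_model` are hypothetical stand-ins, not the models or thresholds used by DeepRoad.

```python
def count_inconsistencies(model, originals, synthesized, epsilon):
    """Count image pairs whose predicted steering angles differ by more than epsilon.

    `model` is any callable mapping an image to a steering angle (degrees).
    `originals[i]` and `synthesized[i]` are assumed to depict the same scene
    under different weather conditions.
    """
    count = 0
    for original, transformed in zip(originals, synthesized):
        if abs(model(original) - model(transformed)) > epsilon:
            count += 1
    return count

def toy_model(image):
    """Hypothetical stand-in for a driving DNN: maps mean brightness to an angle."""
    mean = sum(image) / len(image)
    return 90.0 * (mean - 0.5)

# Tiny "images" represented as flat lists of pixel intensities in [0, 1].
originals = [[0.2, 0.4], [0.5, 0.5], [0.9, 0.1]]
synthesized = [[0.2, 0.5], [0.9, 0.9], [0.9, 0.1]]

# Different error bounds correspond to different levels of inconsistency.
for epsilon in (1.0, 10.0, 40.0):
    print(epsilon, count_inconsistencies(toy_model, originals, synthesized, epsilon))
```

Varying `epsilon` shows how a stricter bound flags more pairs as inconsistent, which is one way to read "inconsistent behaviors of different levels".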

2 BACKGROUND

Autonomous driving systems have been rapidly evolving in recent years [14, 32]. For example, many major auto manufacturers (including Tesla, GM, Volvo, Ford, BMW, Honda, and Daimler) and IT companies (including Waymo/Google, Uber, and Baidu) are working on building and testing various autonomous driving systems. Typically, autonomous driving systems capture data from the environment via multiple sensors (e.g., camera, Radar, Lidar, GPS, IMU) as input, and use Deep Neural Networks (DNNs) to process the data and output control signals (e.g., steering and braking decisions). In NVIDIA's work [14], the autonomous driving system DAVE-2 can fluently control cars based only on the images captured by a single front camera. In this work, we mainly focus on DNN-based autonomous driving systems with camera inputs and steering angle outputs.

2.1 DNN Architectures

To date, Convolutional Neural Networks (CNNs) [23] and Recurrent Neural Networks (RNNs) [33] are the most widely used DNNs for autonomous driving systems. Typically, CNNs are good at analyzing visual imagery, and RNNs can effectively process sequential data. In this work, the evaluated models are built on CNN and RNN modules. We briefly introduce the basic concepts and components of each architecture below; more details about DNNs are provided in [24].

2.1.1 Convolutional Neural Networks. Convolutional Neural Networks are similar to regular neural networks: they contain a large number of neurons and pass information in a feed-forward way. However, since the input data are images, several properties can be exploited to optimize the regular neural network, and the convolutional layer is the key component of CNNs. Instead of being fully connected, a neuron in a layer connects only to some neurons in the previous layer, and the computation can be expressed as a convolution with kernels. Figure 3a shows an example of a CNN-based autonomous driving system that consists of an input layer (images), an output layer (steering angles), and multiple hidden layers. Convolutional hidden layers allow weight sharing across multiple connections, which can greatly reduce the training effort.
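
The local connectivity and weight sharing described above can be seen in a minimal single-channel sketch. The function `conv2d` below is an illustrative assumption (no padding, stride 1, no bias or activation), not code from any evaluated model; like most CNN libraries, it slides the kernel without flipping it.

```python
def conv2d(image, kernel):
    """Slide one kernel over a 2-D image (no padding, stride 1).

    Each output value depends only on a small patch of the input (local
    connectivity), and the same kernel weights are reused at every
    position (weight sharing).
    """
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    output = []
    for i in range(out_h):
        row = []
        for j in range(out_w):
            acc = 0.0
            for di in range(kh):
                for dj in range(kw):
                    acc += image[i + di][j + dj] * kernel[di][dj]
            row.append(acc)
        output.append(row)
    return output

image = [
    [1, 2, 3],
    [4, 5, 6],
    [7, 8, 9],
]
kernel = [
    [1, 0],
    [0, -1],
]

feature_map = conv2d(image, kernel)
print(feature_map)

# Weight sharing in numbers: the 2x2 kernel has 4 weights, whereas a fully
# connected layer from 9 inputs to 4 outputs would need 9 * 4 = 36 weights.
shared_weights = len(kernel) * len(kernel[0])
dense_weights = (len(image) * len(image[0])) * (len(feature_map) * len(feature_map[0]))
print(shared_weights, dense_weights)
```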

2.1.2 Recurrent Neural Networks. Regular neural networks and CNNs are designed to process independent data, such as using a CNN to classify images. However, for sequential data like videos, a neural network should not only capture the information in each single frame but also model the connections between frames. Unlike regular NNs and CNNs, an RNN is a kind of neural network with feedback connections. As shown in the left part of Figure 3b, RNNs use loops to feed the previous states back to the input, which models the connections within the input data. The right part of Figure 3b shows the workflow of the unfolded RNN for predicting steering angles based on a sequence of images. At each step, RNN takes
