GhostNet: More Features from Cheap Operations

Kai Han¹, Yunhe Wang¹, Qi Tian¹∗, Jianyuan Guo², Chunjing Xu¹, Chang Xu³

¹Noah’s Ark Lab, Huawei Technologies. ²Peking University. ³School of Computer Science, Faculty of Engineering, University of Sydney.

{kai.han,yunhe.wang,tian.qi1,xuchunjing}@huawei.com jyguo@pku.edu.cn c.xu@sydney.edu.au

Abstract

Deploying convolutional neural networks (CNNs) on embedded devices is difficult due to their limited memory and computation resources. The redundancy in feature maps is an important characteristic of successful CNNs, but it has rarely been investigated in neural architecture design. This paper proposes a novel Ghost module to generate more feature maps from cheap operations. Based on a set of intrinsic feature maps, we apply a series of low-cost linear transformations to generate many ghost feature maps that could fully reveal the information underlying the intrinsic features. The proposed Ghost module can be used as a plug-and-play component to upgrade existing convolutional neural networks. Ghost bottlenecks are designed to stack Ghost modules, and the lightweight GhostNet can then be easily established. Experiments conducted on benchmarks demonstrate that the proposed Ghost module is an impressive alternative to convolution layers in baseline models, and that our GhostNet can achieve higher recognition performance (e.g. 75.7% top-1 accuracy) than MobileNetV3 with similar computational cost on the ImageNet ILSVRC-2012 classification dataset. Code is available at https://github.com/huawei-noah/ghostnet.
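To make the idea in the abstract concrete, the following is a minimal pure-Python sketch of how ghost feature maps might be derived from intrinsic ones. It is an illustration under stated assumptions, not the released implementation: the intrinsic maps are assumed to be given already (for example, as the output of an ordinary convolution), the cheap linear transformation is assumed to be a small per-map filter with zero padding, and the names `cheap_linear_op` and `ghost_module` are hypothetical.

```python
from typing import List

FeatureMap = List[List[float]]   # one channel, stored as rows of values
Kernel = List[List[float]]       # small square filter with odd side length


def cheap_linear_op(fmap: FeatureMap, kernel: Kernel) -> FeatureMap:
    """Filter a single feature map with a small kernel (zero padding).

    Assumption: this per-map filter stands in for the "cheap linear
    transformation" of the abstract; it never mixes channels.
    """
    h, w = len(fmap), len(fmap[0])
    k = len(kernel)
    pad = k // 2
    out = [[0.0] * w for _ in range(h)]
    for i in range(h):
        for j in range(w):
            acc = 0.0
            for di in range(k):
                for dj in range(k):
                    y, x = i + di - pad, j + dj - pad
                    if 0 <= y < h and 0 <= x < w:
                        acc += kernel[di][dj] * fmap[y][x]
            out[i][j] = acc
    return out


def ghost_module(intrinsic: List[FeatureMap],
                 kernels: List[List[Kernel]]) -> List[FeatureMap]:
    """Return the intrinsic maps followed by the ghost maps derived from them.

    kernels[i] holds the (hypothetical) cheap filters applied to intrinsic[i].
    """
    ghosts = [cheap_linear_op(fm, k)
              for fm, ks in zip(intrinsic, kernels)
              for k in ks]
    return list(intrinsic) + ghosts


# One intrinsic 2x2 map and one cheap filter that simply doubles each value.
intrinsic_maps = [[[1.0, 2.0], [3.0, 4.0]]]
doubling = [[0.0, 0.0, 0.0], [0.0, 2.0, 0.0], [0.0, 0.0, 0.0]]
features = ghost_module(intrinsic_maps, [[doubling]])
print(len(features))  # 2: one intrinsic map plus one ghost map
```

The point of the sketch is the cost structure: each ghost map is computed from a single intrinsic map, so no cross-channel multiplication is needed for it.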

1. Introduction

Deep convolutional neural networks have shown excellent performance on various computer vision tasks, such as image recognition [30, 13], object detection [43, 33], and semantic segmentation [4]. Traditional CNNs usually need a large number of parameters and floating point operations (FLOPs) to achieve satisfactory accuracy, e.g. ResNet-50 [16] has about 25.6M parameters and requires 4.1B FLOPs to process an image of size 224 × 224. Thus, the recent trend in deep neural network design is to explore portable and efficient network architectures with acceptable performance for mobile devices (e.g. smart phones and self-driving cars).
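As a rough illustration of where such parameter and FLOP counts come from, the sketch below counts the weights and multiply-add operations of a single convolutional layer. It rests on assumptions not stated in the text: biases are ignored, one multiply-add is counted as one operation, and the helper name `conv2d_cost` is hypothetical. The example layer uses the shape of the standard ResNet-50 stem (a 7 × 7 convolution from 3 to 64 channels producing a 112 × 112 output for a 224 × 224 input).

```python
def conv2d_cost(c_in: int, c_out: int, kernel: int, h_out: int, w_out: int):
    """Return (parameters, multiply-adds) of one dense convolution layer.

    Assumptions: square kernel, no bias term, and every output position
    evaluates the full kernel over all input channels.
    """
    params = c_in * c_out * kernel * kernel
    mult_adds = params * h_out * w_out
    return params, mult_adds


# First layer of ResNet-50 on a 224 x 224 RGB image.
stem_params, stem_macs = conv2d_cost(c_in=3, c_out=64, kernel=7,
                                     h_out=112, w_out=112)
print(stem_params)  # 9408
print(stem_macs)    # 118013952
```

Summing this quantity over all layers is what gives the whole-network figures quoted above; this single layer already accounts for roughly 0.12B multiply-adds.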

∗Corresponding author

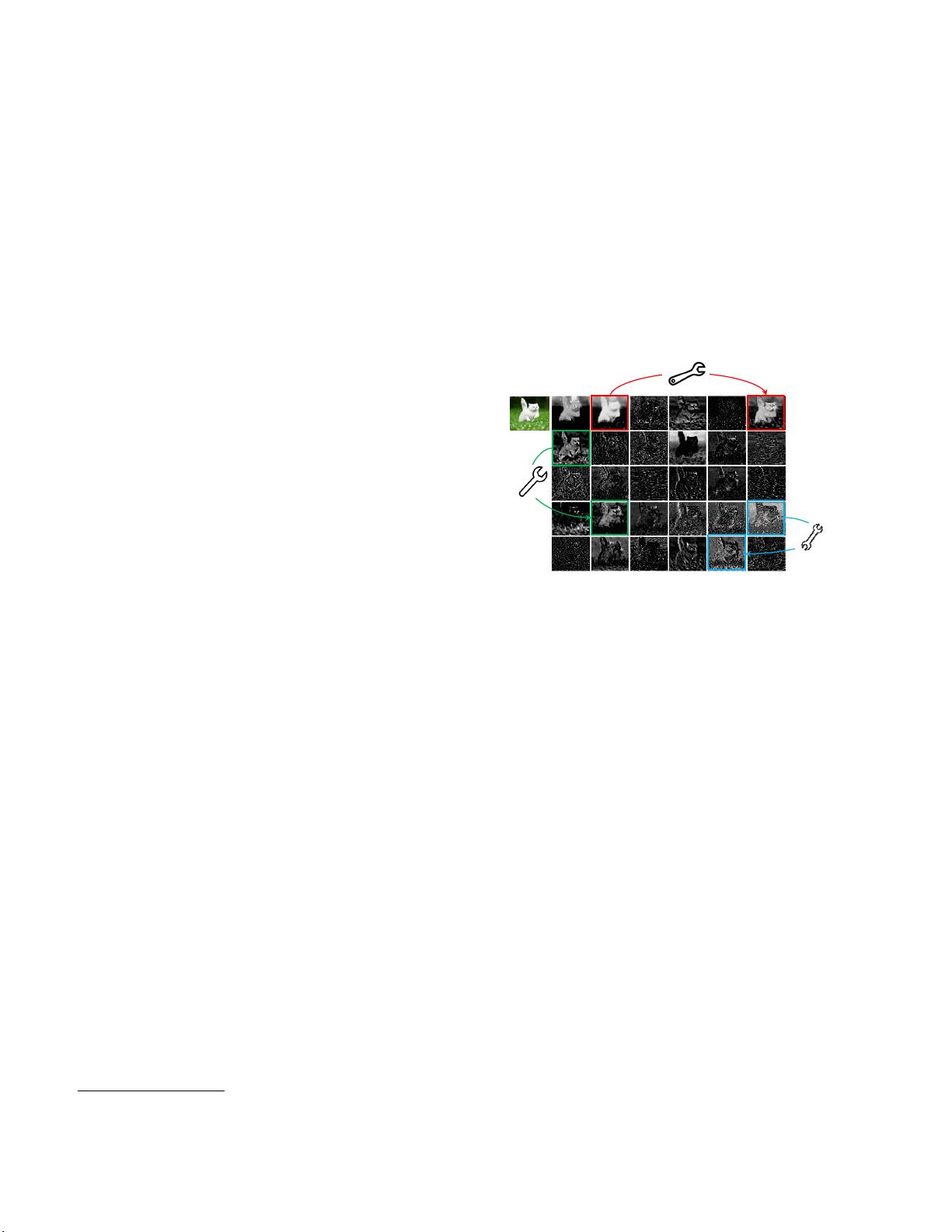

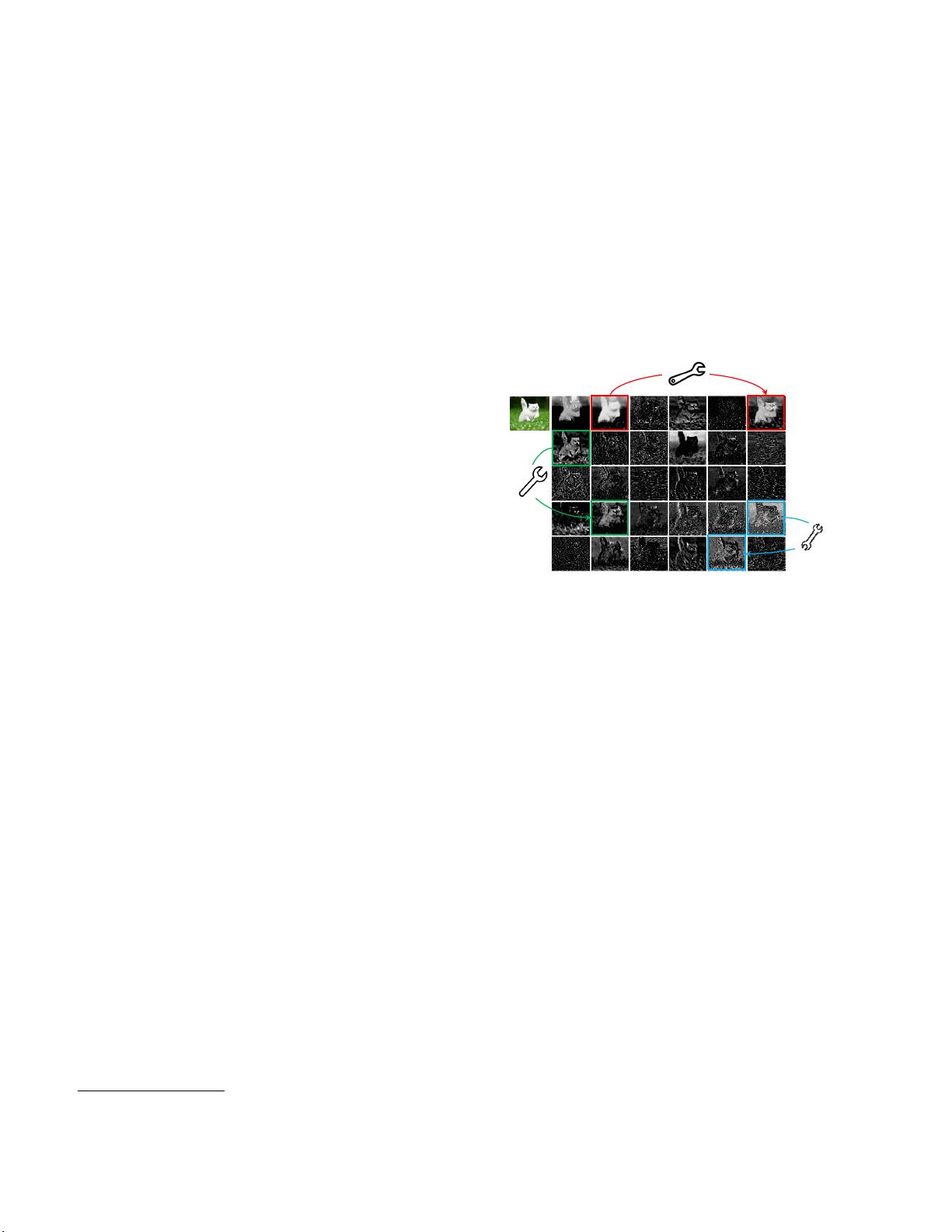

Figure 1. Visualization of some feature maps generated by the first residual group in ResNet-50, where three examples of similar feature map pairs are annotated with boxes of the same color. One feature map in each pair can be approximately obtained by transforming the other through cheap operations (denoted by spanners).

Over the years, a series of methods have been proposed to investigate compact deep neural networks, such as network pruning [14, 39], low-bit quantization [42, 26], knowledge distillation [19, 57], etc. Han et al. [14] proposed to prune the unimportant weights in neural networks. [31] utilized $\ell_1$-norm regularization to prune filters for efficient CNNs. [42] quantized the weights and the activations to 1-bit data to achieve large compression and speed-up ratios. [19] introduced knowledge distillation for transferring knowledge from a larger model to a smaller model. However, the performance of these methods is often upper bounded by the pre-trained deep neural networks that are taken as their baselines.
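To give a flavour of the filter-pruning idea mentioned above, here is a minimal sketch that ranks filters by the $\ell_1$-norm of their weights and keeps the largest ones. It illustrates the general criterion only and is not the exact procedure of [31]; filters are represented as flat lists of weights, and the function names are hypothetical.

```python
from typing import List


def l1_norm(filt: List[float]) -> float:
    """Sum of absolute weight values of one (flattened) filter."""
    return sum(abs(w) for w in filt)


def select_filters(filters: List[List[float]], keep: int) -> List[int]:
    """Return the indices of the `keep` filters with the largest l1-norm.

    Indices are returned in their original order so that the surviving
    filters keep their relative position in the layer.
    """
    ranked = sorted(range(len(filters)),
                    key=lambda i: l1_norm(filters[i]),
                    reverse=True)
    return sorted(ranked[:keep])


# Three toy filters with l1-norms of about 0.2, 3.0 and 1.0.
toy_filters = [[0.1, -0.1], [1.0, -2.0], [0.5, 0.5]]
kept = select_filters(toy_filters, keep=2)
print(kept)  # [1, 2]
```

Because the pruned network starts from the weights of an existing model, its accuracy is tied to that of the baseline, which is the limitation noted in the paragraph above.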

Besides these, efficient neural architecture design has very high potential for establishing highly efficient deep networks with fewer parameters and calculations, and has recently achieved considerable success. Methods of this kind can also provide new search units for automatic search methods [62, 55, 5]. For instance, MobileNet [21, 44, 20] utilized depthwise and pointwise convolutions to construct a unit for approximating the original convolutional layer with larger filters and achieved comparable performance. ShuffleNet [61, 40] further explored a channel shuffle operation

arXiv:1911.11907v2 [cs.CV] 13 Mar 2020
