8 M. Feurer and F. Hutter

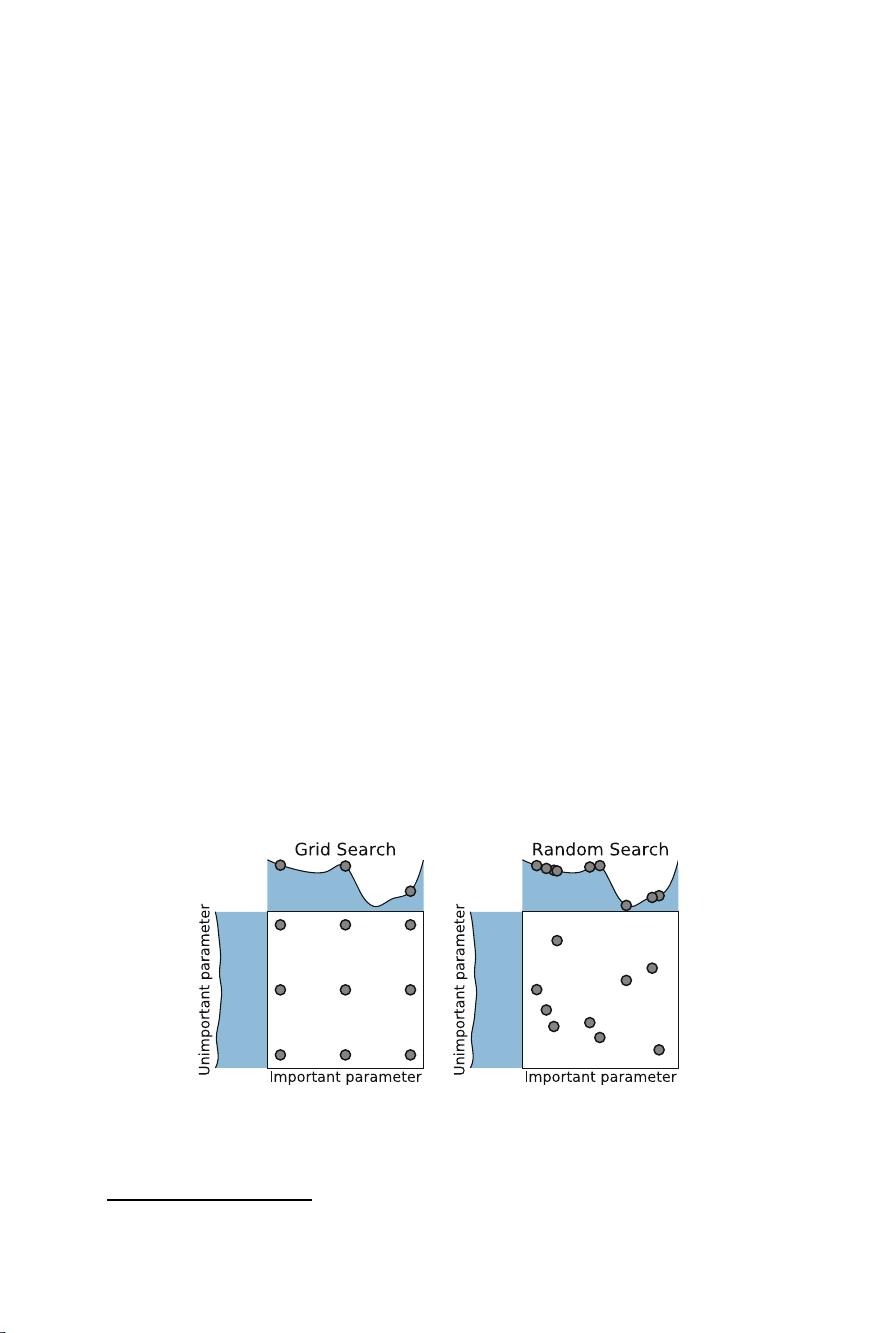

Further advantages over grid search include easier parallelization (since workers

do not need to communicate with each other and failing workers do not leave holes

in the design) and flexible resource allocation (since one can add an arbitrary number

of random points to a random search design to still yield a random search design;

the equivalent does not hold for grid search).

Random search is a useful baseline because it makes no assumptions on the

machine learning algorithm being optimized, and, given enough resources, will,

in expectation, achieves performance arbitrarily close to the optimum. Interleaving

random search with more complex optimization strategies therefore allows to

guarantee a minimal rate of convergence and also adds exploration that can improve

model-based search [3, 59]. Random search is also a useful method for initializing

the search process, as it explores the entire configuration space and thus often

finds settings with reasonable performance. However, it is no silver bullet and often

takes far longer than guided search methods to identify one of the best performing

hyperparameter configurations: e.g., when sampling without replacement from a

configuration space with N Boolean hyperparameters with a good and a bad setting

each and no interaction effects, it will require an expected 2

N−1

function evaluations

to find the optimum, whereas a guided search could find the optimum in N + 1

function evaluations as follows: starting from an arbitrary configuration, loop over

the hyperparameters and change one at a time, keeping the resulting configuration

if performance improves and reverting the change if it doesn’t. Accordingly, the

guided search methods we discuss in the following sections usually outperform

random search [12, 14, 33, 90, 153].

Population-based methods, such as genetic algorithms, evolutionary algorithms,

evolutionary strategies,andparticle swarm optimization are optimization algo-

rithms that maintain a population, i.e., a set of configurations, and improve this

population by applying local perturbations (so-called mutations) and combinations

of different members (so-called crossover) to obtain a new generation of better

configurations. These methods are conceptually simple, can handle different data

types, and are embarrassingly parallel [91] since a population of N members can be

evaluated in parallel on N machines.

One of the best known population-based methods is the covariance matrix

adaption evolutionary strategy (CMA-ES [51]); this simple evolutionary strategy

samples configurations from a multivariate Gaussian whose mean and covariance

are updated in each generation based on the success of the population’s individ-

uals. CMA-ES is one of the most competitive blackbox optimization algorithms,

regularly dominating the Black-Box Optimization Benchmarking (BBOB) chal-

lenge [11].

For further details on population-based methods, we refer to [28, 138]; we discuss

applications to hyperparameter optimization in Sect. 1.5, applications to neural

architecture search in Chap. 3, and genetic programming for AutoML pipelines in

Chap. 8.

我的内容管理

展开

我的内容管理

展开

我的资源

快来上传第一个资源

我的资源

快来上传第一个资源

我的收益 登录查看自己的收益

我的收益 登录查看自己的收益 我的积分

登录查看自己的积分

我的积分

登录查看自己的积分

我的C币

登录后查看C币余额

我的C币

登录后查看C币余额

我的收藏

我的收藏  我的下载

我的下载  下载帮助

下载帮助

信息提交成功

信息提交成功