

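Open Source Application Architecture Explained: Scalability and Distributed Systems

"The Architecture of Open Source Applications, Volume 2 is a book that examines the structure and design thinking behind open source software. Its aim is to let developers learn from outstanding projects of the past, avoid repeating their mistakes, and raise the standard of their own software development. The book covers the architecture of a number of open source projects, including scalable web architecture, distributed systems, and Firefox release engineering, and describes each project's key components, how those components interact, and the lessons learned during development."

In the first part, "Scalable Web Architecture and Distributed Systems", the author discusses the basic principles of designing distributed web systems, including the components of a system and strategies for redundancy and partitioning. An image hosting application is used as an example to illustrate the concept of services. The author then describes in detail the foundations of fast and scalable data access, such as caches (global and distributed caches), proxies, indexes, load balancers and queues. These techniques are core building blocks of high-performance, scalable web services.

The second part focuses on Firefox release engineering and walks through the whole process from starting a release to delivering updates to end users. This covers the considerations before a release begins, the criteria and process for sending the "Go to Build", tagging and building the source code, localization and partner repacks, signing and verification, the update mechanisms (major versus minor updates, complete versus partial updates), and pushing to internal mirrors and quality assurance (QA). It also shares lessons learned during the release process, emphasizing the importance of consensus with other stakeholders, cross-team collaboration, clear handoffs, and managing staff turnover.

For junior developers, this book is an excellent starting point for understanding how senior developers think; for intermediate and advanced developers, it offers a window into how their peers solve complex design problems. By studying the architecture of these open source projects, developers can better understand how to build scalable, reliable software systems, draw inspiration from them, and improve their own design skills.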


always do more reading than writing), but also helps clarify what is going on at each point. Finally, this separates future concerns, which would make it easier to troubleshoot and scale a problem like slow reads.

The advantage of this approach is that we are able to solve problems independently of one another: we don't have to worry about writing and retrieving new images in the same context. Both of these services still leverage the global corpus of images, but they are free to optimize their own performance with service-appropriate methods (for example, queuing up requests, or caching popular images; more on this below). And from a maintenance and cost perspective, each service can scale independently as needed, which is great because if they were combined and intermingled, one could inadvertently impact the performance of the other, as in the scenario discussed above.
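A minimal sketch of the read/write split, assuming an in-memory dict stands in for the global image corpus. The class and method names (`ImageCorpus`, `WriteService`, `ReadService`, `upload`, `flush`, `fetch`) are hypothetical. The write service queues uploads and drains them on its own schedule, while the read service answers lookups directly; each side can be tuned or scaled without touching the other.

```python
from __future__ import annotations

from collections import deque


class ImageCorpus:
    """Hypothetical shared store standing in for the global corpus of images."""

    def __init__(self) -> None:
        self._images: dict[str, bytes] = {}

    def put(self, name: str, data: bytes) -> None:
        self._images[name] = data

    def get(self, name: str) -> bytes | None:
        return self._images.get(name)


class WriteService:
    """Accepts uploads and queues them, so writes can be batched independently of reads."""

    def __init__(self, corpus: ImageCorpus) -> None:
        self._corpus = corpus
        self._pending: deque[tuple[str, bytes]] = deque()

    def upload(self, name: str, data: bytes) -> None:
        # Queue the upload instead of writing it synchronously.
        self._pending.append((name, data))

    def flush(self) -> int:
        # Drain the queue into the shared corpus; return how many images were written.
        written = 0
        while self._pending:
            name, data = self._pending.popleft()
            self._corpus.put(name, data)
            written += 1
        return written


class ReadService:
    """Serves images; it could add its own cache without affecting the write path."""

    def __init__(self, corpus: ImageCorpus) -> None:
        self._corpus = corpus

    def fetch(self, name: str) -> bytes | None:
        return self._corpus.get(name)
```

In this sketch a freshly uploaded image is invisible to readers until `flush()` runs, which is one of the tradeoffs a queued write path accepts in exchange for smoothing out write load.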

Of course, the above example can work well when you have two different endpoints (in fact, this is very similar to several cloud storage providers' implementations and Content Delivery Networks). There are lots of ways to address these types of bottlenecks, though, and each has different tradeoffs.

For example, Flickr solves this read/write issue by distributing users across different shards such that each shard can only handle a set number of users, and as users increase, more shards are added to the cluster (see the presentation on Flickr's scaling, http://mysqldba.blogspot.com/2008/04/mysql-uc-2007-presentation-file.html). In the first example it is easier to scale hardware based on actual usage (the number of reads and writes across the whole system), whereas Flickr scales with their user base (but forces the assumption of equal usage across users, so there can be extra, unused capacity). In the former, an outage or issue with one of the services brings down functionality across the whole system (no one can write files, for example), whereas an outage with one of Flickr's shards will only affect those users. In the first example it is easier to perform operations across the whole dataset (for example, updating the write service to include new metadata, or searching across all image metadata), whereas with the Flickr architecture each shard would need to be updated or searched, or a search service would need to be created to collate that metadata, which is in fact what they do.
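A rough sketch of user-based sharding in the spirit of the approach above, assuming each shard holds a fixed maximum number of users and a new shard is opened only when every existing one is full. `UserShardDirectory`, its methods, and the directory-style lookup are hypothetical illustrations, not Flickr's actual implementation.

```python
from __future__ import annotations


class UserShardDirectory:
    """Hypothetical directory mapping each user to the shard that stores their data."""

    def __init__(self, users_per_shard: int) -> None:
        if users_per_shard <= 0:
            raise ValueError("users_per_shard must be positive")
        self.users_per_shard = users_per_shard
        self.shard_sizes: list[int] = []          # number of users placed on each shard
        self.user_to_shard: dict[str, int] = {}

    def add_user(self, user_id: str) -> int:
        """Place a new user on the first shard with room, adding a shard if all are full."""
        if user_id in self.user_to_shard:
            return self.user_to_shard[user_id]
        for shard, size in enumerate(self.shard_sizes):
            if size < self.users_per_shard:
                break
        else:
            # Every existing shard is full (or none exist yet): grow the cluster by one.
            self.shard_sizes.append(0)
            shard = len(self.shard_sizes) - 1
        self.shard_sizes[shard] += 1
        self.user_to_shard[user_id] = shard
        return shard

    def shard_for(self, user_id: str) -> int:
        """All reads and writes for a user go to that user's shard only."""
        return self.user_to_shard[user_id]
```

If shard 1 in this sketch went down, only the users mapped to it would be affected, while a search across all image metadata would have to visit every shard, which mirrors the tradeoff described above.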

When it comes to these systems there is no right answer, but it helps to go back to the principles at the start of this chapter: determine the system needs (heavy reads or writes or both, level of concurrency, queries across the data set, ranges, sorts, etc.), benchmark different alternatives, understand how the system will fail, and have a solid plan for when failure happens.

Redundancy

In order to handle failure gracefully, a web architecture must have redundancy of its services and data. For example, if there is only one copy of a file stored on a single server, then losing that server means losing that file. Losing data is seldom a good thing, and a common way of handling it is to create multiple, or redundant, copies.
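To make the idea concrete, here is a minimal sketch that writes every file to several servers, assuming servers are modeled as in-memory dicts and a default replication factor of 3 (both hypothetical choices). Losing any single server still leaves readable copies.

```python
from __future__ import annotations


class ReplicatedFileStore:
    """Stores each file on `replicas` distinct servers (modeled here as dicts)."""

    def __init__(self, num_servers: int, replicas: int = 3) -> None:
        if not 1 <= replicas <= num_servers:
            raise ValueError("need 1 <= replicas <= num_servers")
        # None marks a server that has been lost.
        self.servers: list[dict[str, bytes] | None] = [{} for _ in range(num_servers)]
        self.replicas = replicas
        self.placement: dict[str, list[int]] = {}

    def write(self, name: str, data: bytes) -> None:
        # Pick `replicas` consecutive servers starting from a hash-derived index.
        # Python's str hash is randomized per process but stable within one run.
        start = hash(name) % len(self.servers)
        targets = [(start + i) % len(self.servers) for i in range(self.replicas)]
        for idx in targets:
            server = self.servers[idx]
            if server is not None:
                server[name] = data
        self.placement[name] = targets

    def fail_server(self, idx: int) -> None:
        # Simulate losing a machine and everything stored on it.
        self.servers[idx] = None

    def read(self, name: str) -> bytes:
        # Return the first surviving copy.
        for idx in self.placement.get(name, []):
            server = self.servers[idx]
            if server is not None and name in server:
                return server[name]
        raise KeyError(f"all copies of {name!r} are lost")
```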

This same principle also applies to services. If there is a core piece of functionality for an application, ensuring that multiple copies or versions are running simultaneously can secure against the failure of a single node.

Creating redundancy in a system can remove single points of failure and provide a backup or spare functionality if needed in a crisis. For example, if there are two instances of the same service running in production, and one fails or degrades, the system can fail over to the healthy copy. Failover can happen automatically or require manual intervention.
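A minimal sketch of automatic failover, assuming each service instance exposes a `healthy` flag and a `handle` method (hypothetical names standing in for real network health checks). The client tries instances in order and moves on to the next copy when one is down.

```python
from __future__ import annotations


class ServiceInstance:
    """Hypothetical instance of a service; `healthy` stands in for a real health check."""

    def __init__(self, name: str) -> None:
        self.name = name
        self.healthy = True

    def handle(self, request: str) -> str:
        if not self.healthy:
            raise ConnectionError(f"{self.name} is down")
        return f"{self.name} handled {request}"


class FailoverClient:
    """Sends each request to the first instance and fails over to the next healthy copy."""

    def __init__(self, instances: list[ServiceInstance]) -> None:
        self.instances = instances

    def call(self, request: str) -> str:
        errors = []
        for instance in self.instances:
            try:
                return instance.handle(request)
            except ConnectionError as exc:
                errors.append(str(exc))  # record the failure and try the next copy
        raise RuntimeError("no healthy instance: " + "; ".join(errors))
```

Here failover is automatic from the caller's point of view; in a manual setup, an operator would instead change which instance receives traffic.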

Another key part of service redundancy is creating a shared-nothing architecture. With this architecture, each node is able to operate independently of the others, and there is no central "brain" managing state or coordinating activities for the other nodes. This helps a lot with scalability, since new nodes can be added without special conditions or knowledge. Most importantly, however, there is no single point of failure in these systems, so they are much more resilient to failure.

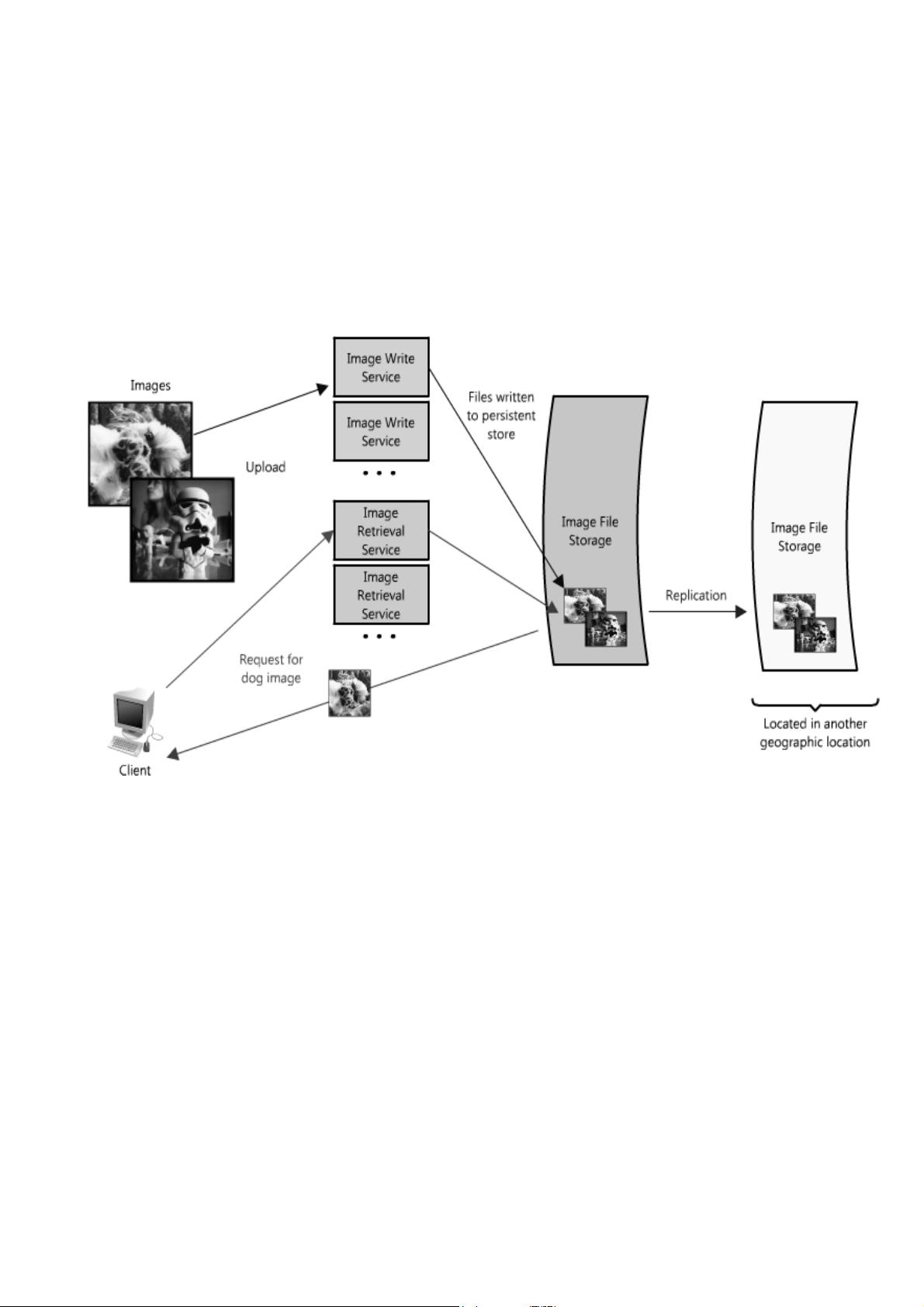

For example, in our image server application, all images would have redundant copies on another piece of hardware somewhere (ideally in a different geographic location in the event of a catastrophe like an earthquake or fire in the data center), and the services to access the images would be redundant, all potentially servicing requests. (See Figure 1.3.) Load balancers are a great way to make this possible, but there is more on that below.

Figure 1.3: Image hosting application with redundancy

Partitions

There may be very large data sets that are unable to fit on a single server. It may also be the case that an operation requires too many computing resources, diminishing performance and making it necessary to add capacity. In either case you have two choices: scale vertically or horizontally.

Scaling vertically means adding more resources to an individual server. So for a very large data set, this might mean adding more (or bigger) hard drives so a single server can contain the entire data set. In the case of the compute operation, this could mean moving the computation to a bigger server with a faster CPU or more memory. In each case, vertical scaling is accomplished by making the individual resource capable of handling more on its own.

To scale horizontally, on the other hand, is to add more nodes. In the case of the large data set, this might be a second server to store parts of the data set, and for the computing resource it would mean splitting the operation or load across some additional nodes. To take full advantage of horizontal scaling, it should be included as an intrinsic design principle of the system architecture; otherwise it can be quite cumbersome to modify and separate out the context to make this possible.

When it comes to horizontal scaling, one of the more common techniques is to break up your services into partitions, or shards. The partitions can be distributed such that each logical set of functionality is separate; this could be done by geographic boundaries, or by another criterion like non-paying versus paying users. The advantage of these schemes is that they provide a service or data store with added capacity.
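As an illustration of partitioning by a criterion, the sketch below routes each user to a partition keyed by region and by paying versus non-paying tier. The `User` fields, the region codes, and the `"<region>-<tier>"` partition naming are all hypothetical.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class User:
    user_id: str
    region: str       # hypothetical region code, e.g. "us" or "eu"
    is_paying: bool


def partition_for(user: User) -> str:
    """Return the name of the partition that stores this user's data."""
    tier = "paid" if user.is_paying else "free"
    return f"{user.region}-{tier}"


def group_by_partition(users: list[User]) -> dict[str, list[str]]:
    """Show how a set of users would be spread across partitions."""
    partitions: dict[str, list[str]] = {}
    for user in users:
        partitions.setdefault(partition_for(user), []).append(user.user_id)
    return partitions
```

Each resulting partition can live on its own servers, so adding a region or tier adds capacity without enlarging the existing partitions.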

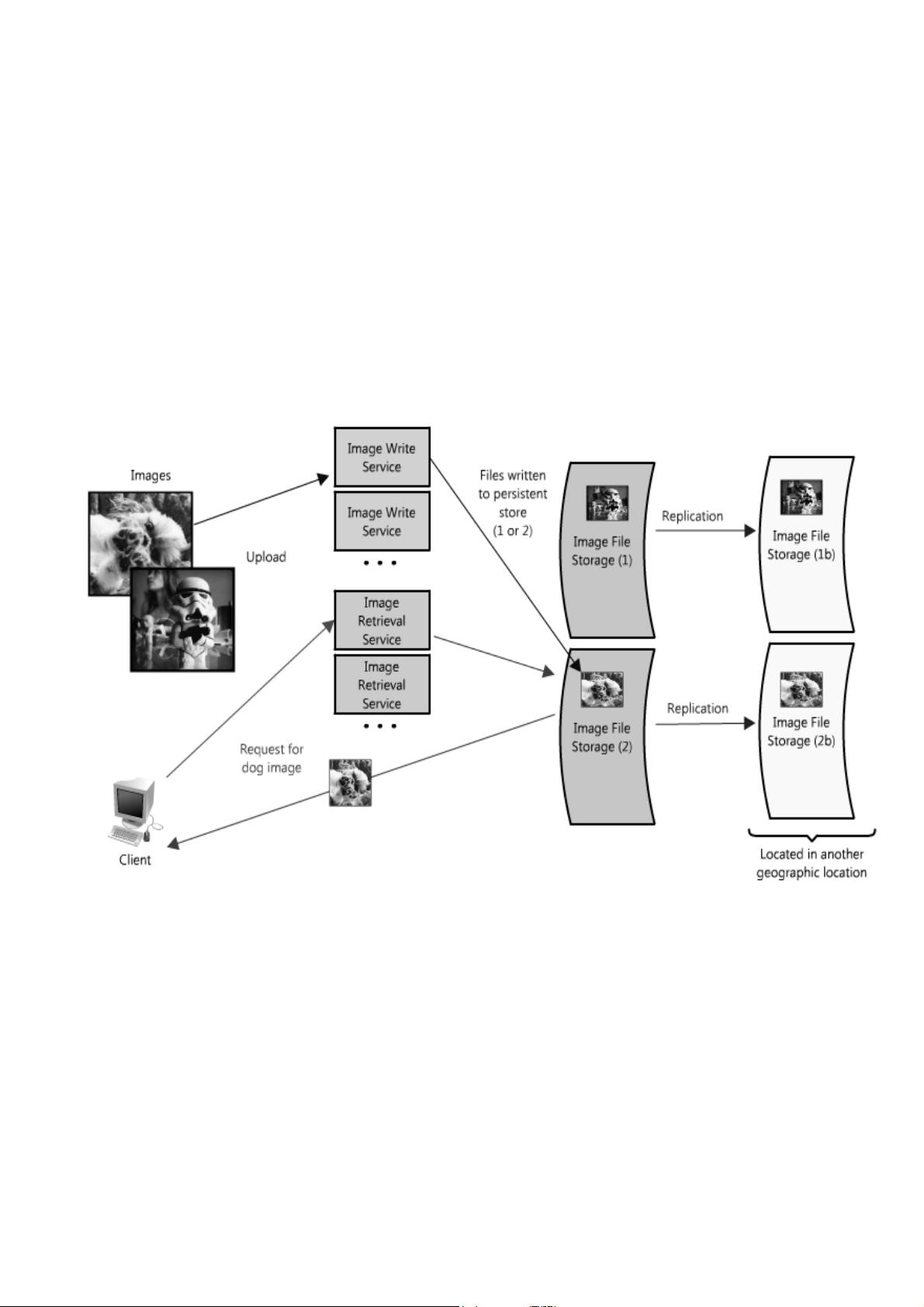

In our image server example, it is possible that the single file server used to store images could be replaced by multiple file servers, each containing its own unique set of images. (See Figure 1.4.) Such an architecture would allow the system to fill each file server with images, adding additional servers as the disks become full.

The design would require a naming scheme that tied an image's filename to the server containing it. An image's name could be formed from a consistent hashing scheme mapped across the servers. Or alternatively, each image could be assigned an incremental ID, so that when a client makes a request for an image, the image retrieval service only needs to maintain the range of IDs that are mapped to each of the servers (like an index).
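One common way to realize the consistent-hashing option is a hash ring: each server is placed at several points on a ring, and an image belongs to the first server point at or after the hash of its name, wrapping around at the end. This is a minimal sketch, assuming MD5 as the hash and 100 points per server (both arbitrary choices) and hypothetical server names.

```python
from __future__ import annotations

import bisect
import hashlib


def _hash(key: str) -> int:
    # Stable 128-bit hash, so placement is identical across processes.
    return int(hashlib.md5(key.encode("utf-8")).hexdigest(), 16)


class HashRing:
    def __init__(self, servers: list[str], points_per_server: int = 100) -> None:
        self.points_per_server = points_per_server
        self._ring: list[tuple[int, str]] = []  # sorted (position, server) pairs
        for server in servers:
            self.add_server(server)

    def add_server(self, server: str) -> None:
        # Place several virtual points per server to spread load more evenly.
        for i in range(self.points_per_server):
            bisect.insort(self._ring, (_hash(f"{server}#{i}"), server))

    def server_for(self, image_name: str) -> str:
        if not self._ring:
            raise LookupError("ring is empty")
        position = _hash(image_name)
        # First ring point at or after the image's position; wrap to the start if none.
        idx = bisect.bisect_left(self._ring, (position, ""))
        if idx == len(self._ring):
            idx = 0
        return self._ring[idx][1]
```

The design choice behind the ring: adding a server only moves the images whose positions now fall just before one of the new server's points, whereas a plain `hash % number_of_servers` scheme would reassign most images every time a server is added.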

Figure 1.4: Image hosting application with redundancy and partitioning
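The incremental-ID alternative from the naming-scheme discussion can be sketched as a sorted list of range boundaries searched with binary search. It is assumed here (hypothetically) that each file server owns one contiguous block of IDs and that a newly added server takes over from the next ID onward as disks fill up; `IdRangeIndex` and its methods are illustrative names.

```python
from __future__ import annotations

import bisect


class IdRangeIndex:
    """Maps contiguous ranges of image IDs to the file server that stores them."""

    def __init__(self) -> None:
        self._starts: list[int] = []   # first ID of each range, ascending
        self._servers: list[str] = []

    def add_range(self, first_id: int, server: str) -> None:
        # Ranges are appended in order: the new server owns IDs from `first_id` onward.
        if self._starts and first_id <= self._starts[-1]:
            raise ValueError("ranges must be added in increasing order")
        self._starts.append(first_id)
        self._servers.append(server)

    def server_for(self, image_id: int) -> str:
        # Find the last range whose start is <= image_id.
        idx = bisect.bisect_right(self._starts, image_id) - 1
        if idx < 0:
            raise KeyError(image_id)
        return self._servers[idx]
```

The index stays tiny (one entry per server), so the image retrieval service can keep it in memory and resolve any ID with a single binary search.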

Of course there are challenges distributing data or functionality across multiple servers. One of the key issues is data locality; in distributed systems, the closer the data to the operation or point of computation, the better the performance of the system. Therefore it is potentially problematic to have data spread across multiple servers, as any time it is needed it may not be local, forcing the servers to perform a costly fetch of the required information across the network.

Another potential issue comes in the form of inconsistency. When different services read from and write to a shared resource, potentially another service or data store, there is the chance for race conditions (where some data is supposed to be updated, but the read happens prior to the update), and in those cases the data is inconsistent. For example, in the image hosting scenario, a race condition could occur if one client sent a request to update the dog image with a new title, changing it from "Dog" to "Gizmo", but at the same time another client was reading the image. In that circumstance it is unclear which title, "Dog" or "Gizmo", would be the one received by the second client.

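The "Dog"/"Gizmo" race can be shown deterministically by replaying the two clients' operations in different orders. This is a toy simulation (the `replay` function and its event tuples are invented for illustration), not a model of any particular data store: the same write and the same read yield a different title for the reader depending only on timing.

```python
from __future__ import annotations


def replay(events: list[tuple[str, str]]) -> str | None:
    """Apply ("write", title) and ("read", "") events in order; return what the reader saw."""
    image = {"title": "Dog"}
    seen = None
    for op, value in events:
        if op == "write":
            image["title"] = value
        elif op == "read":
            seen = image["title"]
    return seen


print(replay([("read", ""), ("write", "Gizmo")]))  # Dog
print(replay([("write", "Gizmo"), ("read", "")]))  # Gizmo
```

In a real system the order of concurrent requests is not under either client's control, which is exactly why the second client cannot know which title it will receive.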
There are certainly some obstacles associated with partitioning data, but partitioning allows each problem to be split (by data, load, usage patterns, etc.) into manageable chunks. This can help with scalability and manageability, but is not without risk. There are lots of ways to mitigate risk and handle failures; however, in the interest of brevity they are not covered in this chapter. If you are interested in reading more, you can check out my blog post on fault tolerance and monitoring.

1.3. The Building Blocks of Fast and Scalable Data Access

Having covered some of the core considerations in designing distributed systems, let's now talk about the hard part: scaling access to the data.

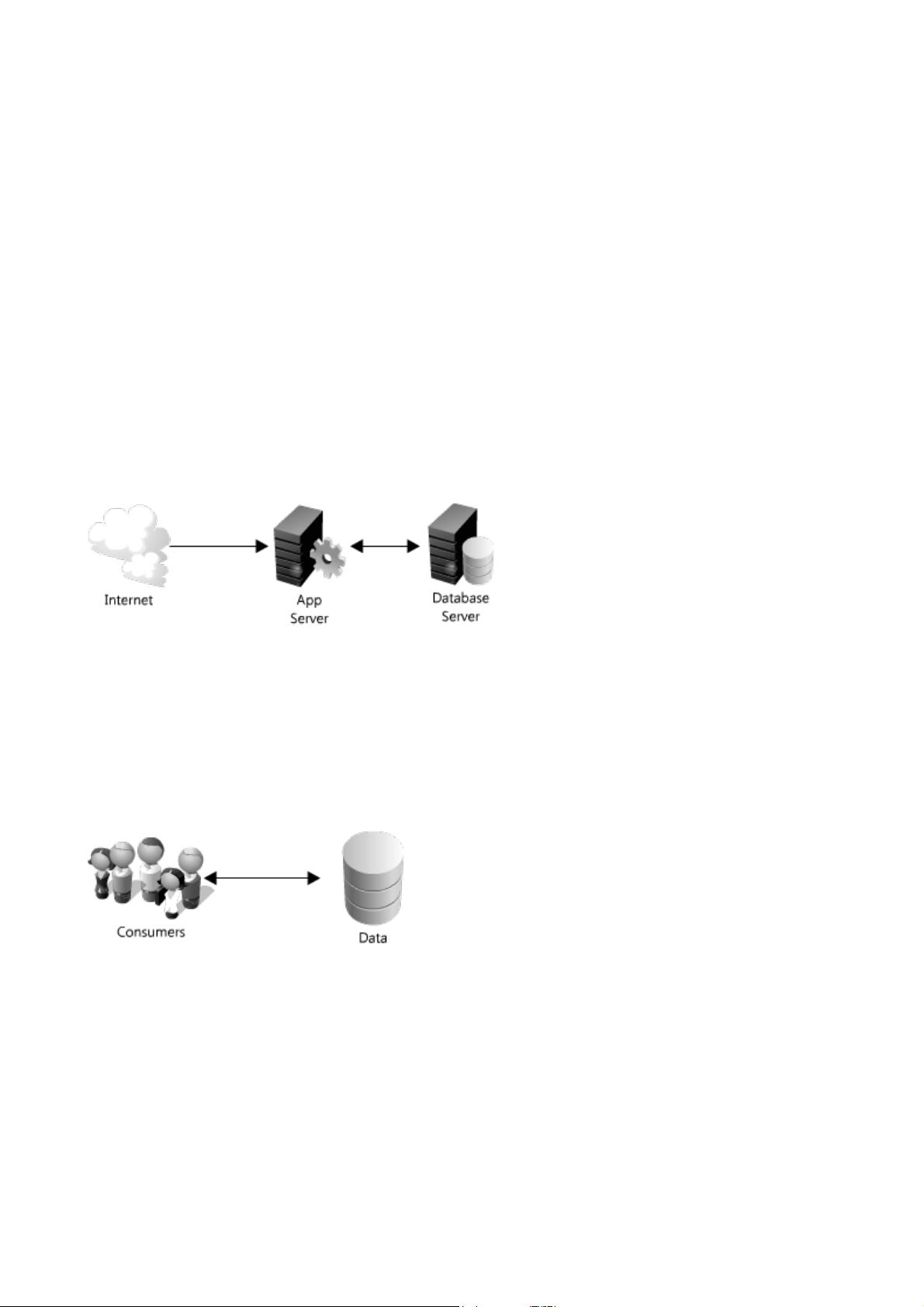

Most simple web applications, for example, LAMP stack applications, look something like Figure 1.5.

Figure 1.5: Simple web applications

As they grow, there are two main challenges: scaling access to the app server and to the database. In a highly scalable application design, the app (or web) server is typically minimized and often embodies a shared-nothing architecture. This makes the app server layer of the system horizontally scalable. As a result of this design, the heavy lifting is pushed down the stack to the database server and supporting services; it's at this layer where the real scaling and performance challenges come into play.

The rest of this chapter is devoted to some of the more common strategies and methods for making these types of services fast and scalable by providing fast access to data.

Figure 1.6: Oversimplified web application

Most systems can be oversimplified to Figure 1.6. This is a great place to start. If you have a lot of data, you want fast and easy access, like keeping a stash of candy in the top drawer of your desk. Though overly simplified, the previous statement hints at two hard problems: scalability of storage and fast access of data.

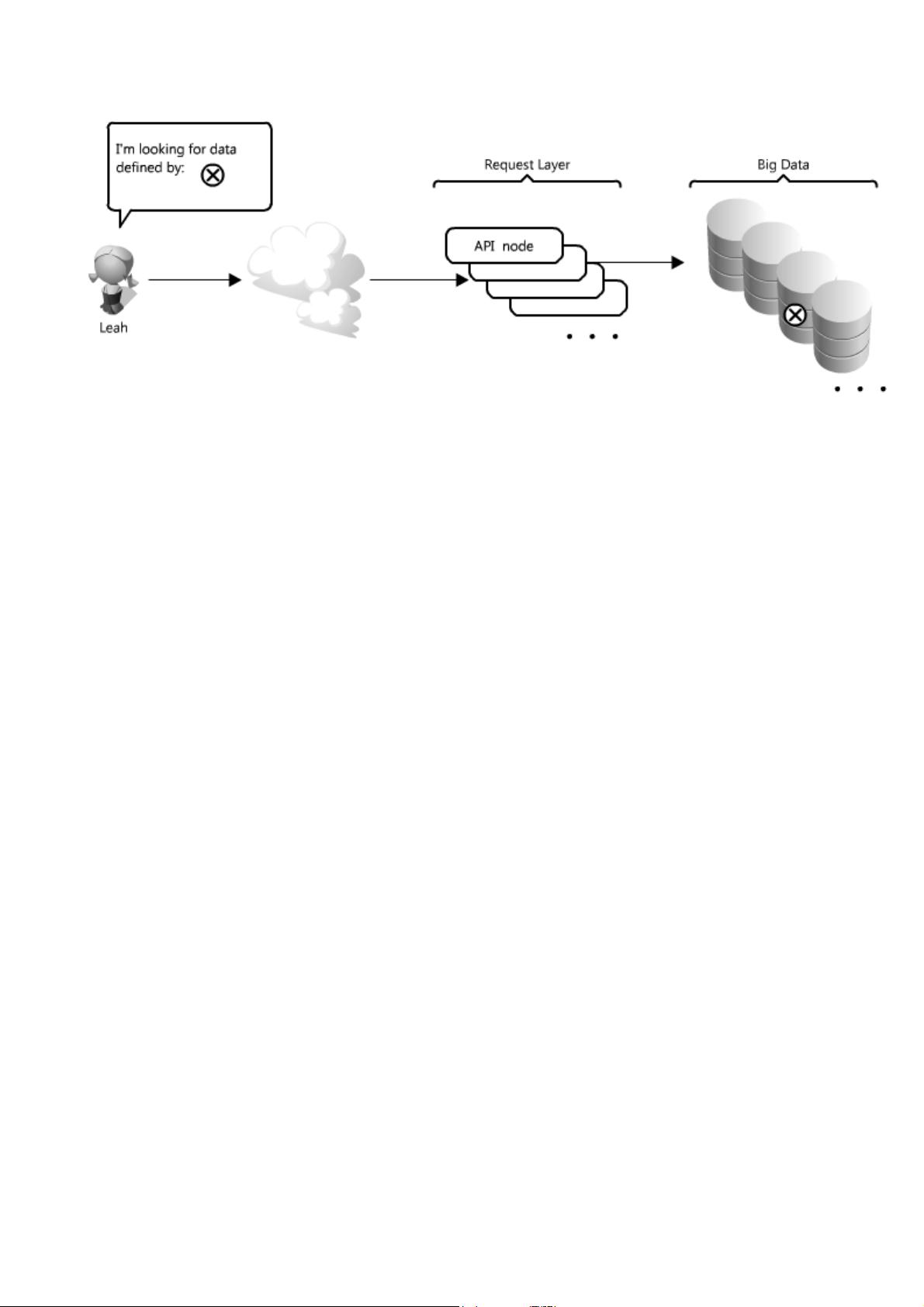

For the sake of this section, let's assume you have many terabytes (TB) of data and you want to allow users to access small portions of that data at random. (See Figure 1.7.) This is similar to locating an image file somewhere on the file server in the image application example.

Figure 1.7: Accessing specific data

This is particularly challenging because it can be very costly to load TBs of data into memory; this directly translates to disk IO. Reading from disk is many times slower than from memory: memory access is as fast as Chuck Norris, whereas disk access is slower than the line at the DMV. This speed difference really adds up for large data sets; in real numbers, memory access is as little as 6 times faster for sequential reads, or 100,000 times faster for random reads, than reading from disk (see "The Pathologies of Big Data", http://queue.acm.org/detail.cfm?id=1563874). Moreover, even with unique IDs, solving the problem of knowing where to find that little bit of data can be an arduous task. It's like trying to get that last Jolly Rancher from your candy stash without looking.
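To see how the random-read ratio quoted above adds up, the short calculation below assumes, purely for illustration, that one random read from memory takes 100 ns, and applies the 100,000x factor from the text. The 100 ns figure is an assumption, not a measurement.

```python
MEMORY_RANDOM_READ_S = 100e-9    # assumed: 100 ns per random read from memory
RANDOM_READ_SLOWDOWN = 100_000   # disk vs. memory ratio for random reads, from the text
DISK_RANDOM_READ_S = MEMORY_RANDOM_READ_S * RANDOM_READ_SLOWDOWN  # 10 ms per read


def total_seconds(num_reads: int, seconds_per_read: float) -> float:
    """Total time for a batch of independent random reads."""
    return num_reads * seconds_per_read


reads = 1_000_000
print(f"memory: {total_seconds(reads, MEMORY_RANDOM_READ_S):.1f} s")  # memory: 0.1 s
print(f"disk:   {total_seconds(reads, DISK_RANDOM_READ_S):,.0f} s")   # disk:   10,000 s
```

Under that assumption, a million random reads take a tenth of a second from memory but roughly 10,000 seconds (close to three hours) from disk.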

Thankfully, there are many options that you can employ to make this easier; four of the more important ones are caches, proxies, indexes and load balancers. The rest of this section discusses how each of these concepts can be used to make data access a lot faster.

Caches

Caches take advantage of the locality of reference principle: recently requested data is likely to be requested again. They are used in almost every layer of computing: hardware, operating systems, web browsers, web applications and more. A cache is like short-term memory: it has a limited amount of space, but is typically faster than the original data source and contains the most recently accessed items. Caches can exist at all levels in architecture, but are often found at the level nearest to the front end, where they are implemented to return data quickly without taxing downstream levels.
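The "limited space, most recently accessed items" behavior described above is commonly implemented as a least-recently-used (LRU) cache. A minimal sketch, assuming capacity is counted in items (real caches usually bound memory instead) and that `None` signals a miss:

```python
from __future__ import annotations

from collections import OrderedDict
from collections.abc import Hashable


class LRUCache:
    """Keeps at most `capacity` items, evicting the least recently used one."""

    def __init__(self, capacity: int) -> None:
        if capacity <= 0:
            raise ValueError("capacity must be positive")
        self.capacity = capacity
        self._items: OrderedDict[Hashable, object] = OrderedDict()

    def get(self, key: Hashable) -> object | None:
        if key not in self._items:
            return None                      # cache miss
        self._items.move_to_end(key)         # mark as most recently used
        return self._items[key]

    def put(self, key: Hashable, value: object) -> None:
        if key in self._items:
            self._items.move_to_end(key)
        self._items[key] = value
        if len(self._items) > self.capacity:
            self._items.popitem(last=False)  # evict the least recently used entry
```

Because reading an item moves it to the "recent" end, frequently requested data stays cached while items nobody has asked for recently are the first to go.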

How can a cache be used to make your data access faster in our API example? In this case, there are a couple of places you can insert a cache. One option is to insert a cache on your request layer node, as in Figure 1.8.
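A request node with its own local cache might look like the sketch below, which uses Python's built-in `functools.lru_cache` to remember responses. `fetch_from_origin` is a hypothetical stand-in for the slow call to the origin data store, and the `origin_calls` counter exists only to show how many requests actually reach it.

```python
from functools import lru_cache

origin_calls = 0


def fetch_from_origin(key: str) -> str:
    """Hypothetical slow lookup against the origin data store."""
    global origin_calls
    origin_calls += 1
    return f"data for {key}"


@lru_cache(maxsize=1024)
def handle_request(key: str) -> str:
    # On a cache hit this body is skipped, so the origin is never contacted.
    return fetch_from_origin(key)


handle_request("dog.jpg")
handle_request("dog.jpg")
handle_request("cat.jpg")
print(origin_calls)                 # 2: the repeated "dog.jpg" request was served locally
print(handle_request.cache_info())  # CacheInfo(hits=1, misses=2, maxsize=1024, currsize=2)
```

Since each request node in this sketch keeps a private cache, two nodes can each miss on the same item; that limitation is part of what motivates global and distributed caches.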


