JOURNAL OF L

A

T

E

X CLASS FILES, VOL. X, NO. X, JANUARY 20XX 3

The four SLAM components are ‘camera pose es-

timation’, ‘map building’, ‘point classification’, and

‘camera grouping’. The main pipeline of our sys-

tem follows conventional sequential structure-from-

motion (SFM) methods. We assume that all cameras

look at the same initial scene to initialize the system.

After that, the ‘camera pose estimation’ component

computes camera poses at every frame by registering

the 3D map points to 2D image features. From time

to time, new map points are generated by the ‘map

building’ component. At every frame, points are clas-

sified into different types by the ‘point classification’

component. The system maintains the view overlap

information among cameras throughout time. The

‘camera grouping’ component separates cameras into

different groups, where cameras with view overlap

are in the same group. These groups could merge and

split when cameras meet or separate. In the following

section, we shall describe these four components in

detail.

4 CAMERA POSE ESTIMATION

Our system alternatively uses two different methods

for camera pose estimation: intra-camera pose esti-

mation and inter-camera pose estimation. In the for-

mer, each camera works independently, where tracked

feature points from a camera are registered with

static map points to compute its pose. In dynamic

environments, the number of static map points could

be small, or the static points are distributed within a

small image region, which can make the intra-camera

pose estimation fail. In such a case, we switch to the

inter-camera pose estimation method that uses both

static and dynamic points to simultaneously obtain

poses for all cameras.

4.1 Intra-camera Pose Estimation

If the camera intrinsic parameters are known, the cam-

era pose Θ = (R, t) can be computed by minimizing

the reprojection error (the distance between the image

projection of 3D map points and their corresponding

image feature points), namely,

Θ

∗

= arg min

θ

X

i

ρ (||m

i

− P(M

i

, Θ)||) . (1)

where P(M

i

, Θ) is the image projection of the 3D

point M

i

, m

i

is the image feature point registered to

M

i

, || · || measures the distance between two image

points. i is an index of feature points. The M-estimator

ρ : R

+

→ R

+

is the Tukey bi-weight function [39]

defined as

ρ(x) =

½

t

2

/6 (1 − [1 − (

x

t

)

2

]

3

) if |x| ≤ t

t

2

/6 otherwise.

(2)

Assuming that the error of feature detection and

tracking obeys a Gaussian distribution N (0, σ

2

I), we

set the threshold t in ρ(·) as 3σ. Equation (1) is

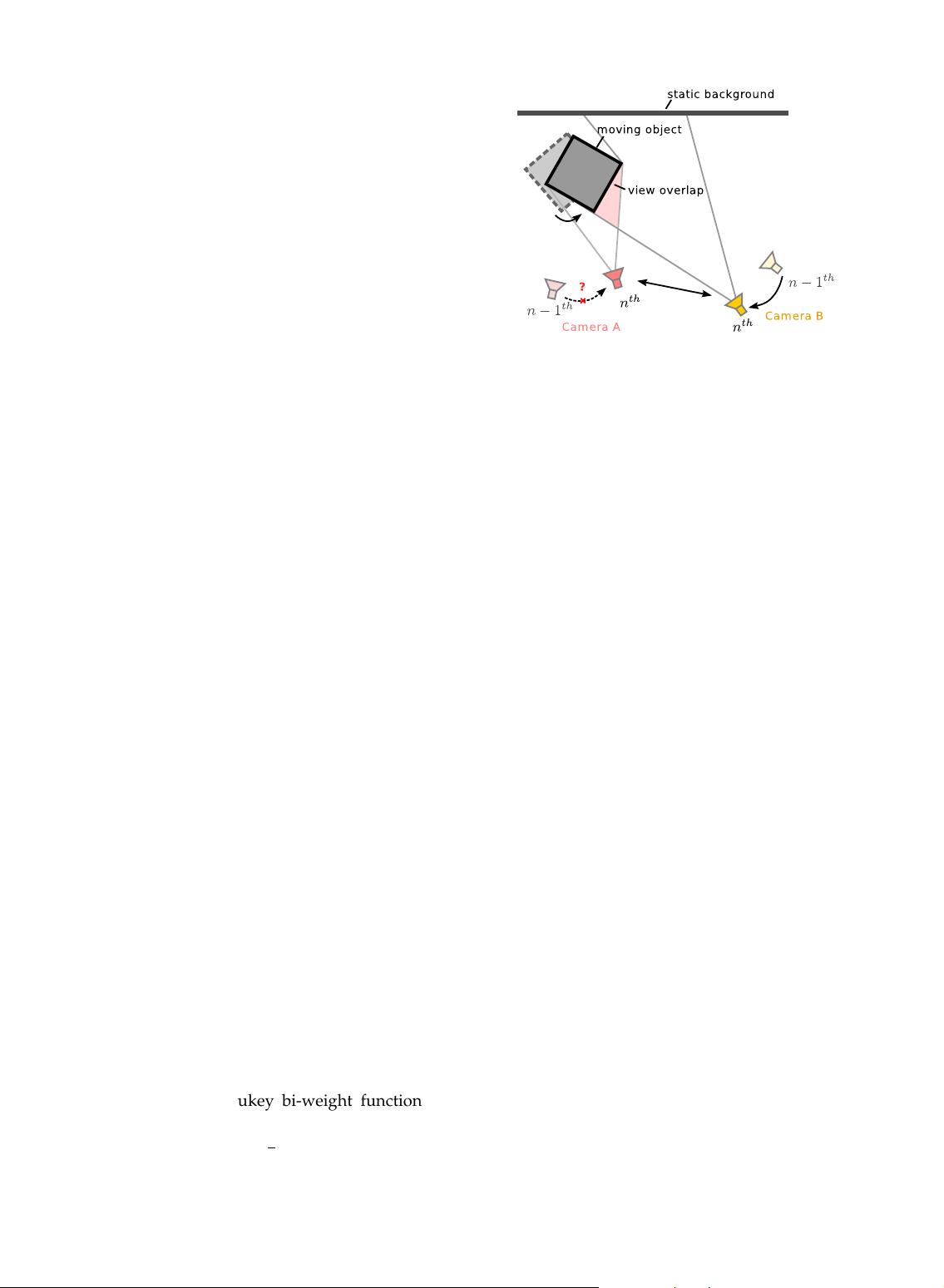

Fig. 2. The pose of camera A at the n

th

frame cannot

be estimated from its previous pose, since it observes

only the moving object (the grey square). However,

its relative pose with respect to camera B can be

determined. So the absolute pose of camera A can be

computed.

minimized by the iteratively re-weighted least squares

(IRLS) method, where Θ is initialized according to the

camera pose at the previous frame. At each iteration of

the IRLS, the Levenberg-Marquart algorithm is used

to solve the non-linear least square problem, where Θ

is parameterized in Lie algebra se(3) as [32].

4.2 Inter-camera Pose Estimation

When the number of visible static points is small,

or the static points are located in a small image

region, the intra-camera pose estimation is unstable

and sometimes fails. Fortunately, points on moving

objects give information about the relative camera

poses. Figure 2 provides such an illustration. The pose

of camera A at the n

th

frame cannot be decided on

its own, since it only observes the moving object (the

grey square). However, its relative pose with respect

to the camera B can be decided. We can therefore use

both static and dynamic points together to decide all

camera poses simultaneously.

Actually, the 3D coordinates of dynamic points can

only be computed when the camera poses are already

known. Hence, our system in fact simultaneously es-

timates both the camera poses and the 3D positions of

dynamic points. We formulate the inter-camera pose

estimation problem as an minimization of reprojection

error,

{Θ

c

}

∗

= arg min

M

D

,{Θ

c

}

X

c

½

X

i∈S

v

c

i

ρ (||m

i

− P(M

i

, Θ

c

)||)

+

X

j∈D

v

c

j

ρ (||m

j

− P(M

j

, Θ

c

)||)

¾

.

(3)

Here, c is an index of cameras, S and D are the set of

‘static’ and ‘dynamic’ map points. v

c

i

represents the

我的内容管理

展开

我的内容管理

展开

我的资源

快来上传第一个资源

我的资源

快来上传第一个资源

我的收益 登录查看自己的收益

我的收益 登录查看自己的收益 我的积分

登录查看自己的积分

我的积分

登录查看自己的积分

我的C币

登录后查看C币余额

我的C币

登录后查看C币余额

我的收藏

我的收藏  我的下载

我的下载  下载帮助

下载帮助

信息提交成功

信息提交成功