CHAPTER 1. INTRODUCTION 4

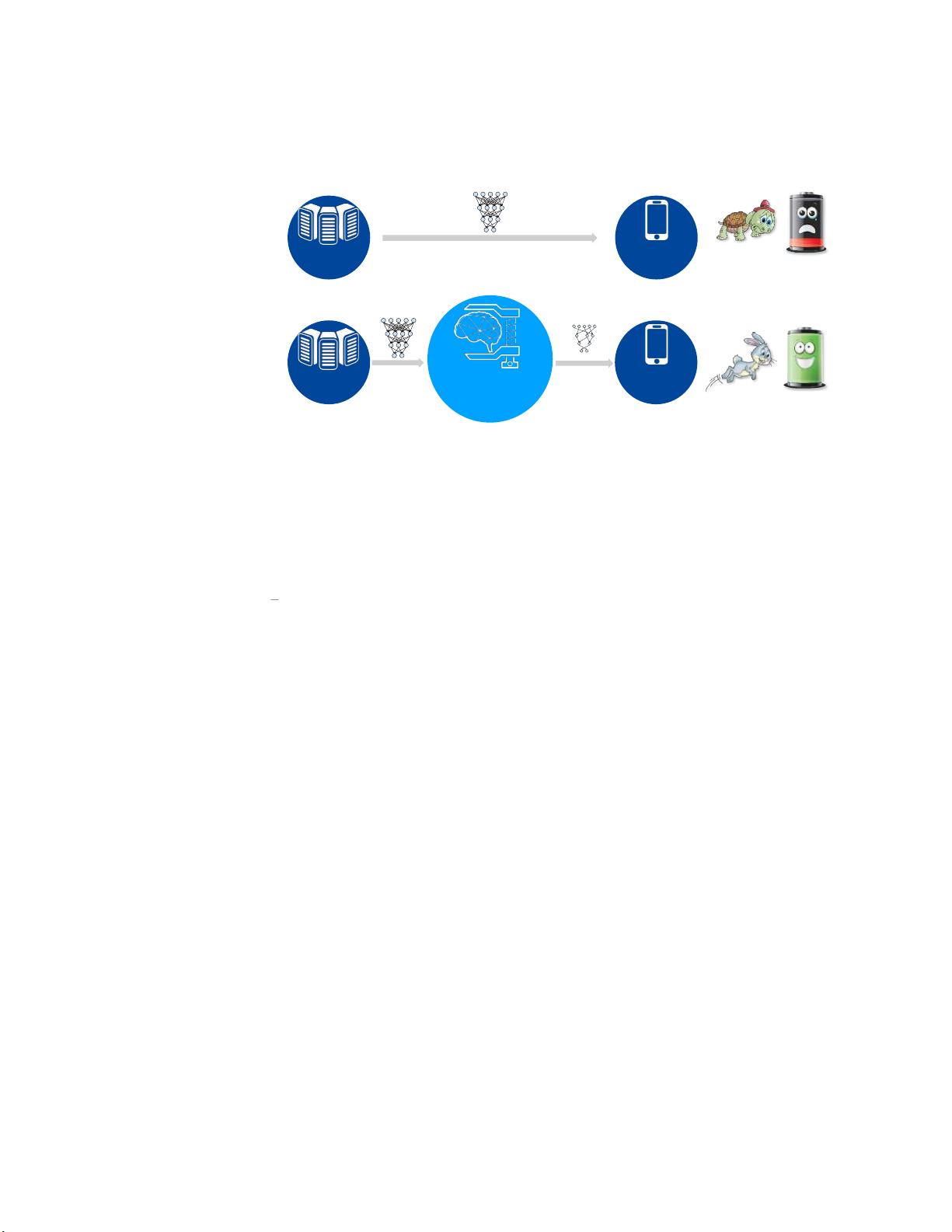

cloud AI. Future data center workloads will be populated with AI applications, such as Google

Cloud Machine Learning and Amazon Rekognition. The cost of maintaining such large-scale data

centers is tremendous. Smaller DNN models reduce the computation of the workload and take less

energy to run. This helps to reduce the electricity bill and the total cost of ownership (TCO) of

running a data center with deep learning workloads.

A byproduct of model compression is that it can remove the redundancy during training and

prevent overfitting. The compression algorithm automatically selects the optimal set of parameters

as well as their precision. It additionally regularizes the network by avoiding capturing the noise in

the training data.

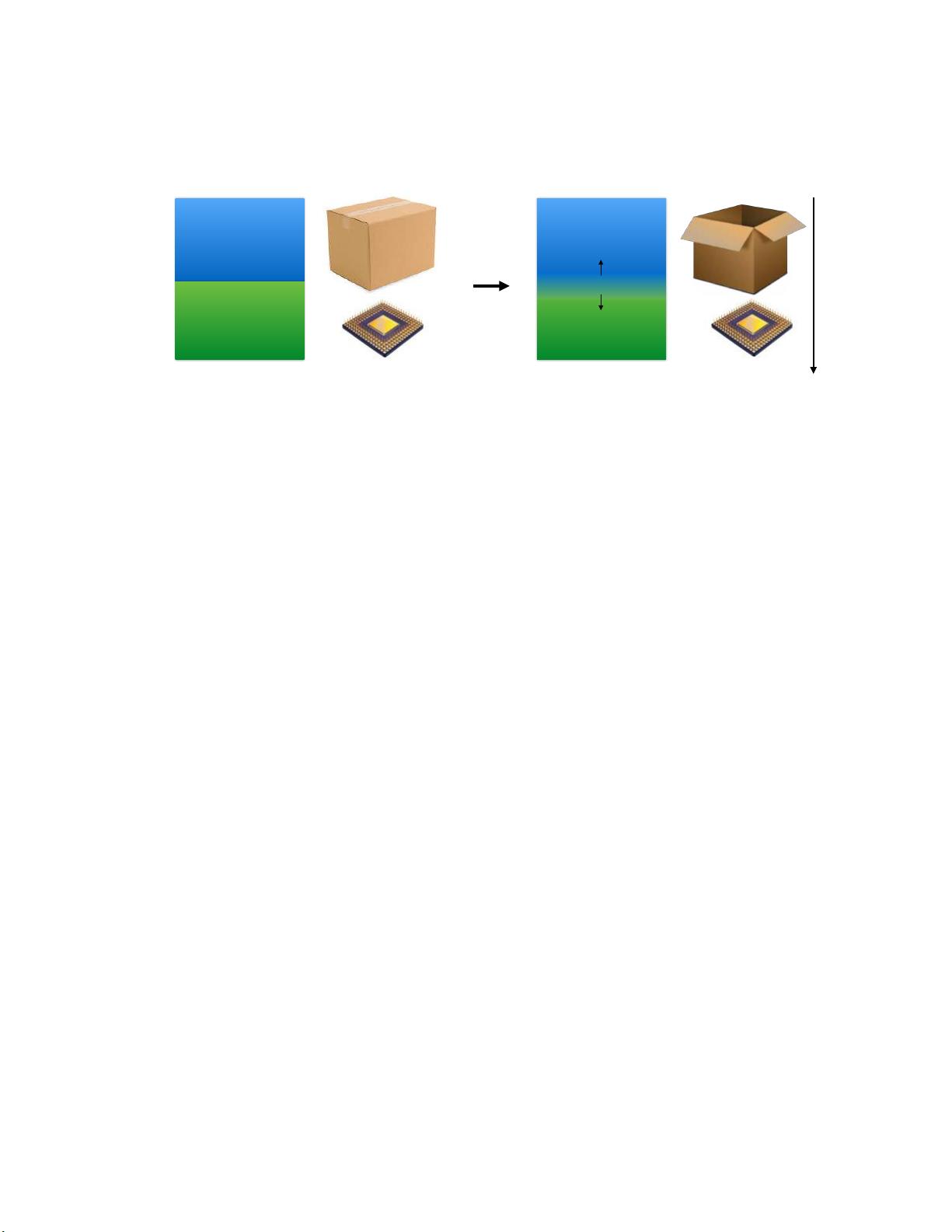

Motivation for Specialized Hardware:

Though model compression reduces the total number of

operations deep learning algorithms require, the irregular pattern caused by compression hinders

efficient acceleration on general-purpose processors. The irregularity limited the benefits of

model compression, and we achieved only a 3× energy efficiency improvement on these machines. The

potential saving is much larger: one to two orders of magnitude come from model compression, and another

two orders of magnitude come from moving the weights from DRAM to SRAM. The compressed model is small enough to fit

in about 10MB of SRAM (verified with AlexNet, VGG-16, Inception-V3, ResNet-50, as discussed in

Chapter 4) rather than having to be stored in a larger capacity DRAM.

Why is there such a big gap between the theoretical and the actual efficiency improvement? The

first reason is the inefficient data path. First, running on compressed models requires traversing a

sparse tensor, which has poor locality on general-purpose processors. Secondly, model compression

incurs a level of indirection for the weights, which requires dedicated buffers for fast access. Lastly,

the bit width of an aggressively compressed model is not byte aligned, which results in serialization

and de-serialization overhead on general-purpose processors.
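The three data-path costs listed above can be made concrete with a small sketch. It is an illustration only, not the implementation used in this work: it assumes a CSR-style sparse layout, a shared-weight codebook, and 4-bit codebook indices packed two per byte, and every name in it (`pack_4bit`, `unpack_4bit`, `compressed_matvec`) is hypothetical.

```python
def pack_4bit(indices):
    """Pack 4-bit codebook indices two per byte, low nibble first (assumed layout)."""
    if len(indices) % 2:
        indices = indices + [0]  # pad to an even count
    return bytes(lo | (hi << 4) for lo, hi in zip(indices[0::2], indices[1::2]))


def unpack_4bit(packed, count):
    """De-serialize packed 4-bit indices; this is the non-byte-aligned overhead."""
    out = []
    for byte in packed:
        out.append(byte & 0x0F)
        out.append(byte >> 4)
    return out[:count]


def compressed_matvec(row_ptr, col_idx, packed_codes, codebook, x):
    """Multiply a sparse, weight-shared matrix by a dense vector x."""
    codes = unpack_4bit(packed_codes, len(col_idx))
    y = [0.0] * (len(row_ptr) - 1)
    for row in range(len(y)):
        # Sparse traversal: each row touches a different, data-dependent
        # set of entries, so accesses to x have poor locality.
        for k in range(row_ptr[row], row_ptr[row + 1]):
            weight = codebook[codes[k]]       # indirection through the codebook
            y[row] += weight * x[col_idx[k]]  # irregular gather from x
    return y


# Toy 3x4 matrix with four non-zeros and a three-entry codebook:
#   row 0: [0.0, 0.5, 0.0, -1.0]
#   row 1: [0.0, 0.0, 0.0,  0.0]
#   row 2: [2.0, 0.0, 0.5,  0.0]
codebook = [0.5, -1.0, 2.0]
row_ptr = [0, 2, 2, 4]
col_idx = [1, 3, 0, 2]
packed = pack_4bit([0, 1, 2, 0])
y = compressed_matvec(row_ptr, col_idx, packed, codebook, [1.0, 2.0, 3.0, 4.0])
```

Each multiply-accumulate in the inner loop needs an unpack step, a codebook lookup, and a gather from `x`. A general-purpose processor performs these as separate, hard-to-predict memory operations.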

The second reason for the gap is inefficient control flow. Out-of-order CPUs have

complicated front ends attempting to speculate the parallelism in the workload; this has a costly

consequence (flushing the pipeline) if any speculation is wrong. However, once narrowed down to deep

learning workloads, the computation pattern is known to the processor ahead of time. Neither branch

prediction nor caching is needed, and the execution is deterministic, not speculative. Therefore,

such speculative units are wasteful in out-of-order processors.

There are alternatives, but they are not perfect. SIMD units can amortize the instruction overhead

among multiple pieces of data. SIMT units can also hide the memory latency by having a pool of

threads. These architectures prefer the workload to be executed in lockstep and in a parallel manner.

However, model compression leads to irregular computation patterns that are hard to parallelize,

causing divergence problems on these architectures.
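A toy model can show why irregular sparsity hurts lockstep execution. The sketch below is a simplification and not a measurement: it assumes each lane processes one matrix row and that all lanes in a group advance together until the longest row finishes. The function name `lockstep_utilization` and the example row lengths are hypothetical.

```python
def lockstep_utilization(nonzeros_per_row, lanes):
    """Fraction of lane-cycles doing useful work when `lanes` rows run in lockstep."""
    useful = 0
    issued = 0
    for start in range(0, len(nonzeros_per_row), lanes):
        group = nonzeros_per_row[start:start + lanes]
        steps = max(group)       # every lane waits for the longest row in the group
        useful += sum(group)     # lane-cycles that perform a real multiply-accumulate
        issued += steps * lanes  # lane-cycles occupied, including idle ones
    return useful / issued if issued else 1.0


dense_util = lockstep_utilization([8, 8, 8, 8], lanes=4)   # regular workload
sparse_util = lockstep_utilization([1, 8, 0, 3], lanes=4)  # pruned, irregular workload
```

Under these assumptions the dense rows keep every lane busy (utilization 1.0), while the pruned rows leave most lane-cycles idle (12 useful out of 32, or 0.375), even though the pruned workload contains far fewer operations.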

While previously proposed DNN accelerators [19–21] can efficiently handle the dense, uncompressed

DNN model, they are unable to handle the aggressively compressed DNN model due to different

computation patterns. There is an enormous waste of computation and memory bandwidth for
