rest of the graph from disk.

In the early phase of our project, we explored this op-

tion, but found it difficult to find a good cache policy to

sufficiently reduce disk access. Ultimately, we rejected this

approach for two reasons. First, the performance would

be highly unpredictable, as it would depend on structural

properties of the input graph. Second, optimizing graphs

for locality is costly, and sometimes impossible, if a graph

is supplied without metadata required to efficiently cluster

it. General graph partitioners are not currently an option,

since even the state-of-the-art graph partitioner, METIS

[

27

], requires hundreds of gigabytes of memory to work

with graphs of billions of edges.

Graph compression. Compact representation of real-

world graphs is a well-studied problem, the best algorithms

can store web-graphs in only 4 bits/edge (see [

9

,

13

,

18

,

25

]). Unfortunately, while the graph structure can often

be compressed and stored in memory, we also associate

data with each of the edges and vertices, which can take

significantly more space than the graph itself.

Bulk-Synchronous Processing. For a synchronous sys-

tem, the random access problem can be solved by writing

updated edges into a scratch file, which is then sorted (us-

ing disk-sort), and used to generate input graph for next

iteration. For algorithms that modify only the vertices, not

edges, such as Pagerank, a similar solution has been used

[

15

]. However, it cannot be efficiently used to perform

asynchronous computation.

3 Parallel Sliding Windows

This section describes the Parallel Sliding Windows (PSW)

method (Algorithm 2). PSW can process a graph with

mutable edge values efficiently from disk, with only a small

number of non-sequential disk accesses, while supporting

the asynchronous model of computation. PSW processes

graphs in three stages: it 1) loads a subgraph from disk; 2)

updates the vertices and edges; and 3) writes the updated

values to disk. These stages are explained in detail below,

with a concrete example. We then present an extension to

graphs that evolve over time, and analyze the I/O costs of

the PSW method.

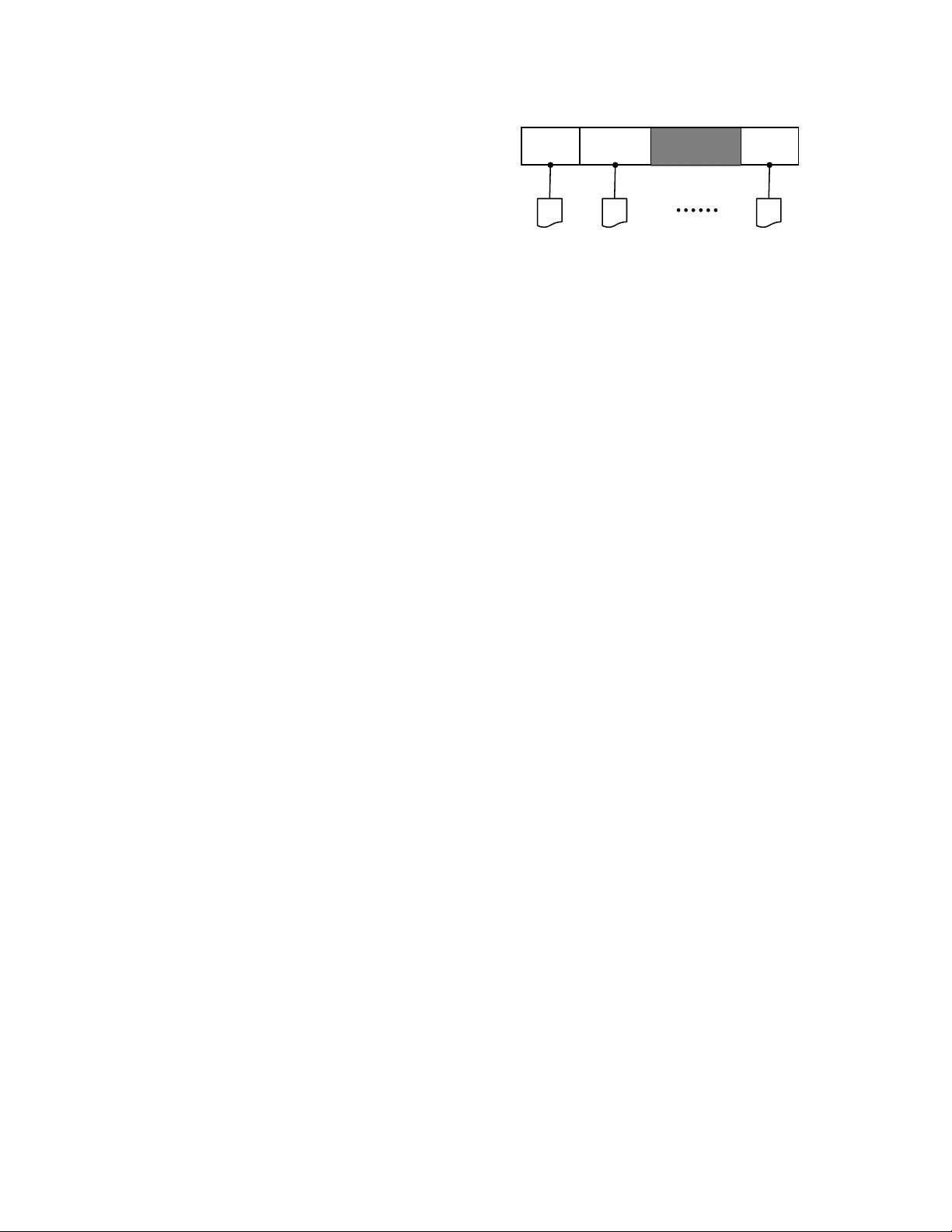

3.1 Loading the Graph

Under the PSW method, the vertices

V

of graph

G =

(V, E)

are split into

P

disjoint

intervals

. For each interval,

we associate a shard, which stores all the edges that have

destination in the interval. Edges are stored in the order of

their source (Figure 1). Intervals are chosen to balance the

number of edges in each shard; the number of intervals,

P

,

is chosen so that any one shard can be loaded completely

shard(1)

interval(1) interval(2) interval(P)

shard(2)

shard(P)

1 |V| v

1

v

2

Figure 1: The vertices of graph

(V, E)

are divided into

P

intervals. Each interval is associated with a shard, which

stores all edges that have destination vertex in that interval.

into memory. Similar data layout for sparse graphs was

used previously, for example, to implement I/O efficient

Pagerank and SpMV [5, 22].

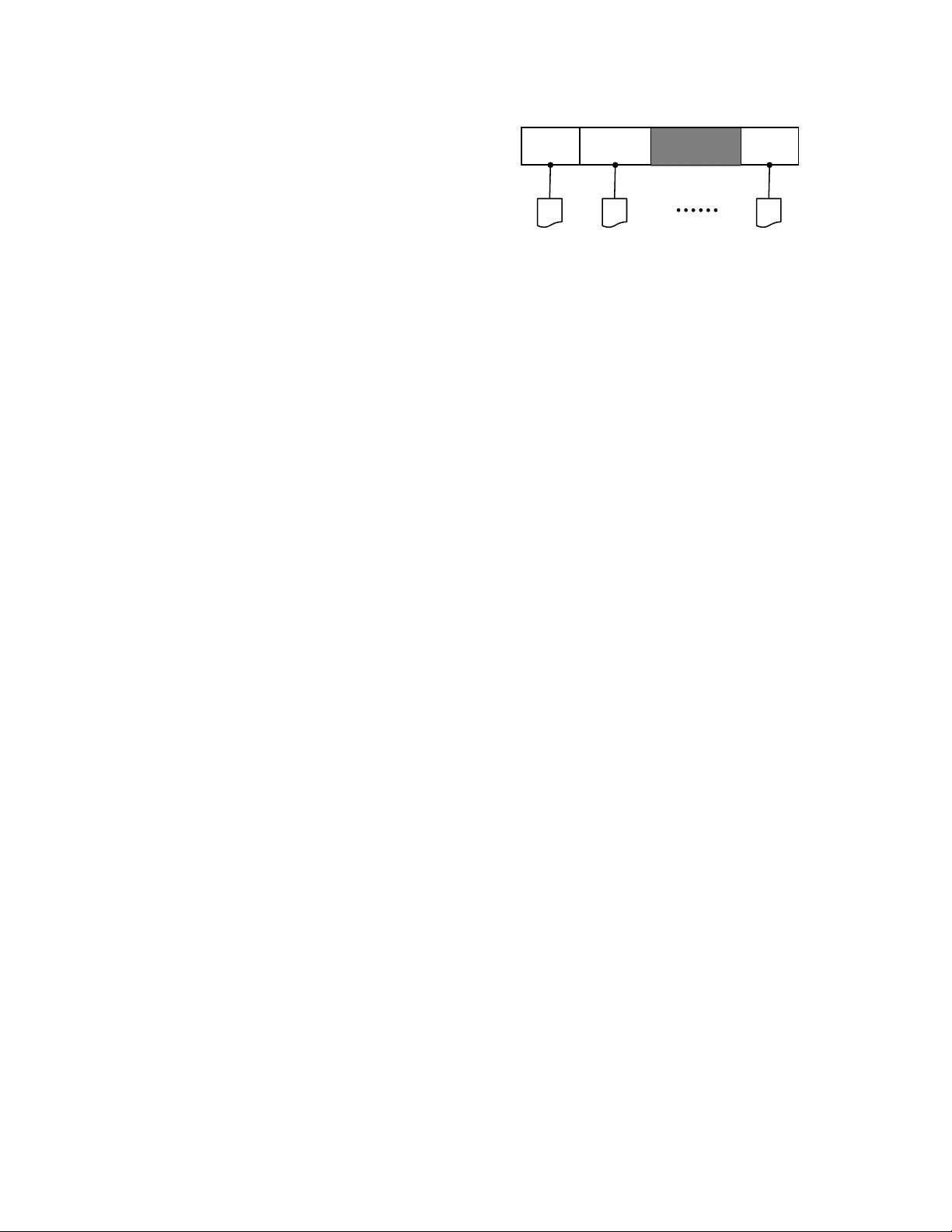

PSW does graph computation in

execution intervals

,

by processing vertices one interval at a time. To create the

subgraph for the vertices in interval

p

, their edges (with

their associated values) must be loaded from disk. First,

Shard(p)

, which contains the in-edges for the vertices

in interval(p), is loaded fully into memory. We call thus

shard(p) the

memory-shard

. Second, because the edges

are ordered by their source, the out-edges for the vertices

are stored in consecutive chunks in the other shards, requir-

ing additional

P − 1

block reads. Importantly, edges for

interval(p+1) are stored immediately after the edges for

interval(p). Intuitively, when PSW moves from an interval

to the next, it slides a

window

over each of the shards. We

call the other shards the

sliding shards

. Note, that if the

degree distribution of a graph is not uniform, the window

length is variable. In total, PSW requires only

P

sequential

disk reads to process each interval. A high-level illustration

of the process is given in Figure 2, and the pseudo-code of

the subgraph loading is provided in Algorithm 3.

3.2 Parallel Updates

After the subgraph for interval

p

has been fully loaded from

disk, PSW executes the user-defined

update-function

for

each vertex in parallel. As update-functions can modify the

edge values, to prevent adjacent vertices from accessing

edges concurrently (race conditions), we enforce external

determinism, which guarantees that each execution of PSW

produces exactly the same result. This guarantee is straight-

forward to implement: vertices that have edges with both

end-points in the same interval are flagged as critical, and

are updated in sequential order. Non-critical vertices do

not share edges with other vertices in the interval, and

can be updated safely in parallel. Note, that the update of

a critical vertex will observe changes in edges done by

4

我的内容管理

展开

我的内容管理

展开

我的资源

快来上传第一个资源

我的资源

快来上传第一个资源

我的收益 登录查看自己的收益

我的收益 登录查看自己的收益 我的积分

登录查看自己的积分

我的积分

登录查看自己的积分

我的C币

登录后查看C币余额

我的C币

登录后查看C币余额

我的收藏

我的收藏  我的下载

我的下载  下载帮助

下载帮助

信息提交成功

信息提交成功