Certain of these algorithmic combinations give rise to published descriptors, but many are untested. Using this structure allows us to examine the contribution of each building block in detail and obtain a better covering of the space of possible algorithms.

Our approach to learning descriptors is therefore to put together a combination of building blocks and then optimize the parameters of these blocks using learning to obtain the best match/nonmatch classification performance. This contrasts with prior attempts to hand-tune descriptor parameters and helps to put each algorithm on the same footing so that we can obtain and compare best performances.
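
The idea of choosing block parameters by their match/nonmatch classification performance can be sketched on toy data. Everything in the sketch is an assumption made for illustration: `box_smooth` is a hypothetical one-parameter stand-in for a descriptor, the pairs are synthetic 1-D signals, the score is the lowest thresholded error rate, and the exhaustive search over candidates stands in for whatever optimizer is actually used.

```python
from typing import List, Sequence, Tuple

# (signal_a, signal_b, is_match): a hypothetical stand-in for labelled patch pairs.
Pair = Tuple[Sequence[float], Sequence[float], bool]


def box_smooth(signal: Sequence[float], radius: int) -> List[float]:
    """Toy one-parameter 'descriptor': average each sample with its neighbours."""
    n = len(signal)
    out = []
    for i in range(n):
        lo, hi = max(0, i - radius), min(n, i + radius + 1)
        out.append(sum(signal[lo:hi]) / (hi - lo))
    return out


def distance(a: Sequence[float], b: Sequence[float]) -> float:
    """Euclidean distance between two descriptors."""
    return sum((x - y) ** 2 for x, y in zip(a, b)) ** 0.5


def best_error_rate(dists: List[float], labels: List[bool]) -> float:
    """Lowest error over all thresholds t, predicting 'match' when d <= t."""
    thresholds = [float("-inf")] + sorted(dists)
    return min(
        sum((d <= t) != is_match for d, is_match in zip(dists, labels)) / len(dists)
        for t in thresholds
    )


def evaluate(radius: int, pairs: List[Pair]) -> float:
    """Classification error of the toy descriptor for one parameter value."""
    dists = [distance(box_smooth(a, radius), box_smooth(b, radius)) for a, b, _ in pairs]
    labels = [is_match for _, _, is_match in pairs]
    return best_error_rate(dists, labels)


def learn_radius(pairs: List[Pair], candidates: List[int]) -> int:
    """Pick the candidate parameter with the lowest classification error."""
    return min(candidates, key=lambda r: evaluate(r, pairs))


impulse_at_2 = [0, 0, 1, 0, 0, 0, 0, 0]
impulse_at_3 = [0, 0, 0, 1, 0, 0, 0, 0]
flat = [0] * 8
toy_pairs = [
    (impulse_at_2, impulse_at_3, True),   # same feature, shifted by one sample
    (impulse_at_2, flat, False),          # feature versus empty signal
]
best_radius = learn_radius(toy_pairs, candidates=[0, 1, 2])
```

On this toy data, the unsmoothed signals cannot be separated by any distance threshold, whereas a smoothing radius of 1 brings the shifted match pair closer together than the nonmatch pair, so the search selects it.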

Fig. 3 shows the overall learning framework for building robust local image descriptors. The input is a set of image patches, which may be extracted from the neighborhoods of the points returned by any interest point detector. The processing stages consist of the following:

- G-block: Gaussian smoothing is applied to the input patch.
- T-blocks: We apply a range of nonlinear transformations to the smoothed patch. These include operations such as angle-quantized gradients and rectified steerable filters, and typically resemble the “simple-cell” stage in human visual processing.
- S-blocks/E-blocks: We perform spatial pooling of the above filter responses. S-blocks use parametrized pooling regions, whereas E-blocks are nonparametric. This stage resembles the “complex-cell” operations in visual processing.
- N-blocks: We normalize the output patch to account for photometric variations. This stage may optionally be followed by another E-block to reduce the number of dimensions at the output.
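
The stages listed above can be chained as in the following minimal sketch, which assumes NumPy. The specific choices are illustrative assumptions, not the parametrizations studied in this paper: hard quantization of gradient angles into `n_bins` bins for the T-block, sum pooling over a fixed `grid` of square cells for the S-block, and unit-length normalization for the N-block. All function names are hypothetical.

```python
import numpy as np


def g_block(patch: np.ndarray, sigma: float) -> np.ndarray:
    """G-block: separable Gaussian smoothing with edge padding."""
    radius = max(1, int(np.ceil(3 * sigma)))
    x = np.arange(-radius, radius + 1)
    kernel = np.exp(-(x ** 2) / (2 * sigma ** 2))
    kernel /= kernel.sum()
    padded = np.pad(patch, radius, mode="edge")
    rows = np.apply_along_axis(lambda r: np.convolve(r, kernel, mode="valid"), 1, padded)
    return np.apply_along_axis(lambda c: np.convolve(c, kernel, mode="valid"), 0, rows)


def t_block(patch: np.ndarray, n_bins: int = 4) -> np.ndarray:
    """T-block (assumed form): gradient magnitude placed in a hard angle bin."""
    gy, gx = np.gradient(patch)
    magnitude = np.hypot(gx, gy)
    angle = np.arctan2(gy, gx) % (2 * np.pi)
    bins = np.floor(angle / (2 * np.pi) * n_bins).astype(int) % n_bins
    out = np.zeros((n_bins,) + patch.shape)
    rows, cols = np.indices(patch.shape)
    out[bins, rows, cols] = magnitude
    return out


def s_block(features: np.ndarray, grid: int = 2) -> np.ndarray:
    """S-block (assumed form): sum pooling over a grid x grid layout of cells."""
    k, h, w = features.shape
    cropped = features[:, : h - h % grid, : w - w % grid]
    cells = cropped.reshape(k, grid, h // grid, grid, w // grid)
    return cells.sum(axis=(2, 4)).ravel()


def n_block(vec: np.ndarray, eps: float = 1e-12) -> np.ndarray:
    """N-block (assumed form): scale the descriptor to unit Euclidean length."""
    return vec / (np.linalg.norm(vec) + eps)


def describe(patch: np.ndarray, sigma: float = 1.0, n_bins: int = 4, grid: int = 2) -> np.ndarray:
    """Chain the four blocks into one descriptor vector."""
    smoothed = g_block(patch.astype(float), sigma)
    return n_block(s_block(t_block(smoothed, n_bins), grid))


patch = np.random.default_rng(0).random((16, 16))
descriptor = describe(patch)
```

With these defaults a 16 x 16 patch yields a vector of 4 bins x 2 x 2 cells = 16 dimensions. Because gradients discard a constant intensity offset and the final normalization discards a gain, `describe(2 * patch + 10)` gives nearly the same vector as `describe(patch)`.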

In general, the T-block stage extracts useful features from the data, such as edge or local frequency information, and the S-block stage pools these features locally to make the representation insensitive to positional shift. These stages are similar to the simple/complex cells in the human visual cortex [36]. It is important that the T-block stage introduces some nonlinearity; otherwise, the smoothing step amounts to simply blurring the image. Also, the N-block normalization is critical, as many factors such as lighting, reflectance, and camera response have a large effect on the actual pixel values.
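
The need for a nonlinearity can be checked numerically in one dimension. In this sketch, which assumes NumPy, a two-tap derivative filter stands in for a purely linear T-block, a box filter stands in for the pooling, and an absolute value stands in for rectification. These are illustrative choices only.

```python
import numpy as np

signal = np.array([0, 1, 0, 1, 0, 1, 0, 1, 0], dtype=float)  # fine texture
derivative = np.array([1.0, -1.0])  # linear "T-block" stand-in
pool = np.ones(4) / 4               # linear pooling (box blur) stand-in

response = np.convolve(signal, derivative, mode="valid")

# A linear T-block followed by pooling equals one combined linear filter,
# so the two steps together are just another blur of the input.
two_step = np.convolve(response, pool, mode="valid")
one_step = np.convolve(signal, np.convolve(derivative, pool), mode="valid")

# Rectifying before pooling keeps the texture energy that linear pooling cancels.
rectified = np.convolve(np.abs(response), pool, mode="valid")
```

Here `two_step` and `one_step` agree and are zero everywhere, since the alternating derivative responses cancel inside each pooling window, while `rectified` is one everywhere and so still signals the presence of texture.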

These processing stages have been combined into three different pipelines, as shown in Fig. 3. Each stage has trainable parameters, which are learned using our ground truth data set of match/nonmatch pairs. In the remainder of this section, we take a more detailed look at the parametrization of each of these building blocks.

3.1 Presmoothing (G-Block)

We smooth the image pixels using a Gaussian kernel of standard deviation $\sigma_s$ as a preprocessing stage to allow the descriptor to adapt to an appropriate scale relative to the

BROWN ET AL.: DISCRIMINATIVE LEARNING OF LOCAL IMAGE DESCRIPTORS 45

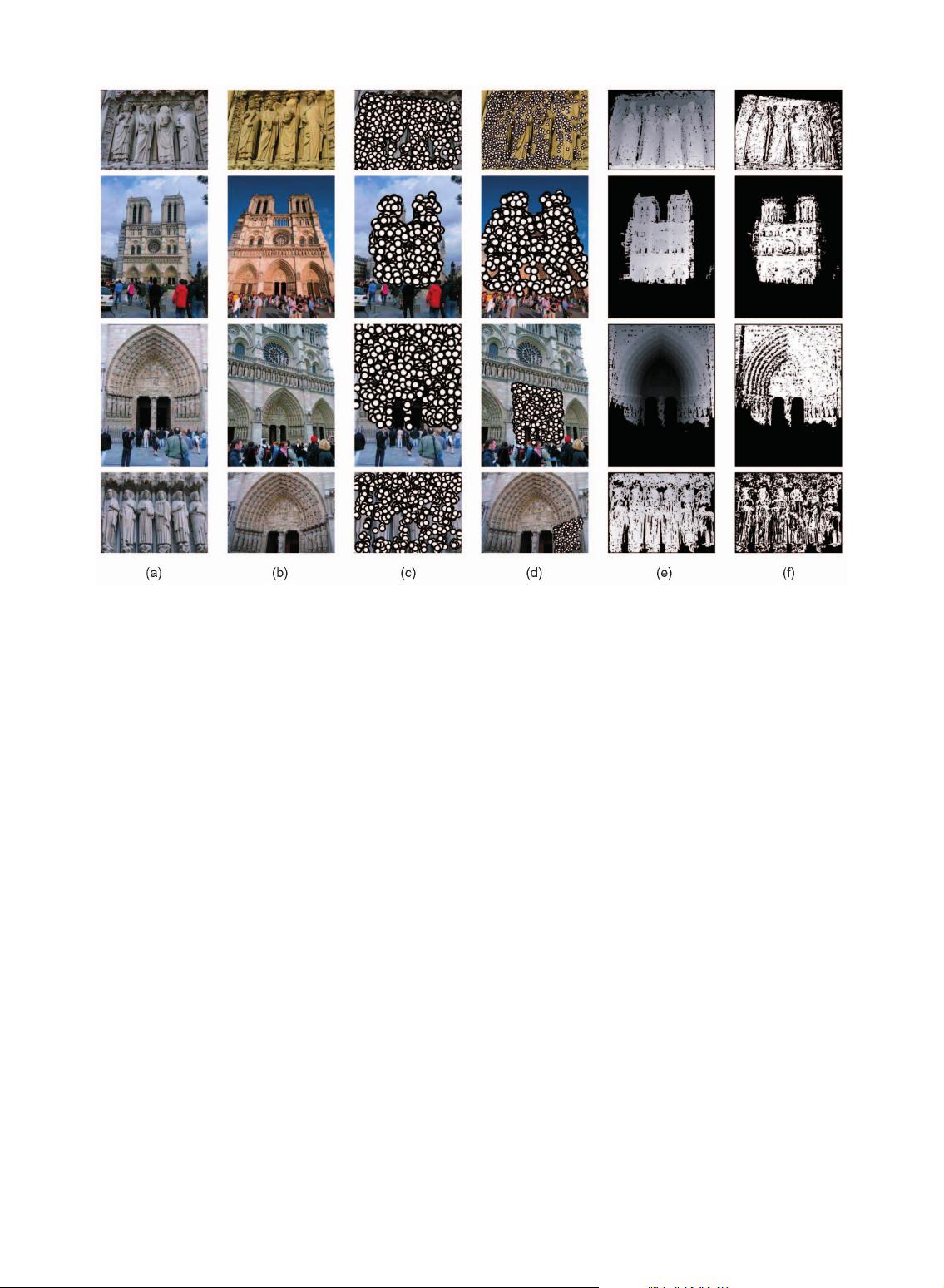

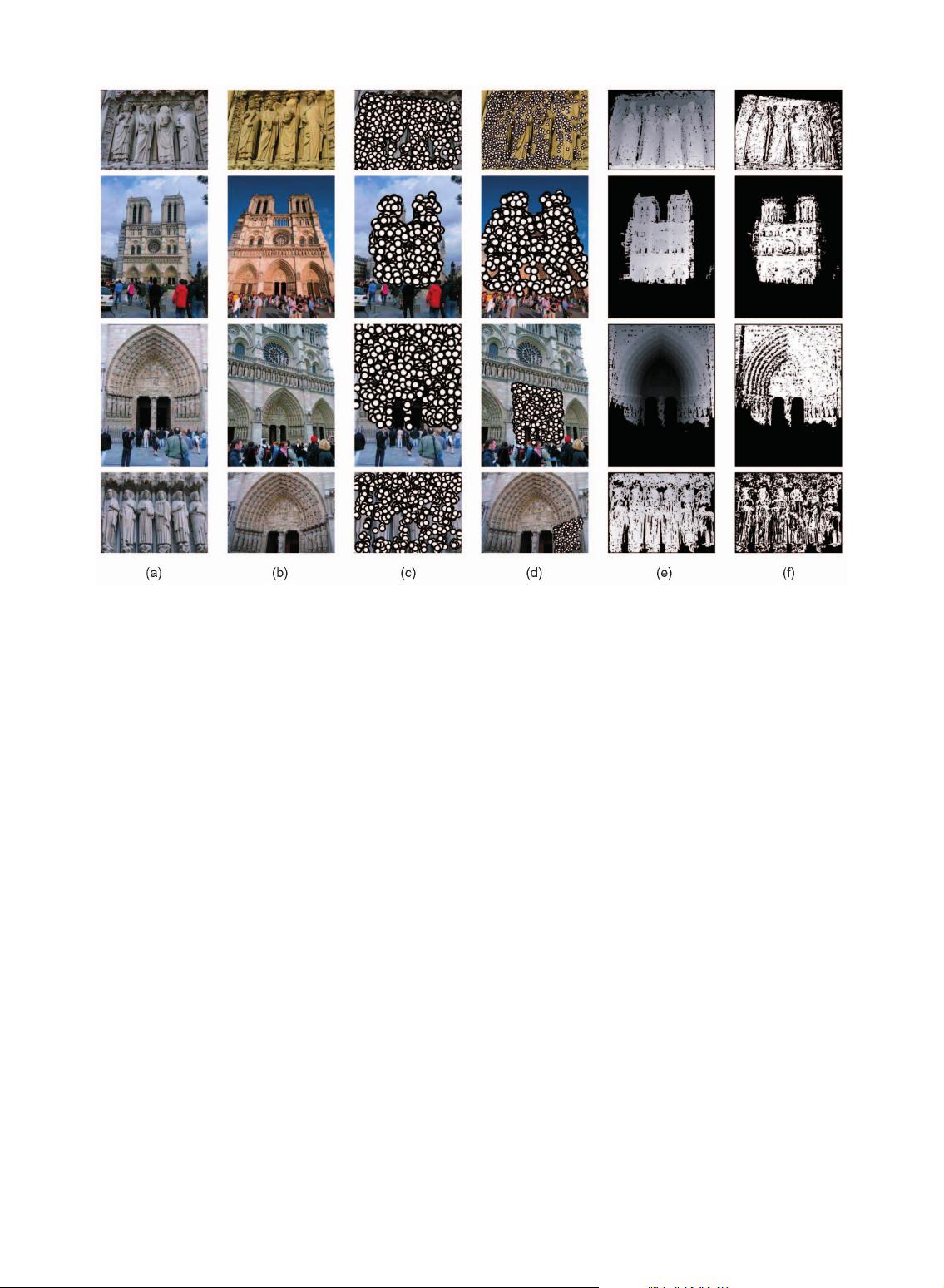

Fig. 1. Generating ground truth correspondences. To generate the ground truth image correspondences needed as input to our algorithms, we use multiview stereo data provided by Goesele et al. [30]. Interest points are detected in the reference image and transferred to each neighboring image via the depth map. If the projected point is visible, we look for interest points within a specified range of position, orientation, and scale, and declare these to be matches. Points lying outside of twice this range are declared to be nonmatches. This is the basic input to our learning algorithms. (a)-(f) Reference image, neighbor image, reference matches, neighbor matches, depth map, and visibility map, respectively.