8 I. Oguz et al.

but it is uncertain using these measures whether this is driven by a higher successful detection rate, more false alarms, more split lesions, or other reasons.

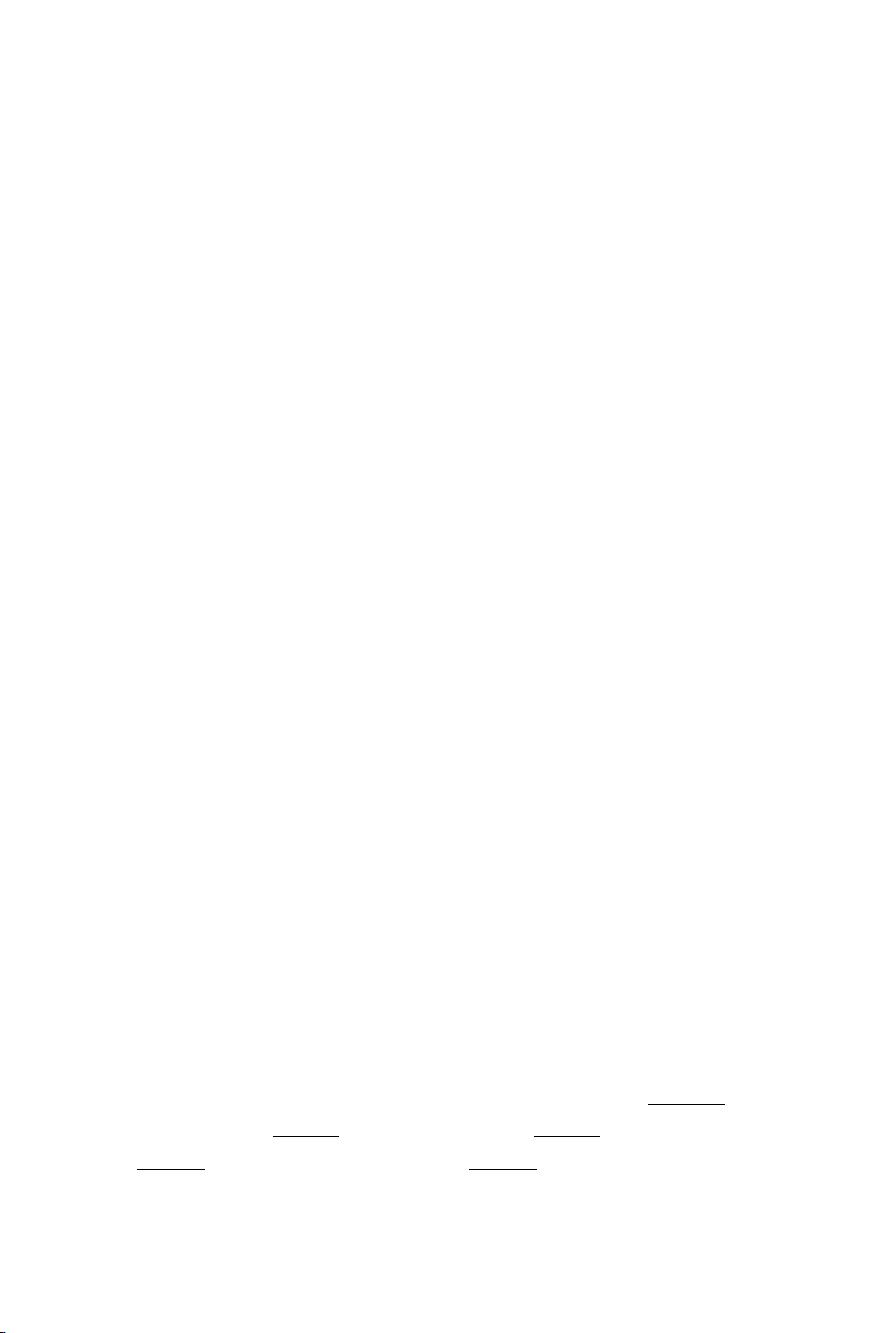

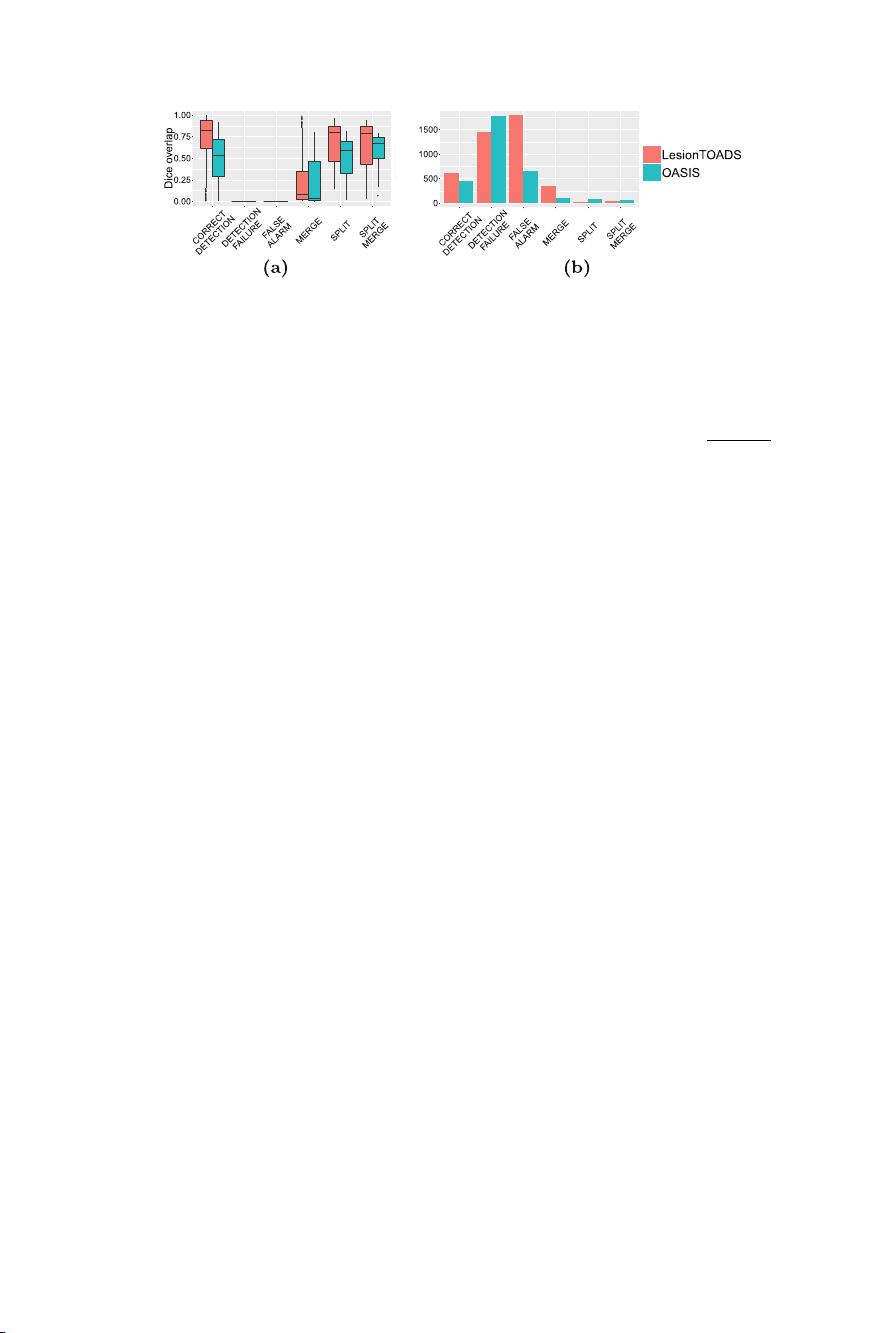

Figure 2(a) shows the per-lesion Dice overlap, summarized per class. Note that the Dice for the detection failures and false alarms is 0 by construction. The LesionTOADS algorithm has a higher Dice than OASIS in every category, which is rather surprising given that the whole-image Dice measures are nearly equal between the two methods (see Fig. 1). Figure 2(b) shows the number of lesions in each of the classes described in Sect. 2. Compared to overall lesion counts, this analysis provides further insight into the algorithms' behavior: relative to OASIS, LesionTOADS has a larger number of correctly detected lesions (good) and fewer detection failures (good), but also more merges (bad) and many more false alarms (bad). As such, it is difficult to declare an overall “winner”; rather, each algorithm is “winning” for different classes of lesions.
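To make the per-lesion bookkeeping concrete, the following is a minimal sketch of how a per-lesion Dice and a class label could be computed on small 2D binary masks stored as sets of pixel coordinates. The class rules used here are an assumption for illustration only (the actual definitions are those of Sect. 2), as are the function names `components`, `dice`, and `per_lesion_report`, the 4-connectivity, and the choice to score each true lesion against the union of the predicted components that touch it.

```python
from collections import deque

def components(pixels):
    """Split a set of (row, col) pixels into 4-connected components."""
    remaining = set(pixels)
    comps = []
    while remaining:
        seed = remaining.pop()
        comp, queue = {seed}, deque([seed])
        while queue:
            r, c = queue.popleft()
            for nb in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
                if nb in remaining:
                    remaining.remove(nb)
                    comp.add(nb)
                    queue.append(nb)
        comps.append(frozenset(comp))
    return comps

def dice(a, b):
    """Dice overlap 2|A & B| / (|A| + |B|) of two pixel sets."""
    total = len(a) + len(b)
    return 2 * len(a & b) / total if total else 1.0

def per_lesion_report(truth, pred):
    """Return (class_name, size, dice) tuples, one per lesion.

    ASSUMED class rules (illustrative, not taken from the paper):
    size is the true lesion size, or the predicted size for false alarms.
    """
    t_comps, p_comps = components(truth), components(pred)
    names = {(False, False): "correct", (True, False): "merge",
             (False, True): "split", (True, True): "split-merge"}
    report = []
    for t in t_comps:
        hits = [p for p in p_comps if p & t]
        if not hits:
            # No predicted component touches this lesion: Dice is 0.
            report.append(("detection failure", len(t), 0.0))
            continue
        # A hit that also touches another true lesion indicates a merge.
        merged = any(sum(1 for u in t_comps if u & p) > 1 for p in hits)
        split = len(hits) > 1
        union = frozenset().union(*hits)
        report.append((names[(merged, split)], len(t), dice(t, union)))
    for p in p_comps:
        if not any(p & t for t in t_comps):
            # Predicted component with no true counterpart: Dice is 0.
            report.append(("false alarm", len(p), 0.0))
    return report
```

Counting the tuples per class name gives the kind of tally shown in a per-class bar chart, and averaging the Dice values per class gives the per-class summary. Real volumes would normally use a 3D connected-component routine from an imaging library rather than this pure-Python search.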

Figure 3(a) shows the per-lesion Dice overlap as a function of true lesion size. On average, LesionTOADS seems to perform better than OASIS for small and large lesions, whereas OASIS performs better for the more prevalent medium-sized lesions. It is also interesting that in this medium-size range, OASIS appears to have a tighter distribution of Dice scores whereas LesionTOADS performs either very well (Dice > 0.8) or very poorly (Dice < 0.2). Figures 3(c) and (d) provide additional insight by breaking down this data into individual classes.
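The size-dependent view can be approximated numerically by binning per-lesion Dice scores by true lesion size and reporting both the mean and the spread in each bin. The sketch below is only illustrative: the function name `dice_by_size`, the bin edges, and the sample values are hypothetical and are not taken from the experiments reported here.

```python
from bisect import bisect_right
from statistics import mean, pstdev

def dice_by_size(records, edges):
    """Summarize (true_size, dice) pairs per size bin.

    `edges` are ascending lower bounds; bin i covers
    edges[i] <= size < edges[i + 1], and the last bin is open-ended.
    Returns (lower_bound, count, mean_dice, std_dice) per bin, with
    None for the statistics of an empty bin.
    """
    bins = [[] for _ in edges]
    for size, d in records:
        i = bisect_right(edges, size) - 1
        if i >= 0:  # sizes below the first edge are ignored
            bins[i].append(d)
    return [
        (lo, len(b), mean(b) if b else None, pstdev(b) if b else None)
        for lo, b in zip(edges, bins)
    ]

# Hypothetical scores: the middle bin mixes very good and very poor
# lesions, so its mean hides a wide spread.
summary = dice_by_size(
    [(5, 0.2), (8, 0.4), (50, 0.9), (60, 0.1), (500, 0.8)],
    edges=[1, 10, 100],
)
```

Reporting the standard deviation alongside the mean matters here: two methods can have similar average Dice in a size range while one is tightly clustered and the other is split between very high and very low scores.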

Figure 4 takes this analysis one step further by directly comparing the algorithms’ behaviors in each class. Figure 4(a) shows this comparison for the correctly detected lesions. We note that the average performance of the LesionTOADS algorithm increases steeply with size in this class, indicating that, for those lesions that it manages to detect correctly, the algorithm is highly accurate for all but the smallest lesions (which are notoriously difficult to segment correctly). In contrast, OASIS performance improves more slowly with lesion size. Figure 4(b) compares the two methods for merged lesions; while the performance of the two algorithms is roughly comparable and both improve with lesion size, there are overall fewer merged lesions for OASIS, which is desirable. Figures 4(c) and (d) provide the same comparison for split and split-merge classes, respectively.

For false alarms and detection failures, instead of scatterplots, we present spatial distribution maps and size histograms of lesions. Figure 5 shows the detection failures for the two algorithms. It is interesting that the distribution of these failures is remarkably similar between the two algorithms for smaller lesions, suggesting many of these smaller lesions may be generally difficult to detect. The spatial distributions of these detection failures concentrate on the septum area for both methods. OASIS has an additional hotspot for detection failures near the temporal horn of the ventricles.

Figure 6 compares the false alarms for the two algorithms. LesionTOADS appears to generate hardly any small false positive lesions, but many medium-to-large false positive lesions. This rather surprising finding explains the counterintuitive result that while LesionTOADS reports better Dice overlap for each lesion category (Fig. 2), the whole-image Dice scores are nearly identical between the two methods (see Fig. 1). We note that the large number of false alarms reported
