x

o

Linear SVM

Smiling

Wavy Hair

No Beard

High Cheekbones

h

…

…

x

s

x

f

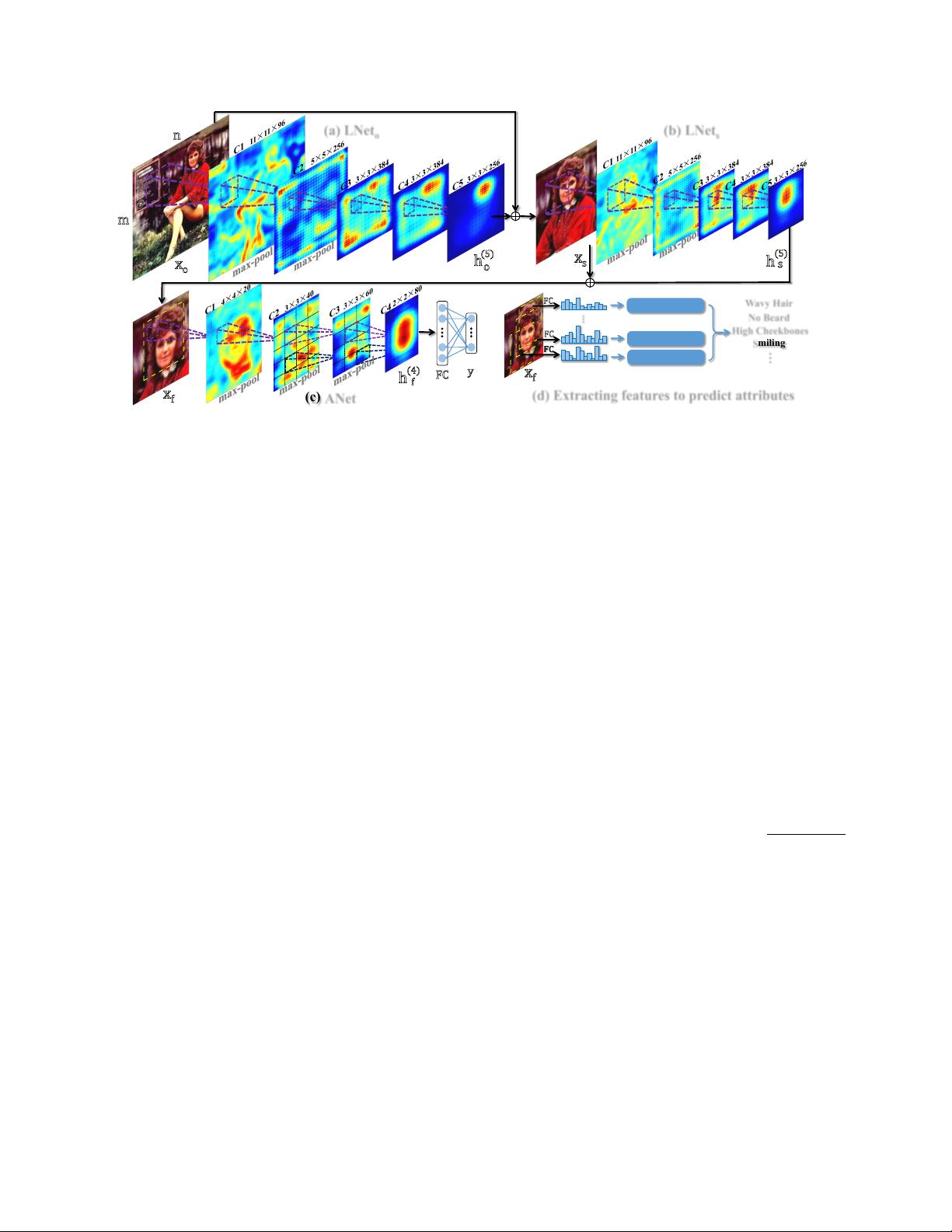

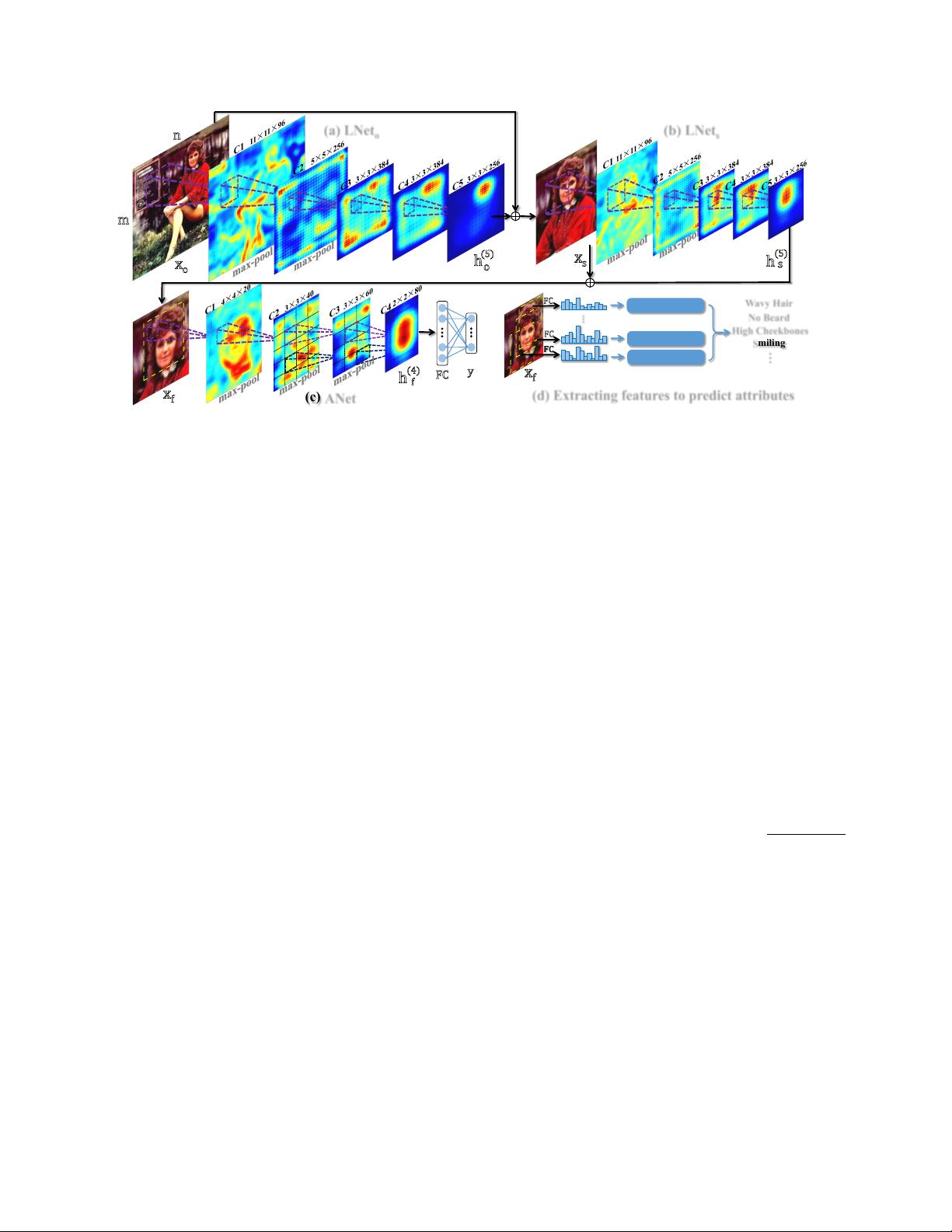

(a) LNet

o

(b) LNet

s

(c) ANet

(d) Extracting features to predict attributes

m

n

o

(5)

s

(5)

h

f

(4)

h

FC

FC

FC

FC

y

Linear SVM

Linear SVM

x

f

Figure 2. The proposed pipeline of attribute prediction (Best viewed in color)

accuracy of face localization. Both LNet

o

and LNet

s

have

network structures similar to AlexNet [13], whose hyper

parameters are specified in Fig.2 (a) and (b) respectively.

The fifth convolutional layer (C5) of LNet

o

indicates head-

shoulders while C5 of LNet

s

indicates faces, with their

highly responsed regions in their averaged response maps.

Moreover, the input x

o

of LNet

o

is a m × n image, while

the input x

s

of LNet

s

is the head-shoulder region, which is

localized by LNet

o

and resized to 227 × 227.

As illustrated in Fig.2 (c), ANet is learned to predict

attributes y by providing the input face region x

f

, which is

detected by LNet

s

and properly resized. Specifically, multi-

view versions [13] of x

f

are utilized to train ANet. Further-

more, ANet contains four convolutional layers, where the

filters of C1 and C2 are globally shared and the filters of C3

and C4 are locally shared. The effectiveness of local filters

have been demonstrated in many face related tasks [26, 28].

To handle complex face variations, ANet is pre-trained by

distinguishing massive face identities, which facilitates the

learning of discriminative features.

Fig.2 (d) outlines the procedure of attribute recognition.

ANet extracts a set of feature vectors (FCs) by cropping

overlapping patches on x

f

. An efficient feed-forward

algorithm is developed to reduce redundant computation

in the feature extraction stage. SVMs [8] are trained to

predict attribute values given each FC. The final prediction

is obtained by averaging all these values, to cope with small

misalignment of face localization.

2.1. Face Localization

The cascade of LNet

o

and LNet

s

accurately localizes

face regions by being trained on image-level attribute tags.

Pre-training LNet Both LNet

o

and LNet

s

are pre-

trained with 1, 000 general object categories from the

ImageNet Large Scale Visual Recognition Challenge

(ILSVRC) 2012 [6], containing 1.2 million training images

and 50 thousands validation images. All the data is

employed for pre-training except one third of the validation

data for choosing hyper-parameters [13]. We augment

data by cropping ten patches from each image, including

one patch at the center and four at the corners, and their

horizontal flips. We adopt softmax for object classification,

which is optimized by stochastic gradient descent (SGD)

with back-propagation (BP) [16]. As shown in Fig.3

(a.2), the averaged response map in C5 of LNet

o

already

indicates locations of objects including human faces after

pre-training.

Fine-tuning LNet Both LNet

o

and LNet

s

are fine-tuned

with attribute tags. Additional output layers are added to

the LNets individually for fine-tuning and then removed for

evaluation. LNet

o

adopts the full image x

o

as input while

LNet

s

uses the highly responsed region x

s

in the averaged

response map in C5 of LNet

o

as input, which roughly re-

spond to head-shoulders. The cross-entropy loss is used for

attribute classification, i.e. L =

P

i=1

y

i

log p(y

i

|x)+(1−

y

i

) log

1 − p(y

i

|x)

, where p(y

i

= 1|x) =

1

1+exp(−f(x))

is the probability of the i-th attribute given image x. As

shown in Fig.3 (a.3), the response maps after fine-tuning

become much more clean and smooth, indicating that the

filters learned by attribute tags can detect face patterns with

complex variations. To appreciate the effectiveness of pre-

training, we also include the averaged response map in C5

of being directly trained from scratch with attribute tags but

without pre-training in Fig.3 (a.4). It cannot separate face

regions from background and other body parts well.

Thresholding and Proposing Windows We show that

the responses of C5 in LNet are discriminative enough

to separate faces and background by simply searching a

threshold, such that a window with response larger than

this threshold corresponding to face and otherwise is back-

我的内容管理

展开

我的内容管理

展开

我的资源

快来上传第一个资源

我的资源

快来上传第一个资源

我的收益 登录查看自己的收益

我的收益 登录查看自己的收益 我的积分

登录查看自己的积分

我的积分

登录查看自己的积分

我的C币

登录后查看C币余额

我的C币

登录后查看C币余额

我的收藏

我的收藏  我的下载

我的下载  下载帮助

下载帮助

信息提交成功

信息提交成功