
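Java NIO 1.4 New Features Explained: The Key to Improving Efficiency and Performance

Java NIO (New I/O) is a major innovation introduced in version 1.4 of the Java language. This English-language book was published by O'Reilly in August 2002 and runs to 312 pages. It examines the typical challenges Java programmers face when handling I/O (input/output) and shows how to make full use of the I/O features new in 1.4 to write more efficient code. The author stresses that the NIO APIs do not fully replace the 1.3 I/O APIs but complement them. Readers therefore learn when to choose the new APIs and when the older 1.3 I/O APIs are a better fit for a particular application.

Chapter 1, "Introduction," first examines the relationship between I/O operations and CPU time. It points out that in earlier releases, I/O rather than computation was often what limited program performance. The author then emphasizes that the goal of Java NIO is to keep I/O from becoming the bottleneck, which improves the responsiveness, scalability, and reliability of programs. The chapter also covers basic I/O concepts to help readers understand the core principles behind NIO.

Through practical cases and examples, the book shows readers how to use the NIO APIs to solve common I/O problems such as non-blocking I/O, buffer management, and multiplexing. All of these bear directly on performance tuning. Because NIO complements rather than replaces the 1.3 APIs, readers also learn when to adopt the new I/O approach and where the older APIs still have the advantage.

Overall, this is a practical guide and a useful reference for developers who want to program Java more efficiently, especially those building I/O-intensive applications. Readers will learn about NIO itself and also how to apply its new features flexibly in real projects to improve code quality and overall system performance.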


Java NIO


1.3 Getting to the Good Stuff

Most of the development effort that goes into operating systems is targeted at improving I/O performance. Lots of very smart people toil very long hours perfecting techniques for schlepping data back and forth. Operating-system vendors expend vast amounts of time and money seeking a competitive advantage by beating the other guys in this or that published benchmark.

Today's operating systems are modern marvels of software engineering (OK, some are more marvelous than others), but how can the Java programmer take advantage of all this wizardry and still remain platform-independent? Ah, yet another example of the TANSTAAFL principle.[1]

The JVM is a double-edged sword. It provides a uniform operating environment that shelters the Java programmer from most of the annoying differences between operating-system environments. This makes it faster and easier to write code because platform-specific idiosyncrasies are mostly hidden. But cloaking the specifics of the operating system means that the jazzy, whiz-bang stuff is invisible too.

What to do? If you're a developer, you could write some native code using the Java Native Interface (JNI) to access the operating-system features directly. Doing so ties you to a specific operating system (and maybe a specific version of that operating system). It also exposes the JVM to corruption or crashes if your native code is not 100% bug-free. If you're an operating-system vendor, you could write native code and ship it with your JVM implementation to provide these features as a Java API. But doing so might violate the license you signed to provide a conforming JVM. Sun took Microsoft to court about this over the JDirect package, which, of course, worked only on Microsoft systems. Or, as a last resort, you could turn to another language to implement performance-critical applications.

The java.nio package provides new abstractions to address this problem. The Channel and Selector classes in particular provide generic APIs to I/O services that were not reachable prior to JDK 1.4. The TANSTAAFL principle still applies: you won't be able to access every feature of every operating system. However, these new classes provide a powerful new framework that encompasses the high-performance I/O features commonly available on commercial operating systems today. Additionally, a new Service Provider Interface (SPI) is provided in java.nio.channels.spi that allows you to plug in new types of channels and selectors without violating compliance with the specifications.
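The following is a minimal sketch of how Channel, Selector, and the SPI fit together. It is written against the current java.nio API rather than taken from the book, and the class and method names are hypothetical. First it checks that Selector.open() obtains its selector from the system-wide default SelectorProvider. Then it uses that provider to open a Pipe and a Selector and asks the selector how many channels are ready to read.

```java
import java.io.IOException;
import java.io.UncheckedIOException;
import java.nio.ByteBuffer;
import java.nio.channels.Pipe;
import java.nio.channels.SelectionKey;
import java.nio.channels.Selector;
import java.nio.channels.spi.SelectorProvider;

// Hypothetical demo class; not from the book.
class ProviderDemo {

    // Selector.open() delegates to the default provider's openSelector(),
    // so the selector reports that provider as its creator.
    static boolean selectorUsesDefaultProvider() {
        try (Selector selector = Selector.open()) {
            return selector.provider() == SelectorProvider.provider();
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
    }

    // Opens a selector and a pipe from the same provider, registers the pipe's
    // read end for OP_READ, writes one byte into the write end, and returns the
    // number of keys the selector reports as ready.
    static int readyCountAfterOneByte() {
        SelectorProvider provider = SelectorProvider.provider();
        try (Selector selector = provider.openSelector()) {
            Pipe pipe = provider.openPipe();
            try (Pipe.SourceChannel source = pipe.source();
                 Pipe.SinkChannel sink = pipe.sink()) {
                source.configureBlocking(false); // required before register()
                source.register(selector, SelectionKey.OP_READ);
                sink.write(ByteBuffer.wrap(new byte[] {42}));
                return selector.select(1000); // wait at most one second
            }
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
    }

    public static void main(String[] args) {
        System.out.println(selectorUsesDefaultProvider());
        System.out.println(readyCountAfterOneByte());
    }
}
```

The provider is pluggable through java.nio.channels.spi, for example via the java.nio.channels.spi.SelectorProvider system property. Substituting a different provider would change where these objects come from without changing the calling code.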

With the addition of NIO, Java is ready for serious business, entertainment, scientific, and academic applications in which high-performance I/O is essential.

The JDK 1.4 release contains many other significant improvements in addition to NIO. As of 1.4, the Java platform has reached a high level of maturity, and there are few application areas remaining that Java cannot tackle. A great guide to the full spectrum of JDK features in 1.4 is Java In A Nutshell, Fourth Edition by David Flanagan (O'Reilly).

[1] There Ain't No Such Thing As A Free Lunch.


1.4 I/O Concepts

The Java platform provides a rich set of I/O metaphors. Some of these metaphors are more abstract than others. As with all abstractions, the further you get from hard, cold reality, the tougher it becomes to connect cause and effect. The NIO packages of JDK 1.4 introduce a new set of abstractions for doing I/O. Unlike previous packages, these are focused on shortening the distance between abstraction and reality. The NIO abstractions have very real and direct interactions with real-world entities. Understanding these new abstractions and, just as importantly, the I/O services they interact with, is key to making the most of I/O-intensive Java applications.

This book assumes that you are familiar with basic I/O concepts. This section provides a whirlwind review of some basic ideas just to lay the groundwork for the discussion of how the new NIO classes operate. These classes model I/O functions, so it's necessary to grasp how things work at the operating-system level to understand the new I/O paradigms.

In the main body of this book, it's important to understand the following topics:

• Buffer handling

• Kernel versus user space

• Virtual memory

• Paging

• File-oriented versus stream I/O

• Multiplexed I/O (readiness selection)

1.4.1 Buffer Handling

Buffers, and how buffers are handled, are the basis of all I/O. The very term "input/output" means nothing more than moving data in and out of buffers.

Processes perform I/O by requesting of the operating system that data be drained from a buffer (write) or that a buffer be filled with data (read). That's really all it boils down to. All data moves in or out of a process by this mechanism. The machinery inside the operating system that performs these transfers can be incredibly complex, but conceptually, it's very straightforward.
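The fill/drain vocabulary maps directly onto java.nio buffers. Here is a minimal sketch using a FileChannel on a temporary file; the class name and file prefix are hypothetical. Writing drains the first buffer into the channel. Reading fills a second buffer, and flip() switches that buffer from being filled to being drained by the Java code.

```java
import java.io.IOException;
import java.io.UncheckedIOException;
import java.nio.ByteBuffer;
import java.nio.channels.FileChannel;
import java.nio.charset.StandardCharsets;
import java.nio.file.Files;
import java.nio.file.Path;
import java.nio.file.StandardOpenOption;

// Hypothetical demo class; not from the book.
class FillDrainDemo {

    // Writes text to a file by draining a buffer, then reads it back by
    // filling a second buffer.
    static String roundTrip(String text) {
        try {
            Path file = Files.createTempFile("fill-drain", ".txt");
            try {
                // Drain: bytes between position and limit go to the channel.
                ByteBuffer out = ByteBuffer.wrap(text.getBytes(StandardCharsets.UTF_8));
                try (FileChannel ch = FileChannel.open(file, StandardOpenOption.WRITE)) {
                    while (out.hasRemaining()) {
                        ch.write(out);
                    }
                }
                // Fill: the channel copies bytes into the buffer at its position.
                ByteBuffer in = ByteBuffer.allocate((int) Files.size(file));
                try (FileChannel ch = FileChannel.open(file, StandardOpenOption.READ)) {
                    while (in.hasRemaining() && ch.read(in) != -1) {
                        // keep filling until the buffer is full or EOF
                    }
                }
                in.flip(); // switch from filling to draining
                return StandardCharsets.UTF_8.decode(in).toString();
            } finally {
                Files.deleteIfExists(file);
            }
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
    }
}
```

The loops are there because the channel contract allows a single read() to transfer fewer bytes than the buffer can hold. For some channel types, a single write() may also transfer less than requested.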

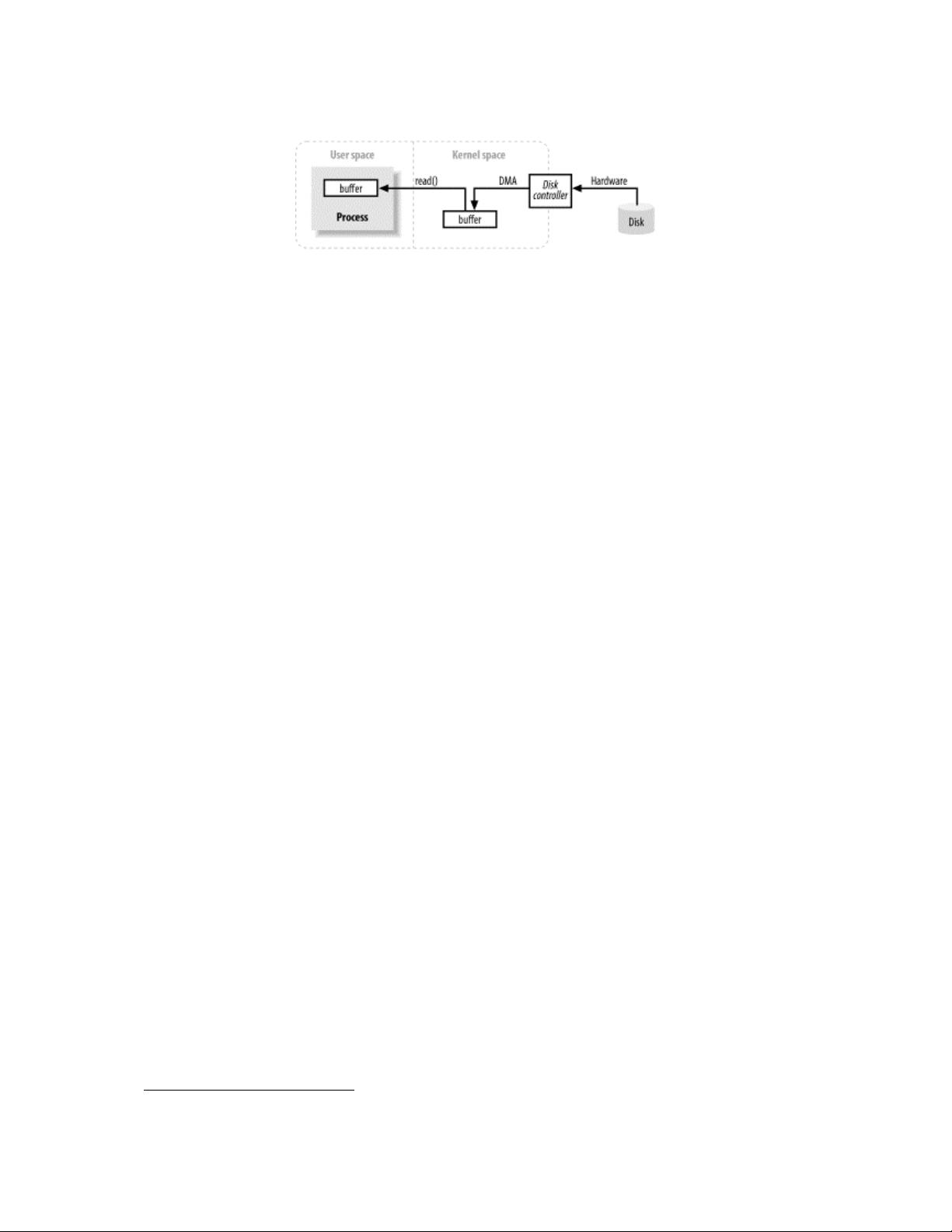

Figure 1-1 shows a simplified logical diagram of how block data moves from an external source, such as a disk, to a memory area inside a running process. The process requests that its buffer be filled by making the read() system call. This results in the kernel issuing a command to the disk controller hardware to fetch the data from disk. The disk controller writes the data directly into a kernel memory buffer by DMA without further assistance from the main CPU. Once the disk controller finishes filling the buffer, the kernel copies the data from the temporary buffer in kernel space to the buffer specified by the process when it requested the read() operation.


Figure 1-1. Simplified I/O buffer handling

This obviously glosses over a lot of details, but it shows the basic steps involved.
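Java code cannot see the kernel-to-user copy in Figure 1-1, but it can influence copies on the JVM side. The ByteBuffer documentation says that, given a direct buffer, the JVM will make a best effort to perform native I/O directly on it and avoid an intermediate buffer. Whether a heap buffer incurs such a copy is an implementation detail. The minimal sketch below writes bytes to a temporary file and reads them back into a direct buffer; the class and method names are hypothetical.

```java
import java.io.IOException;
import java.io.UncheckedIOException;
import java.nio.ByteBuffer;
import java.nio.channels.FileChannel;
import java.nio.file.Files;
import java.nio.file.Path;
import java.nio.file.StandardOpenOption;

// Hypothetical demo class; not from the book.
class DirectReadDemo {

    // Writes `data` to a temp file, then reads the whole file into a direct buffer.
    static byte[] roundTripDirect(byte[] data) {
        try {
            Path file = Files.createTempFile("direct-read", ".bin");
            try {
                Files.write(file, data);
                try (FileChannel ch = FileChannel.open(file, StandardOpenOption.READ)) {
                    // A direct buffer's contents may reside outside the GC heap.
                    ByteBuffer buf = ByteBuffer.allocateDirect((int) ch.size());
                    // Each read() asks the OS to copy more file data into buf.
                    while (buf.hasRemaining() && ch.read(buf) != -1) {
                        // keep reading until the buffer is full or EOF
                    }
                    buf.flip();
                    byte[] result = new byte[buf.remaining()];
                    buf.get(result);
                    return result;
                }
            } finally {
                Files.deleteIfExists(file);
            }
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
    }
}
```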

Note the concepts of user space and kernel space in Figure 1-1. User space is where regular processes live. The JVM is a regular process and dwells in user space. User space is a nonprivileged area: code executing there cannot directly access hardware devices, for example. Kernel space is where the operating system lives. Kernel code has special privileges: it can communicate with device controllers, manipulate the state of processes in user space, etc. Most importantly, all I/O flows through kernel space, either directly (as described here) or indirectly (see Section 1.4.2).

When a process requests an I/O operation, it performs a system call, sometimes known as a trap, which transfers control into the kernel. The low-level open(), read(), write(), and close() functions so familiar to C/C++ coders do nothing more than set up and perform the appropriate system calls. When the kernel is called in this way, it takes whatever steps are necessary to find the data the process is requesting and transfer it into the specified buffer in user space. The kernel tries to cache and/or prefetch data, so the data being requested by the process may already be available in kernel space. If so, the data requested by the process is copied out. If the data isn't available, the process is suspended while the kernel goes about bringing the data into memory.

Looking at Figure 1-1, it's probably occurred to you that copying from kernel space to the final user buffer seems like extra work. Why not tell the disk controller to send it directly to the buffer in user space? There are a couple of problems with this. First, hardware is usually not able to access user space directly.[2] Second, block-oriented hardware devices such as disk controllers operate on fixed-size data blocks. The user process may be requesting an oddly sized or misaligned chunk of data. The kernel plays the role of intermediary, breaking down and reassembling data as it moves between user space and storage devices.
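A bit of arithmetic illustrates the kernel's intermediary role: given a request's byte offset and length, which fixed-size device blocks must be transferred? The sketch below assumes the 512-byte sector size mentioned in Section 1.4.2 and uses hypothetical names. It models only the block math, not what a real kernel does with its caches.

```java
// Hypothetical helper; models block arithmetic only.
class BlockMath {
    // Assumed device block (sector) size in bytes.
    static final long BLOCK_SIZE = 512;

    // Index of the first block touched by a request starting at `offset`.
    static long firstBlock(long offset) {
        return offset / BLOCK_SIZE;
    }

    // Index of the last block touched by a request of `length` bytes (length > 0).
    static long lastBlock(long offset, long length) {
        return (offset + length - 1) / BLOCK_SIZE;
    }

    // Whole-block bytes the device must move to satisfy the request.
    static long bytesFromDevice(long offset, long length) {
        if (offset < 0 || length <= 0) {
            throw new IllegalArgumentException("need offset >= 0 and length > 0");
        }
        return (lastBlock(offset, length) - firstBlock(offset) + 1) * BLOCK_SIZE;
    }

    public static void main(String[] args) {
        long offset = 1000, length = 100;
        System.out.printf("blocks %d..%d, %d bytes from the device for %d requested%n",
                firstBlock(offset), lastBlock(offset, length),
                bytesFromDevice(offset, length), length);
    }
}
```

With the values in main, a 100-byte request at offset 1000 spans blocks 1 and 2. So 1,024 bytes come off the device, and the kernel copies out only the 100 the process asked for.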

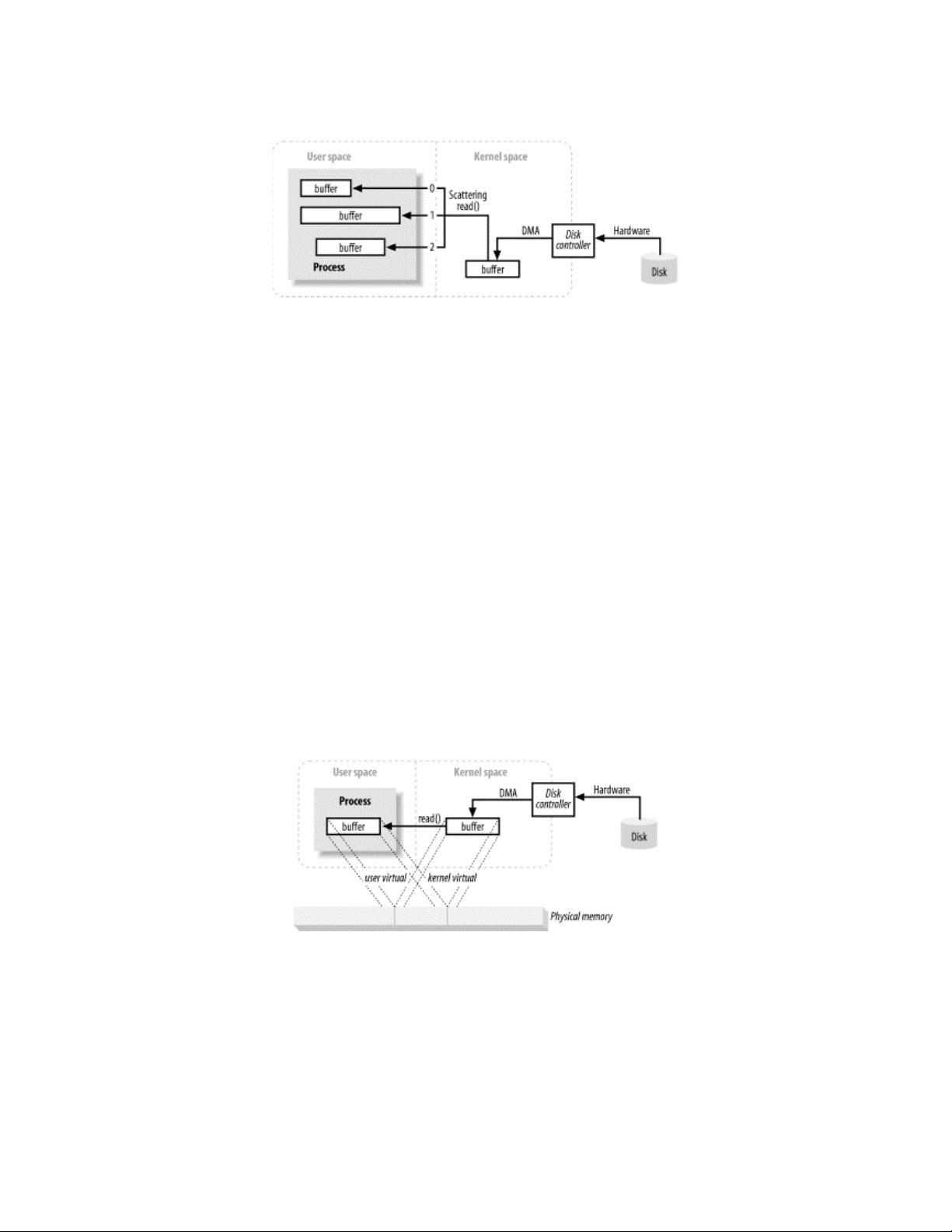

1.4.1.1 Scatter/gather

Many operating systems can make the assembly/disassembly process even more efficient. The notion of scatter/gather allows a process to pass a list of buffer addresses to the operating system in one system call. The kernel can then fill or drain the multiple buffers in sequence. It scatters the data to multiple user space buffers on a read, or gathers it from several buffers on a write (Figure 1-2).

[2] There are many reasons for this, all of which are beyond the scope of this book. Hardware devices usually cannot directly use virtual memory addresses.


Figure 1-2. A scattering read to three buffers

This saves the user process from making several system calls (which can be expensive). It also allows the kernel to optimize handling of the data because it has information about the total transfer. If multiple CPUs are available, it may even be possible to fill or drain several buffers simultaneously.
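In java.nio, FileChannel implements the GatheringByteChannel and ScatteringByteChannel interfaces. Their write(ByteBuffer[]) and read(ByteBuffer[]) methods each take an array of buffers in a single call. The minimal sketch below gathers a header and a body into one file, then scatters the file back into two buffers sized to match; the class name is hypothetical. Whether the JVM turns each call into a single vectored system call depends on the implementation and platform. The API only promises that buffers are drained or filled in array order.

```java
import java.io.IOException;
import java.io.UncheckedIOException;
import java.nio.ByteBuffer;
import java.nio.channels.FileChannel;
import java.nio.charset.StandardCharsets;
import java.nio.file.Files;
import java.nio.file.Path;
import java.nio.file.StandardOpenOption;

// Hypothetical demo class; not from the book.
class ScatterGatherDemo {

    static boolean anyRemaining(ByteBuffer[] bufs) {
        for (ByteBuffer b : bufs) {
            if (b.hasRemaining()) return true;
        }
        return false;
    }

    // Gathering write of {header, body}, then scattering read into two buffers.
    static String[] gatherThenScatter(String header, String body) {
        byte[] h = header.getBytes(StandardCharsets.UTF_8);
        byte[] b = body.getBytes(StandardCharsets.UTF_8);
        try {
            Path file = Files.createTempFile("scatter-gather", ".bin");
            try {
                ByteBuffer[] out = { ByteBuffer.wrap(h), ByteBuffer.wrap(b) };
                try (FileChannel ch = FileChannel.open(file, StandardOpenOption.WRITE)) {
                    while (anyRemaining(out)) {
                        ch.write(out); // drains out[0], then out[1]
                    }
                }
                ByteBuffer[] in = { ByteBuffer.allocate(h.length), ByteBuffer.allocate(b.length) };
                try (FileChannel ch = FileChannel.open(file, StandardOpenOption.READ)) {
                    while (anyRemaining(in) && ch.read(in) != -1) {
                        // fills in[0] completely before any bytes go to in[1]
                    }
                }
                String[] result = new String[in.length];
                for (int i = 0; i < in.length; i++) {
                    in[i].flip();
                    result[i] = StandardCharsets.UTF_8.decode(in[i]).toString();
                }
                return result;
            } finally {
                Files.deleteIfExists(file);
            }
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
    }
}
```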

1.4.2 Virtual Memory

All modern operating systems make use of virtual memory. Virtual memory means that artificial, or virtual, addresses are used in place of physical (hardware RAM) memory addresses. This provides many advantages, which fall into two basic categories:

1. More than one virtual address can refer to the same physical memory location.

2. A virtual memory space can be larger than the actual hardware memory available.

The previous section said that device controllers cannot do DMA directly into user space, but the same effect is achievable by exploiting item 1 above. This works by mapping a kernel space address to the same physical address as a virtual address in user space. The DMA hardware (which can access only physical memory addresses) can then fill a buffer that is simultaneously visible to both the kernel and a user space process. (See Figure 1-3.)

Figure 1-3. Multiply mapped memory space
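Java does not expose DMA or page tables, but FileChannel.map offers a related idea at the file level. It returns a MappedByteBuffer whose memory is mapped onto the file, so writes through the buffer need no explicit write() call. The sketch below extends a temporary file to the needed size, writes through the mapping, and calls force() to flush the changes. It then reads the bytes back with an ordinary channel read; the class name is hypothetical. On mainstream operating systems the mapping typically shares physical pages with the kernel's file cache, in the spirit of Figure 1-3. The FileChannel.map documentation, however, leaves such details operating-system dependent.

```java
import java.io.IOException;
import java.io.UncheckedIOException;
import java.nio.ByteBuffer;
import java.nio.MappedByteBuffer;
import java.nio.channels.FileChannel;
import java.nio.charset.StandardCharsets;
import java.nio.file.Files;
import java.nio.file.Path;
import java.nio.file.StandardOpenOption;

// Hypothetical demo class; not from the book.
class MappedDemo {

    static String writeThroughMapping(String text) {
        byte[] data = text.getBytes(StandardCharsets.UTF_8);
        try {
            Path file = Files.createTempFile("mapped", ".bin");
            try {
                // Size the file first so the mapped region lies inside it.
                Files.write(file, new byte[data.length]);
                try (FileChannel ch = FileChannel.open(file,
                        StandardOpenOption.READ, StandardOpenOption.WRITE)) {
                    MappedByteBuffer map = ch.map(FileChannel.MapMode.READ_WRITE, 0, data.length);
                    map.put(data); // ordinary memory stores, no write() call
                    map.force();   // flush modified pages to the file

                    // Read the same bytes back through the channel (positional reads).
                    ByteBuffer check = ByteBuffer.allocate(data.length);
                    while (check.hasRemaining()) {
                        if (ch.read(check, check.position()) == -1) break;
                    }
                    check.flip();
                    return StandardCharsets.UTF_8.decode(check).toString();
                }
            } finally {
                try {
                    Files.deleteIfExists(file);
                } catch (IOException ignored) {
                    // some platforms (notably Windows) refuse to delete a still-mapped file
                }
            }
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
    }
}
```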

This is great because it eliminates copies between kernel and user space, but it requires the kernel and user buffers to share the same page alignment. Buffers must also be a multiple of the block size used by the disk controller (usually 512-byte disk sectors). Operating systems divide their memory address spaces into pages, which are fixed-size groups of bytes. These memory pages are always multiples of the disk block size and are usually powers of 2 (which simplifies addressing). Typical memory page sizes are 1,024, 2,048, and 4,096 bytes. The virtual and physical memory page sizes are always the same. Figure 1-4 shows how virtual memory pages from multiple virtual address spaces can be mapped to physical memory.


Figure 1-4. Memory pages
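Page sizes are powers of 2, so alignment checks and rounding reduce to bit masking instead of division. The small sketch below rounds a buffer size up to a page multiple and checks whether an address or size is aligned. Its names are hypothetical, and the 4,096-byte page and 512-byte block are just example values taken from the text.

```java
// Hypothetical helpers illustrating power-of-2 alignment arithmetic.
class PageAlign {

    static void requirePowerOfTwo(long unit) {
        if (unit <= 0 || (unit & (unit - 1)) != 0) {
            throw new IllegalArgumentException("not a power of 2: " + unit);
        }
    }

    // Smallest multiple of `unit` that is >= size (size >= 0).
    static long alignUp(long size, long unit) {
        requirePowerOfTwo(unit);
        long mask = unit - 1;          // e.g. 4096 - 1 = 0xFFF
        return (size + mask) & ~mask;  // add, then clear the low bits
    }

    // True if `value` is an exact multiple of `unit`.
    static boolean isAligned(long value, long unit) {
        requirePowerOfTwo(unit);
        return (value & (unit - 1)) == 0;
    }

    public static void main(String[] args) {
        long page = 4096, block = 512;
        System.out.println(alignUp(5000, page));    // prints 8192
        System.out.println(isAligned(page, block)); // prints true: a page is whole blocks
        System.out.println(isAligned(5000, block)); // prints false
    }
}
```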

1.4.3 Memory Paging

To support the second attribute of virtual memory (having an addressable space larger than physical memory), it's necessary to do virtual memory paging. This is often referred to as swapping, though true swapping is done at the process level, not the page level. Paging is a scheme whereby the pages of a virtual memory space can be persisted to external disk storage to make room in physical memory for other virtual pages. Essentially, physical memory acts as a cache for a paging area, which is the space on disk where the content of memory pages is stored when forced out of physical memory.

Figure 1-5 shows virtual pages belonging to four processes, each with its own virtual memory space. Two of the five pages for Process A are loaded into memory; the others are stored on disk.

Figure 1-5. Physical memory as a paging-area cache
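The "physical memory as a cache" view can be simulated in a few lines. The sketch below counts page faults for a sequence of virtual page references when only a fixed number of physical page frames are available; its names are hypothetical. It evicts the least recently used page. LRU is an assumption chosen for illustration, since the text does not say which replacement policy a kernel uses.

```java
import java.util.Iterator;
import java.util.LinkedHashMap;
import java.util.Map;

// Hypothetical simulation; LRU replacement is an illustrative assumption.
class PagingSim {

    // Counts page faults for the page references in `refs` when `frames`
    // physical page frames are available (frames > 0).
    static int countFaults(int[] refs, int frames) {
        if (frames <= 0) throw new IllegalArgumentException("frames must be > 0");
        // accessOrder = true: iteration runs from least to most recently used.
        Map<Integer, Boolean> resident = new LinkedHashMap<>(16, 0.75f, true);
        int faults = 0;
        for (int page : refs) {
            if (resident.get(page) != null) {
                continue; // hit: get() already moved the page to the MRU end
            }
            faults++; // miss: the page must be read in from the paging area
            if (resident.size() == frames) {
                Iterator<Integer> lru = resident.keySet().iterator();
                lru.next();
                lru.remove(); // steal the least recently used frame
            }
            resident.put(page, Boolean.TRUE);
        }
        return faults;
    }

    public static void main(String[] args) {
        int[] refs = {1, 2, 3, 1, 4, 2};
        System.out.println(countFaults(refs, 3)); // prints 5
        System.out.println(countFaults(refs, 4)); // prints 4
    }
}
```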

Aligning memory page sizes as multiples of the disk block size allows the kernel to issue direct commands to the disk controller hardware to write memory pages to disk or reload them when needed. It turns out that all disk I/O is done at the page level. This is the only way data ever moves between disk and physical memory in modern, paged operating systems.

Modern CPUs contain a subsystem known as the Memory Management Unit (MMU). This device logically sits between the CPU and physical memory. It contains the mapping information needed to translate virtual addresses to physical memory addresses. When the CPU references a memory location, the MMU determines which page the location resides in (usually by shifting or masking the bits of the address value). It then translates that virtual page number to a physical page number (this is done in hardware and is extremely fast). If there is no mapping currently in effect between that virtual page and a physical memory page, the MMU raises a page fault to the CPU.
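The MMU's translation step can be mimicked in software to show the shifting and masking the text mentions. This is only a model of what the hardware does. The 4,096-byte page size, the map-based page table, and the PageFault exception are all hypothetical stand-ins.

```java
import java.util.HashMap;
import java.util.Map;

// Software model of MMU address translation; all names are hypothetical.
class MmuSketch {
    static final int PAGE_SHIFT = 12;                        // assumed 4,096-byte pages
    static final long OFFSET_MASK = (1L << PAGE_SHIFT) - 1;  // low 12 bits = 0xFFF

    // Stand-in for the hardware page fault that hands control to the kernel.
    static class PageFault extends RuntimeException {
        PageFault(long virtualAddress) {
            super("page fault at 0x" + Long.toHexString(virtualAddress));
        }
    }

    // Virtual page number -> physical page number.
    private final Map<Long, Long> pageTable = new HashMap<>();

    void map(long virtualPage, long physicalPage) {
        pageTable.put(virtualPage, physicalPage);
    }

    long translate(long virtualAddress) {
        long virtualPage = virtualAddress >>> PAGE_SHIFT; // shift off the offset bits
        long offset = virtualAddress & OFFSET_MASK;       // mask keeps only the offset
        Long physicalPage = pageTable.get(virtualPage);
        if (physicalPage == null) {
            throw new PageFault(virtualAddress);          // no mapping in effect
        }
        return (physicalPage << PAGE_SHIFT) | offset;
    }

    public static void main(String[] args) {
        MmuSketch mmu = new MmuSketch();
        mmu.map(0x2, 0x7);
        System.out.println(Long.toHexString(mmu.translate(0x2ABC))); // prints 7abc
        try {
            mmu.translate(0x5000);
        } catch (PageFault e) {
            System.out.println(e.getMessage()); // prints page fault at 0x5000
        }
    }
}
```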

A page fault results in a trap, similar to a system call, which vectors control into the kernel along with information about which virtual address caused the fault. The kernel then takes steps to validate the page. The kernel will schedule a pagein operation to read the content of the missing page back into physical memory. This often results in another page being stolen to make room for the incoming page. In such a case, if the stolen page is dirty (changed since
