没有合适的资源?快使用搜索试试~ 我知道了~

首页ØMQ指南:Python实现的零MQ入门教程

ØMQ指南:Python实现的零MQ入门教程

需积分: 10 1 下载量 91 浏览量

更新于2024-07-16

收藏 5.28MB PDF 举报

"ØMQ - The Guide 是一本详细介绍 ZeroMQ 的标准使用教程,主要针对 Python 实现者,适用于零基础入门者和希望深入了解这个强大的消息传递库的学习者。作者 Pieter Hintjens 是 iMatix 公司的 CEO,该指南最初是用 C 语言编写的,同时也提供了其他多种编程语言版本(如 PHP、Lua、Haxe 等)以及相应的代码示例。 本书涵盖了 ZeroMQ 3.2 的最新稳定版本,对于使用旧版 ZeroMQ 的用户,某些示例和解释可能不完全适用。教程内容广泛,包括如何创建原子消息的 socket,并支持在不同传输方式间(如进程内、进程间通信、TCP 和多播)进行可靠的数据交换。它强调了 N 对 N 的连接模式,例如扇出(fan-out)、发布-订阅(pub-sub)、任务分发和请求-响应模式,使其能够构建高性能的集群产品。 ZeroMQ 的核心特点是其异步 I/O 模型,这使得开发者能够在高并发场景下轻松处理多个连接和数据流,实现灵活的并行处理。由于其轻量级和高效性,它被广泛用于构建实时应用、分布式系统和微服务架构中。书中详细介绍了 DEALER-to-DEALER 结合模式,这是 ZeroMQ 中的一种常见通信模式,适用于需要双方都能发送和接收消息的场景。 要深入学习,读者应从第一章开始逐步探索,掌握基础知识。前三章通常会涵盖基本概念和常用功能,而后续章节则涉及更高级的主题和实战技巧。练习和实践是提升理解的关键,无论是编写简单的客户端服务器应用,还是构建复杂的网络架构,这本书都是一个宝贵的资源。务必关注官方 issue tracker 以获取更新和修正,确保使用的知识是最新的。"

资源详情

资源推荐

2018/2/14 ØMQ - The Guide - ØMQ - The Guide

http://zguide.zeromq.org/py:all#The-DEALER-to-DEALER-Combination 16/411

# Python 3

raw_input = input

context = zmq.Context()

# Socket to send messages on

sender = context.socket(zmq.PUSH)

sender.bind("tcp://*:5557")

# Socket with direct access to the sink: used to syncronize start of

batch

sink = context.socket(zmq.PUSH)

sink.connect("tcp://localhost:5558")

print("Press Enter when the workers are ready: ")

_ = raw_input()

print("Sending tasks to workers…")

# The first message is "0" and signals start of batch

sink.send(b'0')

# Initialize random number generator

random.seed()

# Send 100 tasks

total_msec = 0

for task_nbr in range(100):

# Random workload from 1 to 100 msecs

workload = random.randint(1, 100)

total_msec += workload

sender.send_string(u'%i' % workload)

print("Total expected cost: %s msec" % total_msec)

# Give 0MQ time to deliver

time.sleep(1)

C | C++ | C# | Clojure | CL | Delphi | Erlang | F# | Felix | Go | Haskell | Haxe | Java | Lua | Node.js |

Objective-C | Perl | PHP | Ruby | Scala | Tcl | Ada | Basic | ooc | Q | Racket

Here is the worker application. It receives a message, sleeps for that number of seconds, and

then signals that it's finished:

taskwork: Parallel task worker in Pythonin Python

# Task worker

# Connects PULL socket to tcp://localhost:5557

# Collects workloads from ventilator via that socket

# Connects PUSH socket to tcp://localhost:5558

# Sends results to sink via that socket

#

# Author: Lev Givon <lev(at)columbia(dot)edu>

import sys

import time

2018/2/14 ØMQ - The Guide - ØMQ - The Guide

http://zguide.zeromq.org/py:all#The-DEALER-to-DEALER-Combination 17/411

import zmq

context = zmq.Context()

# Socket to receive messages on

receiver = context.socket(zmq.PULL)

receiver.connect("tcp://localhost:5557")

# Socket to send messages to

sender = context.socket(zmq.PUSH)

sender.connect("tcp://localhost:5558")

# Process tasks forever

while True:

s = receiver.recv()

# Simple progress indicator for the viewer

sys.stdout.write('.')

sys.stdout.flush()

# Do the work

time.sleep(int(s)*0.001)

# Send results to sink

sender.send(b'')

C | C++ | C# | Clojure | CL | Delphi | Erlang | F# | Felix | Go | Haskell | Haxe | Java | Lua | Node.js |

Objective-C | Perl | PHP | Ruby | Scala | Tcl | Ada | Basic | ooc | Q | Racket

Here is the sink application. It collects the 100 tasks, then calculates how long the overall

processing took, so we can confirm that the workers really were running in parallel if there

are more than one of them:

tasksink: Parallel task sink in Pythonin Python

# Task sink

# Binds PULL socket to tcp://localhost:5558

# Collects results from workers via that socket

#

# Author: Lev Givon <lev(at)columbia(dot)edu>

import sys

import time

import zmq

context = zmq.Context()

# Socket to receive messages on

receiver = context.socket(zmq.PULL)

receiver.bind("tcp://*:5558")

# Wait for start of batch

s = receiver.recv()

# Start our clock now

tstart = time.time()

2018/2/14 ØMQ - The Guide - ØMQ - The Guide

http://zguide.zeromq.org/py:all#The-DEALER-to-DEALER-Combination 18/411

# Process 100 confirmations

for task_nbr in range(100):

s = receiver.recv()

if task_nbr % 10 == 0:

sys.stdout.write(':')

else:

sys.stdout.write('.')

sys.stdout.flush()

# Calculate and report duration of batch

tend = time.time()

print("Total elapsed time: %d msec" % ((tend-tstart)*1000))

C | C++ | C# | Clojure | CL | Delphi | Erlang | F# | Felix | Go | Haskell | Haxe | Java | Lua | Node.js |

Objective-C | Perl | PHP | Ruby | Scala | Tcl | Ada | Basic | ooc | Q | Racket

The average cost of a batch is 5 seconds. When we start 1, 2, or 4 workers we get results

like this from the sink:

1 worker: total elapsed time: 5034 msecs.

2 workers: total elapsed time: 2421 msecs.

4 workers: total elapsed time: 1018 msecs.

Let's look at some aspects of this code in more detail:

The workers connect upstream to the ventilator, and downstream to the sink. This

means you can add workers arbitrarily. If the workers bound to their endpoints, you

would need (a) more endpoints and (b) to modify the ventilator and/or the sink each

time you added a worker. We say that the ventilator and sink are stable parts of our

architecture and the workers are dynamic parts of it.

We have to synchronize the start of the batch with all workers being up and running.

This is a fairly common gotcha in ZeroMQ and there is no easy solution. The

zmq_connect method takes a certain time. So when a set of workers connect to the

ventilator, the first one to successfully connect will get a whole load of messages in that

short time while the others are also connecting. If you don't synchronize the start of the

batch somehow, the system won't run in parallel at all. Try removing the wait in the

ventilator, and see what happens.

The ventilator's PUSH socket distributes tasks to workers (assuming they are all

connected before the batch starts going out) evenly. This is called load balancing and

it's something we'll look at again in more detail.

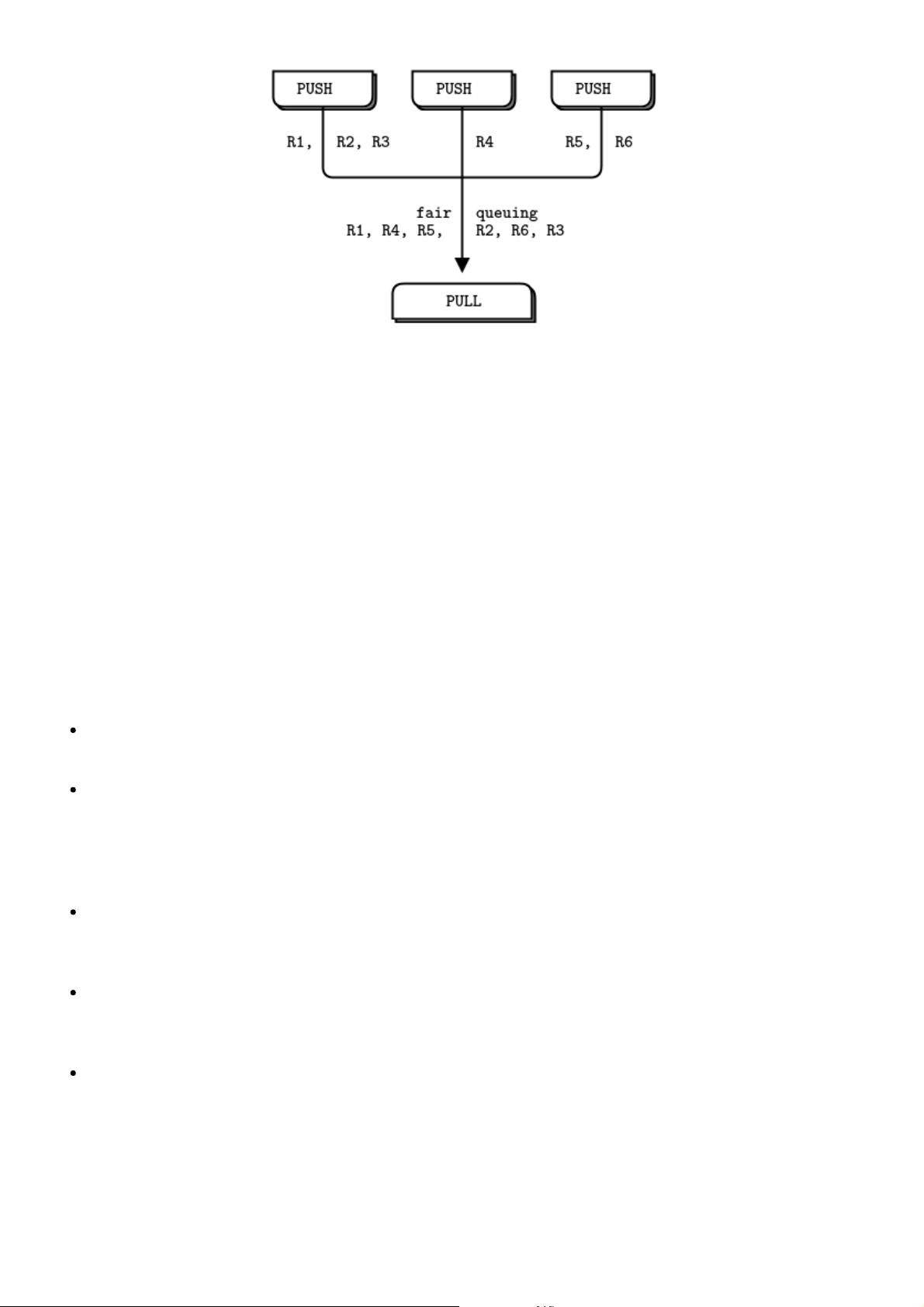

The sink's PULL socket collects results from workers evenly. This is called fair-queuing.

Figure 6 - Fair Queuing

2018/2/14 ØMQ - The Guide - ØMQ - The Guide

http://zguide.zeromq.org/py:all#The-DEALER-to-DEALER-Combination 19/411

The pipeline pattern also exhibits the "slow joiner" syndrome, leading to accusations that

PUSH sockets don't load balance properly. If you are using PUSH and PULL, and one of your

workers gets way more messages than the others, it's because that PULL socket has joined

faster than the others, and grabs a lot of messages before the others manage to connect. If

you want proper load balancing, you probably want to look at the load balancing pattern in

Chapter 3 - Advanced Request-Reply Patterns.

Programming with ZeroMQ

top prev next

Having seen some examples, you must be eager to start using ZeroMQ in some apps. Before

you start that, take a deep breath, chillax, and reflect on some basic advice that will save

you much stress and confusion.

Learn ZeroMQ step-by-step. It's just one simple API, but it hides a world of possibilities.

Take the possibilities slowly and master each one.

Write nice code. Ugly code hides problems and makes it hard for others to help you. You

might get used to meaningless variable names, but people reading your code won't. Use

names that are real words, that say something other than "I'm too careless to tell you

what this variable is really for". Use consistent indentation and clean layout. Write nice

code and your world will be more comfortable.

Test what you make as you make it. When your program doesn't work, you should know

what five lines are to blame. This is especially true when you do ZeroMQ magic, which

just won't work the first few times you try it.

When you find that things don't work as expected, break your code into pieces, test

each one, see which one is not working. ZeroMQ lets you make essentially modular

code; use that to your advantage.

Make abstractions (classes, methods, whatever) as you need them. If you copy/paste a

lot of code, you're going to copy/paste errors, too.

Getting the Context Right

top prev next

2018/2/14 ØMQ - The Guide - ØMQ - The Guide

http://zguide.zeromq.org/py:all#The-DEALER-to-DEALER-Combination 20/411

ZeroMQ applications always start by creating a context, and then using that for creating

sockets. In C, it's the zmq_ctx_new() call. You should create and use exactly one context in

your process. Technically, the context is the container for all sockets in a single process, and

acts as the transport for inproc sockets, which are the fastest way to connect threads in one

process. If at runtime a process has two contexts, these are like separate ZeroMQ instances.

If that's explicitly what you want, OK, but otherwise remember:

Call zmq_ctx_new() once at the start of a process, and zmq_ctx_destroy() once at the

end.

If you're using the fork() system call, do zmq_ctx_new() after the fork and at the beginning

of the child process code. In general, you want to do interesting (ZeroMQ) stuff in the

children, and boring process management in the parent.

Making a Clean Exit

top prev next

Classy programmers share the same motto as classy hit men: always clean-up when you

finish the job. When you use ZeroMQ in a language like Python, stuff gets automatically freed

for you. But when using C, you have to carefully free objects when you're finished with them

or else you get memory leaks, unstable applications, and generally bad karma.

Memory leaks are one thing, but ZeroMQ is quite finicky about how you exit an application.

The reasons are technical and painful, but the upshot is that if you leave any sockets open,

the zmq_ctx_destroy() function will hang forever. And even if you close all sockets,

zmq_ctx_destroy() will by default wait forever if there are pending connects or sends unless

you set the LINGER to zero on those sockets before closing them.

The ZeroMQ objects we need to worry about are messages, sockets, and contexts. Luckily it's

quite simple, at least in simple programs:

Use zmq_send() and zmq_recv() when you can, as it avoids the need to work with

zmq_msg_t objects.

If you do use zmq_msg_recv(), always release the received message as soon as you're

done with it, by calling zmq_msg_close().

If you are opening and closing a lot of sockets, that's probably a sign that you need to

redesign your application. In some cases socket handles won't be freed until you

destroy the context.

When you exit the program, close your sockets and then call zmq_ctx_destroy(). This

destroys the context.

This is at least the case for C development. In a language with automatic object destruction,

sockets and contexts will be destroyed as you leave the scope. If you use exceptions you'll

have to do the clean-up in something like a "final" block, the same as for any resource.

If you're doing multithreaded work, it gets rather more complex than this. We'll get to

multithreading in the next chapter, but because some of you will, despite warnings, try to run

before you can safely walk, below is the quick and dirty guide to making a clean exit in a

multithreaded ZeroMQ application.

First, do not try to use the same socket from multiple threads. Please don't explain why you

think this would be excellent fun, just please don't do it. Next, you need to shut down each

socket that has ongoing requests. The proper way is to set a low LINGER value (1 second),

剩余410页未读,继续阅读

naocwz

- 粉丝: 0

- 资源: 3

上传资源 快速赚钱

我的内容管理

展开

我的内容管理

展开

我的资源

快来上传第一个资源

我的资源

快来上传第一个资源

我的收益 登录查看自己的收益

我的收益 登录查看自己的收益 我的积分

登录查看自己的积分

我的积分

登录查看自己的积分

我的C币

登录后查看C币余额

我的C币

登录后查看C币余额

我的收藏

我的收藏  我的下载

我的下载  下载帮助

下载帮助

最新资源

- 计算机人脸表情动画技术发展综述

- 关系数据库的关键字搜索技术综述:模型、架构与未来趋势

- 迭代自适应逆滤波在语音情感识别中的应用

- 概念知识树在旅游领域智能分析中的应用

- 构建is-a层次与OWL本体集成:理论与算法

- 基于语义元的相似度计算方法研究:改进与有效性验证

- 网格梯度多密度聚类算法:去噪与高效聚类

- 网格服务工作流动态调度算法PGSWA研究

- 突发事件连锁反应网络模型与应急预警分析

- BA网络上的病毒营销与网站推广仿真研究

- 离散HSMM故障预测模型:有效提升系统状态预测

- 煤矿安全评价:信息融合与可拓理论的应用

- 多维度Petri网工作流模型MD_WFN:统一建模与应用研究

- 面向过程追踪的知识安全描述方法

- 基于收益的软件过程资源调度优化策略

- 多核环境下基于数据流Java的Web服务器优化实现提升性能

资源上传下载、课程学习等过程中有任何疑问或建议,欢迎提出宝贵意见哦~我们会及时处理!

点击此处反馈

安全验证

文档复制为VIP权益,开通VIP直接复制

信息提交成功

信息提交成功