Browser-Assisted Long-Form Question Answering: Improving Answer Quality with Human Feedback

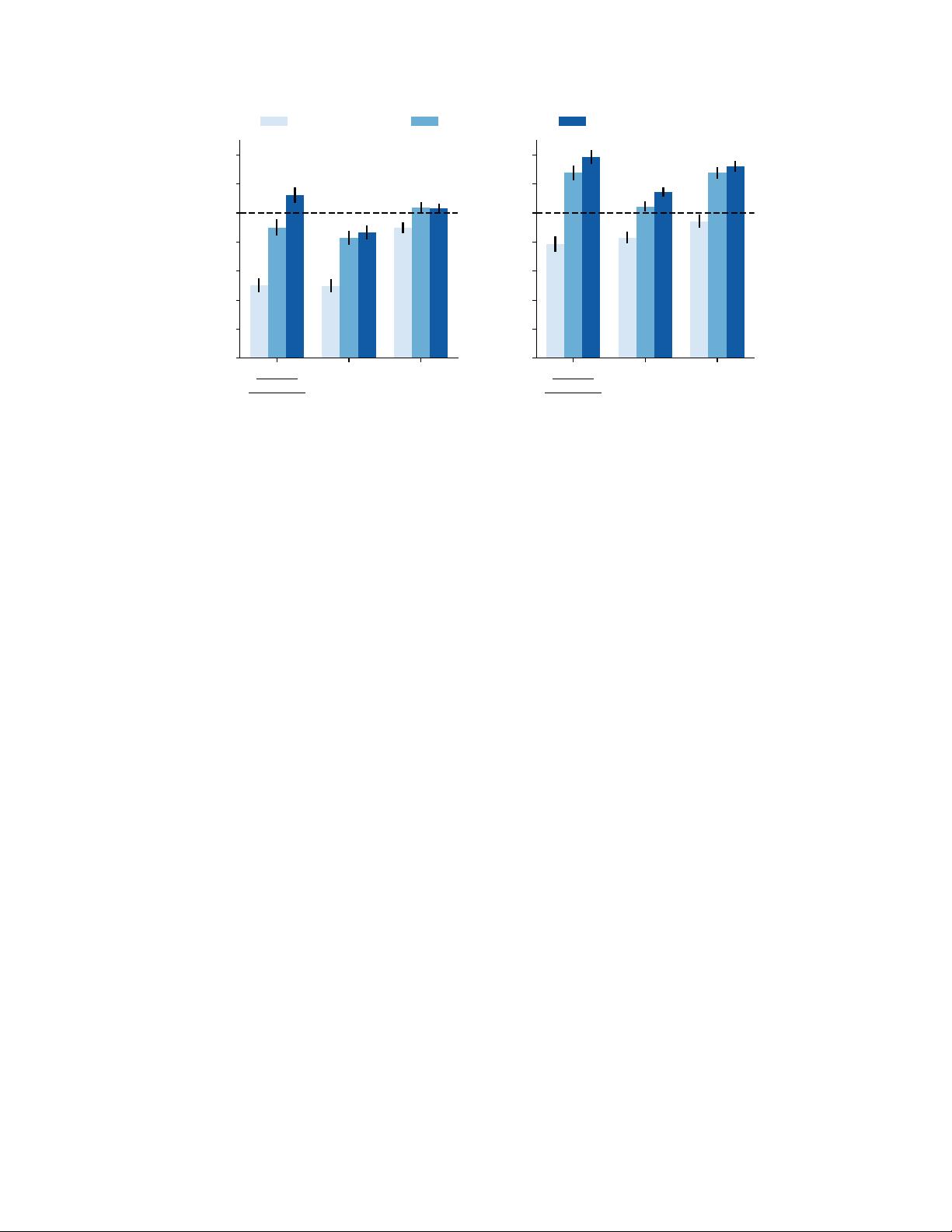

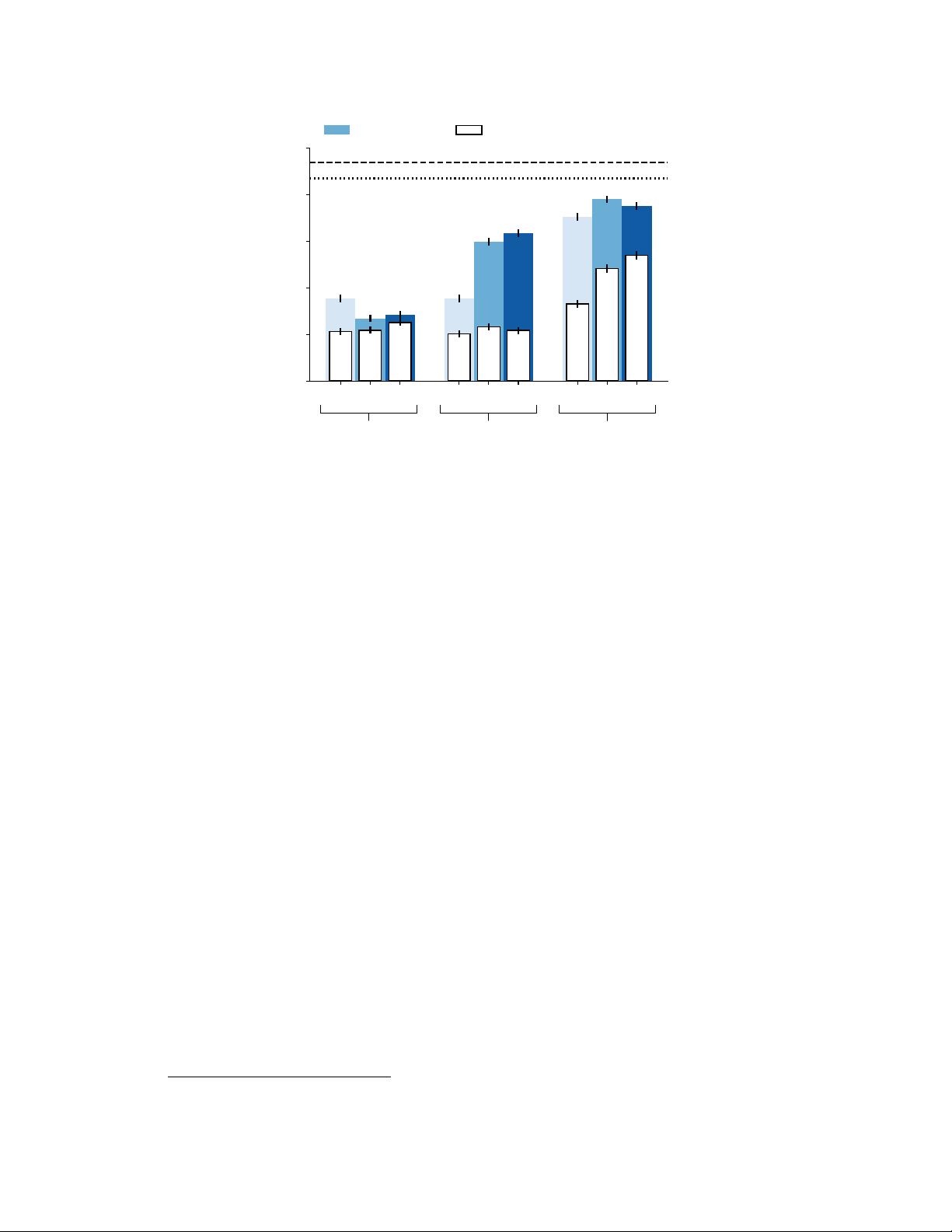

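"Browser-assisted question-answering with human feedback" is a research paper on answering long-form questions with a language model that can browse the web. The authors fine-tune GPT-3 to answer questions inside a text-based web-browsing environment.

The paper's main contribution is a method for using human feedback to optimize answer quality. The model searches and browses the web to gather information, and human feedback is then used to judge the resulting answers. This helps the model place each question in context and give more accurate answers.

The paper uses the ELI5 dataset, which consists of questions asked by Reddit users. The authors train the model with behavior cloning and rejection sampling, and use human feedback to evaluate answer quality. In the reported experiments, human evaluators preferred the best model's answers to those written by the human demonstrators 56% of the time.

The paper's contributions are:

1. A method for answering long-form questions in a web-browsing environment, which gives the model better access to the context of a question.
2. The use of human feedback to optimize answer quality, which lets the model give more accurate answers.
3. Experimental results showing that the model trained with human feedback produces higher-quality answers.

These results are relevant to natural language processing and artificial intelligence, and could apply to any setting that needs long-form answers, such as customer-service chatbots and search engines.

Related concepts:

* GPT-3: a language model based on the transformer architecture that can generate long-form text.
* Behavior cloning: supervised learning that trains a model to imitate human demonstrations.
* Rejection sampling: in this setting, sampling several candidate answers and keeping the one that a reward model, trained to predict human preferences, scores highest.
* Human feedback: human ratings and comparisons of model answers, used to improve answer quality.
* ELI5 dataset: a natural language processing dataset of questions asked by Reddit users.
* Text-based web-browsing environment: a text interface through which the model searches and reads web pages while composing an answer.

In short, the paper combines a web-browsing environment with human feedback to answer long-form questions and reports favorable experimental results.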
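The rejection sampling step described above can be illustrated with a minimal best-of-n sketch. It is not the paper's implementation: `best_of_n` and `toy_reward` are hypothetical names, and `toy_reward` is a deliberately crude stand-in (it counts bracketed citations) for a learned reward model that would predict human preferences.

```python
from typing import Callable, Sequence


def best_of_n(candidates: Sequence[str], score: Callable[[str], float]) -> str:
    """Return the candidate answer with the highest score.

    `score` plays the role of a reward model; any callable that maps
    an answer to a float works here.
    """
    if not candidates:
        raise ValueError("need at least one candidate answer")
    return max(candidates, key=score)


def toy_reward(answer: str) -> float:
    """Hypothetical stand-in for a reward model: counts '[' characters,
    i.e. rough citation markers such as [1]."""
    return float(answer.count("["))


# Pretend these were sampled from a behavior-cloned policy.
samples = [
    "The sky is blue.",
    "The sky is blue because of Rayleigh scattering [1].",
    "Air scatters short wavelengths more strongly [1][2].",
]

best = best_of_n(samples, toy_reward)
print(best)
```

Selecting among sampled answers at inference time needs no further training of the policy itself; the cost is generating and scoring several candidates per question.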
