MonoPerfCap: Human Performance Capture from Monocular Video • 39:3

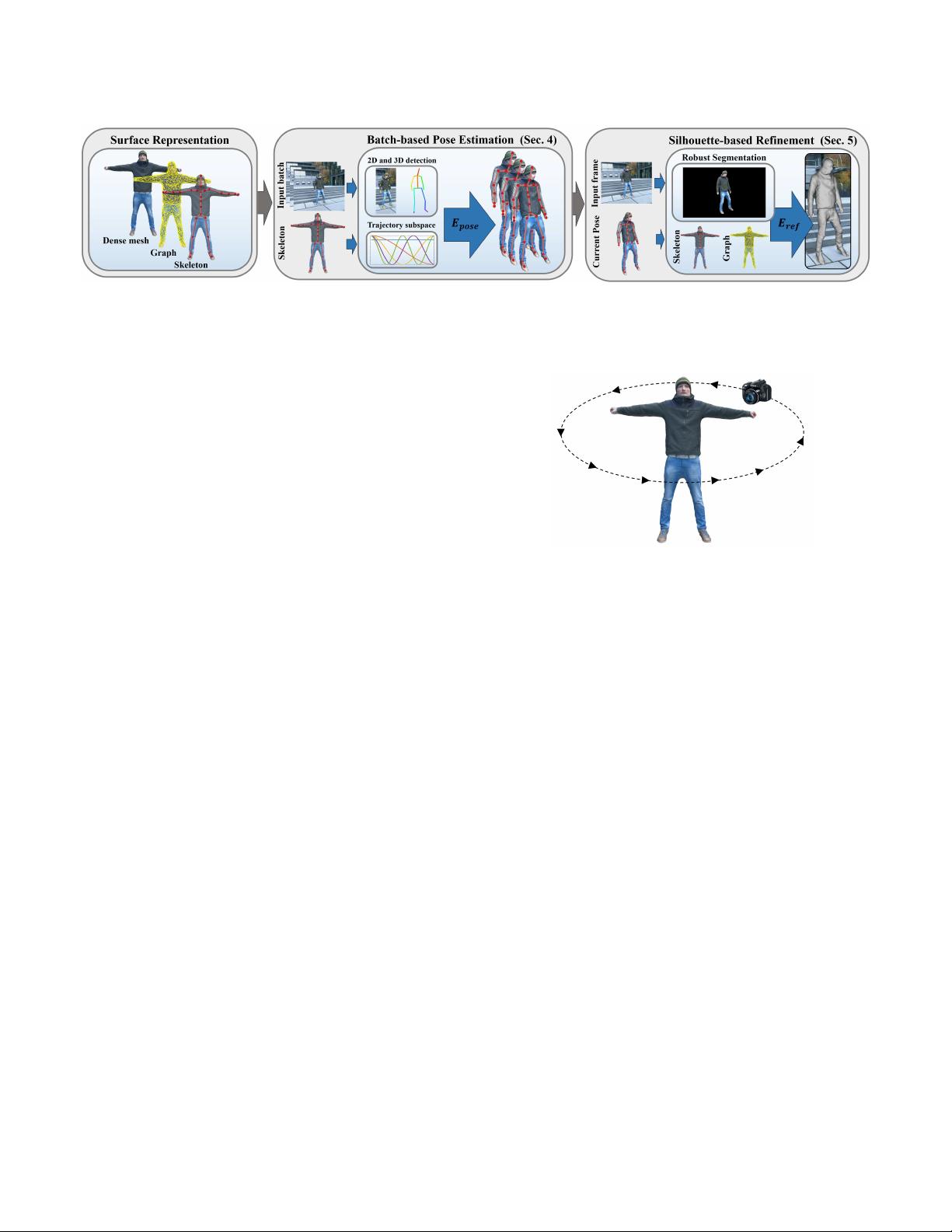

Fig. 2. Given a monocular video and a personalized actor rig, our approach reconstructs the actor motion as well as medium-scale surface deformations.

The monocular reconstruction problem is solved by joint recovery of temporally coherent per-batch motion based on a low dimensional trajectory subspace.

Non-rigid alignment based on automatically extracted silhouees is used to beer match the input.

manual initialization [Wren et al

.

1997] and pose correction. [Wei

and Chai 2010] obtain high quality 3D pose from challenging sport

video sequences using physical constraints, but require manual

joint position annotations for each keyframe (every 30 frames). Also

simpler temporal priors have been applied [Sidenbladh et al

.

2000;

Urtasun et al

.

2005, 2006]. With recent advances in convolutional

neural networks (CNNs), fully-automatic, high accuracy 2D pose

estimation [Jain et al

.

2014; Newell et al

.

2016; Pishchulin et al

.

2016;

Toshev and Szegedy 2014; Wei et al

.

2016] is feasible from a single

image. Lifting the 2D detections to the corresponding 3D pose is

common [Akhter and Black 2015; Li et al

.

2015; Mori and Malik 2006;

Simo-Serra et al

.

2012; Taylor 2000; Wang et al

.

2014; Yasin et al

.

2016], but is a hard and underconstrained problem [Sminchisescu

and Triggs 2003b]. [Bogo et al

.

2016] employ a pose prior based on

a mixture of Gaussians in combination with penetration constraints.

The approach of [Zhou et al

.

2015] reconstructs 3D pose as a sparse

linear combination of a set of example poses. Direct regression from

a single image to the 3D pose is an alternative [Ionescu et al

.

2014a;

Li and Chan 2014; Mehta et al

.

2016; Pavlakos et al

.

2016; Tekin

et al

.

2016; Zhou et al

.

2016a], but leads to temporally incoherent

reconstructions.

Promising are hybrid approaches that combine discriminative 2D-

[Elhayek et al

.

2015] and 3D-pose estimation techniques [Rosales

and Sclaro 2006; Sminchisescu et al

.

2006] with generative image

formation models, but these approches require multiple views of

the scene. Recently, a real-time 3D human pose estimation approach

has been proposed [Mehta et al

.

2017], which also relies on monoc-

ular video input. It is a very fast method, but does not achieve the

temporal stability and robustness to dicult poses of our approach.

In contrast to this previous work, our method not only estimates

the 3D skeleton more robustly, by leveraging the complimentary

strength of 2D and 3D discriminative models, and trajectory sub-

space constraints, but also recovers medium-scale non-rigid surface

deformations that can not be modeled using only skeleton subspace

deformation. We extensively compare to the approach of [Mehta

et al. 2017] in Sec. 6.

Dense Monocular Shape Reconstruction. Reconstructing strongly

deforming non-rigid objects and humans in general apparel given

just monocular input is an ill-posed problem. By constraining the

solution to a low-dimensional space, coarse human shape can be

reconstructed based on a foreground segmentation [Chen et al

.

2010;

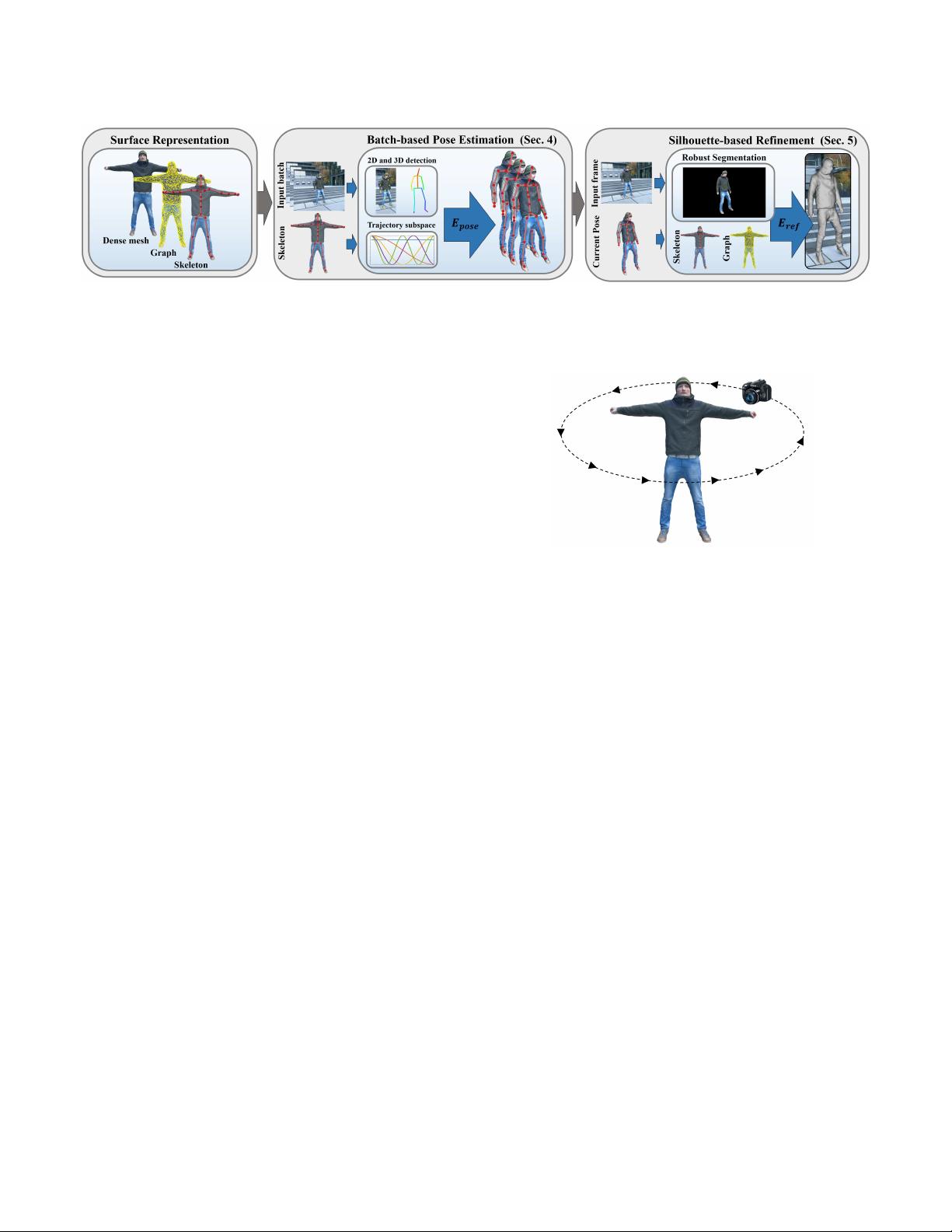

Fig. 3. Acquisition of a textured template mesh from handheld video footage

of the actor in a static pose.

Grest et al

.

2005; Guan et al

.

2009; Jain et al

.

2010; Rogge et al

.

2014;

Zhou et al

.

2010]. Still, these approaches rely on manual initial-

ization and correction steps. Fully automatic approaches combine

generative body models with discriminative pose and shape estima-

tion, e.g. conditioned on silhouette cues [Sigal et al

.

2007] and 2D

pose [Bogo et al

.

2016], but can also only capture skin-tight clothing

without surface details. The recent work of [Huang et al

.

2017],

which ts a parametric human body model to the 2D pose detection

and the silhouettes over time, has demonstrated compelling results

on both multi-view and monocular data. But again, their method

is not able to model loose clothing. Model-free reconstructions are

based on rigidity and temporal smoothness assumptions [Garg et al

.

2013; Russell et al

.

2014] and only apply to medium-scale deforma-

tions and simple motions. Template-based approaches enable fast

sequential tracking [Bartoli et al

.

2015; Salzmann and Fua 2011; Yu

et al

.

2015], but are unable to capture the fast and highly articulated

motion of the human body. Automatic monocular performance cap-

ture of more general human motion is still an unsolved problem,

especially if non-rigid surface deformations are taken into account.

Our approach tackles this challenging problem.

3 METHOD OVERVIEW

Non-rigid 3D reconstruction from monocular RGB video is a chal-

lenging and ill-posed problem, since the subjects are partially visible

at each time instance and depth cues are implicit. To tackle the

problem of partial visibility, similar to many previous works, we

employ a template mesh, pre-acquired by image based monocular

reconstruction of the actor in a static pose. When it comes to the

2018-02-26 01:54 page 3 (pp. 1-15) ACM Transactions on Graphics, Vol. 9, No. 4, Article 39. Publication date: March 2018.

我的内容管理

展开

我的内容管理

展开

我的资源

快来上传第一个资源

我的资源

快来上传第一个资源

我的收益 登录查看自己的收益

我的收益 登录查看自己的收益 我的积分

登录查看自己的积分

我的积分

登录查看自己的积分

我的C币

登录后查看C币余额

我的C币

登录后查看C币余额

我的收藏

我的收藏  我的下载

我的下载  下载帮助

下载帮助

信息提交成功

信息提交成功