
Google PaLM 2 Technical Report: Stronger Multilingual and Reasoning Capabilities, Higher Compute Efficiency

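This report introduces PaLM 2, a new state-of-the-art language model that has better multilingual and reasoning capabilities and is more compute-efficient than its predecessor PaLM (Chowdhery et al., 2022). PaLM 2 is a Transformer-based model trained using a mixture of objectives similar to UL2 (Tay et al., 2023). Through extensive evaluations on English and multilingual language tasks, as well as reasoning tasks, we demonstrate that PaLM 2 delivers significantly improved quality on downstream tasks across different model sizes, while exhibiting faster and more efficient inference than PaLM. This improved efficiency enables broader deployment and also lets the model respond faster, making interaction more natural. PaLM 2 demonstrates robust reasoning capabilities.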


Table 6: BIG-Bench Hard 3-shot results. PaLM and PaLM 2 use direct prediction and chain-of-thought prompting (Wei et al., 2022), following the experimental setting of Suzgun et al. (2022). Each cell reports Direct / CoT results.

| Task | Metric | PaLM | PaLM 2 | Absolute Gain | Percent Gain |
|---|---|---|---|---|---|
| boolean_expressions | multiple choice grade | 83.2 / 80.0 | 89.6 / 86.8 | +6.4 / +6.8 | +8% / +8% |
| causal_judgment | multiple choice grade | 61.0 / 59.4 | 62.0 / 58.8 | +1.0 / -0.6 | +2% / -1% |
| date_understanding | multiple choice grade | 53.6 / 79.2 | 74.0 / 91.2 | +20.4 / +12.0 | +38% / +15% |
| disambiguation_qa | multiple choice grade | 60.8 / 67.6 | 78.8 / 77.6 | +18.0 / +10.0 | +30% / +15% |
| dyck_languages | multiple choice grade | 28.4 / 28.0 | 35.2 / 63.6 | +6.8 / +35.6 | +24% / +127% |
| formal_fallacies_syllogism_negation | multiple choice grade | 53.6 / 51.2 | 64.8 / 57.2 | +11.2 / +6.0 | +21% / +12% |
| geometric_shapes | multiple choice grade | 37.6 / 43.6 | 51.2 / 34.8 | +13.6 / -8.8 | +36% / -20% |
| hyperbaton | multiple choice grade | 70.8 / 90.4 | 84.8 / 82.4 | +14.0 / -8.0 | +20% / -9% |
| logical_deduction | multiple choice grade | 42.7 / 56.9 | 64.5 / 69.1 | +21.8 / +12.2 | +51% / +21% |
| movie_recommendation | multiple choice grade | 87.2 / 92.0 | 93.6 / 94.4 | +6.4 / +2.4 | +7% / +3% |
| multistep_arithmetic_two | exact string match | 1.6 / 19.6 | 0.8 / 75.6 | -0.8 / +56.0 | -50% / +286% |
| navigate | multiple choice grade | 62.4 / 79.6 | 68.8 / 91.2 | +6.4 / +11.6 | +10% / +15% |
| object_counting | exact string match | 51.2 / 83.2 | 56.0 / 91.6 | +4.8 / +8.4 | +9% / +10% |
| penguins_in_a_table | multiple choice grade | 44.5 / 65.1 | 65.8 / 84.9 | +21.3 / +19.8 | +48% / +30% |
| reasoning_about_colored_objects | multiple choice grade | 38.0 / 74.4 | 61.2 / 91.2 | +23.2 / +16.8 | +61% / +23% |
| ruin_names | multiple choice grade | 76.0 / 61.6 | 90.0 / 83.6 | +14.0 / +22.0 | +18% / +36% |
| salient_translation_error_detection | multiple choice grade | 48.8 / 54.0 | 66.0 / 61.6 | +17.2 / +7.6 | +35% / +14% |
| snarks | multiple choice grade | 78.1 / 61.8 | 78.7 / 84.8 | +0.6 / +23.0 | +1% / +37% |
| sports_understanding | multiple choice grade | 80.4 / 98.0 | 90.8 / 98.0 | +10.4 / +0.0 | +13% / +0% |
| temporal_sequences | multiple choice grade | 39.6 / 78.8 | 96.4 / 100.0 | +56.8 / +21.2 | +143% / +27% |
| tracking_shuffled_objects | multiple choice grade | 19.6 / 52.9 | 25.3 / 79.3 | +5.7 / +26.4 | +29% / +50% |
| web_of_lies | multiple choice grade | 51.2 / 100.0 | 55.2 / 100.0 | +4.0 / +0.0 | +8% / +0% |
| word_sorting | exact string match | 32.0 / 21.6 | 58.0 / 39.6 | +26.0 / +18.0 | +81% / +83% |
| Average | - | 52.3 / 65.2 | 65.7 / 78.1 | +13.4 / +12.9 | +26% / +20% |
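The two gain columns in Table 6 are consistent with simple arithmetic: Absolute Gain is the PaLM 2 score minus the PaLM score, and Percent Gain divides that difference by the PaLM score and rounds to a whole percent. The Rust sketch below reproduces a few rows under that reading; the function names are illustrative, and the rounding rule (half away from zero) is an assumption.

```rust
/// Absolute gain in points: improved score minus baseline score.
fn absolute_gain(baseline: f64, improved: f64) -> f64 {
    improved - baseline
}

/// Relative gain as a whole-number percentage of the baseline score.
/// Assumes a non-zero baseline; `f64::round` rounds half away from zero.
fn percent_gain(baseline: f64, improved: f64) -> i64 {
    assert!(baseline != 0.0, "baseline must be non-zero");
    (absolute_gain(baseline, improved) / baseline * 100.0).round() as i64
}
```

For example, dyck_languages with CoT goes from 28.0 to 63.6, giving `absolute_gain` of 35.6 and `percent_gain` of 127. The Average row fits the same formula when it is applied to the averaged scores: 13.4 / 52.3 rounds to 26%, and 12.9 / 65.2 rounds to 20%.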

Table 7: Evaluation results on MATH, GSM8K, and MGSM with chain-of-thought prompting (Wei et al., 2022) / self-consistency (Wang et al., 2023). The PaLM result on MATH is taken from Lewkowycz et al. (2022), and the PaLM result on MGSM is taken from Chung et al. (2022). SOTA sources: ᵃ Minerva (Lewkowycz et al., 2022); ᵇ GPT-4 (OpenAI, 2023b); ᶜ Flan-PaLM (Chung et al., 2022).

| Task | SOTA | PaLM | Minerva | GPT-4 | PaLM 2 | Flan-PaLM 2 |
|---|---|---|---|---|---|---|
| MATH | 50.3ᵃ | 8.8 | 33.6 / 50.3 | 42.5 | 34.3 / 48.8 | 33.2 / 45.2 |
| GSM8K | 92.0ᵇ | 56.5 / 74.4 | 58.8 / 78.5 | 92.0 | 80.7 / 91.0 | 84.7 / 92.2 |
| MGSM | 72.0ᶜ | 45.9 / 57.9 | - | - | 72.2 / 87.0 | 75.9 / 85.8 |
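The second number in each Table 7 cell comes from self-consistency, which samples several chain-of-thought solutions and keeps the most frequent final answer. A minimal sketch of the voting step, assuming the final answers have already been extracted from each sample as strings; the sampling procedure, answer extraction, number of samples, and the tie-breaking rule below are assumptions, not details given in the report.

```rust
use std::collections::HashMap;

/// Majority vote over final answers extracted from sampled chain-of-thought
/// completions. On a tie, the answer that first reached the winning count is
/// kept (an assumed tie-breaking rule). Returns None for an empty input.
fn majority_vote(answers: &[&str]) -> Option<String> {
    let mut counts: HashMap<&str, usize> = HashMap::new();
    let mut best: Option<(&str, usize)> = None;
    for &answer in answers {
        let count = counts.entry(answer).or_insert(0);
        *count += 1;
        // Replace the leader only when this answer strictly overtakes it.
        if best.map_or(true, |(_, top)| *count > top) {
            best = Some((answer, *count));
        }
    }
    best.map(|(answer, _)| answer.to_string())
}
```

With five sampled answers `["18", "20", "18", "17", "18"]`, the vote returns `"18"`. Plain chain-of-thought prompting corresponds to using a single greedy sample without any vote.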


Table 8: Results on coding evaluations from the PaLM and PaLM 2-S* models. The PaLM 2-S* model is a version of the PaLM 2-S model trained with additional code-related tokens, similar to PaLM-Coder-540B. ᵃ PaLM (Chowdhery et al., 2022).

| Model | HumanEval pass@1 | HumanEval pass@100 | MBPP pass@1 | MBPP pass@80 | ARCADE pass@1 | ARCADE pass@30 |
|---|---|---|---|---|---|---|
| PaLM 2-S* | 37.6 | 88.4 | 50.0 | 86.6 | 16.2 | 43.6 |
| PaLM-Coder-540B | 35.9ᵃ | 88.4ᵃ | 47.0ᵃ | 80.8ᵃ | 7.9ᵃ | 33.6ᵃ |

4.4 Coding

Code language models are among the most economically significant and widely deployed LLMs today; code LMs are deployed in diverse developer tooling (Github, 2021; Tabachnyk & Nikolov, 2022), as personal programming assistants (OpenAI, 2022; Hsiao & Collins, 2023; Replit, 2022), and as competent tool-using agents (OpenAI, 2023a).

For low-latency, high-throughput deployment in developer workflows, we built a small, coding-specific PaLM 2 model by continuing to train the PaLM 2-S model on an extended, code-heavy, heavily multilingual data mixture. We call the resulting model PaLM 2-S*; it shows significant improvement on code tasks while preserving performance on natural language tasks. We evaluate PaLM 2-S*’s coding ability on a set of few-shot coding tasks, including HumanEval (Chen et al., 2021), MBPP (Austin et al., 2021), and ARCADE (Yin et al., 2022). We also test PaLM 2-S*’s multilingual coding ability using a version of HumanEval translated into a variety of lower-resource languages (Orlanski et al., 2023).

Code Generation

We benchmark PaLM 2 on three coding datasets: HumanEval (Chen et al., 2021), MBPP (Austin et al., 2021), and ARCADE (Yin et al., 2022). HumanEval and MBPP are natural-language-to-code datasets that test the model’s ability to generate self-contained Python programs that pass a set of held-out test cases. ARCADE is a Jupyter Notebook completion task that requires the model to complete the next cell in a notebook given a textual description and the preceding notebook cells. As in Chen et al. (2021), Austin et al. (2021), and Yin et al. (2022), we benchmark models in the pass@1 and pass@k settings. We use greedy decoding for all pass@1 evaluations, and temperature 0.8 with nucleus sampling (p = 0.95) for all pass@k evaluations. All samples are executed in a code sandbox with access to a small number of relevant modules and careful isolation from the system environment. For ARCADE, we use the New Tasks split, which contains problems from newly curated notebooks, to avoid evaluation data leakage.
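The two decoding settings above (greedy for pass@1; temperature 0.8 with nucleus sampling at p = 0.95 for pass@k) can be illustrated on a single vector of next-token logits. This is a generic sketch of temperature plus top-p sampling, not PaLM 2's serving code; the function names, the caller-supplied uniform draw `u` (used so the sketch needs no RNG crate), and the example logits are assumptions.

```rust
/// Greedy decoding: pick the highest-scoring token.
fn greedy(logits: &[f64]) -> usize {
    logits
        .iter()
        .enumerate()
        .max_by(|a, b| a.1.partial_cmp(b.1).unwrap())
        .map(|(i, _)| i)
        .expect("logits must be non-empty")
}

/// Temperature + nucleus (top-p) sampling. `u` is a uniform draw in [0, 1)
/// supplied by the caller. Returns the sampled token index.
fn sample_top_p(logits: &[f64], temperature: f64, top_p: f64, u: f64) -> usize {
    assert!(!logits.is_empty() && temperature > 0.0 && top_p > 0.0 && top_p <= 1.0);
    // Temperature-scaled softmax; subtracting the max keeps exp() stable.
    let max = logits.iter().cloned().fold(f64::NEG_INFINITY, f64::max);
    let weights: Vec<f64> = logits.iter().map(|&l| ((l - max) / temperature).exp()).collect();
    let total: f64 = weights.iter().sum();
    let mut probs: Vec<(usize, f64)> = weights
        .iter()
        .enumerate()
        .map(|(i, &w)| (i, w / total))
        .collect();

    // Sort tokens from most to least probable.
    probs.sort_by(|a, b| b.1.partial_cmp(&a.1).unwrap());

    // Keep the smallest prefix whose cumulative probability reaches top_p.
    let mut cumulative = 0.0;
    let mut cutoff = probs.len();
    for (rank, &(_, p)) in probs.iter().enumerate() {
        cumulative += p;
        if cumulative >= top_p {
            cutoff = rank + 1;
            break;
        }
    }
    let nucleus = &probs[..cutoff];

    // Renormalise inside the nucleus and invert the cumulative distribution at u.
    let mass: f64 = nucleus.iter().map(|&(_, p)| p).sum();
    let mut acc = 0.0;
    for &(index, p) in nucleus {
        acc += p / mass;
        if u < acc {
            return index;
        }
    }
    nucleus[cutoff - 1].0 // fallback for floating-point round-off
}
```

With logits `[2.0, 1.0, 0.0, -5.0]`, temperature 0.8 and p = 0.95, the nucleus contains the first three tokens; the very unlikely fourth token can never be sampled, whatever `u` is. Lowering the temperature sharpens the distribution toward the greedy choice.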

Results are shown in Table 8. PaLM 2-S* outperforms PaLM-Coder-540B on all benchmarks, often by a significant margin (e.g., ARCADE), despite being dramatically smaller, cheaper, and faster to serve; the only metric without a gain is HumanEval pass@100, where both models score 88.4.
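The report does not state how the pass@k columns are computed from the sampled programs. One common choice, introduced alongside HumanEval by Chen et al. (2021), is the unbiased estimator 1 - C(n-c, k) / C(n, k), where n samples are drawn per problem and c of them pass. The sketch below assumes, without confirmation from the text, that Table 8 follows this approach; the function names are illustrative.

```rust
/// Unbiased pass@k estimate for one problem: `n` samples, `c` of them correct.
/// Computes 1 - C(n-c, k) / C(n, k) via the stable product form
/// C(n-c, k) / C(n, k) = prod_{i=n-c+1}^{n} (1 - k / i).
fn pass_at_k(n: u64, c: u64, k: u64) -> f64 {
    assert!(k >= 1 && k <= n && c <= n, "require 1 <= k <= n and c <= n");
    if n - c < k {
        // Every size-k subset of the samples contains a passing program.
        return 1.0;
    }
    let mut all_fail = 1.0;
    for i in (n - c + 1)..=n {
        all_fail *= 1.0 - k as f64 / i as f64;
    }
    1.0 - all_fail
}

/// Benchmark-level pass@k: the mean of the per-problem estimates.
fn mean_pass_at_k(results: &[(u64, u64)], k: u64) -> f64 {
    let total: f64 = results.iter().map(|&(n, c)| pass_at_k(n, c, k)).sum();
    total / results.len() as f64
}
```

With greedy decoding there is a single sample per problem (n = k = 1), so pass@1 reduces to the fraction of problems whose one program passes. For sampled evaluation, n is typically chosen to be at least k.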

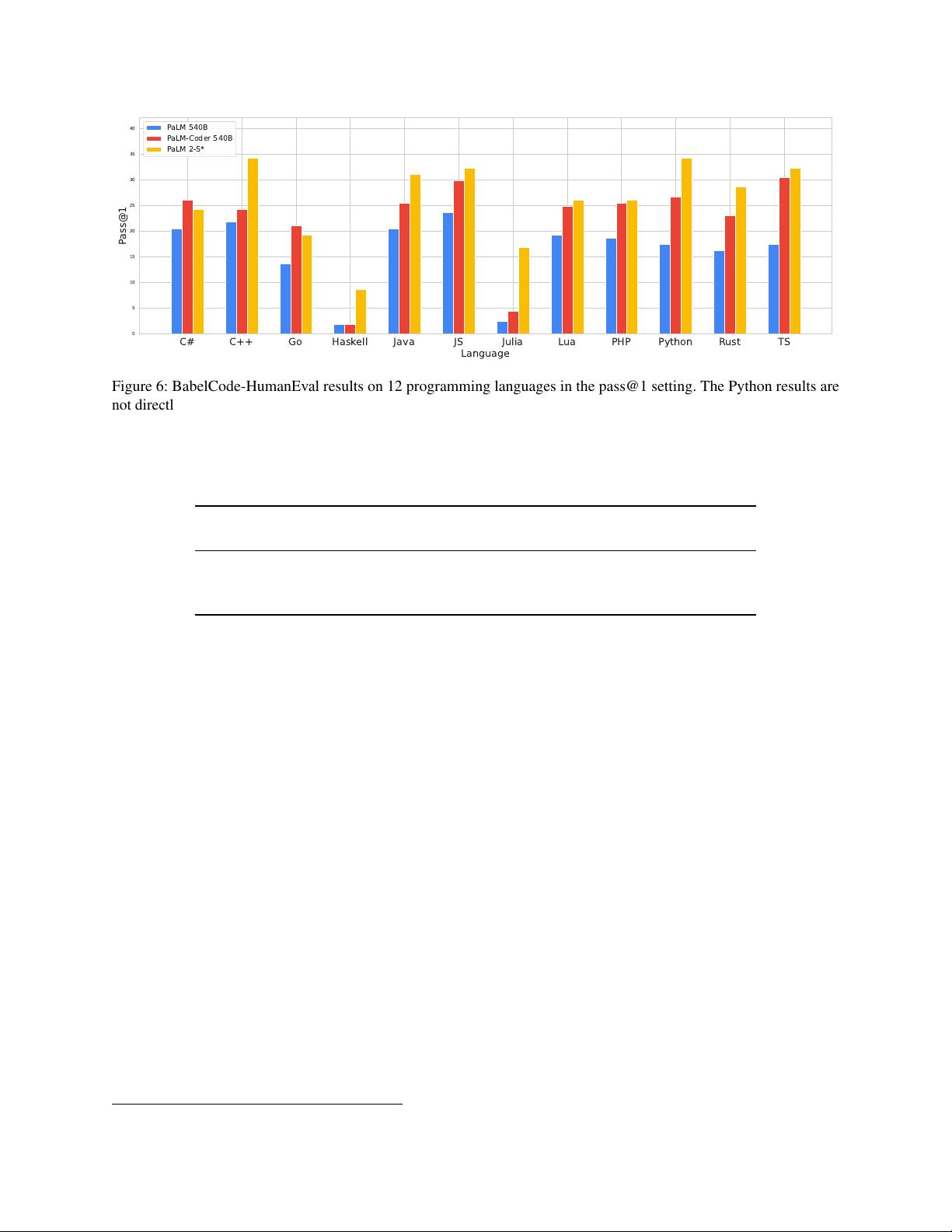

Multilingual Evaluation

We also evaluate PaLM 2-S*’s multilingual coding abilities using BabelCode (Orlanski et al., 2023), which translates HumanEval into a variety of other programming languages, including high-resource languages like C++, Java, and Go and low-resource languages like Haskell and Julia. The PaLM 2 code training data is significantly more multilingual than PaLM’s, which we hope yields significant gains on coding evals. Figure 6 shows PaLM 2-S*’s results compared to the original PaLM models. We show an example of multilingual program generation in Figure 7.

PaLM 2-S* outperforms PaLM on all but two languages, with surprisingly little degradation on low-resource languages like Julia and Haskell; for instance, PaLM 2-S* improves upon the much larger PaLM-Coder-540B by 6.3× on Haskell and by 4.7× on Julia. Remarkably, performance on Java, JavaScript, and TypeScript is actually higher than on Python, the original language.

[Figure 6 plot: pass@1 (y-axis) by programming language (x-axis: C#, C++, Go, Haskell, Java, JS, Julia, Lua, PHP, Python, Rust, TS) for PaLM 540B, PaLM-Coder 540B, and PaLM 2-S*.]

Figure 6: BabelCode-HumanEval results on 12 programming languages in the pass@1 setting. The Python results are not directly comparable to standard HumanEval due to differences in the evaluation procedure. Raw numeric results are shown in Table 18.

4.5 Translation

An explicit design choice of PaLM 2 is an improved translation capability. In this section, we evaluate sentence-level translation quality using recommended practices for high-quality machine translation (Vilar et al., 2022), and measure potential misgendering harms from translation errors.

Table 9: Results on WMT21 translation sets. We observe improvement over both PaLM and the Google Translate production system according to our primary metric: MQM human evaluations by professional translators.

| Model | Chinese→English BLEURT ↑ | Chinese→English MQM (Human) ↓ | English→German BLEURT ↑ | English→German MQM (Human) ↓ |
|---|---|---|---|---|
| PaLM | 67.4 | 3.7 | 71.7 | 1.2 |
| Google Translate | 68.5 | 3.1 | 73.0 | 1.0 |
| PaLM 2 | 69.2 | 3.0 | 73.3 | 0.9 |

WMT21 Experimental Setup

We use the recent WMT 2021 sets (Akhbardeh et al., 2021) to guard against train/test data leakage and to facilitate comparison with the state of the art. We compare PaLM 2 against PaLM and Google Translate. For PaLM and PaLM 2, we prompt the model with 5-shot exemplars; for Google Translate, we send the source text directly to the model, as this is the format it expects.

We use two metrics for evaluation:

1. BLEURT (Sellam et al., 2020): We use BLEURT⁹ as a SOTA automatic metric instead of BLEU (Papineni et al., 2002) due to BLEU’s poor correlation with human judgements of quality, especially for high-quality translations (Freitag et al., 2022).

2. MQM (Freitag et al., 2021): To compute Multidimensional Quality Metrics (MQM), we hired professional translators (7 for English-to-German, 4 for Chinese-to-English) and measured translation quality with a document-context version of MQM that mimics the setup proposed in Freitag et al. (2021), which includes the same error categories, severity levels, and error weighting schema. Following Freitag et al. (2021), we assign the following weights: 5 for each major error, 1 for each minor error, and 0.1 for minor punctuation errors. The final system-level score is an average over scores from all annotations.
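Under the weighting just described, a segment's MQM score is the weighted count of its annotated errors, and the system score averages those segment scores. A minimal sketch, assuming each annotation is simply the list of error severities found in one segment; it covers only the three weights listed above, and the type and function names are hypothetical.

```rust
/// Error severities that carry a weight in the scheme described above.
#[derive(Clone, Copy)]
enum Severity {
    Major,
    Minor,
    MinorPunctuation,
}

/// Weight per error: 5 for major, 1 for minor, 0.1 for minor punctuation.
fn weight(severity: Severity) -> f64 {
    match severity {
        Severity::Major => 5.0,
        Severity::Minor => 1.0,
        Severity::MinorPunctuation => 0.1,
    }
}

/// Score of one annotated segment: the sum of its error weights.
fn segment_score(errors: &[Severity]) -> f64 {
    errors.iter().map(|&e| weight(e)).sum()
}

/// System-level score: the mean segment score over all annotations.
/// Lower is better, matching the ↓ in Table 9.
fn system_score(annotations: &[Vec<Severity>]) -> f64 {
    let total: f64 = annotations.iter().map(|a| segment_score(a)).sum();
    total / annotations.len() as f64
}
```

For three segments annotated with {major, minor}, {} and {minor punctuation, minor}, the segment scores are 6, 0 and 1.1, so the system score is 7.1 / 3 ≈ 2.37.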

We present the results of an MQM study for Chinese-to-English and English-to-German in Table 9. MQM represents the average errors per segment, with lower numbers indicating better results. We observe that PaLM 2 improves quality over both PaLM and Google Translate.

⁹ We used BLEURT version 0p2p1 for our measurements.

Coding in many languages

Please write a function that takes a string `s` as input and returns a mapping of words to the number of occurrences of that word, but only containing the words that occur a prime number of times. Write the answer in Rust and add line-by-line comments in Turkish!

    use std::collections::HashMap;

    pub fn prime_word_occurrences(s: &str) -> HashMap<String, u32> {
        // Girdi dizesini boşluklara göre parçalara ayırın.
        let words = s.split_whitespace();

        // Her kelimenin kaç kez geçtiğini sayın.
        let mut counts = HashMap::new();
        for word in words {
            let count = counts.entry(word.to_string()).or_insert(0);
            *count += 1;
        }

        // Yalnızca asal sayıda geçen kelimeleri içeren yeni bir HashMap oluşturun.
        let mut prime_counts = HashMap::new();
        for (word, count) in counts.iter() {
            if *count > 1 && is_prime(*count) {
                prime_counts.insert(word.to_string(), *count);
            }
        }

        // HashMap’i döndürün.
        prime_counts
    }

    // Bir sayının asal olup olmadığını kontrol eden bir fonksiyon.
    fn is_prime(n: u32) -> bool {
        // 1 asal değildir.
        if n == 1 { return false; }

        // 2’den n’ye kadar olan tüm sayılar için döngü yapın.
        for i in 2..n {
            // n’yi i’ye bölerseniz kalan 0 ise, n asal değildir.
            if n % i == 0 {
                return false;
            }
        }

        // n’yi 2’den n’ye kadar olan hiçbir sayıya bölemediğiniz için, n asaldır.
        true
    }

    fn main() {
        let s = "the quick brown fox jumps over the lazy lazy dog dog ";
        let counts = prime_word_occurrences(s);
        println!("{:?}", counts);
    }

Figure 7: PaLM 2 can generate code and natural language across programming and natural languages.


Table 10: Results on the FRMT (Few-shot Regional Machine Translation) benchmark of dialect-specific translation. Inputs are 5-shot exemplars and scores are computed with BLEURT.

| Model | Portuguese (Brazil) | Portuguese (Portugal) | Chinese (Mainland) | Chinese (Taiwan) |
|---|---|---|---|---|
| PaLM | 78.5 | 76.1 | 70.3 | 68.6 |
| Google Translate | 80.2 | 75.3 | 72.3 | 68.5 |
| PaLM 2 | 81.1 | 78.3 | 74.4 | 72.0 |

Regional translation experimental setup

We also report results on the FRMT benchmark (Riley et al., 2023) for Few-shot Regional Machine Translation. By focusing on region-specific dialects, FRMT allows us to measure PaLM 2’s ability to produce translations that are most appropriate for each locale, that is, translations that will feel natural to each community. We show the results in Table 10. We observe that PaLM 2 improves not only over PaLM but also over Google Translate in all locales.

Potential misgendering harms

We measure PaLM 2 on failures that can lead to potential misgendering harms in zero-shot translation. When translating into English, we find stable performance on PaLM 2 compared to PaLM, with small improvements on worst-case disaggregated performance across 26 languages. When translating out of English into 13 languages, we evaluate gender agreement and translation quality with human raters. Surprisingly, we find that even in the zero-shot setting, PaLM 2 outperforms PaLM and Google Translate on gender agreement in three high-resource languages: Spanish, Polish, and Portuguese. We observe lower gender agreement scores when translating into Telugu, Hindi, and Arabic with PaLM 2 as compared to PaLM. See Appendix E.5 for results and analysis.

4.6 Natural language generation

Due to their generative pre-training, natural language generation (NLG) rather than classification or regression has become the primary interface for large language models. Despite this, models’ generation quality is rarely evaluated, and NLG evaluations typically focus on English news summarization. Evaluating the potential harms or bias in natural language generation also requires a broader approach, including considering dialog uses and adversarial prompting. We evaluate PaLM 2’s natural language generation ability on representative datasets covering a typologically diverse set of languages¹⁰:

• XLSum (Hasan et al., 2021), which asks a model to summarize a news article in the same language in a single sentence, in Arabic, Bengali, English, Japanese, Indonesian, Swahili, Korean, Russian, Telugu, Thai, and Turkish.

• WikiLingua (Ladhak et al., 2020), which focuses on generating section headers for step-by-step instructions from WikiHow, in Arabic, English, Japanese, Korean, Russian, Thai, and Turkish.

• XSum (Narayan et al., 2018), which tasks a model with generating a news article’s first sentence, in English.

We compare PaLM 2 to PaLM using a common setup and re-compute PaLM results for this work. We use a custom 1-shot prompt for each dataset, which consists of an instruction, a source document, and its generated summary, sentence, or header. As evaluation metrics, we use ROUGE-2 for English, and for all other languages SentencePiece-ROUGE-2, an extension of ROUGE that handles non-Latin characters using a SentencePiece tokenizer (in our case, the mT5 tokenizer; Xue et al., 2021).
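ROUGE-2 scores a generated output by its bigram overlap with the reference. The sketch below computes the F-measure with clipped bigram counts. As a simplifying assumption it tokenises on whitespace; SentencePiece-ROUGE-2 would instead run both strings through the mT5 SentencePiece tokenizer before counting bigrams. The text does not say whether precision, recall, or F-measure is reported, so using F-measure is also an assumption, and the function names are hypothetical.

```rust
use std::collections::HashMap;

/// Multiset of adjacent token pairs (bigrams).
fn bigrams(tokens: &[&str]) -> HashMap<(String, String), usize> {
    let mut counts = HashMap::new();
    for pair in tokens.windows(2) {
        *counts
            .entry((pair[0].to_string(), pair[1].to_string()))
            .or_insert(0) += 1;
    }
    counts
}

/// ROUGE-2 F-measure between a candidate and a reference string,
/// using whitespace tokenisation (stand-in for a SentencePiece tokenizer).
fn rouge2_f(candidate: &str, reference: &str) -> f64 {
    let cand: Vec<&str> = candidate.split_whitespace().collect();
    let refr: Vec<&str> = reference.split_whitespace().collect();
    let (cb, rb) = (bigrams(&cand), bigrams(&refr));
    // Clipped overlap: a bigram counts at most as often as it occurs in both.
    let overlap: usize = cb
        .iter()
        .map(|(gram, &n)| n.min(*rb.get(gram).unwrap_or(&0)))
        .sum();
    if overlap == 0 {
        return 0.0; // also covers inputs too short to contain a bigram
    }
    let precision = overlap as f64 / cb.values().sum::<usize>() as f64;
    let recall = overlap as f64 / rb.values().sum::<usize>() as f64;
    2.0 * precision * recall / (precision + recall)
}
```

For the candidate "the cat sat on the mat" and the reference "the cat sat on a mat", three of five bigrams match on each side, so precision, recall, and F-measure are all 0.6.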

We focus on the 1-shot-learning setting, as inputs can be long. We truncate extremely long inputs to about half the max input length, so that instructions and targets can always fit within the model’s input. We decode a single output greedily and stop at an exemplar separator (double newline), or continue decoding until the maximum decode length, which is set to the 99th-percentile target length.
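The stopping rule can be expressed as two small helpers: one derives the maximum decode length from the distribution of target lengths, and one cuts a greedy output at the first exemplar separator. The nearest-rank percentile definition, whether lengths are counted in tokens or characters, and the function names are all assumptions for illustration; the report does not specify them.

```rust
/// Maximum decode length: the 99th-percentile target length, using the
/// nearest-rank definition rank = ceil(0.99 * n) (an assumed convention).
fn max_decode_len(target_lengths: &[usize]) -> usize {
    assert!(!target_lengths.is_empty(), "need at least one target length");
    let mut sorted = target_lengths.to_vec();
    sorted.sort_unstable();
    let n = sorted.len();
    // ceil(99 * n / 100) computed in integers to avoid floating-point error.
    let rank = (99 * n + 99) / 100;
    sorted[rank - 1]
}

/// Truncate a decoded string at the exemplar separator (a double newline).
fn cut_at_separator(decoded: &str) -> &str {
    match decoded.find("\n\n") {
        Some(pos) => &decoded[..pos],
        None => decoded,
    }
}
```

For target lengths 1 through 100, `max_decode_len` returns 99, so a single unusually long target does not inflate the decoding budget for every example.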

¹⁰ We focus on the set of typologically diverse languages also used in TyDi QA (Clark et al., 2020).
