没有合适的资源?快使用搜索试试~ 我知道了~

首页Gallager《随机过程理论应用》习题精选解答

"《随机过程:理论与应用》是罗伯特·G·加拉格尔(Robert G. Gallager)所著的一本经典教材,该书源自他在麻省理工学院多年教学的经验和讲义,对于学习随机过程的学生来说是一份宝贵的资源。加拉格尔教授以其在随机过程和信息论领域的深厚造诣而知名,这本书旨在将理论知识与实际应用相结合。 该PDF文件包含了加拉格尔教授精心挑选的练习题的答案,适用于参加课程学习的人群。书中提供的解答有时会引用文本中的定理、推论和引理,但请注意,书中的编号系统与作者网站上章节1-3的草稿略有不同。网页上的定理、推论等单独编号,而在文本中则统一编号,因此读者在查找时需细心核对。 加拉格尔教授特别感谢了Shan-Yuan Ho的帮助,她对许多答案进行了编辑,以及Natasha Blitvic和Mina Karzand等教学助理的贡献,他们为早期的解决方案草案作出了努力。此外,书中的一些习题也得到了Kluwer Academic Press的许可,这些题目曾在加拉格尔1995年的著作《离散随机过程》中出现,其原始解答由Shan-Yuan Ho准备。 这本手册不仅提供了理论学习的补充,也是理解和掌握随机过程概念、公式和方法的重要参考工具。通过解决书中的习题,学生能够检验自己的理解,提升问题解决能力,并能在实际问题中灵活运用随机过程理论。对于希望深入研究随机过程或准备相关课程考试的学生来说,这份解决方案手册无疑是一份不可或缺的学习资料。"

资源详情

资源推荐

16 APPENDIX A. SOLUTIONS TO EXERCISES

where a(n) ⇠ b(n) means that lim

n!1

a(n)/b(n) = 1.

Solution: Note that (A.6) provides an upper bound to

n

k+`

and a slight modification of

the same argument provides the lower bound

n

k+`

n

k

nk`

k+`

`

. Taking the ratio of the

upper to lower bound for fixed ` and ˜p as n ! 1, we see that

✓

n

k + `

◆

⇠

✓

n

k

◆

n k

k

`

so that

p

S

n

(k + `) ⇠ p

S

n

(k)(˜qp/˜pq)

`

(A.9)

follows. Replacing the upper bound in (A.8) with the asymptotic equality in (A.9), and

letting ` grow very slowly with n, we get Pr{S

n

k} ⇠

˜pq

˜pp

p

S

n

(k).

Exercise 1.39: Let {X

i

; i 1} be IID binary rv’s. Let Pr{X

i

= 1} = , Pr{X

i

= 0} = 1 . Let

S

n

= X

1

+ ··· + X

n

. Let m be an arbitrary but fixed positive integer. Think! then evaluate the following

and explain your answers:

a) lim

n!1

i: nmin+m

Pr{S

n

= i}.

Solution: It is easier to reason about the problem if we restate the sum in the following

way:

X

i: nmin+m

Pr{S

n

= i} = Pr{n m S

n

n + m}

= Pr

m S

n

nX m

= Pr

⇢

m

p

n

S

n

nX

p

n

m

p

n

,

where is the standard deviation of X. Now in the limit n ! 1, (S

n

nX)/

p

n

approaches a normalized Gaussian rv in distribution, i.e.,

lim

n!1

Pr

⇢

m

p

n

S

n

nX

p

n

m

p

n

= lim

n!1

⇥

m

p

n

m

p

n

⇤

= 0.

This can also be seen immediately from the binomial distribution as it approaches a discrete

Gaussian distribution. We are looking only at essentially the central 2m terms of the

binomial, and each of those terms goes to 0 as 1/

p

n with increasing n.

b) lim

n!1

i :0in+m

Pr{S

n

= i}.

Solution: Here all terms on lower side of the distribution are included and the upper side

is bounded as in (a). Arguing in the same way as in (a), we see that

X

i:0in+m

Pr{S

n

= i} = Pr

⇢

S

n

nX

p

n

m

p

n

.

In the limit, this is (0) = 1/2.

c) lim

n!1

i :n(1/m)in(+1/m)

Pr{S

n

= i}.

A.1. SOLUTIONS FOR CHAPTER 1 17

Solution: Here the number of terms included in the sum is increasing linearly with n, and

the appropriate mechanism is the WLLN.

X

i:n(1/m)in(+1/m)

Pr

{

S

n

= i

}

= Pr

⇢

1

m

S

n

nX

n

1

m

.

In the limit n ! 1, this is 1 by the WLLN. The essence of this exercise has been to scale

the random variables properly to go the limit. We have used the CLT and the WLLN, but

one could guess the answers immediately by recognizing what part of the distribution is

being looked at.

Exercise 1.44: Let X

1

, X

2

. . . be a sequence of IID rv’s each with mean 0 and variance

2

. Let

S

n

= X

1

+ ··· + X

n

for all n and consider the random variable S

n

/

p

n S

2n

/

p

2n. Find the limiting

CDF for this sequence of rv’s as n ! 1. The point of this exercise is to see clearly that the CDF of

S

n

/

p

n S

2n

/

p

2n is converging in n but that the sequence of rv’s is not converging in any reasonable

sense.

Solution: If we write out the above expression in terms of the X

i

, we get

S

n

p

n

S

2n

p

2n

=

n

X

i=1

X

i

1

p

n

1

p

2n

2n

X

i=n+1

X

i

p

2n

.

The first sum above approaches a Gaussian distribution of variance (1 1/

p

2)

2

and the

second sum approaches a Gaussian distribution of variance 1/2. Since these two terms are

independent, the di↵erence approaches a Gaussian distribution of variance 1 + (1 1/

p

2)

2

.

This means that the distribution of the di↵erence does converge to this Gaussian rv as

n ! 1. As n increases, however, this di↵erence slowly changes, and each time n is doubled,

the new di↵erence is only weakly correlated with the old di↵erence.

Note that S

n

/n

X

behaves in this same way. The CDF converges as n ! 1, but the rv’s

themselves do not approach each other in any reasonable way. The point of the problem

was to emphasize this property.

Exercise 1.47: Consider a discrete rv X with the PMF

p

X

(1) = (1 10

10

)/2,

p

X

(1) = (1 10

10

)/2,

p

X

(10

12

) = 10

10

.

a) Find the mean and variance of X. Assuming that {X

m

; m 1} is an IID sequence with the distribution

of X and that S

n

= X

1

+ ···+ X

n

for each n, find the mean and variance of S

n

. (no explanations needed.)

Solution: X = 100 and

2

X

= 10

14

+ (1 10

10

) 10

4

⇡ 10

14

. Thus S

n

= 100n and

2

S

n

⇡ n ⇥ 10

14

.

b) Let n = 10

6

and describe the event {S

n

10

6

} in words. Find an exact expression for Pr

S

n

10

6

=

F

S

n

(10

6

).

Solution: This is the event that all 10

6

trials result in ±1. That is, there are no occurrences

of 10

12

. Thus Pr

S

n

10

6

= (1 10

10

)

10

6

18 APPENDIX A. SOLUTIONS TO EXERCISES

c) Find a way to use the union bound to get a simple upper bound and approximation of 1 F

S

n

(10

6

).

Solution: From the union bound, the probability of one or more occurrences of the sample

value 10

12

out of 10

6

trials is bounded by a sum of 10

6

terms, each equal to 10

10

, i.e.,

1 F

S

n

(10

6

) 10

4

. This is also a good approximation, since we can write

(1 10

10

)

10

6

= exp

⇣

10

6

ln(1 10

10

)

⌘

⇡ exp(10

6

· 10

10

) ⇡ 1 10

4

.

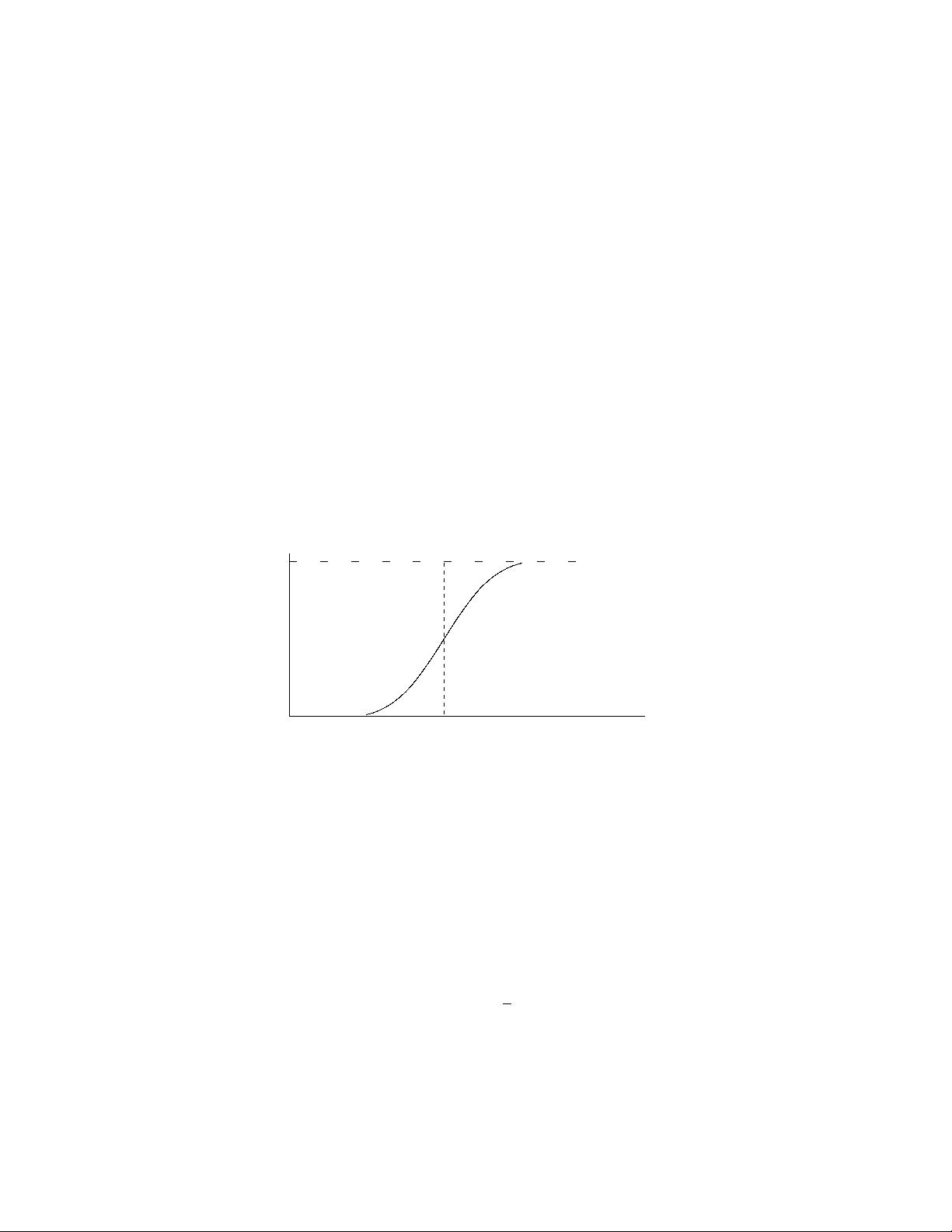

d) Sketch the CDF of S

n

for n = 10

6

. You can choose the horizontal axis for your sketch to go from 1

to +1 or from 3 ⇥ 10

3

to 3 ⇥ 10

3

or from 10

6

to 10

6

or from 0 to 10

12

, whichever you think will best

describe this CDF.

Solution: Conditional on no occurrences of 10

12

, S

n

simply has a binomial distribution.

We know from the central limit theorem for the binomial case that S

n

will be approxi-

mately Gaussian with mean 0 and standard deviation 10

3

. Since one or more occurrences

of 10

12

occur only with probability 10

4

, this can be neglected in the sketch, so the CDF is

approximately Gaussian with 3 sigma points at ±3 ⇥ 10

3

. There is a little blip, in the 4th

decimal place out at 10

12

which doesn’t show up well in the sketch, but of course could be

important for some purp oses such as calculating

2

.

10

3

3 ⇥ 10

3

1

0

0

F

S

n

e) Now let n = 10

10

. Give an exact expression for Pr

S

n

10

10

and show that this can be approximated

by e

1

. Sketch the CDF of S

n

for n = 10

10

, using a horizontal axis going from slightly below 0 to slightly

more than 2 ⇥ 10

12

. Hint: First view S

n

as conditioned on an appropriate rv.

Solution: First consider the PMF p

B

(j) of the numb er B = j of occurrences of the value

10

12

. We have

p

B

(j) =

✓

10

10

j

◆

p

j

(1 p)

10

10

j

where p = 10

10

p

B

(0) = (1 p)

10

10

= exp{10

10

ln[1 p]} ⇡ exp(10

10

p) = e

1

p

B

(1) = 10

10

p(1 p)

10

10

1

= (1 p)

10

10

1

⇡ e

1

p

B

(2) =

✓

10

10

2

◆

p

2

(1 p)

10

10

2

⇡

1

2

e

1

.

Conditional on B = j, S

n

will be approximately Gaussian with mean 10

12

j and standard

deviation 10

5

. Thus F

S

n

(s) rises from 0 to e

1

over a range from about 3⇥10

5

to +3⇥10

5

.

It then stays virtually constant up to about 10

12

3 ⇥10

5

. It rises to 2/e by 10

12

+ 3 ⇥10

5

.

A.1. SOLUTIONS FOR CHAPTER 1 19

It stays virtually constant up to 2 ⇥10

12

3 ⇥10

5

and rises to 2.5/e by 2 ⇥10

12

+ 3 ⇥10

5

.

When we sketch this, it looks like a staircase function, rising from 0 to 1/e at 0, from 1/e

to 2/e at 10

12

and from 2/e to 2.5/e at 2 ⇥ 10

12

. There are smaller steps at larger values,

but they would not show up on the sketch.

d) Can you make a qualitative statement about how the distribution function of a rv X a↵ects the required

size of n before the WLLN and the CLT provide much of an indication about S

n

.

Solution: It can be seen that for this peculiar rv, S

n

/n is not concentrated around its

mean even for n = 10

10

and S

n

/

p

n does not look Gaussian even for n = 10

10

. For this

particular distribution, n has to be so large that B, the number of occurrences of 10

12

, is

large, and this requires n >> 10

10

. This illustrates a common weakness of limit theorems.

They say what happens as a parameter (n in this case) becomes sufficiently large, but it

takes extra work to see how large that is.

Exercise 1.48: Let {Y

n

; n 1} be a sequence of rv’s and assume that lim

n!1

E [|Y

n

|] = 0. Show that

{Y

n

; n 1} converges to 0 in probability. Hint 1: Look for the easy way. Hint 2: The easy way uses the

Markov inequality.

Solution: Applying the Markov inequality to |Y

n

| for arbitrary n and arbitrary ✏ > 0, we

have

Pr{|Y

n

| ✏}

E

[

|Y

n

|

]

✏

.

Thus going to the limit n ! 1 for the given ✏,

lim

n!1

Pr

{

|Y

n

| ✏

}

= 0.

Since this is true for every ✏ > 0, this satisfies the definition for convergence to 0 in proba-

bility.

20 APPENDIX A. SOLUTIONS TO EXERCISES

A.2 Solutions for Chapter 2

Exercise 2.1: a) Find the Erlang density f

S

n

(t) by convolving f

X

(x) = exp(x), x 0 with itself n

times.

Solution: For n = 2, we convolve f

X

(x) with itself.

f

S

2

(t) =

Z

t

0

f

X

1

(x)f

X

2

(t x) dx =

Z

t

0

e

x

e

(tx)

dx =

2

te

t

.

For larger n, convolving f

X

(x) with itself n times is found by taking the convolution n1

times, i.e., f

S

n1

(t), and convolving this with f

X

(x). Starting with n = 3,

f

S

3

(t) =

Z

t

0

f

S

2

(x)f

X

3

(t x) dx =

Z

t

0

2

xe

x

e

(tx)

dx =

3

t

2

2

e

t

f

S

4

(t) =

Z

t

0

3

x

2

2

e

x

· e

(tx)

dx =

4

t

3

3!

e

t

.

We now see the pattern; each additional integration increases the power of and t by 1

and multiplies the denominator by n 1. Thus we hypothesize that f

S

n

(t) =

n

t

n1

n!

e

t

.

If one merely wants to verify the well-known Erlang density, one can simply use induction

from the beginning, but it is more satisfying, and not that much more difficult, to actually

derive the Erlang density, as done above.

b) Find the moment generating function of X (or find the Laplace transform of f

X

(x)), and use this to find

the moment generating function (or Laplace transform) of S

n

= X

1

+ X

2

+ ··· + X

n

.

Solution: The formula for the MGF is almost trivial here,

g

X

(r) =

Z

1

0

e

x

e

rx

dx =

r

for r < .

Since S

n

is the sum of n IID rv’s,

g

S

n

(r) =

⇥

g

X

(r)

⇤

n

=

✓

r

◆

n

.

c) Find the Erlang density by starting with (2.15) and then calculating the marginal density for S

n

.

Solution: To find the marginal density, f

S

n

(s

n

), we start with the joint density in (2.15)

and integrate over the region of space where s

1

s

2

··· s

n

. It is a peculiar integral,

since the integrand is constant and we are just finding the volume of the n 1 dimensional

space in s

1

, . . . , s

n1

with the inequality constraints above. For n = 2 and n = 3, we have

f

S

2

(s

2

) =

2

e

s

2

Z

s

2

o

ds

1

=

⇣

2

e

s

2

⌘

s

2

f

S

3

(s

3

) =

3

e

s

3

Z

s

3

0

Z

s

2

0

ds

1

ds

2

=

3

e

s

3

Z

s

3

0

s

2

ds

2

=

⇣

3

e

s

3

⌘

s

2

3

2

.

剩余137页未读,继续阅读

weixin_43417896

- 粉丝: 0

- 资源: 1

上传资源 快速赚钱

我的内容管理

展开

我的内容管理

展开

我的资源

快来上传第一个资源

我的资源

快来上传第一个资源

我的收益 登录查看自己的收益

我的收益 登录查看自己的收益 我的积分

登录查看自己的积分

我的积分

登录查看自己的积分

我的C币

登录后查看C币余额

我的C币

登录后查看C币余额

我的收藏

我的收藏  我的下载

我的下载  下载帮助

下载帮助

最新资源

- 前端面试必问:真实项目经验大揭秘

- 永磁同步电机二阶自抗扰神经网络控制技术与实践

- 基于HAL库的LoRa通讯与SHT30温湿度测量项目

- avaWeb-mast推荐系统开发实战指南

- 慧鱼SolidWorks零件模型库:设计与创新的强大工具

- MATLAB实现稀疏傅里叶变换(SFFT)代码及测试

- ChatGPT联网模式亮相,体验智能压缩技术.zip

- 掌握进程保护的HOOK API技术

- 基于.Net的日用品网站开发:设计、实现与分析

- MyBatis-Spring 1.3.2版本下载指南

- 开源全能媒体播放器:小戴媒体播放器2 5.1-3

- 华为eNSP参考文档:DHCP与VRP操作指南

- SpringMyBatis实现疫苗接种预约系统

- VHDL实现倒车雷达系统源码免费提供

- 掌握软件测评师考试要点:历年真题解析

- 轻松下载微信视频号内容的新工具介绍

资源上传下载、课程学习等过程中有任何疑问或建议,欢迎提出宝贵意见哦~我们会及时处理!

点击此处反馈

安全验证

文档复制为VIP权益,开通VIP直接复制

信息提交成功

信息提交成功